Featherless.ai announced a $20 million Series A on May 1, 2026, co-led by AMD Ventures and Airbus Ventures. The Singapore-founded startup runs a serverless platform for 30,000+ open-source AI models, positioning its production-grade infrastructure as an alternative to OpenAI’s and Anthropic’s proprietary APIs. The investor roster, which also includes BMW i Ventures, tells the real story: AMD wants an alternative to NVIDIA’s AI dominance, Airbus is betting that specialized models will beat general-purpose LLMs, and BMW needs cost-effective automotive AI. These corporations are backing open-source because they see the strategic risk of depending on a handful of closed providers.

Strategic Bets Against the Closed Ecosystem

This isn’t venture capital diversification. AMD Ventures, Airbus Ventures, and BMW i Ventures are making strategic counter-moves against the dominance of OpenAI, Anthropic, and NVIDIA. AMD’s partnership ensures popular open-source models run natively on its ROCm software stack, a direct challenge to NVIDIA’s CUDA lock-in. Airbus Ventures is betting that “millions of specialized, fine-tuned models” will replace the few general-purpose systems everyone is racing to scale. That’s a bet against the scale-everything strategy behind flagship models like GPT-5 and Claude Opus.

Featherless co-founder Eugene Cheah, a core contributor to the RWKV model architecture, framed it plainly: “Open-source is the only real check on that, and it only works if the infrastructure to run it actually exists.” The funding builds that infrastructure: global serverless deployment, AMD ROCm optimization, and a marketplace for specialized models launching soon.

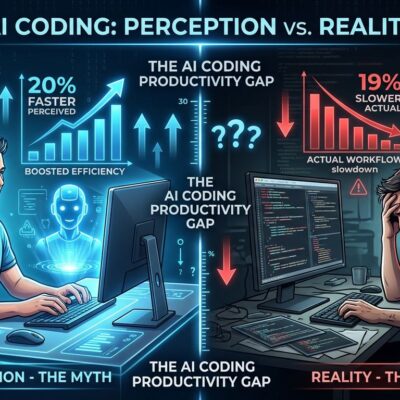

The Economics Aren’t Close—They’re Overwhelming

The gap between closed and open AI models isn’t just narrowing; on cost, it has inverted. Closed models cost about six times more than open alternatives on average, according to MIT Sloan research, which estimates that reallocating demand from closed to open models could cut spending on AI models by roughly 70%, or about $25 billion annually. In one case, a fintech company cut its monthly AI costs from $47,000 to $8,000 (an 83% reduction) by switching to open models served through platforms like Featherless.
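The two headline numbers are consistent with each other. As a quick sanity check, assuming identical workloads and prices that scale linearly with usage, a closed model that costs 6x more implies roughly 83% savings from switching, in line with the fintech example. The helper names here are illustrative, not from any library, and the 70% aggregate estimate is a separate modeling result that this sketch does not reproduce.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


def savings_from_cost_ratio(ratio: float) -> float:
    """If the closed option costs `ratio` times more, switching saves this percentage.

    Assumes the same workload on both sides and linear pricing.
    """
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return (1 - 1 / ratio) * 100


# Fintech example from the text: $47,000 -> $8,000 per month
print(f"{percent_reduction(47_000, 8_000):.1f}%")   # 83.0%
# MIT Sloan average: closed models cost about 6x more
print(f"{savings_from_cost_ratio(6):.1f}%")          # 83.3%
```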

Performance? Llama 3, Mistral, Qwen, and DeepSeek now match GPT-4-class closed models on most benchmarks. DeepSeek trained its V3 model, competitive with GPT-4o, for a reported $5.6 million or so in GPU time for the final training run; OpenAI reportedly spends hundreds of millions per training run. Open-source models deliver roughly 90% of frontier closed-model performance at release and typically catch up within months. Context windows now reach 128K tokens across major model families. Inference tooling has matured too: Ollama and llama.cpp make local deployment straightforward, and serverless platforms like Featherless run production workloads without DevOps overhead.

AMD’s Cost Advantage Is Real

AMD hardware running ROCm delivers 25-40% cost savings versus NVIDIA equivalents, while the performance gap has shrunk to 10-30% depending on the workload and is still closing. At the high end, AMD’s MI355X now scores within single-digit percentage points of NVIDIA’s B200 on inference benchmarks. For memory-heavy workloads, such as serving very large models and long-context generation, ROCm is genuinely competitive.

Featherless’s native AMD ROCm support gives developers a credible NVIDIA alternative. If you’re running inference at scale, a 25-40% cost difference adds up fast, provided the performance gap on your workload doesn’t eat it. AMD is no longer just the budget option. It’s a strategic hedge.
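Whether a cheaper accelerator actually saves money depends on cost per unit of work, not cost per hour. A minimal sketch, assuming the 25-40% figure is a per-hour price discount and the 10-30% figure is a throughput gap (the numbers quoted here don’t specify which): relative cost per token is (1 - discount) / (1 - gap). The function name is hypothetical.

```python
def relative_cost_per_unit_work(price_discount: float, perf_gap: float) -> float:
    """Cost per unit of work on the cheaper hardware, relative to a baseline of 1.0.

    price_discount: fraction cheaper per hour (0.25 means 25% cheaper)
    perf_gap: fraction lower throughput (0.10 means 10% slower)
    """
    if not (0 <= price_discount < 1 and 0 <= perf_gap < 1):
        raise ValueError("fractions must be in [0, 1)")
    # Paying (1 - discount) per hour but getting only (1 - gap) of the work done
    return (1 - price_discount) / (1 - perf_gap)


# Corners of the ranges quoted above
for discount, gap in [(0.40, 0.10), (0.25, 0.10), (0.40, 0.30), (0.25, 0.30)]:
    rel = relative_cost_per_unit_work(discount, gap)
    print(f"{discount:.0%} cheaper, {gap:.0%} slower -> {rel:.2f}x cost per unit of work")
```

At the favorable corner (40% cheaper, 10% slower) cost per unit of work drops to about 0.67x; at the unfavorable corner (25% cheaper, 30% slower) it rises to about 1.07x. That spread is why benchmarking your own workload matters before assuming the savings.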

Specialized Models vs. General LLMs: The Airbus Bet

Airbus Ventures isn’t investing in a “better ChatGPT.” Its thesis is that the next phase of AI adoption won’t be GPT-5 getting bigger and more general. It will be millions of domain-specific models (aerospace diagnostics, fraud detection, autonomous vehicle planning) trained on specialized datasets and fine-tuned for narrow tasks.

Why specialized models win: better performance on specific tasks, lower inference costs (they are usually smaller), faster responses, and the option to keep data on your own infrastructure. Featherless is launching a marketplace where developers can discover and deploy these specialized models. The platform already supports 30,000+ models, and that number is likely to grow as enterprises realize they don’t need GPT-5 for every task.
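The “smaller models, lower inference costs” point follows from a standard rule of thumb: weight memory is roughly parameter count times bytes per parameter. This sketch counts weights only (it ignores KV cache, activations, and runtime overhead), and the 7B and 70B sizes are illustrative rather than specific models from this article.

```python
# Approximate bytes per parameter for common weight formats.
# Weights only: KV cache, activations, and runtime overhead are ignored.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, fmt: str = "fp16") -> float:
    """Approximate decimal GB needed to hold model weights.

    1e9 params at N bytes each is N GB, so billions of params map directly to GB.
    """
    if fmt not in BYTES_PER_PARAM:
        raise ValueError(f"unknown format: {fmt}")
    return params_billions * BYTES_PER_PARAM[fmt]


for size in (7, 70):
    for fmt in ("fp16", "int4"):
        print(f"{size}B @ {fmt}: ~{weight_memory_gb(size, fmt):g} GB")
```

At fp16, a 7B model needs roughly 14 GB for its weights versus about 140 GB for a 70B model, a 10x difference in the memory you pay to keep resident.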

What Developers Get Today

Featherless offers 30,000+ open models (language, vision, audio, multimodal) through a serverless platform with flat-rate pricing. No per-token billing. No surprise invoices. Models load in under five seconds. The API is OpenAI-compatible, so migrating from proprietary services can take hours rather than weeks. Multi-region infrastructure (EU and US) helps meet data sovereignty requirements.
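In practice, “OpenAI-compatible” means the same request shape works against a different base URL. This sketch builds, without sending, a standard `/chat/completions` request using only Python’s standard library. The Featherless base URL is an assumption to verify against its documentation, and the model ID `example-org/example-model` is a hypothetical placeholder.

```python
import json
import urllib.request

# Base URLs per provider. The Featherless entry is an assumption; confirm it in the docs.
BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "featherless": "https://api.featherless.ai/v1",
}


def build_chat_request(provider: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    url = f"{BASE_URLS[provider]}/chat/completions"
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical key and model ID, for illustration only
req = build_chat_request("featherless", "YOUR_API_KEY", "example-org/example-model", "Hello!")
print(req.full_url)
```

Switching providers then amounts to changing `provider`, the API key, and the model ID; the request body stays the same. Passing the request to `urllib.request.urlopen` would send it.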

If you’re paying OpenAI or Anthropic per token today, Featherless is a credible exit strategy, with cost savings of up to 90% and minimal quality loss on many workloads. Developers report “removing the fear of the token meter” and gaining predictable monthly costs. The platform is already Hugging Face’s fastest-growing inference provider.
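Flat-rate pricing wins once monthly volume passes a break-even point: flat fee divided by the per-token price. A minimal sketch with hypothetical numbers (a $100 monthly fee and $5 per million tokens, not real prices from any provider); real flat-rate plans may also carry limits, such as on concurrency or model size, that this ignores.

```python
def breakeven_tokens_per_month(flat_fee: float, price_per_million: float) -> float:
    """Monthly token volume at which per-token billing costs the same as the flat fee."""
    if price_per_million <= 0:
        raise ValueError("price_per_million must be positive")
    return flat_fee / price_per_million * 1_000_000


def cheaper_option(tokens: float, flat_fee: float, price_per_million: float) -> str:
    """Return which pricing model costs less for a monthly token volume (ties go to per-token)."""
    per_token_bill = tokens / 1_000_000 * price_per_million
    return "flat" if flat_fee < per_token_bill else "per-token"


# Hypothetical prices for illustration only
FLAT_FEE = 100.0          # dollars per month
PRICE_PER_MILLION = 5.0   # dollars per million tokens

print(f"{breakeven_tokens_per_month(FLAT_FEE, PRICE_PER_MILLION):,.0f} tokens")  # 20,000,000 tokens
print(cheaper_option(50_000_000, FLAT_FEE, PRICE_PER_MILLION))                   # flat
```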

What Comes Next

The $20 million funds four priorities: expanding the model library, shipping an open-source agent runtime, deepening AMD hardware integration, and scaling enterprise deployments with private environments. The vision is “AI independence”—developers and companies controlling their AI stack instead of renting compute from Big Tech.

Corporate VCs don’t back open-source infrastructure on ideological grounds. They back it because closed ecosystems create strategic vulnerabilities. AMD needs an ecosystem where it can compete with NVIDIA. Airbus needs specialized models for aerospace applications. BMW needs cost-effective, auditable AI for vehicles. Featherless is building the infrastructure that makes open-source AI production-ready at scale.

2026 isn’t the year open-source AI “catches up” to proprietary models. It’s the year it overtakes them on cost, flexibility, and strategic independence. The economics are overwhelming, the performance gap is small and shrinking, and the infrastructure now exists. Developers finally have leverage.