Flock Safety employees accessed live camera feeds from a children’s gymnastics room at the Marcus Jewish Community Center in Dunwoody, Georgia, to demonstrate their surveillance product to other police departments. When resident Jason Hunyar uncovered this through a public records request, the city learned Flock had been using kids’ gymnastics practice, community pool sessions, and school playgrounds as live product demos – turning real people into unwitting sales pitches. The city’s response? It renewed the contract anyway. This is surveillance capitalism at its most predatory, and the decision shows how quickly abuse becomes business as usual.

SaaS 101: Use Staging, Not Kids

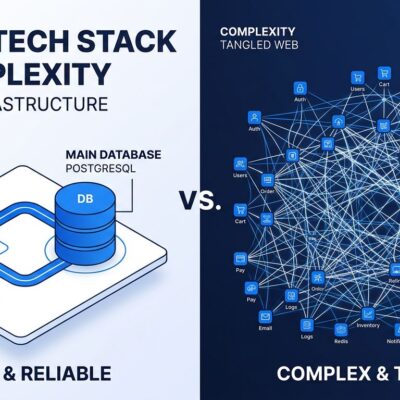

Here’s what every competent SaaS company does for sales demos: build a staging environment with synthetic data. Test users named “test@example.com” or “1111111.” Canned footage or dummy feeds. Keep production data and sales demonstrations completely separated. It’s not complicated – it’s standard practice for a reason.
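To make that separation concrete, here is a minimal sketch of a guard that lets demo sessions see only synthetic staging feeds. Every name in it (`Environment`, `CameraFeed`, `feeds_for_demo`, `open_demo_feed`, the `demo://` URL scheme) is hypothetical and chosen for illustration; nothing here reflects Flock’s actual architecture.

```python
from dataclasses import dataclass
from enum import Enum


class Environment(Enum):
    PRODUCTION = "production"
    STAGING = "staging"


@dataclass(frozen=True)
class CameraFeed:
    feed_id: str
    environment: Environment
    synthetic: bool  # True for canned or generated footage (hypothetical flag)


class DemoPolicyError(Exception):
    """Raised when a demo session requests a feed it must not see."""


def is_demo_safe(feed):
    """A feed is demo-safe only if it is synthetic AND lives in staging."""
    return feed.environment is Environment.STAGING and feed.synthetic


def feeds_for_demo(feeds):
    """Return only the feeds a sales demo is allowed to list."""
    return [feed for feed in feeds if is_demo_safe(feed)]


def open_demo_feed(feed):
    """Open a feed for a demo, refusing anything that is not demo-safe."""
    if not is_demo_safe(feed):
        raise DemoPolicyError(f"feed {feed.feed_id} is not available to demo sessions")
    return f"demo://{feed.feed_id}"  # hypothetical URL scheme
```

The point of the design is that the check lives in the code path, not in a policy memo: a salesperson cannot pull up a customer camera by accident or by choice, because the demo tooling has no way to open one.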

Flock skipped all of this. Instead of setting up proper demo infrastructure, they accessed live customer cameras. Real children in gymnastics class became the demo. Real people at community pools became the sales pitch. As Hacker News commenters put it: “Why is there not a dedicated demo environment for demos, like practically every other software?” Another nailed the core issue: “Flock got lazy and used real tenants instead of setting up proper demo infrastructure.”

This isn’t just lazy engineering – it’s technical incompetence that enables ethical violations. When you skip SaaS best practices, you create conditions for surveillance abuse.

A Pattern, Not an Accident

This isn’t Flock’s first ethical failure. Throughout 2025, the Electronic Frontier Foundation documented a pattern of surveillance abuses: tracking protesters exercising First Amendment rights, more than 80 law enforcement agencies using discriminatory searches with racial slurs to target Romani people, and a Texas Sheriff’s Office allegedly using Flock to track a woman suspected of having an abortion. A data breach exposed 12 million searches logged by 3,900 agencies. Since February 2025, 23 jurisdictions have cancelled or rejected Flock contracts after organizing campaigns centered on EFF research.

The Dunwoody gym demo isn’t “poor judgement,” as CEO Garrett Langley called it when he apologized to the Jewish community center. It’s how the company operates. The architecture enables abuse. The business model incentivizes it. And when caught, they promise to do better while cities renew contracts anyway.

“We Had Permission” Isn’t Good Enough

Flock claims the city “authorized select employees” for product demos. The city confirms it approved a “demo partner program.” Neither bothered to tell residents their surveillance footage would be used for commercial sales pitches. Parents whose children were being watched had no knowledge. The Marcus Jewish Community Center – a private facility – may not have consented at all.

This is the surveillance industry’s favorite excuse: “We had permission.” But permission from whom? The city can’t ethically authorize access to private facilities or children’s spaces for commercial purposes. Parents whose kids were surveilled never consented. COPPA requires verifiable parental consent before online services collect personal data from children under 13; whether or not that statute reaches camera feeds, Flock ignored the principle behind it. Technical authorization doesn’t create ethical permission to turn humans into unpaid product demos.

As developers, we need to ask harder questions: Who owns surveillance footage? Who has the right to consent to its use? Does municipal authorization extend to commercial exploitation? “We had permission” is where every surveillance abuse starts. It’s not where the ethical inquiry should end.

Normalization Is the Real Danger

Dunwoody discovered the abuse and renewed the contract anyway. That matters more than Flock’s apology. The message: public trust is negotiable, surveillance contracts aren’t.

This is how surveillance becomes infrastructure. The first time, it’s a violation; the second time, it’s standard practice. When cities renew contracts after discovering abuse, they’re teaching surveillance companies there are no consequences.

What Developers Need to Own

If you’re building surveillance systems, ask: Do we have proper staging environments? Who has access to production data? Can our system be abused? Are we building privacy by design, or adding it after getting caught?

The Flock case shows what happens when technical incompetence meets ethical failure. No demo environment leads to production data abuse. Weak access controls enable commercial exploitation. “We had permission” becomes the excuse for turning children’s gymnastics into a sales tool.
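Stronger access controls don’t have to be elaborate. As a rough sketch, production access can be tied to a declared purpose and written to an audit log whether or not it is granted. The purpose names, the `customer_approved` flag, and the function names below are assumptions made up for this example, not a description of any real system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical allow-list. "sales_demo" is deliberately absent:
# demos belong in staging, so no production purpose covers them.
ALLOWED_PURPOSES = {"customer_support", "incident_response"}


@dataclass
class AccessLog:
    entries: list = field(default_factory=list)

    def record(self, employee, feed_id, purpose, granted):
        """Append one audit entry for every request, granted or denied."""
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "employee": employee,
            "feed_id": feed_id,
            "purpose": purpose,
            "granted": granted,
        })


def request_production_access(employee, feed_id, purpose, customer_approved, log):
    """Grant access only for an allowed purpose with customer approval."""
    granted = purpose in ALLOWED_PURPOSES and customer_approved
    log.record(employee, feed_id, purpose, granted)
    return granted
```

Logging denials as well as grants matters: a public records request like the one in Dunwoody should be able to show who tried to look at which camera and why, not only who succeeded.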

If you build the tools, you’re responsible for the harms. If your demos use production surveillance footage, you’re doing it wrong. The code you write has consequences. Own them.