The UK government intervened this week after health data from 500,000 UK Biobank volunteers appeared for sale on Alibaba’s Chinese e-commerce platform. The breach, discovered April 20 and disclosed by the UK government on April 23, exposed a dual data security failure: datasets issued to three Chinese research institutions were listed for commercial sale, while 170 researchers across 14 countries accidentally pushed sensitive health records to GitHub, prompting 110 takedown notices since July 2025. For the GitHub leaks, the culprit wasn’t malicious hackers; it was the open science movement’s mandate to share code publicly.

This isn’t just a health privacy disaster. It’s a wake-up call for every developer who pushes code to GitHub. Researchers leaked patient data while trying to comply with journal requirements for reproducible research, exposing a fundamental conflict between transparency and security that affects anyone handling sensitive information.

The Dual Breach: GitHub Leaks Meet Commercial Exploitation

UK Biobank filed 110 DMCA takedown notices to GitHub between July 2025 and April 17, 2026, targeting 197 repositories that exposed participant data. The leaks came from 170 researchers spanning 14 countries—24 in the United States, 21 in China, and others worldwide. One dataset alone contained hospital admission dates and diagnoses for 413,000 people, along with birth dates and sex.

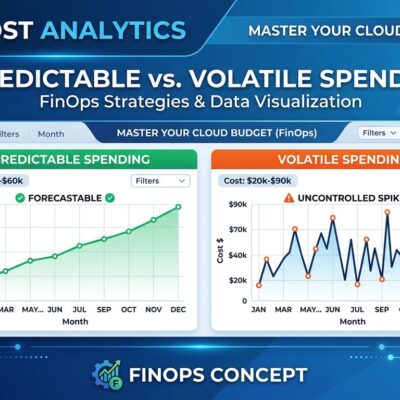

Meanwhile, datasets held by three Chinese research institutions with legitimate access to UK Biobank data somehow ended up listed for sale on Alibaba. At least one listing offered the complete 500,000-participant dataset. The UK government worked with Chinese authorities to remove the listings before any confirmed sales occurred, then revoked access for all three institutions.

The scale proves this is systemic, not isolated. When 170 researchers across multiple countries make the same mistake over nine months, the problem isn’t individual incompetence—it’s broken infrastructure.

How Open Science Requirements Created This Mess

Here’s the impossible position researchers face: journals and funding bodies increasingly require code sharing on platforms like GitHub for reproducibility. You can’t publish without making your analysis public. However, UK Biobank strictly prohibits researchers from sharing participant data outside their systems. The problem? Code and data are often inseparable.

Consider the typical workflow. Jupyter notebooks store cell outputs, including printed data, alongside code. Analysis scripts reference local data files. Test cases include sample rows. Researchers trained to share their work for peer review uploaded repositories that inadvertently contained genetic data files, health phenotypes, and hospital records. They weren’t ignoring security; they were caught between conflicting mandates.
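One narrow piece of this workflow can be guarded mechanically: outputs saved inside notebooks. The sketch below is a minimal illustration, not a tool named in the reporting. It assumes the notebook is already loaded as nbformat 4 JSON, and the function name `strip_notebook_outputs` is hypothetical.

```python
import json


def strip_notebook_outputs(notebook: dict) -> dict:
    """Return a copy of an nbformat 4 notebook with code-cell outputs removed.

    Assumption: `notebook` is the parsed JSON of an .ipynb file. Data typed
    directly into a cell's source is NOT removed; only saved outputs are.
    """
    # Deep copy via a JSON round trip so the caller's object is left untouched.
    cleaned = json.loads(json.dumps(notebook))
    for cell in cleaned.get("cells", []):
        if cell.get("cell_type") == "code":
            cell["outputs"] = []            # drop printed tables, plots, tracebacks
            cell["execution_count"] = None  # drop the run counter as well
    return cleaned
```

To apply it, read the `.ipynb` file with `json.load`, pass the result through the function, and write it back with `json.dump` before committing. This only covers saved outputs; data files and hard-coded sample rows still need separate handling.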

GitHub compounds the problem. Despite its ubiquity, it’s not a proper research repository: repositories can be deleted at any time, GitHub offers no perpetual access guarantees, and its owner, Microsoft, has made no commitment to maintain research data indefinitely. Journals nevertheless treat it as the de facto standard because that’s where developers already work.

Every developer who works with sensitive data faces this risk. Customer information in debugging logs, API keys in example code, database dumps in test fixtures: the same tools that make collaboration easy make accidental exposure trivial. UK Biobank’s breach just happened at massive scale with life-altering data.
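A cheap first line of defence is to check what is about to be committed. The following is a rough sketch under stated assumptions: the suffix list, the size limit, and the function name `flag_risky_files` are all illustrative choices, not a standard. A filename check like this cannot see sensitive values pasted inside source files.

```python
from pathlib import PurePosixPath

# Assumed, illustrative list of extensions that often hold raw data or logs.
DATA_SUFFIXES = {".csv", ".tsv", ".parquet", ".sqlite", ".db", ".dump", ".log"}
# Assumed size limit; source files are rarely this large, data exports often are.
MAX_BYTES = 1_000_000


def flag_risky_files(entries, max_bytes=MAX_BYTES):
    """Return (name, reason) pairs for staged files that deserve a manual look.

    `entries` is an iterable of (relative_path, size_in_bytes) pairs.
    """
    flagged = []
    for name, size in entries:
        suffix = PurePosixPath(name).suffix.lower()
        if suffix in DATA_SUFFIXES:
            flagged.append((name, "data-like extension"))
        elif size > max_bytes:
            flagged.append((name, "larger than size limit"))
    return flagged
```

In a pre-commit hook, the entries could be built from the paths printed by `git diff --cached --name-only` plus each file’s size on disk, with the commit rejected whenever the returned list is non-empty.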

“Anonymous” Data That Isn’t Actually Anonymous

UK Biobank and the government initially claimed the exposed data was “anonymized”—no names, addresses, or NHS numbers included. That’s technically true and practically meaningless. In fact, journalists investigating the GitHub leaks successfully re-identified a volunteer using only an approximate birth date and the date of a single major surgery.

The leaked datasets contained enough to make re-identification straightforward: month and year of birth, hospital admission dates, diagnosis codes, and genetic markers. UK Biobank CEO Sir Rory Collins admitted the organization “cannot be wholly certain that it couldn’t be used to identify individuals if it ended up in the wrong hands.” That’s not anonymization. That’s anonymization theater.

For developers handling personal data, the lesson is stark: removing names doesn’t create anonymity. Combinations of seemingly innocuous fields, such as birth dates, location data, and transaction histories, can single out one person even when no individual field does. This has legal implications under GDPR and HIPAA, and trust implications when breaches inevitably happen.
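The effect of combining fields can be measured before a dataset leaves a controlled environment. The sketch below counts how many records share each combination of quasi-identifiers, which is the idea behind k-anonymity. The function names and field names are hypothetical, and any records passed in here would be toy data rather than anything from UK Biobank.

```python
from collections import Counter


def _key(record, quasi_identifiers):
    """Build the tuple of quasi-identifier values for one record."""
    return tuple(record[field] for field in quasi_identifiers)


def smallest_group_size(records, quasi_identifiers):
    """Return k: the size of the smallest group sharing the same field values.

    k == 1 means at least one record is unique on these fields alone.
    Returns 0 for an empty dataset.
    """
    groups = Counter(_key(r, quasi_identifiers) for r in records)
    return min(groups.values(), default=0)


def unique_records(records, quasi_identifiers):
    """Return the records that no other record matches on these fields."""
    groups = Counter(_key(r, quasi_identifiers) for r in records)
    return [r for r in records if groups[_key(r, quasi_identifiers)] == 1]
```

Running `smallest_group_size` first on birth month and sex, then again with an admission date added, shows how quickly k collapses toward 1 as fields accumulate. That collapse is what made the re-identification described above possible without any names.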

Government Response and What Comes Next

The UK government called the breach “an unacceptable abuse” and took three immediate actions: pressuring Alibaba to remove listings, revoking access to the three Chinese institutions, and pausing all new UK Biobank data access indefinitely. No researcher can download data until UK Biobank implements a “technical solution” to prevent bulk downloads.

Parliament’s Science and Technology Committee was less diplomatic. “It’s deeply concerning that sensitive data held by UK Biobank had not been subject to proper controls,” the committee chair stated. “In February 2026, the government assured us standards would improve… today’s statement demonstrates how little progress has been made.”

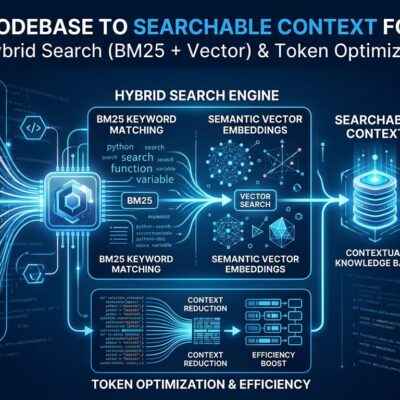

The pause affects thousands of researchers worldwide and delays medical research dependent on this data. The likely technical solution? Query-based access instead of raw downloads, secure enclaves where code can leave but data cannot, and stricter monitoring of who accesses what. UK Biobank will also issue new guidance on research data control, though given that researchers already received training that didn’t prevent the leaks behind 110 takedown notices, better tools matter more than better rules.
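Query-based access can be pictured as an interface that returns aggregates and withholds small groups. The sketch below is purely illustrative and is not UK Biobank’s actual or planned design; the function name `count_by` and the threshold of 10 are assumptions chosen for the example.

```python
from collections import Counter

# Assumed disclosure threshold: groups smaller than this are suppressed.
MIN_CELL_COUNT = 10


def count_by(records, field, min_cell_count=MIN_CELL_COUNT):
    """Return counts per value of `field`, hiding counts below the threshold.

    Suppressed groups map to None so the caller sees that a group exists
    without learning how few people are in it. Row-level data is never returned.
    """
    counts = Counter(record[field] for record in records)
    return {
        value: (n if n >= min_cell_count else None)
        for value, n in counts.items()
    }
```

The design choice is that researchers submit questions and receive summaries, so there is no raw file to commit by accident. Suppression alone does not stop someone from combining many overlapping queries, which is why monitoring of who accesses what appears alongside it in the list above.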

The implications extend beyond biomedical research. Academic institutions face mounting regulatory pressure to tighten data security. The open science movement must work out how to maintain transparency without compromising privacy, a challenge with no easy technical answer. GitHub may also face pressure to implement research data scanning, though detecting health records in natural language and code snippets is far harder than catching hardcoded API keys, which follow recognizable patterns.

Key Takeaways

- 500,000 UK Biobank volunteers had their health data exposed through a dual breach: accidental GitHub leaks and deliberate listings for sale on Alibaba

- 170 researchers across 14 countries leaked data while complying with journal code-sharing requirements—a systemic failure, not individual negligence

- Open science mandates conflict with data privacy: journals require GitHub code sharing, but sensitive data is often entangled with analysis code

- “Anonymized” data proved re-identifiable using just birth dates and surgery dates—removing names isn’t enough

- UK government paused all UK Biobank data access indefinitely; future access likely query-only through secure enclaves

- Every developer sharing code on GitHub risks accidentally exposing sensitive data through logs, test fixtures, or embedded samples