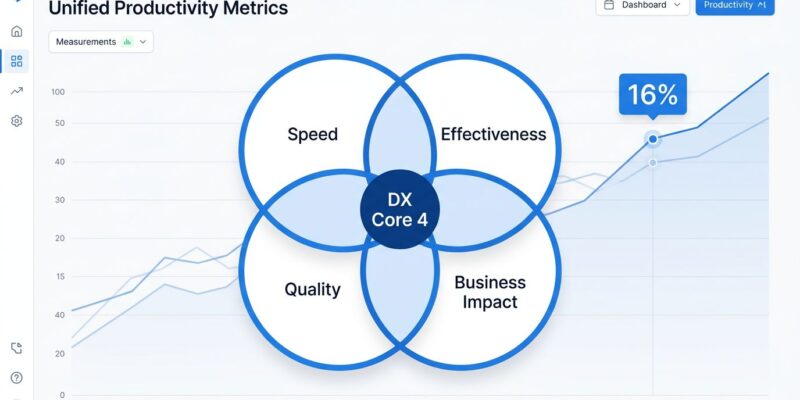

The DX Core 4 framework, announced April 16 at the DX Annual 2026 conference in San Francisco, unifies three major developer productivity measurement methodologies (DORA, SPACE, and DevEx) into four dimensions: Speed, Effectiveness, Quality, and Business Impact. Organizations have been drowning in fragmented metrics while spending $200-600 per month per engineer on AI tools without a way to prove ROI. Vendors promise 30-50% productivity gains, but measured organizational gains land closer to 5-15%, even though developers save 3 hours and 45 minutes weekly. DX Core 4, tested with over 300 organizations, addresses this measurement chaos with a single framework that engineering leaders can use to measure real productivity, not just perceived speed.

The Measurement Crisis That Demanded DX Core 4

Engineering leaders faced an impossible choice. DORA metrics focus on deployment speed but miss developer experience entirely. SPACE measures productivity across five dimensions, satisfaction among them, but overwhelms teams with too many variables to prioritize. DevEx tracks developer happiness but lacks delivery performance context. Using all three together produces fragmented, redundant measurements that are impossible to compare across teams or organizations. None of them could answer the critical 2026 question: “Did our $1M AI coding tool investment actually work?”

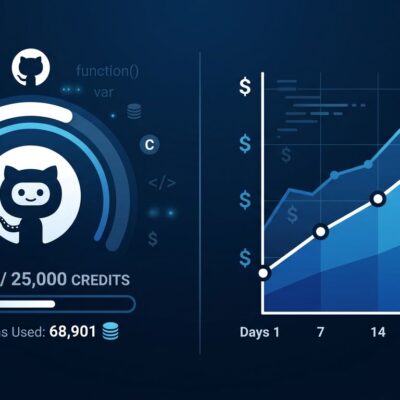

The AI productivity paradox made this crisis acute. Developers save 3 hours and 45 minutes weekly using AI tools, yet organizational productivity gains plateau at 5-15%—nowhere near the 30-50% vendor claims. Moreover, one randomized controlled study found experienced developers using AI took 19% longer to complete tasks while believing they were 20% faster, a staggering 39-point perception gap. The hidden costs tell the real story: 98% more pull requests, 91% longer review times, and code churn rising from 3.1% to 5.7%. In fact, time saved generating code gets spent reviewing and fixing it.

Meanwhile, 80% of large software organizations now have platform teams, and 41% of all code is AI-generated. Engineering leaders spend $200-600 per month per engineer on AI tools. That figure is not the $30-60 seat license that early cost estimates assumed; it reflects actual token spend, and agentic tools like Claude Code can exceed $2,000 per engineer monthly. Without unified measurement, proving the value of these investments was impossible. Traditional DORA metrics can’t separate real AI productivity gains from hidden technical debt.

How DX Core 4 Unifies DORA, SPACE, and DevEx

DX Core 4 distills DORA’s 4 delivery metrics, SPACE’s 5 dimensions, and DevEx’s satisfaction focus into 4 balanced dimensions that prevent one-dimensional optimization. Speed measures delivery velocity through diffs per engineer, reported at the team level rather than per individual to discourage gaming. Effectiveness combines output with the Developer Experience Index (DXI), a composite score derived from 14 factors including code quality, focus time, and CI/CD processes. The framework also tracks “regrettable attrition,” treating developer churn as a team health signal.
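To make the team-level Speed measure concrete, here is a minimal sketch of how diffs per engineer might be aggregated by team instead of by individual. The function name, the input shapes, and the choice to count every rostered engineer in the denominator (including those with no merged diffs) are assumptions for illustration; the article does not spell out how DX computes the figure.

```python
from collections import defaultdict


def diffs_per_engineer_by_team(merged_diff_authors, team_of):
    """Return {team: merged diffs / engineers on that team}.

    merged_diff_authors: iterable of author ids, one entry per merged diff.
    team_of: dict mapping every engineer id to a team name (hypothetical roster).
    """
    diffs = defaultdict(int)
    for author in merged_diff_authors:
        diffs[team_of[author]] += 1

    headcount = defaultdict(int)
    for team in team_of.values():
        headcount[team] += 1

    # Only the team aggregate is reported; no per-person number leaves this function.
    return {team: diffs[team] / headcount[team] for team in headcount}


roster = {"ana": "payments", "ben": "payments", "chen": "search"}
merged = ["ana", "ana", "ana", "ben", "chen"]
speed = diffs_per_engineer_by_team(merged, roster)
```

Because the result is keyed by team, an individual who splits work into many small diffs only moves the team average, which is the anti-gaming property the framework is after.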

Quality incorporates DORA’s change failure rate and reliability metrics. Business Impact, the dimension that most distinguishes DX Core 4 from other frameworks, ties productivity directly to value delivered to the organization, not just activity. Tracking all four together addresses the core flaw of fragmented measurement: optimizing delivery speed at the expense of quality, or boosting individual output while team delivery slows.

The framework uses three complementary data collection approaches. System metrics provide automated, precise data from development tools and repositories. Self-reported surveys capture perceived experience and quality assessments where system metrics fall short. Experience sampling collects real-time feedback while developers work, measuring concrete productivity gains from tools like Copilot. Organizations can deploy DX Core 4 in weeks, rather than through the multi-year dashboard efforts that traditional productivity measurement demanded, by starting with self-reported baselines while building system metric infrastructure. Transparency matters: communicate how data is collected and used at every level of the organization to prevent the fear and counterproductive behaviors that isolated throughput metrics create.

Booking.com Case Study: 16% AI Productivity Lift Proven at Scale

Booking.com deployed DX Core 4 across 3,500 developers and measured a 16% productivity lift specifically attributed to AI—not perceived speed, but actual measured impact. The deployment achieved a 65% increase in AI adoption, a 31% total increase in engineering throughput, a 16% higher PR merge rate for AI users, and saved 150,000 hours in year one. This wasn’t a small pilot. This was enterprise-scale validation of the framework’s ability to separate vendor claims from reality.

Across the 300+ organizations that tested DX Core 4 before its public launch, the results converge on measurable gains: a 3-12% improvement in overall engineering efficiency, a 14% increase in R&D time allocated to feature development instead of toil, and a 15% improvement in employee engagement scores. The Developer Experience Index (DXI) provides the financial translation: a single-point increase saves 13 minutes per week per developer, or roughly 10 hours annually. At 100 developers, a 1-point DXI improvement equals roughly $100,000 annually in saved developer time. Teams with top-quartile DXI scores also show development speed and quality 4-5 times greater than bottom-quartile teams, plus 43% higher employee engagement.
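The DXI arithmetic can be checked with a short sketch. Only the 13 minutes per week per point comes from the figures above; the 46 working weeks per year and the $100 fully loaded hourly cost are assumptions chosen because they reproduce the quoted "10 hours" and "$100,000" numbers, and your own values will differ.

```python
MINUTES_SAVED_PER_WEEK_PER_POINT = 13  # figure quoted in the article


def annual_hours_saved(dxi_points, developers, working_weeks=46):
    """Hours saved per year across all developers (working_weeks is an assumption)."""
    minutes = dxi_points * developers * MINUTES_SAVED_PER_WEEK_PER_POINT * working_weeks
    return minutes / 60


def annual_value(dxi_points, developers, hourly_cost=100.0, working_weeks=46):
    """Dollar value of the saved time (hourly_cost is an assumed loaded rate)."""
    return annual_hours_saved(dxi_points, developers, working_weeks) * hourly_cost


per_developer_hours = annual_hours_saved(dxi_points=1, developers=1)
org_value = annual_value(dxi_points=1, developers=100)
print(f"{per_developer_hours:.1f} hours per developer, ${org_value:,.0f} per 100 developers")
```

Under these assumptions a one-point gain works out to just under 10 hours per developer and just under $100,000 per 100 developers; with 52 working weeks the same formula gives about 11.3 hours, so the headline figure is sensitive to how a working year is defined.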

Major customers using DX Core 4 include Adyen, Dropbox, Vanguard, and Booking.com. The framework was developed in collaboration with the authors of DORA, SPACE, and DevEx, including Dr. Nicole Forsgren. This isn’t a new vendor trying to displace established metrics. This is the original researchers consolidating their work into something actionable.

Why DORA, SPACE, and DevEx Failed Individually

DORA metrics focus on four delivery indicators: deployment frequency, lead time for changes, change failure rate, and mean time to recovery. They excel at measuring CI/CD performance and getting executive buy-in through clear delivery metrics. However, they fail at capturing developer experience, satisfaction, or the non-coding work that consumes developer time. Optimizing solely for DORA metrics can drive burnout—faster deployments don’t mean happier or more effective teams.
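As a rough illustration of the four DORA indicators, the sketch below derives them from a list of deployment records. The `Deployment` record, its field names, and the use of a median for lead time and a mean for recovery time are assumptions made for this example, not a definition taken from DORA or DX.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments, period_days):
    """Compute the four DORA indicators over a reporting period."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_hours = [(d.deployed_at - d.committed_at).total_seconds() / 3600
                  for d in deployments]
    failures = [d for d in deployments if d.failed]
    recovery_hours = [(d.restored_at - d.deployed_at).total_seconds() / 3600
                      for d in failures if d.restored_at is not None]

    return {
        "deployment_frequency_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_recovery_hours": mean(recovery_hours) if recovery_hours else None,
    }


start = datetime(2026, 1, 1, 9)
sample = [
    Deployment(start, start + timedelta(hours=2)),
    Deployment(start, start + timedelta(hours=4), failed=True,
               restored_at=start + timedelta(hours=5)),
    Deployment(start, start + timedelta(hours=6)),
    Deployment(start, start + timedelta(hours=8), failed=True,
               restored_at=start + timedelta(hours=11)),
]
metrics = dora_metrics(sample, period_days=2)
```

Every input here is a delivery event, which illustrates the limitation described above: nothing in these records says anything about developer experience or non-coding work.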

SPACE assesses productivity through five dimensions: Satisfaction and well-being, Performance, Activity, Communication and collaboration, and Efficiency and flow. It’s comprehensive, arguably too comprehensive: five dimensions with multiple factors each create measurement paralysis. Teams struggle to prioritize improvements or translate SPACE findings into action. Survey data from 2,000 developers shows 78% cite tooling as their top satisfaction factor, but SPACE doesn’t tell you which tools deliver ROI.

DevEx, introduced in January 2024 by the same authors who created SPACE, focuses specifically on developers’ satisfaction with their work. It addresses SPACE’s breadth problem but swings too far toward experience without delivery performance context. Consequently, a team can score high on DevEx while shipping slowly or producing low-quality code. Developer happiness matters, but it’s not the only metric that matters.

Using all three together creates fragmentation. You have 14+ metrics across incompatible frameworks with no way to compare results across teams or organizations. Engineering leaders can’t answer “Are we more productive than last quarter?” without first choosing which framework’s lens to look through. DX Core 4 addresses this by providing a universal language for productivity measurement.

Practical Implications for Engineering Leaders

Engineering leaders can now compare AI tools objectively across a unified framework. When evaluating Claude Code versus GitHub Copilot versus Cursor, DX Core 4 provides comparable metrics for speed, effectiveness, quality, and business impact. You’re no longer comparing vendor marketing claims—you’re comparing measured outcomes using the same yardstick.

Proving ROI on AI tool investments becomes quantifiable. If your organization spends $400 per month per engineer on AI coding tools (a realistic average when including token costs), you need to demonstrate value. DX Core 4 shows whether that spending improved throughput, maintained quality, and delivered business impact, or just created more PR review bottlenecks. The productivity paradox taught us that individual time savings don’t automatically translate to organizational value, so measure the full delivery pipeline, not just coding speed.
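A back-of-the-envelope version of that ROI check might look like the sketch below. The $400 monthly spend and the 3 hours 45 minutes of weekly savings are figures from this article; `realized_fraction` (the share of saved time that actually becomes delivered work rather than extra review and rework), the $100 hourly cost, and the function itself are hypothetical and would need to be replaced with your own measurements.

```python
def monthly_ai_roi(spend_per_engineer, hours_saved_per_week, realized_fraction,
                   hourly_cost, weeks_per_month=52 / 12):
    """Estimate per-engineer monthly ROI of an AI tool.

    realized_fraction is a hypothetical 0-1 factor for how much of the
    individually saved time survives review, rework, and other bottlenecks.
    """
    realized_hours = hours_saved_per_week * weeks_per_month * realized_fraction
    value = realized_hours * hourly_cost
    net = value - spend_per_engineer
    return {
        "realized_hours": realized_hours,
        "value": value,
        "net": net,
        "roi": net / spend_per_engineer,
    }


# Half of the saved time is assumed to reach delivery; a 4-week month keeps the math simple.
half_realized = monthly_ai_roi(400, 3.75, 0.5, 100.0, weeks_per_month=4)
# None of the saved time is assumed to reach delivery (all absorbed by review and fixes).
none_realized = monthly_ai_roi(400, 3.75, 0.0, 100.0, weeks_per_month=4)
```

The same spend and the same individual time savings swing from a positive to a fully negative return depending on the realized fraction, which is why the number has to come from pipeline-level measurement and not from self-reported coding speed.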

Platform engineering investments get the same treatment. With 80% of large organizations running platform teams and 75% providing self-service portals, justifying continued investment requires proof that these platforms actually improve developer productivity. DX Core 4 measures whether your internal developer platform reduces friction (Effectiveness), accelerates delivery (Speed), maintains reliability (Quality), and delivers business value (Business Impact). Teams with strong developer experience perform 4-5 times better than those with poor experience. Now you can measure which bucket your organization falls into and track improvement over time.

The framework balances competing priorities engineering leaders constantly juggle. You can optimize for speed without sacrificing quality by tracking both dimensions simultaneously. You can adopt AI tools while monitoring whether they introduce technical debt through the Quality dimension. Additionally, you can invest in developer experience improvements while ensuring they translate to business impact. Organizations achieve 30-50% faster deployments through platform engineering, but only when they measure and optimize across all four dimensions rather than speed alone.

Key Takeaways

- DX Core 4 unifies DORA, SPACE, and DevEx into four actionable dimensions: Speed, Effectiveness, Quality, and Business Impact

- Announced April 16, 2026, at the DX Annual 2026 conference, and developed with Dr. Nicole Forsgren and the original framework authors

- Booking.com proved 16% AI productivity lift at scale across 3,500 developers, saving 150,000 hours in year one

- DXI metric quantifies developer experience in financial terms: a 1-point improvement saves roughly $100K annually per 100 developers

- Engineering leaders can finally prove ROI on AI tools costing $200-600/month per engineer instead of relying on vendor claims

- Framework addresses the productivity paradox by measuring the full delivery pipeline, not just coding speed gains