AI agents have amnesia. Ask Claude Code or Cursor to screen stocks with specific criteria, and tomorrow it starts from scratch: relearning the same API calls, rediscovering the same data sources, repeating the same debugging loops. Every task burns thousands of tokens to relearn what the agent already solved yesterday. This isn’t a quirk of current tools; it follows from their architecture. Stateless agents treat every request as if it were the first, wasting compute and repeating the same mistakes indefinitely. GenericAgent, a self-evolving framework released in January 2026, flips this model with agents that remember, accumulate skills, and get better with every task. The result: 6x lower token consumption and full system control from a 3,000-line codebase.

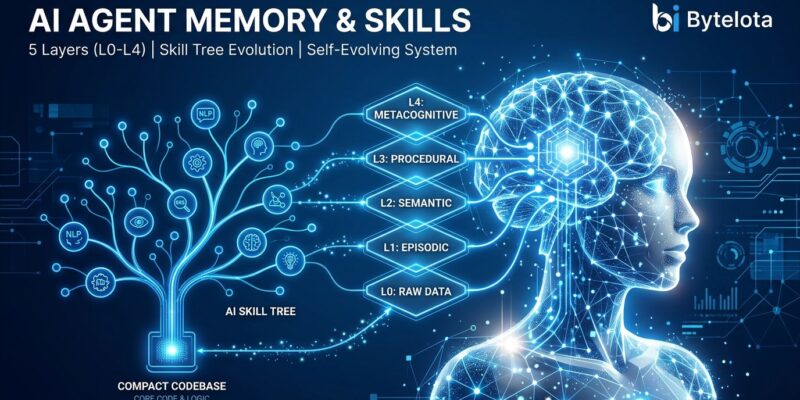

The Five-Layer Memory System

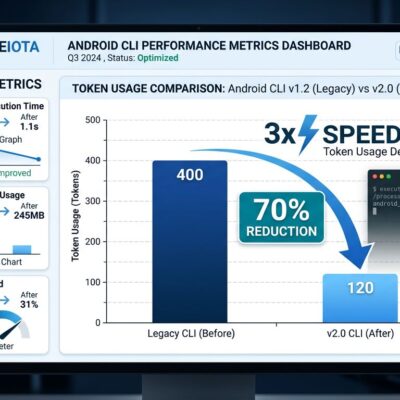

GenericAgent solves the amnesia problem with a layered memory architecture that keeps context under 30,000 tokens, while competing agents consume 200,000 to 1 million. Five memory tiers enable skill accumulation without context explosion.

L0 holds meta rules—behavioral constraints that govern agent conduct. L1 provides insight indexing for rapid information routing. L2 stores global facts accumulated during extended operation. L3 contains the skill tree: reusable workflows crystallized from completed tasks. L4, added April 11, 2026, archives distilled session records enabling long-horizon recall.
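To make the tiering concrete, here is a minimal Python sketch of a five-tier store that assembles context under a token budget. It is an illustration under stated assumptions, not GenericAgent's actual implementation: the names `Tier`, `LayeredMemory`, `build_context`, and `estimate_tokens` are hypothetical, the tier-by-tier loading order is a guess, and the four-characters-per-token estimate is a crude stand-in for a real tokenizer.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Tier(IntEnum):
    """The five tiers named in the text; lower numbers are loaded first."""
    META_RULES = 0       # L0: behavioral constraints
    INSIGHT_INDEX = 1    # L1: routing hints
    GLOBAL_FACTS = 2     # L2: facts accumulated over time
    SKILL_TREE = 3       # L3: reusable workflows
    SESSION_ARCHIVE = 4  # L4: distilled session records


def estimate_tokens(text: str) -> int:
    # Assumption: roughly one token per four characters.
    return max(1, len(text) // 4)


@dataclass
class LayeredMemory:
    # Hypothetical storage: one list of text entries per tier.
    entries: dict = field(default_factory=lambda: {t: [] for t in Tier})

    def add(self, tier: Tier, text: str) -> None:
        self.entries[tier].append(text)

    def build_context(self, budget_tokens: int = 30_000) -> list:
        """Collect entries tier by tier until the budget would be exceeded."""
        context, used = [], 0
        for tier in Tier:
            for text in self.entries[tier]:
                cost = estimate_tokens(text)
                if used + cost > budget_tokens:
                    return context
                context.append(text)
                used += cost
        return context
```

The point of the sketch is the budget check: however much the lower tiers accumulate, the context handed to the model stays below a fixed ceiling.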

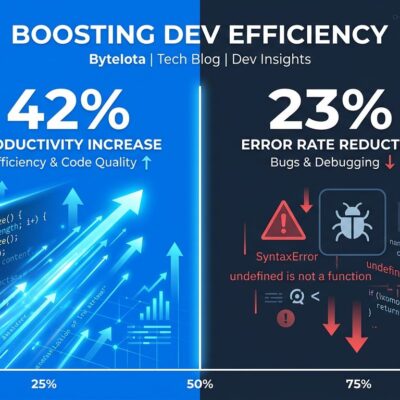

The efficiency gain comes from reuse. When GenericAgent completes a stock screening task for the first time, it autonomously installs dependencies, writes scripts, and debugs API calls. The execution path crystallizes into an L3 skill. The second time you ask for stock screening, it’s a single-line invocation, with no relearning and no token waste. This pattern reuse delivers 6x token efficiency and costs “a fraction” of stateless alternatives, according to industry analysis.

Self-Evolution in Practice

The self-bootstrap proof demonstrates what memory-based learning enables. Everything in the GenericAgent repository—from Git installation to every commit message—was completed autonomously by GenericAgent. The creator never opened a terminal. The agent explored, learned, crystallized skills, and executed full system control.

Real-world use cases show the same pattern. Stock screening with quantitative conditions: the agent builds the workflow once, then reuses it on demand. Web delivery automation: navigate apps, select items, complete checkout—all autonomous after initial learning. Alipay expense tracking via smartphone ADB control: natural language queries like “find expenses over ¥2,000 in the last three months” trigger saved patterns.

The skill acquisition cycle is simple: encounter new task, explore autonomously, crystallize execution path to L3 memory, reuse directly next time. Skills accumulate, forming a personalized tree that grows from a 3,000-line seed to full system control.
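The cycle above can be sketched in a few lines of Python. This is a simplified illustration, not the framework's code: `SkillTree`, `run`, and `fake_explore` are hypothetical names, and matching tasks by exact string is an assumption made for brevity (a real agent would need some form of fuzzy or semantic matching).

```python
from typing import Callable, Dict, List


class SkillTree:
    """Stores the steps that solved a task so later runs can reuse them."""

    def __init__(self) -> None:
        self._skills: Dict[str, List[str]] = {}
        self.explorations = 0  # how many times the expensive path ran

    def run(self, task: str, explore: Callable[[str], List[str]]) -> List[str]:
        # Reuse: a known task returns its saved steps immediately.
        if task in self._skills:
            return self._skills[task]
        # Explore: the costly first attempt (LLM calls, debugging, retries).
        self.explorations += 1
        steps = explore(task)
        # Crystallize: save the execution path for next time.
        self._skills[task] = steps
        return steps


def fake_explore(task: str) -> List[str]:
    # Stand-in for autonomous exploration; returns a fixed step list.
    return ["install dependencies", "write script", f"run: {task}"]


tree = SkillTree()
first = tree.run("screen stocks", fake_explore)
second = tree.run("screen stocks", fake_explore)
```

After both calls, `tree.explorations` is 1: the second request is served from the saved skill without exploring again.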

Minimal Code, Maximum Capabilities

GenericAgent runs on 3,000 lines of core code. The execution loop—perceive environment, reason about task, execute tool, record experience—is approximately 100 lines. Nine atomic tools provide the foundation: code execution, file operations (read/write/patch), browser control (scan/execute JavaScript), human confirmation, context persistence, and cross-session learning.
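As a rough picture of what a perceive, reason, execute, record loop looks like, consider the sketch below. It is not taken from the GenericAgent source: `run_agent`, `decide`, and the two toy tools are hypothetical, and `scripted_decide` stands in for the LLM call that would normally choose the next action.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]
Step = Tuple[str, str, str]  # (tool name, argument, result)


def run_agent(
    task: str,
    tools: Dict[str, Tool],
    decide: Callable[[str, List[Step]], Tuple[str, str]],
    max_steps: int = 10,
) -> List[Step]:
    experience: List[Step] = []
    observation = task  # perceive: start from the task description
    for _ in range(max_steps):
        action, arg = decide(observation, experience)  # reason
        if action == "done":
            break
        result = tools[action](arg)                    # execute
        experience.append((action, arg, result))       # record
        observation = result                           # perceive the outcome
    return experience


# Toy tools backed by an in-memory dict instead of a real filesystem.
store: Dict[str, str] = {}


def write_note(arg: str) -> str:
    store["note"] = arg
    return "written"


def read_note(arg: str) -> str:
    return store.get("note", "")


def scripted_decide(observation: str, experience: List[Step]) -> Tuple[str, str]:
    # Stand-in for the LLM: write once, read back, then stop.
    plan = [("write", "hello"), ("read", ""), ("done", "")]
    return plan[len(experience)]


trace = run_agent("save a note", {"write": write_note, "read": read_note}, scripted_decide)
```

The recorded `trace` is the raw material a memory layer could later distill into a reusable skill.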

Compare this to competitors. OpenClaw requires 530,000 lines. Claude Code is closed-source and large-scale. GenericAgent’s philosophy is that minimalism plus self-evolution beats preloaded features. The seed code stays static; capabilities grow through memory accumulation, not code expansion, as noted in 2026 self-evolution trends.

This architectural choice enables transparency and control. Developers can read the entire codebase in an afternoon. Debugging is straightforward. Modifications are simple. Yet the agent achieves full system control—browser automation, terminal access, filesystem management, even mobile device control via ADB.

Quick Start: Four Steps to Autonomous Agents

Installation takes under a minute. Clone the repository, install two dependencies (streamlit and pywebview), copy the API key template, and configure your LLM credentials. GenericAgent supports Claude, Gemini, Kimi, MiniMax, and any OpenAI-compatible endpoint.

```bash
git clone https://github.com/lsdefine/GenericAgent.git
cd GenericAgent
pip install streamlit pywebview
cp mykey_template.py mykey.py
# Edit mykey.py with your LLM API credentials
python launch.pyw
```

Try a first automation: stock screening or web task navigation. Watch the L3 skill tree grow as the agent completes tasks. Subsequent runs will reuse learned patterns directly. No prompt engineering is required: just describe the task in natural language and let the memory system handle the rest.

The framework includes multiple frontend options beyond the default Streamlit interface: WeChat and QQ integration for Chinese users, Feishu and DingTalk for enterprise deployment, and a Qt desktop application for cross-platform native interfaces.

Why Memory Beats Stateless

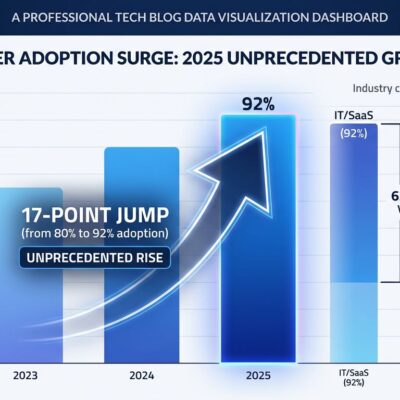

2026 is the year memory became mandatory. According to the State of AI Agent Memory report, “memory is now a first-class architectural component with its own benchmark suite, its own research literature, and a measurable performance gap between approaches.” Graph memory, experimental in 2024, is in production now. Self-evolving agents can rewrite parts of their own codebase in controlled, sandboxed environments.

The stateless versus stateful debate is settled. Stateless agents are simple and scalable but waste tokens and repeat mistakes. Stateful agents add complexity but learn and improve. GenericAgent proves stateful works at production scale: 6x token efficiency, autonomous skill growth, and full system control, as detailed in stateful architecture guides.

The latency trade-off exists—stateful agents respond in 150-500 milliseconds versus 50-150ms for stateless—but that overhead buys continuous improvement. Stateless agents burn tokens forever. Stateful agents accumulate knowledge and reduce costs over time.
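A back-of-the-envelope model shows how the savings compound. The sketch below rests on one assumption: a stateful agent pays full price on the first run and one-sixth of that on every repeat, which is one reading of the article's 6x figure. The function name `cumulative_tokens` and the 60,000-token first-run cost are placeholders for illustration, not measurements.

```python
def cumulative_tokens(
    runs: int,
    first_run_tokens: int,
    stateful: bool,
    reuse_factor: float = 6.0,
) -> float:
    """Total tokens spent after `runs` repetitions of the same task."""
    if runs <= 0:
        return 0.0
    if not stateful:
        # Stateless: every run pays the full exploration cost.
        return float(runs * first_run_tokens)
    # Stateful (assumed): full cost once, then a reduced cost per repeat.
    return first_run_tokens + (runs - 1) * first_run_tokens / reuse_factor


# Placeholder workload: 10 repeats of a task that costs 60,000 tokens cold.
stateless_total = cumulative_tokens(10, 60_000, stateful=False)
stateful_total = cumulative_tokens(10, 60_000, stateful=True)
```

Under these assumptions the stateless total is 600,000 tokens and the stateful total is 150,000. The gap widens with each additional repeat, which is the sense in which a small per-request latency overhead can pay for itself.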

The Future of Autonomous AI

GenericAgent demonstrates that memory-based learning isn’t theoretical anymore. It’s deployed daily in research labs, automating tasks that would require thousands of lines of custom code and constant token expenditure with stateless alternatives. The 3,000-line seed grows into a personalized skill tree tailored to your specific workflows.

Try it this week. Clone the repository, configure your LLM API key, and run one autonomous task. Watch the skill crystallization happen. See the token savings compound. This is what production-grade autonomous agents look like in 2026: memory-first, self-evolving, and genuinely useful.