Alibaba’s Qwen team released Qwen3.6-35B-A3B on April 16, 2026. The open-source AI coding model uses a Mixture-of-Experts architecture to activate only 3 billion of its 35 billion parameters per token, yet it outperforms dense models with 30B+ active parameters. On SWE-bench Verified it scores 73.4%, beating Google’s Gemma4-31B by 21.4 points. Released under the Apache 2.0 license, the model runs locally on consumer hardware when quantized to 21GB. It gained immediate traction on Hacker News, drawing 758 points and 352 comments.

3 Billion Active Beats 30 Billion Dense

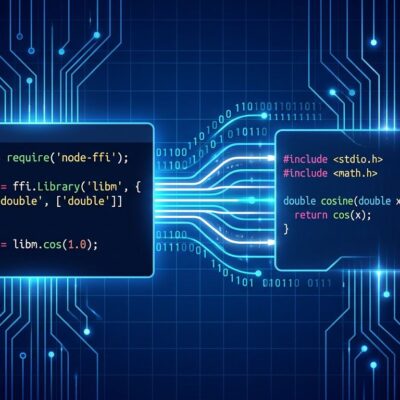

The efficiency comes from Qwen3.6’s sparse Mixture-of-Experts architecture. Each MoE layer contains 256 expert sub-networks, but the router activates only 8 of them plus 1 shared expert for any given token, 9 in total. As a result, only about 3 billion of the model’s 35 billion parameters (roughly 9%) do work on each token, which cuts per-token compute by about 90% compared with a dense model of the same size.
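To make the routing idea concrete, here is a minimal sketch of top-k expert selection for a single token, using the expert counts quoted above. The softmax router, the renormalization of the selected gate weights, and the random logits are illustrative assumptions; the article does not describe Qwen3.6's actual router.

```python
import math
import random

NUM_EXPERTS = 256   # routed experts per MoE layer (figure from the article)
TOP_K = 8           # routed experts activated per token (figure from the article)
NUM_SHARED = 1      # always-on shared expert (figure from the article)


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and renormalize their gate weights.

    Returns (expert_index, weight) pairs whose weights sum to 1.
    Renormalizing over the selected experts is an assumption of this sketch.
    """
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


def active_fraction(active_params, total_params):
    """Share of the model's weights used for a single token."""
    return active_params / total_params


rng = random.Random(0)  # fixed seed so the sketch is reproducible
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in router scores
selected = route_token(logits)

experts_run = len(selected) + NUM_SHARED
print(f"experts run per layer: {experts_run}")
print(f"active share of weights: {active_fraction(3e9, 35e9):.1%}")
```

Only the selected experts' feed-forward blocks would be evaluated for that token, which is where the compute saving comes from; the full set of weights still has to be held in memory.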

That efficiency translates to practical deployment. A quantized 21GB version runs on an $800 RTX 4090 or an M5 MacBook, whereas a dense 35B model is typically served from enterprise GPUs such as an 8x A100 node. For developers paying $0.03 per 1K tokens for GPT-4 API access (roughly $20-30 per day for heavy use), an $800 card pays for itself in roughly 27 to 40 days at those rates, while eliminating cloud latency and keeping proprietary code off external servers.
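The break-even arithmetic can be checked with a few lines. This sketch plugs in only the figures quoted above ($800 of hardware, $0.03 per 1K tokens, $20-30 per day) and ignores electricity and the rest of the machine, so it is a rough lower bound; with these inputs the payback lands at about 27 to 40 days.

```python
def payback_days(hardware_cost, daily_api_spend):
    """Days of avoided API spend needed to cover the hardware purchase."""
    return hardware_cost / daily_api_spend


def tokens_per_day(daily_api_spend, price_per_1k_tokens):
    """Daily token volume implied by a given spend and per-1K-token price."""
    return daily_api_spend / price_per_1k_tokens * 1000


HARDWARE_COST = 800.0   # USD, GPU price quoted in the article
PRICE_PER_1K = 0.03     # USD per 1K tokens, API price quoted in the article

for spend in (20.0, 30.0):  # the article's "heavy use" range, USD per day
    days = payback_days(HARDWARE_COST, spend)
    volume = tokens_per_day(spend, PRICE_PER_1K)
    print(f"${spend:.0f}/day is about {volume:,.0f} tokens/day; payback in {days:.0f} days")
```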

On Terminal-Bench 2.0, which tests shell command generation, Qwen3.6 scores 51.5% versus Gemma4-31B’s 42.9%. The SWE-bench Verified score of 73.4% means the model autonomously resolved nearly three out of four of the benchmark’s real-world GitHub issues, a level that makes it a credible candidate for automated bug triaging and code refactoring.

Apache 2.0 Removes the Legal Gray Areas

Qwen3.6-35B-A3B ships under the Apache 2.0 license, meaning developers can use, modify, and distribute it for any purpose, commercial or non-commercial, with no field-of-use restrictions; the main obligations are to keep the license and attribution notices. This matters more than most licensing discussions acknowledge.

Llama 3’s license requires a separate agreement from Meta for products with more than 700 million monthly active users. That’s fine for most startups today, but it creates uncertainty around future acquisitions. Healthcare companies face terms-of-service and compliance hurdles before sending HIPAA-covered patient data to a hosted model such as GPT-4. Defense contractors can’t use cloud APIs in air-gapped environments. An Apache 2.0 model that runs locally removes all of these barriers. One Hacker News commenter put it plainly: “Finally, a powerful model without legal ambiguity.”

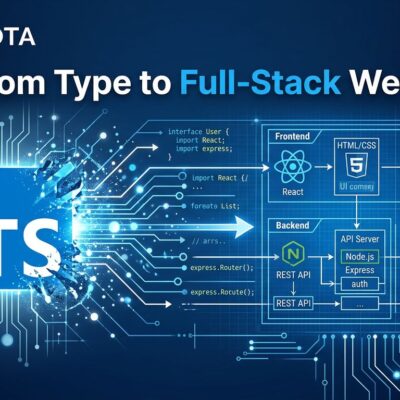

Multimodal Support for Visual Coding Tasks

Qwen3.6 is natively multimodal, accepting text, image, and video inputs in the same prompt. It can process hour-long videos with up to 224K video tokens and scored 83.7% on VideoMMMU, the highest video-understanding score in its class. This enables “design to code” workflows: upload a Figma mockup or hand-drawn sketch, and the model generates HTML/CSS/React components.

Simon Willison tested Qwen3.6 against Claude Opus 4.7 on his satirical “pelican riding a bicycle” SVG generation benchmark. Qwen produced superior results: “The bicycle frame is the correct shape. There are clouds in the sky,” while Opus 4.7’s frame was “entirely the wrong shape.” Willison emphasized the narrow scope of this test: “I very much doubt that a 21GB quantized version of their latest model is more powerful or useful than Anthropic’s latest proprietary release.” The point: models can excel at narrow specialties without being generally superior.

The Benchmark Gap Reality Check

Qwen3.6’s 73.4% SWE-bench score is impressive on paper. In practice, developers report 50-60% success rates on proprietary codebases versus the benchmark’s standardized tests. That gap isn’t unique to Qwen—it’s a systemic issue with how AI coding models are evaluated.

Benchmark overfitting concerns persist, though no evidence yet suggests Qwen trained on test sets. The real issue is simpler: standardized tests don’t capture domain-specific complexity, legacy code patterns, or undocumented dependencies that characterize real codebases.

Simon Willison’s pelican test makes the same point. It showed Qwen beating Claude Opus 4.7, but as he noted, “the loose connection to utility has been broken.” Models trained on specific visual patterns (SVG structure, common shapes) perform well on those patterns without generalizing to other tasks.

The practical takeaway: test on your own evaluation sets before deploying to production. Qwen3.6 is production-viable for code refactoring and bug fixing based on the 73.4% score, but human review remains necessary for complex systems. The model excels at coding—especially agentic workflows with tool use—but don’t expect GPT-4’s general knowledge breadth.
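A private evaluation set does not need much machinery. The sketch below is one possible shape for it: a list of tasks, each with its own acceptance check, and a pass rate to compare against the published score. The `Task` class, `pass_rate` helper, and `fake_model` stand-in are hypothetical names for illustration; in a real setup the check would apply the model's patch and run the project's test suite rather than search for a substring.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Task:
    name: str
    prompt: str
    check: Callable[[str], bool]  # True if the model's output is acceptable


def pass_rate(tasks: List[Task], generate: Callable[[str], str]) -> float:
    """Fraction of tasks whose generated output passes its own check."""
    if not tasks:
        raise ValueError("need at least one task")
    passed = sum(1 for task in tasks if task.check(generate(task.prompt)))
    return passed / len(tasks)


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call, returning canned answers."""
    canned = {
        "reverse a string": "s[::-1]",
        "sum a list": "total(xs)",  # deliberately wrong to show a failing task
    }
    return canned.get(prompt, "")


tasks = [
    Task("reverse", "reverse a string", lambda out: "[::-1]" in out),
    Task("sum", "sum a list", lambda out: "sum(" in out),
]

rate = pass_rate(tasks, fake_model)
print(f"in-house pass rate: {rate:.0%} (published SWE-bench Verified: 73.4%)")
```

Swapping `fake_model` for a function that calls the deployed model gives a number that reflects your own codebase rather than the benchmark's.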

What This Means for Local AI Development

Qwen3.6 represents an inflection point for local AI development, not because it’s the best model available—it isn’t—but because it’s the first open-source coding model that combines production-grade performance (73.4% SWE-bench) with consumer hardware viability (21GB quantized) and unrestricted licensing (Apache 2.0).

The Mixture-of-Experts results suggest that a model with 3 billion active parameters can rival dense models of around 30 billion parameters when properly optimized. That architectural shift will likely ripple through the industry, because dense models spend compute on every parameter for every token, which is wasteful at deployment time. Expect future releases, open-source and proprietary alike, to adopt similar sparse architectures.

Apache 2.0 licensing matters more for enterprise adoption than any benchmark score. Companies can fine-tune on proprietary datasets, embed in commercial products, and deploy in air-gapped environments without legal risk. That removes the adoption barriers that keep many organizations dependent on cloud APIs despite cost and privacy concerns.

The open-source AI gap is closing. In 2023, open models lagged proprietary alternatives by 12-18 months. In 2026, that gap has narrowed to under 6 months for coding tasks. Moonshot’s Kimi K2.5, released in January 2026, scores 76.8% on SWE-bench Verified, ahead of Qwen3.6’s 73.4% and in line with estimates of GPT-4’s performance. If this trajectory continues, open-source and proprietary models could reach parity by Q4 2026.

Run Qwen3.6 locally via Ollama, or access it through Alibaba Cloud’s API for cloud-based inference. The 21GB quantized version needs an RTX 4090 (24GB VRAM) or an M5 MacBook with 64GB RAM. Full precision (BF16), at roughly 70GB of weights, requires 8x H100 GPUs for optimal throughput.
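For the local route, a minimal sketch of calling a running Ollama server from Python over its REST API might look like the following. It assumes Ollama is listening on its default port and that the model has already been pulled; the model tag `qwen3.6:35b-a3b` is a hypothetical placeholder, so check `ollama list` for the name actually installed.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "qwen3.6:35b-a3b"  # hypothetical tag; use the name shown by `ollama list`


def build_request(prompt, model=MODEL_TAG, url=OLLAMA_URL):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model=MODEL_TAG, timeout=300):
    """Send the prompt and return the generated text (requires a running server)."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

With the server running, `print(generate("Write a Python function that reverses a linked list."))` would return the model's completion as a single string. Keeping `build_request` separate makes the payload easy to inspect without a live server.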