Nvidia announced a $26 billion investment in open-source AI models this week, positioning the chip giant as a direct competitor to OpenAI and Anthropic days before GTC 2026 kicks off March 16. First reported by Wired on March 9 and confirmed by Nvidia executives March 12, the move marks a strategic pivot: give away AI software for free to drive explosive demand for Nvidia’s GPU hardware. Jensen Huang’s keynote Monday (March 16, 11 AM PT) is expected to unveil enterprise AI platforms and a Groq-powered inference chip—while Nvidia simultaneously pulls back investments in OpenAI and Anthropic.

The Strategy: Commoditize Software, Dominate Hardware

Nvidia’s $26 billion investment funds open-source AI model development specifically to drive GPU sales. The playbook is straightforward: release powerful models for free (Nemotron 3 family with open weights, training recipes, and datasets), lower the barrier to entry for developers, and watch the explosion of AI applications—all requiring Nvidia GPUs for training and inference. This isn’t charity. It’s strategic lock-in disguised as openness.

The Nemotron 3 Super model demonstrates the technical quality. With 120 billion parameters, a native 1 million token context window, and 449.5 tokens per second throughput, it delivers three times the speed of Claude while ranking #1 on PinchBench for agentic reasoning. Perplexity, Palantir, ServiceNow, Cursor, and CrowdStrike already use Nemotron for AI agent deployments. However, the models run fastest on Nvidia hardware—CUDA optimization and native NVFP4 training on Blackwell GPUs create performance advantages that disappear on competing hardware.
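To put those figures in practical terms, here is a rough back-of-the-envelope sketch. It uses only the numbers quoted above (120 billion parameters, 449.5 tokens per second, a roughly 3x speed advantage). The response length, the function names, and the "implied baseline" rate are illustrative assumptions, not published specifications.

```python
"""Back-of-the-envelope numbers for a 120B-parameter model at 449.5 tokens/s."""

PARAMS = 120e9          # parameter count quoted in the article
NEMOTRON_TPS = 449.5    # throughput quoted in the article
# Assumption: "three times the speed" implies a baseline near one third of that rate.
BASELINE_TPS = NEMOTRON_TPS / 3


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady decode rate (ignores prompt processing)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (no KV cache or activations)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    response_tokens = 2_000  # hypothetical agent response length
    for name, tps in [("Nemotron 3 Super", NEMOTRON_TPS), ("implied baseline", BASELINE_TPS)]:
        seconds = generation_seconds(response_tokens, tps)
        print(f"{name}: {seconds:.1f} s for {response_tokens} tokens")

    for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label} weights: {weight_memory_gb(PARAMS, bits):.0f} GB")
```

The weight estimate shows why low-precision formats matter for a model this size: 4 bits per parameter needs a quarter of the memory that 16 bits does. Real 4-bit formats also store scaling factors, so actual footprints run somewhat higher than this simple estimate.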

Anthropic recently dropped its long-context premium, offering 1M tokens at standard pricing for Opus 4.6 and Sonnet 4.6. Nvidia’s Nemotron 3 matches that context window but goes further—fully open weights with no API lock-in. The catch? Best performance requires Nvidia silicon.

The Irony: Competing with Your Own Customers

While investing $26 billion in competing AI models, Nvidia is simultaneously scaling back its investments in OpenAI and Anthropic. Jensen Huang signaled at the Morgan Stanley Tech Conference in early March that these are likely Nvidia’s final private investments in both companies. Nvidia’s participation in OpenAI’s $110 billion funding round was finalized at $30 billion, and the associated infrastructure plans were revised down to 5 gigawatts. This creates an awkward dynamic: Nvidia sold GPUs to these AI labs, invested in them, and now competes against them while reducing financial support.

Nemotron 3 Super benchmarks near Claude Opus 4.5 Haiku, which puts it in direct competition in the mid-tier intelligence range. Anthropic offers no open-weight models and OpenAI keeps its flagship models closed, leaving much of that market to Chinese labs (DeepSeek, Alibaba’s Qwen). Nvidia steps into this gap, targeting both proprietary Western competitors and hardware-agnostic Eastern alternatives. The question developers face: Can Nvidia be a neutral platform while competing with its customers?

GTC 2026 Starts Monday: What to Expect

GTC 2026 runs March 16-19 in San Jose with over 30,000 attendees from 190+ countries. Jensen Huang’s keynote Monday (March 16, 11 AM PT at SAP Center) is expected to reveal the practical implementation of the $26 billion bet. Anticipated announcements include a Groq-powered inference chip delivering hundreds or thousands of tokens per second for real-time AI applications, possible details on the rumored “NemoClaw” enterprise AI agent platform (unconfirmed, based on Wired sources), and Rubin platform specifications promising 10x inference cost reduction and 4x fewer GPUs for training MoE models. Rubin enters full production with partner availability in H2 2026.

The conference features over 1,000 sessions covering AI factories, agentic AI, and inference infrastructure, with 240+ Nvidia Inception startups participating. CNBC reports the CPU will take center stage alongside the traditional GPU focus, suggesting a broader compute architecture reveal. The timing is strategic: announce a $26 billion investment days before the conference, reveal implementation details in the keynote, and provide hands-on access through workshops and labs.

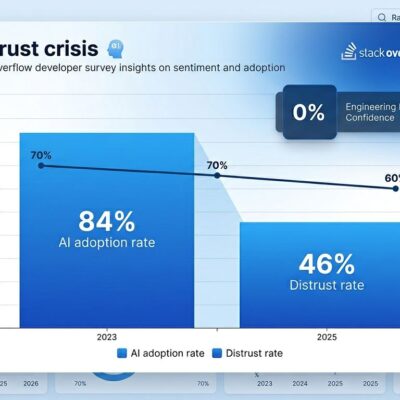

Developer Reaction: Quality Meets Skepticism

Developers appreciate Nemotron 3’s technical quality—best-in-class agentic reasoning, 1 million token context, and genuinely open weights with training recipes and datasets. Task compliance and tool call success rates test well in production deployments. However, skepticism emerges around the “openness” framing when models require CUDA optimization for competitive performance. Igor’s Lab captured the sentiment: “When ‘open source’ suddenly smacks of platform strategy.”

Critics note that Nvidia is attempting to occupy every layer of the AI stack: hardware (GPUs), runtime, frameworks, models, benchmarks, and deployment infrastructure. Chinese open-source models like DeepSeek and Qwen remain truly hardware-agnostic, running efficiently across various architectures. Nemotron’s open weights don’t guarantee neutral platform status when performance advantages lock developers into Nvidia workflows and infrastructure. This is the paradox of open AI models: accessible code doesn’t prevent strategic lock-in through optimization.

What It Means

Nvidia’s $26 billion investment commoditizes the AI software layer while maintaining hardware dominance. Developers gain access to powerful free models comparable to mid-tier commercial offerings (Claude Opus 4.5 Haiku level), but the “openness” comes with CUDA optimization that ties performance to Nvidia infrastructure. The GTC 2026 keynote Monday will reveal whether this strategy extends beyond models to full enterprise platforms.

The competitive dynamic shifts: Nvidia moves from neutral GPU supplier to active participant in the model layer, directly challenging OpenAI, Anthropic, and Chinese labs while reducing investments in former partners. For developers, the trade-off is clear—free, powerful models for agentic AI workflows, with optimal performance requiring commitment to Nvidia’s ecosystem. Watch the Jensen Huang keynote Monday, March 16 at 11 AM PT to see how $26 billion reshapes enterprise AI infrastructure.