Python 3.14.5, released May 10, reverted the incremental garbage collector that shipped with 3.14.0 in October 2025, after production deployments reported up to 5x memory increases. The Python core team pulled the release forward from June 9 to ship the emergency fix faster. The release restores the traditional 3-generation GC from version 3.13 and abandons Mark Shannon’s incremental collector, which promised shorter pauses but caused memory pressure serious enough to crash production systems.

This marks the second failure for incremental GC. Python attempted the same optimization in 3.13, rolled it back before release, tried again in 3.14, and reverted again within months.

What Broke: Memory Exploded in Production

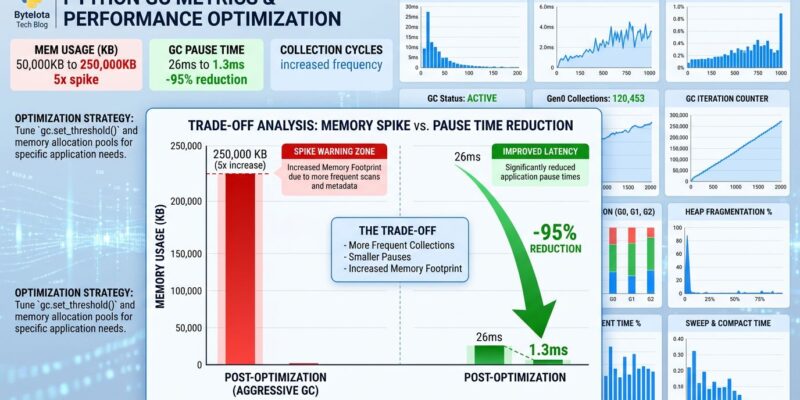

The incremental garbage collector cut pause times from 26ms to 1.3ms—a 20x improvement in benchmarks. However, production told a different story. Peak memory usage climbed 2.7x to 5x depending on workload. In some cases, no full collection ran until iteration 20,000, leaving 18,000 trash cycles sitting in memory waiting to be reclaimed.

Django developer Adam Johnson documented the failure in detail. His team migrated a Django project to Python 3.14 and hit out-of-memory errors during database migrations on Heroku review apps with 512MB RAM. Memory escalated progressively: 527M (101.5%) → 977M (190.3%) → 1033M (201.7%) → SIGKILL. The root cause was temporary model classes in ModelState.render() hanging around instead of being collected. His workaround: force gc.collect() after each successful migration. The incremental collector couldn’t keep pace with Django’s creation of cyclical objects between model classes and field objects.
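The workaround pattern can be sketched without Django. This is a minimal illustration, not Johnson's actual code: `FakeModel`, `fake_migration`, and `run_migrations` are hypothetical names, and the cycle here only imitates the model-to-field references described above. Weak references are used purely to observe whether the objects were reclaimed.

```python
import gc
import weakref


class FakeModel:
    """Hypothetical stand-in for a temporary model class that sits in a cycle."""

    def __init__(self):
        # model -> field dict -> model: reference counting alone cannot free this.
        self.field = {"model": self}


tracked = []  # weak references, so tracking does not keep the objects alive


def fake_migration():
    # Create 1,000 cyclic objects that become garbage as soon as they are built.
    for _ in range(1000):
        tracked.append(weakref.ref(FakeModel()))


def run_migrations(migrations):
    """Run each step, then force a full collection before starting the next."""
    for migrate in migrations:
        migrate()
        gc.collect()  # full pass: reclaim the cycles the step left behind


run_migrations([fake_migration, fake_migration])
alive = sum(1 for ref in tracked if ref() is not None)
print(f"created {len(tracked)} objects, {alive} still alive")
```

The explicit `gc.collect()` trades a longer pause at a known point for a bounded amount of garbage between steps, which is the trade a memory-capped container needs.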

The gap between lab results and production failures reveals a testing problem. Mark Shannon’s benchmarks showed a 3% overall speedup and 50% gains on GC-heavy workloads. But those benchmarks measured pause times and throughput, not memory accumulation. Production exposed what the tests missed: pauses got shorter, but the garbage held in memory between collections did not shrink. It grew.
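A benchmark that reports both numbers side by side is not hard to build. The sketch below is one possible approach, not the harness the core team used: it times collections through the standard `gc.callbacks` hook and reads peak traced memory from `tracemalloc`. The names `GcPauseTimer`, `churn`, and `measure` are hypothetical, and `churn` is a toy workload.

```python
import gc
import time
import tracemalloc


class GcPauseTimer:
    """Record the longest collection observed through gc.callbacks."""

    def __init__(self):
        self.max_pause = 0.0
        self.collections = 0
        self._started = None

    def __call__(self, phase, info):
        # The interpreter calls this with phase "start" and then "stop".
        if phase == "start":
            self._started = time.perf_counter()
        elif phase == "stop" and self._started is not None:
            pause = time.perf_counter() - self._started
            self.max_pause = max(self.max_pause, pause)
            self.collections += 1
            self._started = None


def churn(n):
    """Toy workload: create n short-lived reference cycles."""
    for _ in range(n):
        a, b = [], []
        a.append(b)
        b.append(a)


def measure(workload):
    """Return (max GC pause in seconds, peak traced bytes, collections seen)."""
    timer = GcPauseTimer()
    gc.callbacks.append(timer)
    tracemalloc.start()
    try:
        workload()
        gc.collect()  # guarantees at least one timed collection
        _, peak_bytes = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
        gc.callbacks.remove(timer)
    return timer.max_pause, peak_bytes, timer.collections


max_pause, peak_bytes, collections = measure(lambda: churn(50_000))
print(f"max pause: {max_pause * 1000:.2f} ms")
print(f"peak traced memory: {peak_bytes / 1024:.0f} KiB")
print(f"collections observed: {collections}")
```

`tracemalloc` counts only memory allocated through Python's allocators, so it approximates rather than equals RSS. It is still enough to show whether a change that shortens pauses also raises the peak.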

The Engineering Tradeoff Python Chose

Neil Schemenauer’s analysis crystallized the decision: 1.3ms max pause with 2.7x peak RSS (incremental) versus 26ms max pause with baseline memory (generational). Python chose memory.

Most Python applications don’t have strict latency requirements. Web apps tolerate occasional 26ms pauses. Data processing workloads don’t need real-time guarantees. Memory constraints, by contrast, are hard limits. Cloud deployments bill by GB. Containers enforce memory caps. Serverless platforms like AWS Lambda charge by GB-seconds. A 26ms pause is slow. A 5x memory increase is a crash.
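The arithmetic behind that asymmetry is simple. The sketch below uses the multipliers and the 512MB limit quoted in this article. The 200MB baseline is an assumed figure chosen for illustration, not a measurement, and `fits_in_container` is a hypothetical helper.

```python
LIMIT_MB = 512        # Heroku review-app limit cited above
BASELINE_MB = 200     # assumed baseline peak RSS, for illustration only


def fits_in_container(baseline_mb, multiplier, limit_mb):
    """True if the projected peak RSS stays at or under the container cap."""
    return baseline_mb * multiplier <= limit_mb


# 1.0x is the generational baseline; 2.7x and 5.0x are the reported range.
for multiplier in (1.0, 2.7, 5.0):
    projected = BASELINE_MB * multiplier
    verdict = "fits" if fits_in_container(BASELINE_MB, multiplier, LIMIT_MB) else "OOM risk"
    print(f"{multiplier:.1f}x -> {projected:.0f} MB of {LIMIT_MB} MB: {verdict}")

# Pause-time side of the trade: 26 ms down to 1.3 ms.
pause_ratio = 26 / 1.3
print(f"pause improvement: {pause_ratio:.0f}x")
```

Under these assumptions a process using well under half its memory budget on the generational collector exceeds the cap at the low end of the reported range, while the pause it saves is about 25ms.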

The incremental GC optimized for the wrong metric. Low latency matters for gaming, audio, video—workloads Python doesn’t dominate. Meanwhile, memory efficiency matters for the deployments Python actually powers: web apps, data pipelines, automation scripts running in constrained environments.

Timeline: Two Attempts, Two Failures

Python 3.13 was the first attempt. The incremental GC caused Sphinx slowdowns during development and was rolled back before final release.

Python 3.14.0 shipped with Mark Shannon’s implementation in October 2025. The design reduced generations from 3 to 2 and walked the old generation in small increments rather than all at once. It promised “order of magnitude” pause time reductions for large heaps. The feature skipped the PEP review process and rolled out as an experimental change.

Reports of memory pressure surfaced within months. On April 16, 2026, release manager Hugo van Kemenade announced the revert decision. Python 3.14.5 shipped May 10—pulled forward from the original June 9 schedule to get the fix into production faster. The generational GC from 3.13 is back. Three generations are restored. Developers can verify with gc.get_count(): 3.14.0-3.14.4 returns 2 counts, 3.14.5+ returns 3.

What This Means for Developers

If you’re running Python 3.14.0-3.14.4 in production and experiencing unexplained memory growth, you now have an explanation. Upgrade to 3.14.5. The traditional generational GC handles memory-constrained environments better than the incremental approach.

Check which GC you’re using:

import gc

print(gc.get_count()) # (young, mid, old) in 3.14.5+

Two values means incremental (3.14.0-3.14.4). Three values means generational (3.14.5+).
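A version check is a useful cross-check alongside the count. The sketch below is a minimal example that relies only on the version range given in this article; `in_affected_range` is a hypothetical helper name, not part of the standard library.

```python
import gc
import sys

# Releases this article describes as shipping the incremental collector.
FIRST_AFFECTED = (3, 14, 0)
LAST_AFFECTED = (3, 14, 4)


def in_affected_range(version=None):
    """True if the (major, minor, micro) version falls in 3.14.0 through 3.14.4."""
    if version is None:
        version = sys.version_info
    return FIRST_AFFECTED <= tuple(version[:3]) <= LAST_AFFECTED


print("Python", ".".join(map(str, sys.version_info[:3])))
print("gc.get_count():", gc.get_count())
if in_affected_range():
    print("This interpreter is in the affected range; consider upgrading to 3.14.5.")
else:
    print("This interpreter is outside the affected range.")
```

A check like this can run at service startup or in CI to flag hosts that still need the upgrade.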

Mid-release reverts are rare in Python, but the core team moved quickly when production systems started failing. That’s the right call. Innovation matters, but not at the cost of breaking existing deployments.

The Future: Opt-In, Not Default

Incremental GC isn’t dead. Python’s core team left the door open for a return in version 3.16, but this time through formal PEP review. Neil Schemenauer proposed two fixes: trigger collection every 2,000 objects, and use size-based increments to force full passes more frequently. A future implementation might also offer incremental GC behind a runtime flag, such as python -X gc=incremental, letting developers with latency-sensitive workloads opt in while keeping memory efficiency as the default.
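The proposed fixes concern the collector's internals, so they cannot be reproduced from user code. The closest existing knob is `gc.set_threshold`, whose first argument sets how far allocations may outrun deallocations before a collection starts. The sketch below only illustrates that allocation-count trigger with the 2,000 figure. It is not the proposed implementation, and the thresholds may behave differently across Python versions.

```python
import gc

original = gc.get_threshold()  # save the interpreter's current thresholds

# Start a collection once allocations minus deallocations exceed 2,000.
# The remaining thresholds are passed through unchanged.
gc.set_threshold(2000, *original[1:])
applied = gc.get_threshold()
print("thresholds before:", original)
print("thresholds applied:", applied)

gc.set_threshold(*original)  # restore the previous settings
```

Lowering the first threshold makes collections more frequent and smaller. Raising it does the opposite and lets more garbage accumulate between passes.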

The lesson is clear: benchmarks need real-world validation. Lab tests showed impressive pause time reductions. Production revealed memory accumulation the benchmarks didn’t measure. As a result, Python 3.14 shipped a feature that looked good on paper but broke under production load. The team reverted quickly, which is the second-best outcome. The best outcome would have been catching this before release, but at least the process worked: reports came in, the team analyzed the data, and the fix shipped fast.

Key Takeaways

- Python 3.14.5 (May 10) reverted incremental GC after reports of up to 5x memory growth

- Incremental GC reduced pause times (1.3ms vs 26ms) but increased memory 2.7x-5x

- Production failures included Django OOM kills, memory-constrained container crashes

- Python chose memory efficiency over low latency—the right call for most workloads

- Possible future: opt-in incremental GC via runtime flag in Python 3.16