On April 30, 2026, Loris Cro, VP of Community at the Zig Software Foundation, published a defense of Zig’s strict AI contribution ban, introducing “Contributor Poker” as the project’s philosophy. Unlike roughly 96% of surveyed open source projects, Zig bans all LLM-generated contributions: issues, pull requests, even bug tracker comments. Only 3 other projects out of 112 surveyed (NetBSD, GIMP, QEMU) take this extreme stance. Zig’s argument: “play the person, not the cards,” investing maintainer time in developing trusted human contributors rather than reviewing ChatGPT’s output.

The policy has sparked intense debate on Hacker News (208 points, 93 comments), with developers divided on whether it is wise stewardship or anti-innovation gatekeeping.

What “Contributor Poker” Means for Open Source

“Contributor Poker” is Cro’s framework: “You play the person, not the cards.” Open source projects should bet on the contributor (their potential for growth, understanding, and long-term engagement), not just on the quality of their first pull request. The best contributors need familiarity with the codebase and the problem domain. In Cro’s view, a contributor who leans on an LLM to produce a PR typically demonstrates neither.

As Cro writes: “Even if an LLM helps you submit a perfect PR, the time the Zig team spends reviewing it does nothing to help them add new, confident, trustworthy contributors.” The issue isn’t code quality. Reviewing AI output doesn’t build relationships or develop contributor expertise. Maintainers invest time mentoring new contributors in the expectation that they will become trusted collaborators. The argument is that contributors who rely on an LLM can’t meet that expectation, because the understanding behind the PR is not their own.

AI Contributions Shift Burden to Maintainers

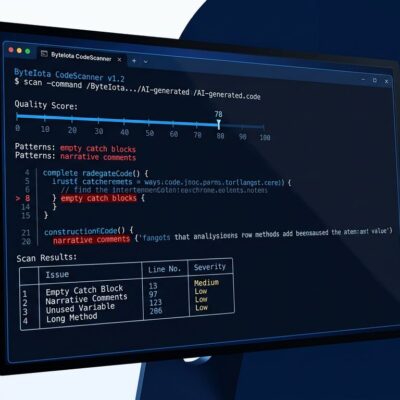

Zig maintainers report receiving LLM-generated PRs that “wouldn’t even compile,” including “insane 10 thousand line long first time PRs” with no design discussion. Contributors submit without testing, expecting maintainers to debug. When questioned, some deny using LLMs but “regurgitate mistake-filled replies” clearly copied from ChatGPT. Maintainers across GNOME, OCaml, Python, and Django report the same pattern.

The EFF’s policy on LLM contributions notes: “Code reviews turning into code refactors for maintainers if the contributor doesn’t understand the code.” LLM users shift effort from implementation to review. Maintainer time is the scarcest resource in open source. Every hour debugging an LLM’s hallucinations is an hour NOT spent on feature development or mentoring quality contributors.

Only 3.6% of Surveyed Open Source Projects Ban AI

Of 112 major projects surveyed in March 2026, only 4 ban AI contributions entirely: Zig, NetBSD, GIMP, and QEMU. That is about 3.6% of the sample. Most projects either require disclosure (like LLVM’s “human in the loop” policy) or have no explicit policy. Zig’s stance is extreme and deliberate.
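As a quick check on the survey arithmetic, here is a minimal Python sketch that uses only the two counts quoted above (112 projects surveyed, 4 with a full ban). The variable names are illustrative and not taken from the survey itself.

```python
# Counts as quoted in the article; names are illustrative assumptions.
surveyed = 112   # projects in the March 2026 survey
full_ban = 4     # Zig, NetBSD, GIMP, QEMU

ban_share = full_ban / surveyed * 100   # percentage with a full AI ban
no_ban_share = 100 - ban_share          # everyone else (disclosure rules or no policy)

print(f"Full ban:    {ban_share:.1f}%")     # prints "Full ban:    3.6%"
print(f"No full ban: {no_ban_share:.1f}%")  # prints "No full ban: 96.4%"
```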

Their Code of Conduct explicitly states: “No LLMs for issues. No LLMs for pull requests. No LLMs for comments on the bug tracker, including translation.” Zig extends the ban to ALL contributions, even trivial ones, based on principle: contributor development matters more than code accumulation.

The Bun Fork Controversy

Bun (a JavaScript runtime built on Zig, acquired by Anthropic in late 2025) maintains its own fork of Zig and claims “4x faster compilation times.” The Bun team has not attempted to upstream this code, possibly because of Zig’s AI policy. The Zig compiler team disputes the performance claims and notes that Bun’s approach has “non-deterministic compilation behavior” that causes random failures.

Critics say Zig rejects good code due to policy dogma. Supporters say the code quality was poor, and Zig’s roadmap already addresses it better. Zig may miss some one-off optimizations, but they avoid technical debt from contributors who can’t maintain their code.

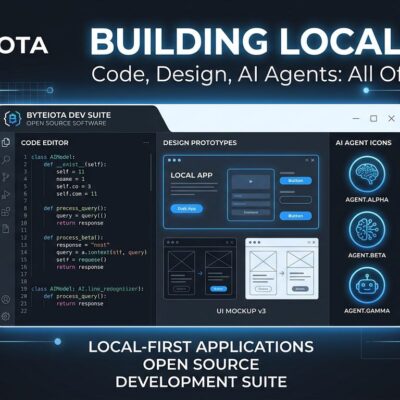

Why Maintainers Are Right to Ban AI Code

Open source maintainers are not free code reviewers for ChatGPT. If you can’t explain your PR, test it yourself, or iterate on feedback, you shouldn’t submit it. Zig’s policy protects maintainer time and builds a sustainable contributor pipeline. The alternative—drowning in AI-generated drive-by PRs—is already happening at GNOME, Python, Django, and others.

The legal issue alone justifies caution: the US Copyright Office’s position is that “only works created by a human can be copyrighted,” which means purely AI-generated code may not be protected by copyright at all. Copyleft licenses like GPL and LGPL depend on copyright to be enforceable, so code that cannot be copyrighted could undermine them. Case in point: chardet, a 12-year LGPL project, was rewritten using Claude AI and relicensed as MIT without original author consent.

This isn’t anti-innovation—it’s pro-sustainability. Zig is betting that deep human expertise will outlast AI-generated velocity. At minimum, they’re asking the right question: What is open source FOR? Code accumulation, or community building?