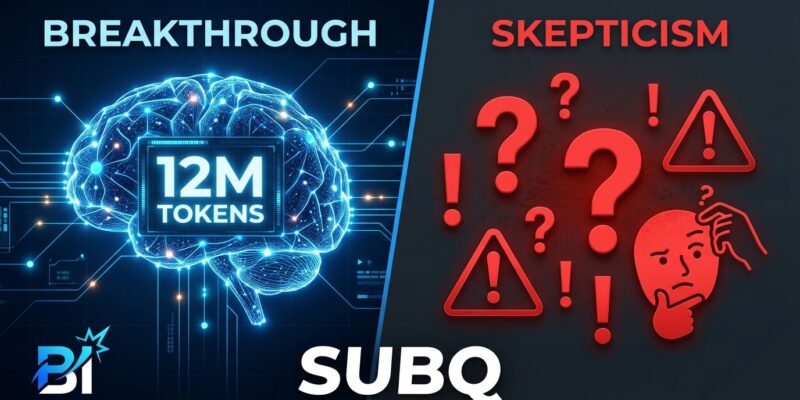

On May 5, Miami-based Subquadratic emerged from stealth claiming it has built the first large language model to escape the quadratic scaling curse of transformer attention. Its model, SubQ, promises a 12 million token context window with 1,000 times less compute than frontier models like Claude or GPT-4. Within hours, AI commentator Dan McAteer captured the binary mood: “SubQ is either the biggest breakthrough since the Transformer… or it’s AI Theranos.”

The stakes are real. If Subquadratic’s claims hold, the company has solved the core bottleneck limiting AI coding agents and long-context applications. If they don’t, it’s another cautionary tale of overhyped AI infrastructure.

The Bold Claims

SubQ’s headline feature is a 12 million token context window—enough to load an entire enterprise codebase in a single prompt. For comparison, Claude Opus 4.6 maxes out at 1 million tokens (GA as of March 2026), and even Google’s Gemini 3 Pro only reaches 10 million. But context size means nothing if it’s prohibitively expensive to use.

That’s where Subquadratic’s efficiency claims get interesting. The company says SubQ runs 52 times faster than dense attention at 1 million tokens and costs roughly 300 times less than Claude Opus on standard benchmarks. On the RULER 128K benchmark, SubQ reportedly delivered 95% accuracy for $8, versus $2,600 for Claude. At the full 12 million token window, the company claims a 1,000x compute reduction.
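As a quick sanity check, the two RULER 128K prices quoted above imply a ratio of about 325x, which is consistent with the rounded “300 times” figure. The sketch below only does that arithmetic. The function name is illustrative, and the dollar amounts are the company’s reported numbers, not independently verified results.

```python
def cost_ratio(baseline_usd: float, candidate_usd: float) -> float:
    """Return how many times cheaper the candidate is than the baseline."""
    if candidate_usd <= 0:
        raise ValueError("candidate cost must be positive")
    return baseline_usd / candidate_usd


# Figures as reported by Subquadratic for RULER 128K (unverified).
CLAUDE_COST_USD = 2600.0
SUBQ_COST_USD = 8.0

ratio = cost_ratio(CLAUDE_COST_USD, SUBQ_COST_USD)
print(f"Claimed cost advantage: {ratio:.0f}x")
```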

The secret sauce is SSA, short for Subquadratic Sparse Attention. Where standard transformer attention compares every token to every other token (O(n²) complexity), SSA learns which token-to-token comparisons actually matter and skips the rest. The claimed result is linear O(n) scaling instead of quadratic: doubling the context window doubles compute rather than quadrupling it.
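To make the scaling difference concrete, here is a minimal sketch that counts token-to-token comparisons under dense attention and under a sparse scheme. It assumes each token keeps a fixed budget of comparisons; that budget (`KEPT_PER_TOKEN`) is a hypothetical value chosen for illustration, not a published SSA parameter, and the sketch ignores whatever it costs to decide which comparisons to keep.

```python
def dense_comparisons(n_tokens: int) -> int:
    """Every token attends to every token: n * n comparisons."""
    return n_tokens * n_tokens


def sparse_comparisons(n_tokens: int, kept_per_token: int) -> int:
    """Each token attends to at most a fixed number of tokens: n * k."""
    return n_tokens * min(kept_per_token, n_tokens)


# Hypothetical per-token budget, for illustration only.
KEPT_PER_TOKEN = 1024

for n in (128_000, 1_000_000, 12_000_000):
    dense = dense_comparisons(n)
    sparse = sparse_comparisons(n, KEPT_PER_TOKEN)
    print(f"{n:>12,} tokens: dense/sparse comparison ratio = {dense / sparse:,.0f}x")
```

Under this assumption the gap between the two grows in proportion to the context length, which is why any efficiency advantage would be largest at the longest contexts.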

Subquadratic has also launched two products: the SubQ API (available now) and SubQ Code, a CLI coding agent in beta that can load entire multi-repo projects into a single context window. The company raised $29 million in seed funding from investors including Tinder co-founder Justin Mateen and early backers of Anthropic and OpenAI.

The Skepticism Wave

Here’s the problem: extraordinary claims require extraordinary evidence, and the evidence so far is thin.

Subquadratic published benchmark results and a technical blog post explaining SSA, but researchers are demanding more. There’s no independent third-party testing, no peer-reviewed academic paper, and no model weights for verification. The company ran each benchmark only once, citing “high inference cost.”

The AI community has recent reason to be skeptical. In August 2024, Magic.dev announced a 100 million token context window with similar efficiency claims and raised $500 million. More than eighteen months later, there’s still no public evidence of Magic’s LTM-2-mini being used outside the company. That precedent hangs heavy over SubQ’s launch.

Why This Matters for Developers

The transformer scaling problem is real. Standard attention scales quadratically with sequence length: going from 512 tokens to 4,096 tokens, an 8x increase, requires 64 times the compute. Even an 80GB GPU maxes out around 50,000 tokens for inference. This quadratic tax makes long-context AI prohibitively expensive for most developers.
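The arithmetic behind those numbers can be sketched in a few lines. The memory estimate assumes 16-bit values and a single attention head whose full score matrix is held in memory at once; optimized attention kernels avoid materializing that matrix, so treat the figure as an illustration of the growth rate rather than a measurement of any particular model.

```python
def quadratic_compute_ratio(old_len: int, new_len: int) -> float:
    """Relative attention compute when sequence length changes, assuming O(n^2)."""
    return (new_len / old_len) ** 2


def score_matrix_bytes(n_tokens: int, bytes_per_value: int = 2) -> int:
    """Size of one full n x n attention score matrix (one head, one layer)."""
    return n_tokens * n_tokens * bytes_per_value


# 512 -> 4,096 tokens is an 8x increase in length, so 8 * 8 = 64x the compute.
print(quadratic_compute_ratio(512, 4096))

# One naively materialized score matrix at 50,000 tokens, in gigabytes.
print(score_matrix_bytes(50_000) / 1e9)
```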

Sub-quadratic attention isn’t science fiction. It’s an active research area, and SSA’s content-dependent token selection approach is technically plausible. Subquadratic’s team includes PhDs from Meta, Google, and Oxford who describe SSA as a “ground-up redesign from first principles.” The question isn’t whether sub-quadratic attention can work—it’s whether Subquadratic actually delivered it.

If SubQ’s claims hold, the implications are significant. Entire codebases in context means no chunking, no retrieval systems, and no lost cross-file dependencies. AI coding agents, which hit 74% developer adoption in January 2026, could finally operate at codebase scale instead of file scale. Affordable 12 million token contexts would democratize access to long-context AI for startups.

But that’s a big “if.”

Watch Closely, Don’t Bet the Farm

SubQ deserves attention, not blind faith. Here’s what developers should watch for over the next three to six months:

Red flags: no independent benchmarks by the end of May, no academic paper by Q3 2026, developer reports of reliability issues, or an unexplained delay to the company’s Q4 target of 50 million tokens.

Green lights: third-party researchers confirm the efficiency claims, the developer community adopts the SubQ Code CLI for real work, production use cases emerge, or an academic paper is validated by peers.

Until then, test SubQ carefully if you have access, but keep using established tools like Claude or GPT-4 for production work. Don’t rebuild your infrastructure around unproven claims.

SubQ is either a game-changer or another Magic.dev. The next few months will tell us which.