Google announced its eighth-generation Tensor Processing Units on April 22, 2026 at Google Cloud Next, splitting the generation into two separate chips for AI training and inference, much as AWS does with its Trainium and Inferentia lines. The TPU 8t handles model training while the TPU 8i is optimized for inference, each purpose-built for a fundamentally different workload. Google claims 2.8× faster training and 80% better price-performance for inference than the previous generation, a direct shot at Nvidia’s dominance in AI infrastructure.

Why Google Split Its TPUs Into Two Chips

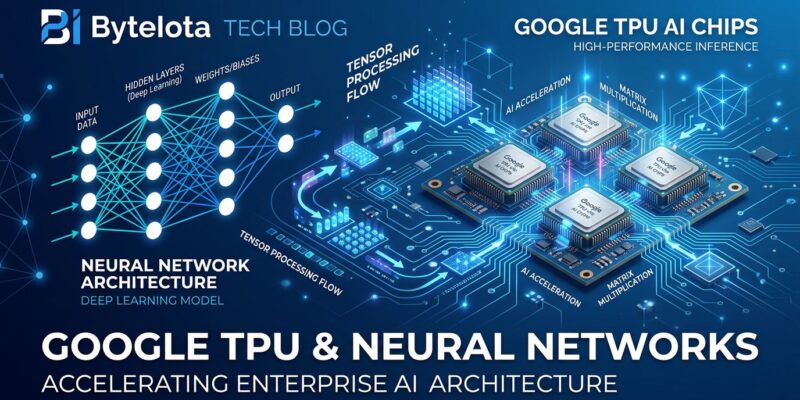

Training and inference have competing requirements that a single chip can’t optimize for at the same time. Model training demands maximum compute throughput and efficient gradient synchronization across thousands of chips. Inference prioritizes low latency, high memory capacity, and cost efficiency for serving millions of queries.

The TPU 8t packs 12.6 petaFLOPS of compute with 216 GB of high-bandwidth memory for training workloads. The TPU 8i delivers less compute, 10.1 petaFLOPS, but includes 288 GB of memory (72 GB more than the training variant) and triple the on-chip SRAM (384 MB versus 128 MB). That extra memory matters for inference: larger models with extensive key-value caches can stay resident in the accelerator’s own memory, which reduces memory bottlenecks during real-time serving.
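A rough sizing sketch shows why the extra memory matters. The per-chip memory figures are the ones quoted above. The model shape (80 layers, 8 key-value heads, head dimension 128), the 70 GB of weights, and the 128,000-token context are hypothetical values chosen only for illustration, not the specifications of any model Google serves. The formula is the usual transformer key-value cache estimate, and it ignores activations, fragmentation, and sharding across chips.

```python
def kv_cache_gb(layers, kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Approximate key-value cache size in GB for a single sequence."""
    # Factor of 2: one tensor for keys and one for values, per layer.
    total_bytes = 2 * layers * kv_heads * head_dim * seq_len * bytes_per_elem
    return total_bytes / 1e9


def max_sequences(hbm_gb, weights_gb, per_seq_gb):
    """Whole sequences whose caches fit beside the weights on one chip."""
    free_gb = hbm_gb - weights_gb
    return max(0, int(free_gb // per_seq_gb))


# Memory capacities as quoted in the article.
HBM_GB = {"TPU 8t": 216, "TPU 8i": 288}

# Hypothetical model: 70 GB of weights, 16-bit cache entries.
WEIGHTS_GB = 70.0
per_seq_gb = kv_cache_gb(layers=80, kv_heads=8, head_dim=128, seq_len=128_000)

fits = {chip: max_sequences(gb, WEIGHTS_GB, per_seq_gb) for chip, gb in HBM_GB.items()}

print(f"KV cache per sequence: {per_seq_gb:.1f} GB")
for chip, count in fits.items():
    print(f"{chip}: {count} concurrent long-context sequences")
```

Under these assumptions each sequence needs about 42 GB of cache, so the 288 GB chip holds five long-context sequences beside the weights while the 216 GB chip holds three.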

Additionally, the inference chip introduces a Collective Acceleration Engine that reduces synchronization latency five-fold compared to the SparseCores used in previous TPUs. When you’re serving a model to millions of users simultaneously, those microseconds compound into massive cost savings.

Google vs Nvidia: Scale Over Single-Chip Performance

Nvidia’s competing Rubin GPU delivers 35 petaFLOPS per chip—nearly 3× the compute of Google’s TPU 8t. On paper, Nvidia wins the spec race. However, Google’s engineering team made a calculated bet: when training frontier models, collective performance across thousands of chips matters more than individual chip speed.

Google’s optical-circuit networking supports pods of 9,600 TPUs communicating at 19.2 terabits per second chip-to-chip. Nvidia’s NVLink, by contrast, maxes out at 576 GPUs per domain before falling back to Ethernet interconnects. For massive training runs, Google’s architecture can therefore scale further before hitting that networking boundary. Whether the theoretical advantage holds up in production remains to be tested.
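The scale-over-single-chip argument can be checked with simple arithmetic. The sketch below multiplies the chip counts and per-chip petaFLOPS quoted above to get peak compute inside one tightly coupled domain. It assumes the vendor figures are comparable (they may be quoted at different numeric precisions) and ignores real-world utilization and the fact that Nvidia clusters scale past a single NVLink domain over slower links. The `Domain` class is an illustrative helper, not part of any vendor tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Domain:
    """One tightly interconnected group of accelerators."""
    name: str
    chips: int
    pflops_per_chip: float

    @property
    def peak_exaflops(self) -> float:
        # 1 exaFLOPS = 1000 petaFLOPS
        return self.chips * self.pflops_per_chip / 1000


# Figures as quoted in the article.
tpu_pod = Domain("TPU 8t pod", chips=9_600, pflops_per_chip=12.6)
nvlink = Domain("Rubin NVLink domain", chips=576, pflops_per_chip=35.0)

ratio = tpu_pod.peak_exaflops / nvlink.peak_exaflops

for d in (tpu_pod, nvlink):
    print(f"{d.name}: {d.peak_exaflops:.2f} peak exaFLOPS")
print(f"Pod-to-domain ratio: {ratio:.1f}x")
```

On these numbers a full pod offers roughly six times the peak compute of one NVLink domain, even though each Rubin GPU is nearly three times faster than each TPU 8t.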

The inference economics tell a clearer story. Midjourney reduced infrastructure costs by 65% after migrating from GPUs to Google’s previous-generation TPUs. If the claimed 80% price-performance improvement holds, more companies running high-volume inference workloads may follow, which would put real pressure on Nvidia’s estimated 80-90% market share.
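Price-performance gains and cost reductions are easy to conflate, so a short conversion helps. The sketch below assumes "price-performance" means useful work per dollar and that the workload stays the same; the two function names are illustrative. Note that the two figures use different baselines (previous-generation TPUs versus GPUs), so they are not directly comparable.

```python
def cost_reduction_from_gain(gain):
    """Fractional cost cut for the same work, given a price-performance gain.

    gain=0.80 means 80% more work per dollar.
    """
    return 1 - 1 / (1 + gain)


def gain_from_cost_reduction(cut):
    """Price-performance gain implied by a fractional cost cut for the same work."""
    return 1 / (1 - cut) - 1


# Google's claimed 80% price-performance improvement for the TPU 8i.
claimed_cut = cost_reduction_from_gain(0.80)

# Midjourney's reported 65% cost reduction after moving off GPUs.
midjourney_gain = gain_from_cost_reduction(0.65)

print(f"80% better price-performance -> {claimed_cut:.1%} lower cost")
print(f"65% lower cost -> {1 + midjourney_gain:.2f}x work per dollar")
```

An 80% price-performance gain works out to roughly a 44% smaller bill for the same work, not an 80% smaller one. Read the other way, a 65% cost cut corresponds to about 2.9× the work per dollar.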

The “Agentic Era” Positioning: Autonomous AI Systems

Google is framing the TPU 8 generation for what it calls the “agentic era”: autonomous AI agents that plan multi-step workflows, execute tasks, and collaborate without constant human oversight. Unlike chatbots that respond to prompts, agentic systems receive high-level goals (“optimize our cloud spending”) and autonomously break them into subtasks, call tools, query databases, and adapt based on feedback.

The adoption numbers suggest this isn’t just marketing fluff. As of mid-2026, 54% of enterprises have integrated AI agents into core operations—not experimental pilots, but production systems handling document processing, compliance monitoring, and workflow coordination. Furthermore, the agentic AI market is projected to grow from $9 billion in 2026 to $139 billion by 2034, a 40% compound annual growth rate.
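The growth rate can be sanity-checked from the two endpoints. This sketch applies the standard compound annual growth rate formula to the $9 billion and $139 billion figures quoted above, assuming eight compounding years from 2026 to 2034.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


START_BILLIONS = 9.0    # 2026 market size quoted above
END_BILLIONS = 139.0    # 2034 projection quoted above
YEARS = 2034 - 2026

rate = cagr(START_BILLIONS, END_BILLIONS, YEARS)

# Compounding the start value forward should reproduce the end value.
projected = START_BILLIONS * (1 + rate) ** YEARS

print(f"Implied CAGR: {rate:.1%}")
print(f"Compounded back: ${projected:.0f}B")
```

The implied rate comes out just under 41%, which is consistent with the rounded 40% figure.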

The TPU 8i’s design choices align with agentic inference demands: high memory capacity for running large models with extensive context windows, low-latency synchronization for real-time multi-agent coordination, and cost-efficient operation for systems that run continuously rather than on demand. Google is betting that autonomous agents will become the infrastructure workload that defines the next decade of cloud computing.

Vertical Integration: Custom CPUs and Optical Networking

Beyond the TPUs themselves, Google replaced the x86 host processors with custom Arm-based Axion CPUs built on TSMC’s 3nm process. According to Google, the Axion chips deliver 50% higher performance and 60% better energy efficiency than comparable x86 processors. They handle the data preparation and application-server tasks that complement the TPUs’ inference work.

The optical-circuit switches that enable 9,600-TPU pods are the clearest example of Google’s infrastructure advantage. Where Nvidia sells GPUs that customers integrate into their own data centers, Google controls the entire stack, from silicon design through networking topology to managed storage delivering 10 terabytes per second directly to accelerator memory. That vertical integration lets Google optimize across layers in ways that Nvidia’s merchant-silicon approach can’t match.

Google claims 97% training goodput, meaning the share of time chips spend on useful computation rather than sitting idle because of infrastructure failures or network congestion. If accurate, that figure addresses one of the most expensive problems in large-scale training: runs that fail partway through and waste weeks of compute time.
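To put the goodput figure in concrete terms, the sketch below estimates how many chip-hours are lost at a given goodput. The 9,600-chip pod size and the 97% figure come from the article; the 30-day run length and the 90% comparison point are hypothetical values picked for illustration only.

```python
def lost_chip_hours(chips, days, goodput):
    """Chip-hours not spent on useful computation during a run."""
    total_chip_hours = chips * days * 24
    return total_chip_hours * (1 - goodput)


POD_CHIPS = 9_600   # pod size quoted in the article
RUN_DAYS = 30       # hypothetical run length

claimed = lost_chip_hours(POD_CHIPS, RUN_DAYS, goodput=0.97)
baseline = lost_chip_hours(POD_CHIPS, RUN_DAYS, goodput=0.90)  # hypothetical comparison

print(f"Lost at 97% goodput: {claimed:,.0f} chip-hours")
print(f"Lost at 90% goodput: {baseline:,.0f} chip-hours")
print(f"Difference: {baseline - claimed:,.0f} chip-hours")
```

For this hypothetical month-long run on a full pod, 97% goodput loses about 207,000 chip-hours, against roughly 691,000 at 90%.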

Smart Engineering, But Ecosystem Still Matters

Google’s dual-chip strategy is sound engineering. Building separate training and inference chips lets each maximize performance for its workload instead of compromising on a unified design. The vertical integration, custom networking, and claimed cost improvements are compelling, if you’re committed to Google Cloud.

That’s the catch. TPUs are available only on Google Cloud Platform. Nvidia’s CUDA ecosystem, despite its flaws, runs everywhere: AWS, Azure, on-premises data centers, even laptops. Developers invest years learning CUDA, PyTorch builds on it, and much of the AI training stack assumes Nvidia compatibility. Ultimately, Google has hardware that may be better at scale but is locked to one cloud provider, while Nvidia has good-enough hardware with universal compatibility.

The agentic AI bet is the interesting wildcard. If autonomous agents become the dominant workload (continuous, low-latency, cost-sensitive inference at massive scale), the advantage of Google’s purpose-built TPU 8i infrastructure compounds. If agentic AI turns out to be another overhyped trend, Google will have built expensive specialized hardware for a market that never materialized. Either way, the TPU 8 launch shows Google taking Nvidia’s AI chip dominance seriously enough to fight back with architectural innovation instead of just price cuts.