AI coding assistants promised to make developers more productive, but 2026 surveys reveal a paradox: developers now spend more time reviewing AI-generated code (11.4 hours per week) than writing new code (9.8 hours per week). This workflow reversal—where verification exceeds creation—has created what engineering teams are calling the “AI code verification bottleneck.”

While developers feel 20% more productive, telemetry data from over 10,000 developers shows they’re actually 19% slower, a 39-point perception gap between how fast teams feel and how fast they actually deliver. With 84% of developers already using AI coding tools, the question is no longer whether to adopt them. What matters now are the hidden costs emerging at scale: longer code reviews, quality issues in production, and velocity gains that evaporate when measured at the organization level.

The Workflow Reversal: More Time Reviewing Than Writing

According to Digital Applied’s 2026 survey, developers now spend 11.4 hours per week reviewing AI-generated code versus 9.8 hours per week writing new code. That is a complete reversal from 2024, when developers spent more time writing than reviewing: verification, not authorship, now takes the larger share of a developer’s week.

LinearB’s 2026 benchmarks, analyzing 8.1 million pull requests across 4,800 engineering teams, show that teams with high AI adoption merge 98% more pull requests, while review time per PR rises 91%. The two effects multiply rather than add: individual developers complete 21% more tasks, but the review queue grows faster than teams can clear it.
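Because the two increases compound, total review effort grows much faster than either figure suggests. The sketch below multiplies them, assuming the 91% per-PR increase applies to every PR (including the additional ones) and that review hours scale linearly. The 10-PR, 1-hour weekly baseline is a made-up figure for illustration only.

```python
def review_load_multiplier(pr_volume_increase: float, review_time_increase: float) -> float:
    """Total review load relative to baseline when PR volume and per-PR review time both rise."""
    return (1 + pr_volume_increase) * (1 + review_time_increase)


# Percentages from the benchmark: +98% PRs merged, +91% review time per PR.
multiplier = review_load_multiplier(0.98, 0.91)

# Hypothetical baseline: 10 PRs per week at 1 hour of review each.
baseline_hours = 10 * 1.0
print(f"Review load: {multiplier:.2f}x baseline")
print(f"Weekly review hours: {baseline_hours:.1f} -> {baseline_hours * multiplier:.1f}")
```

Under these assumptions a team needs roughly 3.8 times its previous review hours, which is why a 21% gain in task completion cannot keep the queue from growing.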

The bottleneck has migrated from code creation—now AI-accelerated—to code verification, which remains human-dependent. This explains why individual productivity gains don’t translate to faster organizational delivery. The constraint simply moved downstream.
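A toy model makes the "constraint moved downstream" point concrete: a delivery pipeline can ship no faster than its slowest stage. All stage capacities below are hypothetical PRs per week, not figures from the surveys.

```python
def pipeline_throughput(capacities: dict) -> tuple:
    """Return (bottleneck stage, sustained throughput): the slowest stage sets the pace."""
    stage = min(capacities, key=capacities.get)
    return stage, capacities[stage]


# Hypothetical weekly capacities, in PRs, for each stage of delivery.
before_ai = {"write": 20, "review": 25, "deploy": 40}
# AI doubles writing capacity; review capacity is unchanged.
after_ai = {"write": 40, "review": 25, "deploy": 40}
# Same, but each PR also takes ~1.9x longer to review (25 / 1.91 is about 13).
after_ai_slower_reviews = {"write": 40, "review": 13, "deploy": 40}

scenarios = [
    ("before", before_ai),
    ("after", after_ai),
    ("after, slower reviews", after_ai_slower_reviews),
]
for label, caps in scenarios:
    stage, rate = pipeline_throughput(caps)
    print(f"{label}: bottleneck={stage}, throughput={rate} PRs/week")
```

In this model, doubling writing capacity lifts delivery only from 20 to 25 PRs per week, and if reviews also slow down, delivery falls below where it started even though every developer is writing faster.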

The Trust Deficit: Why Developers Don’t Trust AI Code

Sonar’s 2026 State of Code Developer Survey found that 96% of developers do not fully trust the functional accuracy of AI-generated code, and only 3% report “highly trusting” AI output. More developers actively distrust AI tools (46%) than trust them (33%), and trust has declined from 40% in 2024 to 29% in 2025.

This trust deficit is justified by quality data: AI-generated code introduces 1.7× more total issues than human-written code, with logic and correctness errors appearing 1.75× more often. Stanford and MIT’s March 2026 analysis of 2 million AI-generated code snippets found 14.3% contained security vulnerabilities, compared to 9.1% in human code for equivalent tasks.

When 96% of developers don’t fully trust AI code but only 48% always verify it before committing, there is a dangerous gap between awareness and action. It points to a “someone else will catch it” assumption, and it sets up the next problem: production failures.
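The two percentages also put a floor under how many developers fall into that gap. For any two groups in one population, the overlap is at least P(A) + P(B) - 1 (the Fréchet lower bound), whatever the correlation between them. This sketch assumes both figures describe the same population of respondents; if they come from different surveys, the result is only indicative.

```python
def min_overlap(p_a: float, p_b: float) -> float:
    """Fréchet lower bound on P(A and B) given only the two marginal shares."""
    return max(0.0, p_a + p_b - 1.0)


distrust = 0.96                 # do not fully trust AI-generated code
not_always_verify = 1 - 0.48    # do not always verify before committing
share = min_overlap(distrust, not_always_verify)
print(f"At least {share:.0%} of developers distrust AI code yet do not always verify it")
```

Even in the most favorable case, nearly half of all developers sit between awareness and action.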


The Production Reality: When AI-Generated Code Fails

Lightrun’s April 2026 State of AI-Powered Engineering report found that 43% of AI-generated code requires manual debugging in production, even after passing QA or staging tests. On average, verifying and correcting a single AI-suggested fix in production takes three manual redeploy cycles.

The “almost right” problem is the top developer frustration, cited by 66%: AI solutions that appear correct but hide subtle bugs in edge cases, error handling, or business logic that only surface under real-world conditions. And 45% of developers rate debugging AI-generated code as more time-consuming than debugging human-written code.

The verification problem doesn’t end at code review—it extends into production. This means the true cost of AI coding includes not just longer reviews, but also emergency debugging sessions, customer impact from failed deployments, and extended time-to-resolution for production issues.

The Perception Gap: Feeling Fast, Actually Slow

Faros AI’s study of over 10,000 developers across 1,255 teams uncovered a 39-point perception gap: developers report feeling 20% more productive with AI tools, but telemetry data shows they’re actually 19% slower when measured by delivery velocity.
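The 39-point figure is simply the distance, in percentage points, between the self-reported speed change and the measured one. The sign convention here is an assumption for illustration: positive means faster, so a slowdown enters as a negative number.

```python
def perception_gap(perceived_change_pct: float, measured_change_pct: float) -> float:
    """Percentage-point gap between perceived and measured speed change.

    A positive result means developers overestimate their speed.
    """
    return perceived_change_pct - measured_change_pct


print(perception_gap(20, -19))  # 39
```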

Individual developers complete 21% more tasks and merge 98% more pull requests, but there’s “no significant correlation between AI adoption and improvements at the company level” in throughput, DORA metrics, or quality KPIs. The disconnect happens because developers measure their own speed—task completion—but not the downstream impact: review bottlenecks, increased bugs (up 9% per developer), and longer integration cycles.

This is the most dangerous finding: teams can feel productive while actually slowing down. Without end-to-end delivery metrics that track idea-to-production time, engineering leaders risk celebrating vanity metrics such as PRs merged and tasks completed while missing the reality that software isn’t shipping any faster.
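End-to-end lead time can be computed from two timestamps per work item: when the idea was logged and when it reached production. In this minimal sketch the record layout (`created`, `deployed`) and the sample items are hypothetical; in practice they would come from an issue tracker and deploy logs. Items that have not shipped are reported against the total rather than silently dropped.

```python
from datetime import datetime
from statistics import median
from typing import Optional

# Hypothetical work items; "deployed" is None until the change reaches production.
work_items = [
    {"id": "T-1", "created": "2026-03-02T09:00", "deployed": "2026-03-04T17:00"},
    {"id": "T-2", "created": "2026-03-03T10:00", "deployed": "2026-03-10T12:00"},
    {"id": "T-3", "created": "2026-03-05T08:30", "deployed": None},
]


def lead_time_hours(item: dict) -> Optional[float]:
    """Idea-to-production time in hours, or None if the item has not shipped."""
    if item["deployed"] is None:
        return None
    start = datetime.fromisoformat(item["created"])
    end = datetime.fromisoformat(item["deployed"])
    return (end - start).total_seconds() / 3600


shipped = [h for h in map(lead_time_hours, work_items) if h is not None]
print(f"Shipped {len(shipped)}/{len(work_items)} items; median lead time {median(shipped):.1f} h")
```

Unlike a count of merged PRs, this number only improves when changes actually reach users.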

What Engineering Teams Are Building to Fix This

Engineering teams are responding to the verification crisis by implementing multi-layer quality gate stacks and, ironically, by using AI to verify AI-generated code. Independent benchmarks from March 2026 evaluated 17 AI code review tools across more than 200,000 pull requests. The best performer, Propel, reached a 64% F-score: that leaves significant room for improvement, but shows that automated verification is under active development.
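An F-score balances precision (how many flagged issues are real) against recall (how many real issues get flagged). Assuming the benchmark reports the standard F1 score, the harmonic mean of the two, the hypothetical counts below show one way to land at 64%: a reviewer that is right 80% of the time when it flags something but catches only about half of the real issues.

```python
def f1_score(true_pos: int, false_pos: int, false_neg: int) -> float:
    """F1 = 2PR / (P + R), written in the equivalent count form 2TP / (2TP + FP + FN)."""
    denom = 2 * true_pos + false_pos + false_neg
    return 2 * true_pos / denom if denom else 0.0


# Hypothetical counts from reviewing a labelled set of PRs.
tp, fp, fn = 80, 20, 70
precision = tp / (tp + fp)   # 0.80
recall = tp / (tp + fn)      # about 0.53
print(f"precision={precision:.2f} recall={recall:.2f} F1={f1_score(tp, fp, fn):.2f}")
```

A single F1 value can hide very different failure modes (a noisy reviewer versus one that misses bugs), so it helps to look at precision and recall separately.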

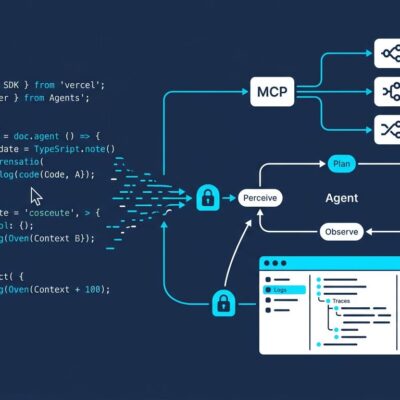

Enterprise teams are deploying five-layer verification stacks: linting and formatting, TypeScript strict-mode type checking, security scanning with Semgrep and Gitleaks, automated test generation, and agentic behavioral testing. Cloudflare’s approach uses multiple specialized AI reviewers in parallel—security AI, performance AI, quality AI, documentation AI, compliance AI—with humans synthesizing findings and making final decisions.
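A gate stack like this is typically run cheapest-first, stopping at the first failure so the expensive layers only see code that already passed the basics. The sketch below assumes a TypeScript repository and shells out to the `eslint`, `prettier`, `tsc`, `semgrep`, and `gitleaks` CLIs; exact flags and subcommands vary by version and project setup. It also assumes generated tests are committed and run through `npm test`, and the behavioral-testing layer is a hypothetical placeholder because it has no standard tool. The demo at the end uses stub checks so it runs without any of those tools installed.

```python
import shutil
import subprocess
from typing import Callable, List, Tuple

# A gate is a layer name plus a zero-argument check that returns True on pass.
Gate = Tuple[str, Callable[[], bool]]


def command_gate(*cmd: str) -> Callable[[], bool]:
    """Wrap a CLI check; a non-zero exit code counts as a failure."""
    def check() -> bool:
        if shutil.which(cmd[0]) is None:
            raise FileNotFoundError(f"{cmd[0]} is not on PATH")
        return subprocess.run(cmd).returncode == 0
    return check


def behavioral_tests() -> bool:
    """Hypothetical placeholder for an agentic behavioral-testing layer."""
    raise NotImplementedError("plug in your behavioral test runner here")


def run_gates(gates: List[Gate]) -> List[Tuple[str, bool]]:
    """Run gates in order and stop at the first failure."""
    results = []
    for name, check in gates:
        passed = check()
        results.append((name, passed))
        if not passed:
            break
    return results


# Layer order follows the text; the commands are assumptions about a typical setup.
FIVE_LAYER_STACK: List[Gate] = [
    ("1a lint", command_gate("npx", "eslint", ".")),
    ("1b format", command_gate("npx", "prettier", "--check", ".")),
    ("2 types (strict)", command_gate("npx", "tsc", "--noEmit", "--strict")),
    ("3a security scan", command_gate("semgrep", "scan", "--config", "auto", "--error")),
    ("3b secret scan", command_gate("gitleaks", "detect")),
    ("4 tests, incl. generated ones", command_gate("npm", "test")),
    ("5 behavioral", behavioral_tests),
]

# Demo with stub checks: the security layer fails, so later layers never run.
demo = [("lint", lambda: True), ("types", lambda: True),
        ("security", lambda: False), ("tests", lambda: True)]
print(run_gates(demo))
```

Calling `run_gates(FIVE_LAYER_STACK)` in a real repository would run the actual tools in order; ordering cheap, deterministic checks first keeps the slower AI-assisted layers from spending time on code that fails a lint rule.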

These systems reduce mechanical review time by 40-60% while preserving human judgment for strategic decisions. The industry isn’t abandoning AI coding tools—it’s building verification infrastructure to make them viable at scale. This represents the next wave: shifting from AI code generation to AI code validation, with humans as orchestrators rather than reviewers of mechanical details.
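The multi-reviewer approach described for Cloudflare can be sketched as a fan-out: send the same diff to several specialist reviewers at once, group their findings by specialty, and hand one digest to a person who makes the final call. This is not Cloudflare's implementation; the two reviewers below are hypothetical rule-based stand-ins for what would in practice be calls to an AI model.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# A reviewer takes a diff and returns a list of findings.
Reviewer = Callable[[str], List[str]]


def review_in_parallel(diff: str, reviewers: Dict[str, Reviewer]) -> Dict[str, List[str]]:
    """Send the same diff to every specialist concurrently; collect findings by specialty."""
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        futures = {name: pool.submit(review, diff) for name, review in reviewers.items()}
        return {name: future.result() for name, future in futures.items()}


def digest_for_human(findings: Dict[str, List[str]]) -> str:
    """Flatten findings into one report; the merge decision stays with a person."""
    lines = [f"[{name}] {item}" for name, items in findings.items() for item in items]
    return "\n".join(lines) if lines else "No automated findings; human sign-off still required."


# Hypothetical stand-ins for specialist AI reviewers.
def security_reviewer(diff: str) -> List[str]:
    return ["possible hard-coded credential"] if "API_KEY=" in diff else []


def docs_reviewer(diff: str) -> List[str]:
    return ["new function has no docstring"] if "def " in diff and '"""' not in diff else []


reviewers = {"security": security_reviewer, "docs": docs_reviewer}
diff = 'API_KEY="abc123"\ndef handler(event):\n    return event\n'
print(digest_for_human(review_in_parallel(diff, reviewers)))
```

Running the specialists concurrently keeps wall-clock time close to the slowest reviewer rather than the sum of all of them, and leaving the decision outside the code keeps humans in the orchestrator role.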

Key Takeaways

- The bottleneck has migrated from code creation to code verification: developers now spend 11.4 hours/week reviewing AI code versus 9.8 hours writing, reversing the traditional workflow

- The perception gap is real and dangerous: developers feel 20% more productive but are actually 19% slower—teams celebrating individual output gains while organizational velocity stagnates

- Distrust is backed by quality data: 96% of developers don’t fully trust AI code accuracy, AI code has 1.7× more issues, and 14.3% of AI-generated snippets contained security vulnerabilities versus 9.1% of human-written code

- Production failures are common: 43% of AI-generated code requires manual debugging in production, averaging three redeploy cycles per fix even after passing QA

- The industry response is verification-first: multi-layer quality gates, AI code review tools (best achieving 64% F-score), and systems like Cloudflare’s multi-AI reviewer approach are emerging to make AI coding viable at scale

The AI code verification bottleneck is real, measurable, and reshaping engineering workflows. The teams that succeed won’t be those that generate code fastest—they’ll be those that verify it most efficiently while maintaining quality standards.