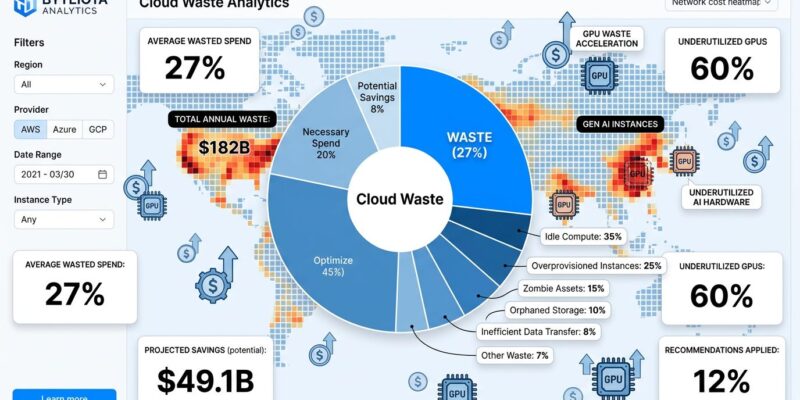

Cloud infrastructure spending hit $675 billion in 2025, and $182 billion of it—27%—was wasted. That waste rate hasn’t budged since 2019 despite 82% of organizations calling optimization their top priority. The reason isn’t technical. It’s cultural. Sixty percent of cloud waste comes from idle compute and overprovisioned instances—decisions developers make every day. Yet 39% of organizations struggle to gain engineer buy-in for cost management, and FinOps teams have migrated from finance departments to CTOs because this is fundamentally an engineering problem.

Your Forgotten Instances Cost $182 Billion

The math is brutal. Idle compute accounts for 35% of cloud waste. Overprovisioned instances add another 25%. Together, these two categories, both controlled by developer decisions, make up 60% of all wasted spend. Development teams spin up environments for testing, QA, and debugging, then forget to delete them. Between 15% and 25% of cloud resources sit completely idle, burning cash while doing nothing.

The scale has changed. A forgotten EC2 instance used to cost $100 per month. Now a single forgotten NVIDIA H100 GPU costs $30-50 per hour, which works out to $21,900 to $36,500 over a 730-hour month. AI startups burning $50,000 monthly on GPUs watch $16,000 vanish into idle silicon. Kubernetes clusters show 5% GPU utilization and 8% CPU utilization, down from 10% last year. This isn’t a rounding error anymore.
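To make that arithmetic concrete, here is a minimal sketch, assuming an average month of 730 hours (8,760 hours per year divided by 12). The function name `monthly_idle_cost` and the constant are illustrative and do not come from any billing API.

```python
# Assumption: an "average" month is 8,760 hours per year / 12 = 730 hours.
HOURS_PER_MONTH = 730


def monthly_idle_cost(hourly_rate: float, idle_fraction: float = 1.0) -> float:
    """Cost in USD of the idle share of one resource over an average month."""
    return hourly_rate * HOURS_PER_MONTH * idle_fraction


# The hourly range quoted in the text for a forgotten H100.
low = monthly_idle_cost(30)
high = monthly_idle_cost(50)
print(f"${low:,.0f} to ${high:,.0f} per month")
```

The `idle_fraction` parameter is there for the more common case where a resource is only partly idle, for example one left running over weekends.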

AI Workloads Accelerate the Crisis

GPU idle time is the fastest-growing waste category. Average GPU utilization sits at just 23%, meaning 77% of capacity is wasted. AI workloads grew 62% year-over-year in 2025, yet fewer than 20% of organizations have automatic shutdown policies for GPU instances. The problem isn’t ignorance—98% of organizations now manage AI spend, up from 31% two years ago. The problem is execution.

You finally secure a cluster of NVIDIA H100s after months of waiting, only to realize your training job barely scratches the surface of available VRAM. Or worse, you keep instances running over the weekend because the setup process is so brittle you’re afraid to turn them off. Even 4 hours of forgotten GPU time costs $50-200. Multiply that across teams and months, and you understand why GPU waste is exploding.
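An automatic shutdown policy does not have to be complicated. The sketch below is one possible shape for the decision logic, assuming you already collect hourly GPU utilization samples per instance. The `GpuInstance` class, the 5% threshold, the 4-hour limit, and the $30 hourly rate are all hypothetical choices for illustration, and the code deliberately makes no cloud provider calls.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GpuInstance:
    name: str
    hourly_rate: float        # USD per hour (illustrative value, not a quoted price)
    utilization: List[float]  # hourly GPU utilization samples in percent, oldest first


def trailing_idle_hours(instance: GpuInstance, threshold: float = 5.0) -> int:
    """Count the most recent consecutive hourly samples below the threshold."""
    hours = 0
    for sample in reversed(instance.utilization):
        if sample >= threshold:
            break
        hours += 1
    return hours


def shutdown_candidates(instances, max_idle_hours=4, threshold=5.0):
    """Return (name, idle_hours, wasted_usd) for instances idle long enough to stop."""
    candidates = []
    for inst in instances:
        idle = trailing_idle_hours(inst, threshold)
        if idle >= max_idle_hours:
            candidates.append((inst.name, idle, idle * inst.hourly_rate))
    return candidates


# Hypothetical fleet: train-a has been idle for its last four samples,
# train-b was busy in its most recent sample.
fleet = [
    GpuInstance("train-a", 30.0, [80.0, 2.0, 1.0, 0.0, 3.0]),
    GpuInstance("train-b", 30.0, [0.0, 0.0, 0.0, 90.0]),
]
for name, idle, wasted in shutdown_candidates(fleet):
    print(f"{name}: idle {idle}h, about ${wasted:,.0f} wasted")
```

Only the trailing run of idle samples counts, so an instance that was quiet earlier but is busy now is never flagged. In practice the output would feed a stop or snapshot step, which is where a brittle setup process needs fixing first.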

The Culture Problem: “Not My Job”

According to the State of FinOps 2026 report, 39% of organizations struggle to gain broad buy-in from engineers for cloud cost management. This isn’t a tooling problem—it’s a culture problem. Developers focus on implementing solutions, not counting resources. They’re rewarded for shipping features fast, not shipping efficiently. There’s no penalty for overprovisioning “just to be safe,” and no credit for designing cost-efficient architectures from the start.

The organizational response tells the story. Seventy-eight percent of FinOps practices now report to the CTO or CIO, not the CFO. The industry finally recognizes that cloud waste is an engineering problem, not a finance problem. Yet the incentive structures haven’t caught up. State of FinOps identifies a critical measurement gap: “Once you fix it, it’s gone… how do we give developers credit for shift-left activities?” Cost avoidance is invisible. Developers who design efficient systems get no recognition, while those who fix waste become heroes. The incentives are backwards.

Without clear ownership, cloud spend becomes a shared problem that no one solves. Developers provision resources, ops teams get blamed when bills spike, and finance pays. This disconnect is why the waste rate stayed at 27% for seven straight years despite awareness, tools, and stated priorities.

What Actually Works

Organizations with structured FinOps programs reduce waste from 40% to 15-20%, delivering 25-30% monthly savings. They’re 2.5x more likely to meet cloud ROI expectations. The successful model isn’t dashboards nobody opens—it’s shift-left cost estimation plus federated ownership.

Shift-left means forecasting costs before deployment, not optimizing after the bill arrives. Infrastructure reviews include cost estimates the same way they include security reviews. Tools like Infracost integrate with GitHub and GitLab to show cost impact directly in pull requests:

This PR will increase monthly costs by $120:

- RDS instance resize: +$80/mo
- Additional S3 bucket: +$40/mo

Federated ownership means small central FinOps teams (2-4 people) set standards for tagging, allocation, and optimization, while embedded engineers on product teams own day-to-day accountability. Eighty-one percent of organizations use this “centralized enablement with federated execution” model. It works because cost data lives in developers’ existing workflows (their IDE, CI/CD pipelines, and GitOps tools), not in separate FinOps dashboards.
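The pull-request cost comment shown earlier is just a diff of estimated monthly costs before and after a change. Here is a minimal sketch of that idea, assuming you already have per-resource monthly estimates as plain dictionaries. This is not Infracost's API or output format; `cost_diff_comment`, the resource names, and the dollar figures are hypothetical.

```python
from typing import Dict


def cost_diff_comment(before: Dict[str, float], after: Dict[str, float]) -> str:
    """Build a short PR comment from per-resource monthly cost estimates in USD."""
    lines = []
    total = 0.0
    for name in sorted(set(before) | set(after)):
        delta = after.get(name, 0.0) - before.get(name, 0.0)
        if delta == 0:
            continue  # unchanged resources are left out of the comment
        total += delta
        sign = "+" if delta > 0 else "-"
        lines.append(f"- {name}: {sign}${abs(delta):,.0f}/mo")

    if not lines:
        return "This PR does not change monthly costs."
    if total == 0:
        header = "This PR leaves total monthly costs unchanged:"
    else:
        direction = "increase" if total > 0 else "decrease"
        header = f"This PR will {direction} monthly costs by ${abs(total):,.0f}:"
    return "\n".join([header] + lines)


# Illustrative estimates: an RDS resize plus a new S3 bucket.
before = {"RDS instance": 140.0}
after = {"RDS instance": 220.0, "S3 bucket": 40.0}
print(cost_diff_comment(before, after))
```

Posting a comment like this from CI puts the number in front of the reviewer at the moment the decision is made, which is the whole point of shift-left.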

The cultural shift reframes cost as a feature metric, not a finance metric. Cost per transaction sits alongside latency, error rates, and throughput. Teams own their spend with monthly cost budgets. Engineers see infrastructure efficiency as their responsibility, not ops’ problem.
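Treating cost as a feature metric mostly means computing two numbers and putting them next to latency and error rate. The sketch below assumes a team can get its monthly spend and transaction count from its own systems. The function names and sample figures are hypothetical.

```python
def cost_per_transaction(monthly_cost: float, transactions: int) -> float:
    """Unit cost in USD: total monthly spend divided by transactions served."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return monthly_cost / transactions


def budget_overrun(actual: float, budget: float) -> float:
    """Fraction by which spend exceeds budget (negative means under budget)."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    return (actual - budget) / budget


# Illustrative month: $12,000 spent serving 4 million transactions
# against a $10,000 budget.
unit_cost = cost_per_transaction(12_000, 4_000_000)
overrun = budget_overrun(12_000, 10_000)
print(f"cost per transaction: ${unit_cost:.4f}")
print(f"budget overrun: {overrun:.0%}")
```

Unit cost is the more useful of the two for engineers, because it stays flat when spend grows in step with traffic and rises only when efficiency slips.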

The Accountability Question

Here’s the uncomfortable truth: Seven years at 27% waste proves voluntary optimization doesn’t work. The industry is starting to experiment with accountability mechanisms. Some organizations integrate cost efficiency into performance reviews. Others track unit costs per team and tie results to bonuses. A few use dedicated recognition budgets for developers who avoid costs through smart architecture.

Only 44% of organizations implement chargeback or showback systems today. That’s changing. The shift is from “finance owns the budget” to “teams own their spend.” But the harder question remains: If a developer routinely provisions 8-core instances for workloads that need 2 cores, is that a performance issue? If a team runs 20% over their cost budget month after month, should it affect compensation?
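Showback itself is simple once resources are tagged. Below is a minimal sketch, assuming billing line items arrive as dictionaries with a `cost` field and an optional `tags` mapping that carries a `team` key. That record shape is an assumption for illustration, not any provider's export format.

```python
from collections import defaultdict
from typing import Dict, List


def showback(line_items: List[dict]) -> Dict[str, float]:
    """Sum monthly cost per team tag; untagged spend goes to 'unallocated'."""
    totals = defaultdict(float)
    for item in line_items:
        team = item.get("tags", {}).get("team", "unallocated")
        totals[team] += item["cost"]
    return dict(totals)


# Hypothetical line items; the last one has no team tag.
items = [
    {"resource": "db-1", "cost": 200.0, "tags": {"team": "payments"}},
    {"resource": "api-1", "cost": 100.0, "tags": {"team": "payments"}},
    {"resource": "index-1", "cost": 120.0, "tags": {"team": "search"}},
    {"resource": "vm-old", "cost": 50.0},
]
for team, cost in sorted(showback(items).items()):
    print(f"{team}: ${cost:,.0f}")
```

The size of the `unallocated` bucket is worth tracking in its own right, since it measures how much spend still has no owner.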

The industry hasn’t settled this debate. But the data is clear: Tools exist, frameworks work, and best practices are documented. What’s missing is cultural transformation. Developers need to own the cost impact of their infrastructure decisions the same way they own the performance and reliability impact.

Cloud waste won’t fix itself. The question isn’t whether cost optimization matters—it’s whose job it is. The $182 billion answer suggests it’s everyone’s job, starting with the people making provisioning decisions every day.