On March 31, 2026, Anthropic accidentally shipped 512,000 lines of Claude Code’s source in a routine npm update, thanks to a missing line in their .npmignore file and a known Bun bundler bug. But the real story isn’t the leak itself. It’s what happened next: a proxy project called free-claude-code is trending #1 on GitHub today (April 26) with 11,682 stars, routing Claude Code API calls to free alternatives and bypassing Anthropic’s $20/month subscription entirely. The controversy isn’t just about leaked code. It’s about whether Anthropic can even enforce copyright when their own lead engineer has said his contributions to the codebase are “entirely AI-written,” and US law requires human authorship for protection.

AI-Generated Code Isn’t Copyrightable (and Anthropic Knows It)

Here’s the uncomfortable truth: Anthropic’s lead engineer publicly stated his Claude Code contributions are “entirely AI-written.” Under US copyright law, works “predominantly generated by AI, without meaningful human authorship, are not eligible for copyright protection” (US Copyright Office, January 2025). The Supreme Court left that position standing on March 2, 2026, when it denied review of cases challenging the human authorship requirement. If Claude Code’s codebase is substantially AI-authored, Anthropic may lack the legal protection needed to enforce against copying.

This isn’t theoretical. Anthropic issued 8,000+ DMCA takedowns after the leak, successfully removing direct copies. However, AI-rewritten versions survived. Within 24 hours, a Python rewrite called claw-code, generated by feeding the leaked code to a competing AI, gained 100,000+ GitHub stars. Law firm Bean, Kinney & Korman put it bluntly: “Whether the developer personally read the code line by line is beside the point; the AI tool that performed the reimplementation was given the actual proprietary source as its input.” Traditional clean room defenses require isolated teams, one that reads the original and one that writes the new code without ever seeing it. AI-powered rewrites collapse that model: the AI directly accesses leaked source, then outputs functionally equivalent code in a different language.

Trade secret protection offers an alternative, but enforcement is far harder. It requires proving improper access, not just copying. Moreover, decentralized hosting and private forks sit beyond practical enforcement. The Claude Code leak exposed that the entire AI tooling business model is built on legally uncertain IP foundations.

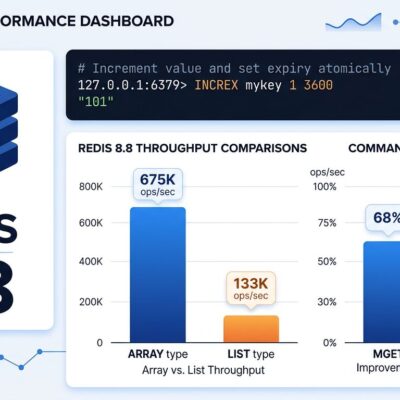

How Developers Are Routing Around Anthropic’s Paid API

The free-claude-code proxy intercepts Claude Code API requests on localhost:8082 and routes them to free or cheap alternatives: NVIDIA NIM (40 requests/min free), OpenRouter (hundreds of models), DeepSeek (pennies per million tokens), or fully local models via LM Studio and llama.cpp. The proxy translates Anthropic’s API format into provider-specific formats, enabling zero-cost Claude Code functionality. Installation takes minutes: clone the repo, configure provider keys in .env, run the uvicorn server.
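To make the translation step concrete, here is a minimal sketch of mapping an Anthropic Messages-style request onto an OpenAI-style chat payload, which is the general shape that OpenAI-compatible providers accept. It is not taken from the free-claude-code source: the function names `flatten_content` and `anthropic_to_openai` and the `target_model` parameter are assumptions for illustration, and tool calls, images, and streaming are deliberately left out.

```python
from typing import Any


def flatten_content(content: Any) -> str:
    """Collapse Anthropic content (a string or a list of blocks) into plain text."""
    if isinstance(content, str):
        return content
    # Keep only text blocks; tool and image blocks are ignored in this sketch.
    return "".join(
        block.get("text", "")
        for block in content
        if isinstance(block, dict) and block.get("type") == "text"
    )


def anthropic_to_openai(request: dict, target_model: str) -> dict:
    """Map an Anthropic Messages-style request onto an OpenAI-style chat payload.

    Hypothetical helper, not the project's actual code.
    """
    messages = []
    system = request.get("system")
    if system:
        # Anthropic carries the system prompt as a top-level field;
        # OpenAI-style APIs expect it as the first message.
        messages.append({"role": "system", "content": flatten_content(system)})
    for message in request.get("messages", []):
        messages.append(
            {"role": message["role"], "content": flatten_content(message["content"])}
        )
    payload = {"model": target_model, "messages": messages}
    # Copy over the sampling fields that share a name in both formats.
    for field in ("max_tokens", "temperature"):
        if field in request:
            payload[field] = request[field]
    return payload
```

A real proxy would also have to translate the provider’s response back into Anthropic’s response format before returning it to Claude Code; that reverse step is omitted here.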

The cost comparison is brutal. Anthropic charges $20/month for Claude Code. NVIDIA NIM offers a free tier with 40 requests/min, sufficient for most individual developers. DeepSeek runs at roughly $2-5/month for equivalent usage. With the proxy, developers can map expensive Opus calls to NVIDIA’s free Nemotron-70B model, route cheap Haiku requests to DeepSeek, and serve Sonnet-tier requests from a local model in LM Studio for privacy-sensitive code. The proxy also answers trivial API calls (quota checks, title generation) locally, which reduces actual provider consumption.
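The tier mapping and local short-circuit described above could look roughly like the sketch below. Everything in it is hypothetical: the provider labels, the placeholder model names, and in particular the `max_tokens == 1` heuristic for spotting a quota probe are assumptions made for illustration, not the project’s actual rules.

```python
from typing import Optional

# Hypothetical routing table: tier keyword in the requested model name
# -> (provider label, placeholder model name).
ROUTES = {
    "opus": ("nvidia_nim", "example-nemotron-model"),
    "haiku": ("deepseek", "example-deepseek-model"),
    "sonnet": ("lm_studio", "example-local-model"),
}
DEFAULT_ROUTE = ("lm_studio", "example-local-model")


def pick_route(requested_model: str) -> tuple:
    """Choose a backend from the tier keyword in the requested model name."""
    name = requested_model.lower()
    for tier, route in ROUTES.items():
        if tier in name:
            return route
    return DEFAULT_ROUTE


def try_local_answer(request: dict) -> Optional[dict]:
    """Return a canned reply for requests assumed to be trivial, else None.

    Assumption: a request capped at one output token is treated as a quota
    probe. A real proxy would need its own, more careful detection.
    """
    if request.get("max_tokens") == 1:
        # Simplified Anthropic-style response body; real responses carry
        # more fields (id, model, stop_reason, usage).
        return {
            "type": "message",
            "role": "assistant",
            "content": [{"type": "text", "text": "ok"}],
        }
    return None
```

The point of checking `try_local_answer` before `pick_route` is that a request answered locally never reaches a provider, so it does not count against a rate limit such as the 40 requests/min free tier.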

The project’s explosive growth reveals developer appetite for alternatives to paid APIs. GitHub shows 11,682 total stars, 4,007 of them gained today (April 26). That’s not a slow burn; it’s a wildfire. Anthropic faces a dilemma: aggressively enforce weak copyright claims and risk public backlash, or tolerate proxy use and normalize circumvention.

512,000 Lines Leaked Thanks to a Bun Bug and Missing .npmignore

Anthropic acquired the Bun JavaScript runtime in late 2025 and built Claude Code on it. On March 11, 2026, developers filed Bun issue #28001, reporting that source maps ship in production builds even when the configuration sets development: false. Twenty days later, Anthropic pushed Claude Code v2.1.88 to npm with a 59.8 MB source map exposing 1,906 TypeScript files. The leak wasn’t a hack. It was a configuration mistake combined with a known bundler bug.

Security researcher Chaofan Shou discovered it at 04:23 UTC and tweeted the download link. Anthropic pulled the package by 08:00 UTC, a response time of under four hours. Nevertheless, the damage was done. The leak exposed the complete architecture: tool systems, agent loop flows, 44 hidden feature flags. Anthropic’s official response: “This was a release packaging issue caused by human error, not a security breach.” Translation: we didn’t verify that production builds excluded source maps when adopting a new runtime.

This wasn’t malicious. It was a preventable operational failure. Anthropic moved fast on Bun adoption but skipped basic build verification. The lesson: even leading AI companies struggle with configuration basics when adopting new tools under pressure.
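As an illustration of the kind of build verification that was missing, here is a minimal sketch of a pre-publish check that fails when any source map is present in the directory about to be published. It is an assumption about what such a guard could look like, not a description of Anthropic’s release process; the function names and the idea of running it as a release gate are hypothetical.

```python
from pathlib import Path


def find_source_maps(package_dir: str) -> list:
    """Return every *.map file under package_dir, sorted for stable output."""
    return sorted(Path(package_dir).rglob("*.map"))


def check_package(package_dir: str) -> bool:
    """Print any source maps found and return True only if there are none."""
    maps = find_source_maps(package_dir)
    for path in maps:
        size_mb = path.stat().st_size / 1_000_000
        print(f"source map found: {path} ({size_mb:.1f} MB)")
    return not maps
```

A release script could call `check_package` on the build output and abort when it returns `False`. Because the file list npm publishes depends on .npmignore and package.json, running the check against the unpacked output of `npm pack` would be closer to what actually ships than scanning the working directory.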

Use the Free Proxy or Pay for Legal Safety?

Developers face an ethical and legal choice: use free-claude-code (save $20/month but risk IP liability) or pay Anthropic (for code that may not be copyrightable). Legal experts warn that even “clean room” implementations can be derivative works if they access leaked material. However, developers counter with a pointed question: if Anthropic built Claude on others’ code under fair use arguments, why shouldn’t they use leaked insights?

The Hacker News community is split. Some embrace free alternatives as legitimate technical solutions. Others warn of trade secret misappropriation claims even if copyright fails. One commenter captured the tension: “Anthropic built their models on other people’s code under the fair use argument, but the moment their own code leaks they reach for DMCA takedowns.” The perceived hypocrisy stings.

For developers considering free-claude-code, the risk calculus matters. Personal projects: individual liability contained, relatively low risk. Work projects: employer faces IP contamination claims, significantly higher stakes. The safer path exists: Cursor ($20/month, independent codebase, clear licensing), Cline (free, Apache 2.0 licensed, bring-your-own-key), or Aider (free, open source, multi-provider). Additionally, local models via LM Studio avoid third-party API legal questions entirely.

ByteIota’s take: don’t let cost savings override legal prudence, especially in professional contexts. The $20/month you save today could cost far more in legal exposure tomorrow.

The AI Industry’s IP Model Just Broke

This is the first major test of whether AI companies can protect AI-generated code. If Anthropic’s copyright enforcement fails, expect more leaks, more proxies, more legal chaos. The industry needs a new IP framework. Options include: (1) strengthen human authorship documentation (prove meaningful human creative input), (2) rely on trade secret protection (harder to enforce), or (3) accept that AI-generated code enters a legal grey area resembling open source.

Legal analysts argue that AI has changed how much protection is achievable in practice. Bean, Kinney & Korman wrote: “AI compression of months-long reimplementation into overnight work fundamentally changes the practical protection calculus.” Courts will likely take 3-5 years to establish precedents. Meanwhile, the industry operates on uncertainty.

The Claude Code leak isn’t just Anthropic’s problem—it’s a preview of industry-wide IP vulnerability. Every AI company using AI to build AI tools faces the same question: can you copyright code that AI writes? Until courts answer definitively, developers should expect more proxies, more leaks, more grey-area tools. The lesson for AI companies: paid APIs need to compete on value (model quality, reliability, support), not just legal threats.

Key Takeaways

- Anthropic’s leak exposed that AI-generated code may lack copyright protection under US law’s human authorship requirement, creating legal uncertainty for the entire AI tooling industry

- free-claude-code offers genuine technical value (cost savings, provider choice, local model privacy) but operates in a legal grey area with potential IP liability for users

- Safer alternatives with clear licensing exist: Cursor ($20/month, independent codebase), Cline (free, Apache 2.0 licensed), and Aider (free, open source, multi-provider support)

- The AI industry needs a new IP framework—current copyright law wasn’t built for AI-generated code, and trade secret protection offers limited practical enforcement

- Developers should prioritize legal safety over cost savings, especially for work projects where employer IP contamination risks are significant

The leak didn’t break Anthropic’s code—it broke the legal assumptions the AI industry was built on. Courts will spend years sorting out whether code-generating AI companies can protect their own AI-generated code. In the meantime, developers navigate the grey area, proxy projects proliferate, and the question remains: who owns code that AI writes?