AI coding tools are everywhere. Ninety-three percent of developers use them, and half of all code on GitHub is now AI-assisted. These tools promise up to 46% time savings on routine tasks, and companies spent 2025 racing to adopt them. However, new research reveals a troubling tradeoff: AI-generated code contains 1.7 times as many issues as human-written code, and 45% of it contains known security flaws. The productivity gains are real. So is the quality crisis.

The Data Behind the Crisis

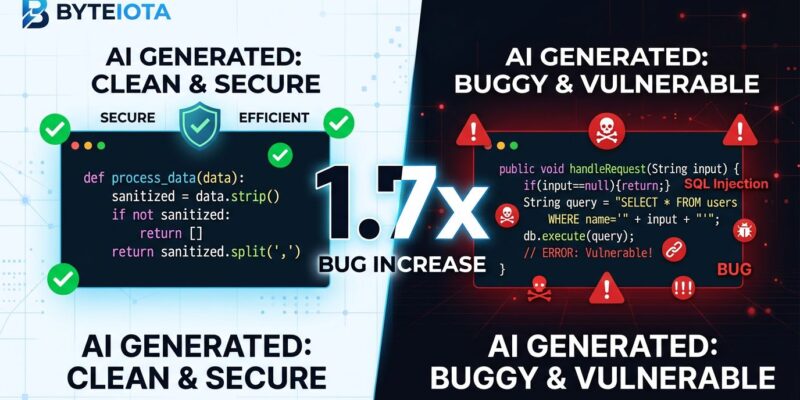

CodeRabbit analyzed 470 open-source pull requests and found that AI-generated code averaged 10.83 issues per PR, compared with 6.45 for human-written code. AI code came out worse in every category, though the size of the gap varies widely.

Logic errors appear 1.75 times as often, performance issues eight times as often, and security vulnerabilities 2.74 times as often. Separately, Stanford and MIT research found that 45% of AI-generated code introduces known security flaws.

This is not a niche problem. GitHub reports that 51% of code committed in early 2026 is AI-assisted, so the quality gap ships to production every day. Stanford’s 2026 AI Index also found that 62% of organizations cite security as the top barrier to scaling AI tools. The industry adopted these tools at massive scale before learning how to use them well.

From Speed to Quality: The 2026 Pivot

In 2025, engineering organizations prioritized velocity. Leaders tracked PR throughput and AI-generated code percentages as competitive advantages. Microsoft and Google bragged about how much of their code was AI-assisted.

But hidden costs emerged. Production incidents were traced back to AI-generated code. Operational failures, missed SLAs, and reliability regressions eroded the promised cost savings. Developers felt empowered but uneasy: reviewing AI code proved more cognitively demanding than writing it, and subtle errors slipped through large diffs.

The time saved writing code was spent reviewing it, fixing bugs, and managing incidents. Faster output delivered little value when the code broke in production.

In 2026, the industry is pivoting from “how much can AI produce?” to “how confidently can we ship it?” Companies are implementing defect metrics, validation tools, multi-agent workflows, and governance policies.

The Productivity Paradox

The productivity gains are real. McKinsey found AI tools reduce time on routine tasks by 46%. GitHub shows developers complete tasks 55% faster with Copilot. Eighty-four percent of enterprises use AI tools.

But here is the paradox: despite 93% adoption, productivity gains for most teams have barely moved past 10%. AI-generated code makes up 26.9% of production code, but quantity does not equal impact.

The disconnect: AI code is faster to write but harder to validate. It lacks an understanding of business logic, drifts from project conventions, and reproduces outdated, insecure patterns. The time saved writing is consumed reviewing.
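To see how a large per-task speedup can shrink to a small overall gain, it helps to treat AI as accelerating only one slice of the work, the same reasoning behind Amdahl's law. The sketch below assumes, purely for illustration, that writing code takes 30% of a developer's time and that extra review and bug fixing adds back 4% of the original total; neither figure comes from the studies cited here. It also generously applies the 46% routine-task figure to all coding time.

```python
def net_time_saved(coding_share: float, coding_speedup: float,
                   added_review_share: float) -> float:
    """Fraction of total working time saved by an AI coding tool.

    coding_share: fraction of total time spent writing code (assumed)
    coding_speedup: fractional cut in writing time, e.g. 0.46 for "46% less time"
    added_review_share: extra review and bug-fixing time caused by AI code,
        as a fraction of the original total time (assumed)
    """
    for name, value in (("coding_share", coding_share),
                        ("coding_speedup", coding_speedup)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    saved_writing = coding_share * coding_speedup  # only the coding slice speeds up
    return saved_writing - added_review_share      # review overhead eats into it

# Hypothetical inputs: 30% of time spent coding, 46% faster coding.
print(round(net_time_saved(0.30, 0.46, 0.00), 3))  # 0.138
print(round(net_time_saved(0.30, 0.46, 0.04), 3))  # 0.098
```

Even with zero review overhead, the hypothetical developer saves only about 14% of total time; a modest 4% of added review brings that down to roughly 10%.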

Teams seeing real gains treat AI as a partner, not a replacement. They build workflows around AI’s known weaknesses. The tool alone does not improve productivity. The workflow does.

What Developers Should Do

Do not stop using AI tools. The gains are legitimate, and tools are improving. But change how you use them.

Adopt the “review sandwich”: AI review first for style and common bugs, then human review for architecture and business logic. This cuts review time by 30 to 50% while maintaining defect detection. DORA found that teams using AI code review see 42 to 48% better bug detection.
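A minimal sketch of the review sandwich as a merge gate, assuming a hypothetical `ai_review` hook that wraps whatever AI reviewer a team uses and returns findings marked as blocking or not. The ordering is the point: blocking findings go back to the author before any human time is spent, and the human layer receives an explicit checklist for the architecture and business-logic questions the automated pass cannot answer. All names here are illustrative, not any tool's real API.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Finding:
    path: str
    message: str
    blocking: bool       # True for likely bugs, False for style nits

@dataclass
class ReviewOutcome:
    status: str          # "changes_requested" or "needs_human_review"
    findings: list[Finding] = field(default_factory=list)
    human_focus: list[str] = field(default_factory=list)

# Hypothetical hook wrapping whichever AI reviewer a team uses.
AIReviewer = Callable[[list[str]], list[Finding]]

# What the human layer is for: judgments the automated pass cannot make.
HUMAN_CHECKLIST = [
    "Does the design fit the existing architecture?",
    "Does the change implement the real business rule, including edge cases?",
]

def review_sandwich(changed_paths: list[str], ai_review: AIReviewer) -> ReviewOutcome:
    # Layer 1: automated pass for style problems and common bug patterns.
    findings = ai_review(changed_paths)
    blocking = [f for f in findings if f.blocking]
    if blocking:
        # Send it back to the author before spending reviewer time on it.
        return ReviewOutcome("changes_requested", blocking)
    # Layer 2: a human reviews architecture and business logic; non-blocking
    # AI findings travel along as context rather than as gates.
    return ReviewOutcome("needs_human_review", findings, list(HUMAN_CHECKLIST))

# Toy AI reviewer: flags every Python file with a blocking finding.
def fake_ai_review(paths: list[str]) -> list[Finding]:
    return [Finding(p, "possible missing null check", True)
            for p in paths if p.endswith(".py")]

print(review_sandwich(["api/orders.py"], fake_ai_review).status)   # changes_requested
print(review_sandwich(["docs/README.md"], fake_ai_review).status)  # needs_human_review
```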

Implement agent-driven testing loops where AI writes code, generates tests, runs them, and fixes failures before opening a PR. This catches problems before code review.
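The testing loop can be sketched as a bounded generate, test, and repair cycle. In this minimal version, `write_code` and `write_tests` are hypothetical hooks standing in for calls to a coding model, and the generated code is assumed to define a function named `solve`; the toy stand-ins at the bottom replace the model so the sketch runs on its own. Because `exec()` runs untrusted model output, a real loop would execute the tests inside a sandbox.

```python
from typing import Callable, Optional

# Hypothetical agent hooks; in a real setup each would call a coding model.
WriteCode = Callable[[str, list[str]], str]               # (task, prior failures) -> source
WriteTests = Callable[[str], list[tuple[tuple, object]]]  # task -> [(args, expected)]

def run_tests(source: str, cases: list[tuple[tuple, object]]) -> list[str]:
    """Load generated source and check it against test cases; [] means all passed.

    Warning: exec() runs untrusted model output. A real loop should do this
    inside a sandbox (container, VM), never in the developer's own process.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)          # assumes the source defines `solve`
        solve = namespace["solve"]
    except Exception as exc:             # syntax error, missing function, ...
        return [f"load error: {exc!r}"]
    failures = []
    for args, expected in cases:
        try:
            got = solve(*args)
        except Exception as exc:
            failures.append(f"solve{args} raised {exc!r}")
            continue
        if got != expected:
            failures.append(f"solve{args} returned {got!r}, expected {expected!r}")
    return failures

def agent_test_loop(task: str, write_code: WriteCode, write_tests: WriteTests,
                    max_attempts: int = 3) -> tuple[Optional[str], int]:
    """Generate, test, and repair code; return (source, attempts) or (None, attempts)."""
    cases = write_tests(task)                 # tests are generated once, up front
    failures: list[str] = []
    for attempt in range(1, max_attempts + 1):
        source = write_code(task, failures)   # prior failures are fed back in
        failures = run_tests(source, cases)
        if not failures:
            return source, attempt            # only now is the change ready for a PR
    return None, max_attempts                 # give up and hand the task to a human

# Toy stand-ins for the model: the first draft is wrong, the retry is fixed.
def fake_write_tests(task: str) -> list[tuple[tuple, object]]:
    return [((2, 3), 5), ((-1, 1), 0)]

def fake_write_code(task: str, failures: list[str]) -> str:
    if not failures:
        return "def solve(a, b):\n    return a - b\n"
    return "def solve(a, b):\n    return a + b\n"

source, attempts = agent_test_loop("add two numbers", fake_write_code, fake_write_tests)
print(attempts)  # 2
```

Generating the tests once, before any repair attempt, keeps the fix step from quietly weakening the tests to make them pass. Capping `max_attempts` matters too: when the loop gives up, the task goes to a human instead of producing an ever-larger diff.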

Make security scanning mandatory, and never let AI handle authentication or encryption without expert review. Also track AI code churn: if AI-generated code is rewritten more than 1.5 times as often as human-written code, you are using AI faster than your processes can absorb.
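One way to make the churn rule and the expert-review rule concrete is a small report over line counts. This is a sketch under stated assumptions: it presumes the team can already attribute lines to AI-assisted or human-written commits, defines churn as the share of newly added lines rewritten or deleted within some fixed window (the window length is left to the team), and uses hypothetical `auth/` and `crypto/` directory prefixes to stand in for authentication and encryption code. The numbers are illustrative, not measured.

```python
from dataclasses import dataclass

CHURN_RATIO_LIMIT = 1.5  # threshold suggested in the text

@dataclass
class LineStats:
    added: int     # lines added during the measurement period
    churned: int   # of those, lines rewritten or deleted within the churn window

def churn_rate(stats: LineStats) -> float:
    """Share of newly added lines that were rewritten or deleted soon after."""
    return stats.churned / stats.added if stats.added else 0.0

def ai_churn_ratio(ai: LineStats, human: LineStats) -> float:
    """How many times more often AI-assisted lines churn than human-written ones."""
    ai_rate, human_rate = churn_rate(ai), churn_rate(human)
    if human_rate == 0.0:
        # No human churn to compare against: any AI churn counts as unbounded.
        return float("inf") if ai_rate > 0.0 else 1.0
    return ai_rate / human_rate

# Hypothetical directory prefixes for code that needs an expert reviewer.
SENSITIVE_PREFIXES = ("auth/", "crypto/")

def needs_security_expert(paths: list[str], ai_assisted: bool) -> bool:
    """AI-assisted changes touching authentication or encryption need expert review."""
    return ai_assisted and any(p.startswith(SENSITIVE_PREFIXES) for p in paths)

# Illustrative numbers only.
ai = LineStats(added=10_000, churned=1_800)   # 18% churn
human = LineStats(added=8_000, churned=800)   # 10% churn
ratio = ai_churn_ratio(ai, human)
print(round(ratio, 2), ratio > CHURN_RATIO_LIMIT)                     # 1.8 True
print(needs_security_expert(["auth/session.py"], ai_assisted=True))   # True
```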

The tools themselves keep getting better. CodeRabbit achieves 46% bug detection accuracy, and Devin hits 70% auto-fix rates. These numbers should rise as the tools learn from their failures, but better tools do not eliminate the need for better developer workflows.

The Road Ahead

This crisis is not permanent; it is a sign of maturation. The industry spent 2025 prioritizing speed and discovering its limits. In 2026, the focus is shifting to confidence and security.

Organizations are standardizing on multi-agent workflows, governance policies, and validation tools. The insight: AI tools augment developers rather than replace them. The question is not whether to use AI but how to integrate it into quality-preserving workflows.

Teams that figure this out will ship faster and more reliably. Teams that do not will chase velocity while production burns. The data is clear. The solutions exist. The question is whether your team is adapting.