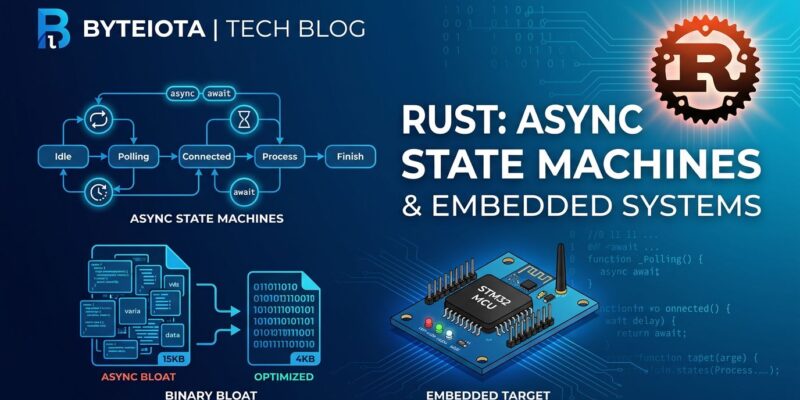

Seven years after stabilization, async Rust still carries fundamental design issues that keep it from delivering on its “zero-cost abstraction” promise. Yesterday (May 4, 2026), Tweede golf, a Rust consultancy, published a technical analysis showing that async Rust adds 2-5% to binary size through unnecessary state machines and missed compiler optimizations. For embedded systems where every byte counts, this isn’t just inefficient – it can prevent deployment entirely.

The analysis challenges Rust’s maturity narrative. If a core concurrency feature remains stuck in MVP state after seven years – with broken async drop, unstable AsyncIterator, and Pin complexity – developers need honest answers about when async makes sense and when simpler alternatives work better.

The Evidence: 360 Lines of Compiler Output For a Simple Async Block

Tweede golf’s MIR (mid-level intermediate representation) analysis reveals the scale of the async Rust bloat problem. A simple async function generates 360 lines of compiler output compared to 23 lines for equivalent synchronous code. That is more than 15 times as much generated code, and the analysis links it to 2-5% binary size overhead in production firmware.

The bloat stems from unnecessary state machine complexity. Even trivial async functions generate elaborate enums with “Unresumed,” “Returned,” and “Panicked” states. Futures that never panic still carry panic-handling overhead. Async blocks without await points still generate full state machines. LLVM struggles to optimize any of this away once futures grow complex or when optimizing for size.

```rust
// What the compiler generates for a simple async fn
enum SimpleFuture {
    Unresumed, // State before first poll
    Returned,  // State after completion (panics if polled)
    Panicked,  // State if panic occurred
}
// Each state adds binary size overhead
// LLVM cannot optimize these away in complex cases
```

Here’s what makes this worse: compiler-level fixes exist and work. Tweede golf demonstrated that removing panics in the “Returned” state saves 2-5% binary size. Eliminating unnecessary state machines for never-awaited blocks saves another 0.2%. These optimizations aren’t theoretical – they’re implementable. They’re just not implemented.
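To make the three states concrete, here is a hand-written sketch of roughly what such a state machine does when polled. It is not actual compiler output: `AddOne`, `NoopWaker`, and `poll_once` are hypothetical names introduced for illustration, and the code the compiler really generates differs in detail.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

/// Hand-written stand-in for the future behind a hypothetical
/// `async fn add_one(x: u32) -> u32 { x + 1 }` (no await points).
enum AddOne {
    Unresumed { x: u32 }, // created but not yet polled
    Returned,             // already produced its value
    Panicked,             // the body panicked during an earlier poll
}

impl Future for AddOne {
    type Output = u32;

    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<u32> {
        // Park the state in `Panicked` while the body runs, so a panic
        // inside the body leaves the future in that state.
        match std::mem::replace(&mut *self, AddOne::Panicked) {
            AddOne::Unresumed { x } => {
                let out = x + 1;
                *self = AddOne::Returned;
                Poll::Ready(out)
            }
            // These two arms are panic paths that take up code space even
            // if no caller ever polls a finished future.
            AddOne::Returned => panic!("future polled after completion"),
            AddOne::Panicked => panic!("future polled after panicking"),
        }
    }
}

/// Waker that does nothing; enough to poll a future by hand.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Polls an `Unpin` future exactly once with the no-op waker.
fn poll_once<F: Future + Unpin>(fut: &mut F) -> Poll<F::Output> {
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    Pin::new(fut).poll(&mut cx)
}
```

Polling `AddOne::Unresumed { x: 41 }` once through `poll_once` yields `Poll::Ready(42)` and leaves the value in the `Returned` state; polling it a second time hits the panic arm. Even for a function this small, the type carries two states whose only job is to panic.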

Embedded Systems Crisis: Choosing Between Ergonomics and Fitting on Hardware

For embedded developers, async Rust’s bloat isn’t an annoyance – it’s a deployment blocker. Microcontrollers typically ship with 32KB to 512KB of flash memory. On a binary that nearly fills the flash, 2-5% works out to roughly 0.6KB to 26KB across that range. When that overhead is what prevents your firmware from fitting, you’re stuck with an impossible choice: abandon async’s clean syntax or abandon Rust entirely.

The situation is serious enough that an embedded engineer submitted a Rust Project Goal requesting €30,000 in funding to implement the compiler optimizations that would fix async bloat. The estimate exists. The problem is quantified. Yet seven years after async stabilization, embedded teams continue choosing RTOS over async Rust purely because of binary size constraints.

This represents a strategic failure for Rust. Embedded systems are a prime use case for Rust’s memory safety guarantees without garbage collection overhead. Async should be a selling point – clean concurrency without RTOS complexity. Instead, teams evaluate async Rust, measure the binary bloat, and revert to C with FreeRTOS. Every byte of async overhead is a missed opportunity for Rust adoption.

Seven Years of Unresolved MVP Issues

Binary bloat isn’t the only problem with async Rust. Core pieces of the async story remain missing or unstable years after async/await itself was declared stable. AsyncIterator has been tracked in issue #79024 since 2020 – going on six years. The feature remains unusable in stable Rust because design problems keep blocking stabilization: method resolution conflicts cause breaking changes, and dynamic dispatch issues with Stream::next remain unsolved. (Async closures, once on this list, were stabilized in Rust 1.85 in February 2025.)

Then there’s async drop: destructors cannot await, which prevents proper resource cleanup in async contexts. No timeline for resolution exists. Pin and Unpin continue to confuse even experienced Rust developers, and their complexity is baked into async Rust’s foundation. These aren’t minor rough edges – they’re core pieces that async code depends on, shipped as part of a “stable” feature that is still incomplete.
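The async drop gap is easy to reproduce with the standard library alone. The sketch below assumes a hypothetical `Connection` type that must flush asynchronously before it goes away; `Log`, `block_on`, `NoopWaker`, and `demo_cleanup` are likewise illustrative names, and the explicit `close` method is a common workaround rather than anything the Tweede golf analysis prescribes.

```rust
use std::cell::RefCell;
use std::future::Future;
use std::pin::pin;
use std::rc::Rc;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

type Log = Rc<RefCell<Vec<&'static str>>>;

/// Hypothetical resource that must flush asynchronously before it goes away.
struct Connection {
    log: Log,
    closed: bool,
}

impl Connection {
    fn new(log: Log) -> Self {
        Connection { log, closed: false }
    }

    /// Stand-in for real async I/O.
    async fn flush(&mut self) {
        self.log.borrow_mut().push("flushed");
    }

    /// Explicit async cleanup that callers have to remember to call.
    async fn close(mut self) {
        self.flush().await;
        self.closed = true;
    } // `self` is dropped here, after the flush
}

impl Drop for Connection {
    fn drop(&mut self) {
        // `drop` is a plain synchronous fn, so `self.flush().await` is not
        // allowed here; all it can do is notice that cleanup was skipped.
        if !self.closed {
            self.log.borrow_mut().push("dropped without flush");
        }
    }
}

struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Tiny busy-polling executor, for illustration only.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    loop {
        if let Poll::Ready(out) = fut.as_mut().poll(&mut cx) {
            return out;
        }
        std::thread::yield_now();
    }
}

/// Closes one connection properly and simply drops another.
fn demo_cleanup() -> Vec<&'static str> {
    let log: Log = Rc::new(RefCell::new(Vec::new()));
    block_on(Connection::new(Rc::clone(&log)).close());
    drop(Connection::new(Rc::clone(&log)));
    let entries = log.borrow().clone();
    entries
}
```

`demo_cleanup()` returns `["flushed", "dropped without flush"]`: the connection that went through `close` was flushed, while the one that merely went out of scope could only record that its cleanup never ran. Nothing in the type system forces callers to take the first path.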

The pattern suggests async Rust was stabilized prematurely. When a feature is still marked MVP after seven years, “MVP” stops being a development phase and becomes a permanent state. Developers building production systems need APIs that work completely, not features that remain half-finished indefinitely.

When to Use Async Rust (And When to Skip It)

Despite these issues, async Rust still makes sense for specific workloads. Network I/O servers handling thousands of concurrent connections benefit from async’s ability to multiplex tasks onto a few OS threads. The Tokio ecosystem (Axum, Tower, Hyper) is production-proven for web services. For these use cases, 2-5% binary bloat is acceptable overhead for the concurrency model.
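The multiplexing idea can be shown without any runtime crate. The following is a deliberately naive sketch, not how Tokio works: `YieldNow`, `Task`, `run_all`, and `demo_round_robin` are hypothetical names, and the loop re-polls every pending task instead of waiting for wakers the way a production executor would.

```rust
use std::cell::RefCell;
use std::future::Future;
use std::pin::Pin;
use std::rc::Rc;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Future that returns `Pending` on its first poll and `Ready` on the second.
struct YieldNow(bool);

impl Future for YieldNow {
    type Output = ();

    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            Poll::Pending
        }
    }
}

type Task = Pin<Box<dyn Future<Output = ()>>>;

/// Runs every task to completion on the current thread, round-robin.
fn run_all(mut tasks: Vec<Task>) {
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    while !tasks.is_empty() {
        // Keep only the tasks that are still pending after this pass.
        tasks.retain_mut(|task| task.as_mut().poll(&mut cx).is_pending());
    }
}

/// Three tasks, two steps each, interleaved on one OS thread.
fn demo_round_robin() -> Vec<(u32, u32)> {
    let log = Rc::new(RefCell::new(Vec::new()));
    let tasks: Vec<Task> = (0..3u32)
        .map(|id| {
            let log = Rc::clone(&log);
            let task: Task = Box::pin(async move {
                for step in 0..2u32 {
                    log.borrow_mut().push((id, step));
                    YieldNow(false).await; // hand control back to the executor
                }
            });
            task
        })
        .collect();
    run_all(tasks);
    let order = log.borrow().clone();
    order
}
```

`demo_round_robin()` returns `[(0, 0), (1, 0), (2, 0), (0, 1), (1, 1), (2, 1)]`: each task runs until its next await point, then the single thread moves on to the next one. That interleaving is what lets a server juggle thousands of connections without thousands of threads.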

Here’s when to avoid async entirely: CPU-bound computation (use rayon or std::thread instead), embedded systems with tight memory constraints (RTOS is more predictable), WebAssembly with size limits, and simple applications where OS threads are clearer. Binary size matters for more applications than the Rust community acknowledges.

The decision framework is straightforward: If your workload is I/O-bound and you’re running on servers or desktops with memory to spare, async Rust works well. If you’re memory-constrained, CPU-bound, or building for embedded systems, stick with synchronous Rust, using OS threads or an RTOS as the platform allows. Async isn’t “better” than threads – it’s a trade-off with real costs that you need to measure, not assume away.
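For the CPU-bound side of that framework, plain scoped threads from the standard library are often enough. This is a minimal sketch under that assumption; `parallel_sum_of_squares` is a hypothetical function, and a library such as rayon would handle work splitting more cleverly.

```rust
use std::thread;

/// Sums the squares of `data` using up to `workers` scoped OS threads.
fn parallel_sum_of_squares(data: &[u64], workers: usize) -> u64 {
    // Guard against zero workers and empty input: `chunks(0)` would panic.
    let chunk_len = data.len().div_ceil(workers.max(1)).max(1);
    thread::scope(|scope| {
        // Spawn one thread per chunk; scoped threads may borrow `data`.
        let handles: Vec<_> = data
            .chunks(chunk_len)
            .map(|chunk| scope.spawn(move || chunk.iter().map(|v| v * v).sum::<u64>()))
            .collect();
        // Join every worker and combine the partial sums.
        handles
            .into_iter()
            .map(|handle| handle.join().expect("worker thread panicked"))
            .sum::<u64>()
    })
}
```

Calling it on the numbers 1 through 1000 with four workers returns 333833500, the same result as the sequential sum. No futures, no executor, and no state machines are involved, which is the point: when the work never waits on I/O, async adds cost without adding concurrency.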

Key Takeaways

- Async Rust generates 2-5% binary bloat through unnecessary state machines – 15x more compiler output than equivalent sync code, contradicting the “zero-cost abstraction” promise

- Embedded systems developers face impossible choices: async Rust’s bloat can prevent firmware from fitting on microcontrollers, forcing teams back to RTOS despite preferring Rust’s ergonomics

- After seven years, fundamental features remain broken (async drop) or unstable (AsyncIterator tracked since 2020), suggesting premature stabilization

- Use async for I/O-bound workloads (web servers, databases) where concurrency benefits justify the overhead; avoid it for embedded systems, CPU-bound tasks, or when binary size matters

- Compiler optimizations exist to fix the bloat (€30k implementation estimate) but remain unimplemented – the problem is acknowledged but apparently not prioritized

Async Rust isn’t failing everywhere – it works well for network services. But seven years of MVP-state issues reveal a pattern: rushed stabilization, incomplete features, and unresolved design problems. For embedded developers and anyone optimizing for binary size, the “zero-cost” promise remains unfulfilled. Measure your workload’s characteristics before choosing async. The overhead is real.