Google’s AlphaEvolve coding agent has been running in production for a year, and the ROI numbers are in: 0.7% of Google’s worldwide compute resources recovered continuously. At Google’s scale, that’s thousands of servers and millions in operational savings. This isn’t a benchmark or a lab demo. It’s production code orchestrating data centers, optimizing GPU kernels, and speeding up the training of the very models AlphaEvolve runs on.

One Year in Production: The Borg Case Study

AlphaEvolve discovered a “simple yet remarkably effective heuristic” to help Google’s Borg scheduler orchestrate vast data centers more efficiently. The solution has been in production for over a year, continuously recovering an average of 0.7% of Google’s worldwide compute resources.

That 0.7% might sound modest until you grasp the scale. Google operates hundreds of thousands of servers across dozens of data centers globally, so a 0.7% efficiency gain translates to thousands of servers’ worth of compute capacity, equivalent to millions of dollars in operational savings annually. The code runs autonomously, makes real-time scheduling decisions, and is human-readable, so engineers can interpret and debug it.
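To make the scale argument concrete, here is a quick back-of-envelope calculation. Google does not publish an exact server count, so the fleet sizes below are illustrative placeholders in the “hundreds of thousands” range; only the 0.7% figure comes from the reported result.

```python
# Back-of-envelope check of the "0.7% of the fleet" claim.
# ASSUMPTION: the fleet sizes are illustrative placeholders, not Google figures.
RECOVERED_FRACTION = 0.007  # 0.7% of compute, as reported for the Borg heuristic

def servers_recovered(fleet_size: int, fraction: float = RECOVERED_FRACTION) -> int:
    """Return how many whole servers' worth of capacity the gain frees up."""
    return round(fleet_size * fraction)

for fleet in (200_000, 500_000, 800_000):
    print(f"{fleet:>9,} servers -> ~{servers_recovered(fleet):,} servers recovered")
```

Even at the low end of that range, 0.7% works out to well over a thousand servers’ worth of capacity, which is consistent with the “thousands of servers” figure.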

Cross-Domain Generalization: One Tool, Multiple Domains

What separates AlphaEvolve from point solutions is its scope. A single system that optimizes data centers, GPUs, quantum computers, hardware circuits, and pure mathematics would normally be expected to do each job poorly. AlphaEvolve has delivered measurable results in all of them.

Infrastructure: Beyond Borg, AlphaEvolve reduced write amplification in Google Spanner by 20%, cut software storage by 9%, and discovered cache replacement policies in two days – work that previously took months.
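A cache replacement policy suits this kind of automated search because a candidate is a small function and its quality reduces to one number: the hit rate on a workload. The source does not describe how Google framed the problem, so the sketch below is an assumption throughout. The `EvictionPolicy` interface, the toy trace, and the LRU and LFU baselines are illustrative stand-ins for whatever candidate policies an evolutionary search would propose and score.

```python
from collections import OrderedDict
from typing import Callable, Dict, List, Sequence

# Hypothetical interface: a policy sees cached keys ordered from least to most
# recently used, plus per-key access counts, and returns the key to evict.
EvictionPolicy = Callable[[List[str], Dict[str, int]], str]

def lru(keys: List[str], counts: Dict[str, int]) -> str:
    return keys[0]  # evict the least recently used key

def lfu(keys: List[str], counts: Dict[str, int]) -> str:
    # Evict the least frequently used key; min() keeps the first tie, i.e. the older one.
    return min(keys, key=lambda k: counts[k])

def hit_rate(trace: Sequence[str], capacity: int, policy: EvictionPolicy) -> float:
    """Replay an access trace through a fixed-size cache and return the hit rate."""
    cache = OrderedDict()        # cached keys in recency order (oldest first)
    counts: Dict[str, int] = {}  # access counts over the trace so far
    hits = 0
    for key in trace:
        counts[key] = counts.get(key, 0) + 1
        if key in cache:
            hits += 1
            cache.move_to_end(key)  # mark as most recently used
            continue
        if len(cache) >= capacity:
            del cache[policy(list(cache), counts)]
        cache[key] = None
    return hits / len(trace)

trace = ["A", "A", "A", "B", "C", "A"]
print("LRU:", hit_rate(trace, capacity=2, policy=lru))
print("LFU:", hit_rate(trace, capacity=2, policy=lfu))
```

On this toy trace, LFU scores 0.5 against LRU’s 1/3, because it protects the frequently accessed key `A` from eviction by one-off keys. A search system would treat `hit_rate` as its evaluator and keep whichever policy bodies score highest.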

AI and GPUs: AlphaEvolve achieved a 32.5% speedup for the FlashAttention kernel used in Transformer models. This matters because compilers already heavily optimize GPU code, so further improvements are exceptionally hard to find. The agent also optimized the training of the models that power AlphaEvolve itself, cutting training time by 1%.

Hardware and Quantum: For Google’s upcoming TPUs, AlphaEvolve proposed a Verilog rewrite that removed unnecessary bits in matrix multiplication circuits. Meanwhile, on the Willow quantum processor, it achieved 10x lower error rates for molecular simulations.

Commercial ROI: Klarna doubled transformer training speed. FM Logistic saved 15,000+ km annually through routing optimization. Schrödinger saw a 4x speedup in drug discovery simulations.

If you optimize anything (cache policies, GPU kernels, circuit designs, algorithms), AlphaEvolve can do your job. The evidence is a year of production deployment on Borg, plus results across domains that one system would not be expected to generalize across.

The Self-Improvement Loop Closes

Perhaps the most significant detail is buried in the technical documentation: “AlphaEvolve enhanced the efficiency of training the large language models underlying AlphaEvolve itself.”

The AlphaEvolve coding agent optimized the code that makes the coding agent better. The meta-optimization loop that theorists warned about is now production reality at Google. When AI systems can autonomously improve the infrastructure that makes them faster, the optimization curve stops being linear.

How It Actually Works

AlphaEvolve employs a dual-model strategy using Google’s Gemini LLMs. Gemini Flash, the faster model, maximizes the breadth of ideas explored, while Gemini Pro, the more capable model, provides depth with more insightful suggestions. Together, the ensemble generates diverse algorithmic variations.
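One plausible way to combine the two models is to route most proposal requests to the cheaper model and a minority to the stronger one. The sketch below assumes exactly that scheme; `fast_model`, `strong_model`, and the 20% `strong_share` are hypothetical placeholders, not Google’s API or settings.

```python
import random
from typing import List

# Hypothetical stand-ins for LLM calls; a real system would query actual models.
def fast_model(prompt: str) -> str:
    return f"fast-proposal for {prompt!r}"

def strong_model(prompt: str) -> str:
    return f"strong-proposal for {prompt!r}"

def propose(prompt: str, n: int, strong_share: float = 0.2, seed: int = 0) -> List[str]:
    """Draw n candidate edits: mostly from the fast model (breadth),
    occasionally from the strong model (depth). strong_share is an assumed knob."""
    rng = random.Random(seed)
    return [
        strong_model(prompt) if rng.random() < strong_share else fast_model(prompt)
        for _ in range(n)
    ]

if __name__ == "__main__":
    for idea in propose("speed up the scheduler heuristic", n=5):
        print(idea)
```

A fixed routing probability keeps the sketch simple; a real system might adapt the share based on which model’s proposals score better, but the source does not say how Google balances the two.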

The system orchestrates an autonomous pipeline where LLMs make direct code changes, receive continuous feedback from evaluators, and iteratively improve algorithms. It’s evolutionary optimization at AI speed – testing variations, measuring results, keeping winners. The output is human-readable, verifiable, production-ready code.
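The propose, evaluate, keep-the-winners loop can be sketched in a few lines. This is a toy under loud assumptions: a “program” is just a list of numbers, `mutate` is a random perturbation standing in for an LLM-generated code edit, and `toy_score` is a hypothetical evaluator. AlphaEvolve’s real pipeline edits source code and uses richer selection, so treat this only as an illustration of the evolutionary structure.

```python
import random
from typing import Callable, List

Program = List[float]  # toy stand-in for source code: a few tunable numbers

def mutate(parent: Program, rng: random.Random) -> Program:
    """Placeholder for an LLM-proposed code change: nudge one parameter at random."""
    child = parent.copy()
    i = rng.randrange(len(child))
    child[i] += rng.gauss(0.0, 0.5)
    return child

def evolve(evaluate: Callable[[Program], float], seed_program: Program,
           generations: int = 200, population_size: int = 8, seed: int = 0) -> Program:
    rng = random.Random(seed)
    population = [seed_program]
    for _ in range(generations):
        parent = rng.choice(population)              # pick a program to modify
        population.append(mutate(parent, rng))       # propose a variation
        population.sort(key=evaluate, reverse=True)  # measure results
        del population[population_size:]            # keep only the winners
    return population[0]

def toy_score(p: Program) -> float:
    """Hypothetical evaluator: higher is better, with the optimum at [3.0, -1.0]."""
    return -((p[0] - 3.0) ** 2 + (p[1] + 1.0) ** 2)

best = evolve(toy_score, [0.0, 0.0])
print("best program:", best, "score:", toy_score(best))
```

Because the population is sorted and truncated after every proposal, the best score never gets worse. Swapping `mutate` for a model call and `toy_score` for a real benchmark is where the actual engineering effort would go.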

For hardware optimizations, proposals must pass robust correctness checks before deployment. For Borg scheduling, the code must be interpretable so engineers understand what changed. Production requirements enforce a level of quality that benchmarks don’t.
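The ordering described here, correctness first and performance second, can be sketched as a gated evaluator. Everything in it is hypothetical: population count stands in for a real circuit or scheduling task, a trusted `reference` function stands in for a formal correctness check, and wall-clock time from `timeit` stands in for whatever metric Google’s evaluators actually measure.

```python
import math
import random
import timeit
from typing import Callable

def reference(x: int) -> int:
    """Trusted reference: number of set bits in a non-negative integer."""
    return bin(x).count("1")

def kernighan_popcount(x: int) -> int:
    """Candidate A: correct; clears the lowest set bit on each iteration."""
    count = 0
    while x:
        x &= x - 1
        count += 1
    return count

def lowest_bit_only(x: int) -> int:
    """Candidate B: cheap but wrong; it only inspects the lowest bit."""
    return x & 1

def gated_score(candidate: Callable[[int], int], n_random: int = 1000, seed: int = 0) -> float:
    """Score = -(runtime in seconds) if the candidate is correct on every input, else -inf."""
    rng = random.Random(seed)
    inputs = list(range(256)) + [rng.getrandbits(32) for _ in range(n_random)]
    for x in inputs:
        try:
            if candidate(x) != reference(x):
                return -math.inf  # wrong answer: rejected before any timing
        except Exception:
            return -math.inf      # crashing candidates are rejected too
    seconds = timeit.timeit(lambda: [candidate(x) for x in inputs], number=3)
    return -seconds               # less negative means faster

print("correct:", gated_score(kernighan_popcount))
print("buggy:  ", gated_score(lowest_bit_only))
```

The buggy candidate is rejected outright even though it is cheaper to compute: a speedup only counts once the candidate has matched the reference on every test input.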

What This Means for Developers

The career implications are unavoidable. Performance optimization engineering, squeezing efficiency from systems through deep technical expertise, is being automated. Cache policies that took expert engineers months now take AlphaEvolve two days, and a FlashAttention kernel that compilers had already heavily optimized got a 32.5% speedup from AI.

Terence Tao, a Fields Medal-winning mathematician, said “Tools such as AlphaEvolve are giving mathematicians useful new capabilities.” If one of the world’s leading mathematicians finds these tools useful, optimization engineers should pay attention.

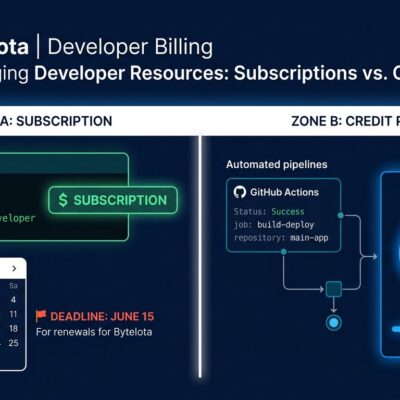

The shift isn’t from human to AI; it’s from human optimization to human-AI collaboration, with the balance tilting heavily toward AI autonomy. Google is commercializing AlphaEvolve through Google Cloud, and OpenEvolve, a community-built open-source implementation, already exists. The technology isn’t locked in labs; it’s becoming a product.

The ROI That Matters

CFOs will ask one question: What’s the return on investment? AlphaEvolve has answers: 0.7% compute recovery worth millions in annual savings, a 32.5% GPU kernel speedup, 2x faster training at Klarna, 15,000+ km of annual route savings at FM Logistic, and 4x faster drug discovery simulations at Schrödinger.

These aren’t benchmark scores. They’re production deployments with measured business impact. The AlphaEvolve coding agent proves its value in dollars saved and capabilities unlocked, not leaderboard rankings.

AI coding tools are past the hype phase. They’re infrastructure now. The optimization engineering profession is changing whether you’re ready or not. AlphaEvolve just showed what one year of production AI looks like at scale.

The question is what year two brings.