Model Context Protocol (MCP) hit 97 million monthly SDK downloads in just 14 months, with 10,000+ production servers deployed and backing from every major AI platform. Microsoft just launched “MCP for Beginners,” a free open-source curriculum that signals mainstream acceptance. The protocol solves integration hell: before November 2024, connecting ChatGPT to your database required different code than connecting Claude, Copilot, or Cursor to the same database. MCP standardizes AI tool integration the way HTTP standardized web communication. If you’re building AI-powered applications in 2026, understanding MCP is no longer optional.

The 97M Download Explosion

MCP went from 100,000 downloads at launch in November 2024 to 8 million by April 2025, then exploded to 97 million monthly SDK downloads by December 2025. The protocol now powers 10,000+ production MCP servers with 75+ connectors in Claude’s directory alone. Every major AI platform adopted it within months: ChatGPT, Claude, Cursor, Gemini, Microsoft Copilot, and VS Code all support MCP.

OpenAI CEO Sam Altman announced support in March 2025: “People love MCP and we are excited to add support across our products.” This isn’t incremental adoption—it’s exponential. When every major AI platform (OpenAI, Anthropic, Google, Microsoft) adopts the same standard within months, you’re witnessing infrastructure formation. The numbers back it up: 5,800+ MCP servers in the ecosystem, 300+ MCP clients, and enterprise adoption from highly regulated industries like healthcare, finance, and manufacturing.

Developers no longer need to build separate integrations for each LLM platform. Write an MCP server once, and it works everywhere. That’s the value proposition driving this explosion.

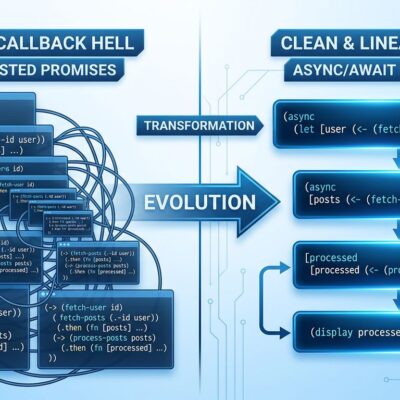

What MCP Actually Solves: The Integration Hell Problem

Before MCP, connecting AI platforms to external tools required custom code for every platform. ChatGPT needed one integration, Claude another, Copilot a third. If you wanted your database accessible to all three, you maintained three separate codebases. However, MCP provides a universal client-server protocol—write an MCP server once, and it works across all AI platforms that support MCP.

The technical architecture is straightforward: three components work together. The MCP Host is the LLM-powered application (your IDE, a conversational AI, or your own application). The MCP Client lives inside the host, translating LLM requests for the server and converting server responses back for the LLM. The MCP Server is your external service (database, API, file system) that provides data and tools to the LLM. The protocol defines three core primitives: Tools (actions the LLM can execute), Resources (data sources it can access), and Prompts (pre-configured templates). Messages are encoded as JSON-RPC 2.0 and carried over transports such as standard input/output or HTTP, and the design reuses concepts from the Language Server Protocol.
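The JSON-RPC framing is easier to see with concrete messages. Below is a minimal sketch of the requests a client might send for each primitive, built with Python's standard library only. The method names `tools/call`, `resources/read`, and `prompts/get` come from the MCP specification. The tool name `query_database`, the resource URI, and the prompt `summarize_ticket` are hypothetical placeholders. A real client would first complete an `initialize` handshake with the server, which this sketch omits.

```python
import itertools
import json

# JSON-RPC 2.0 matches each response to its request through the "id" field.
_request_ids = itertools.count(1)


def make_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": method, "params": params}


# Tools: ask the server to execute an action (hypothetical tool name and arguments).
call_tool = make_request(
    "tools/call",
    {"name": "query_database", "arguments": {"sql": "SELECT count(*) FROM orders"}},
)

# Resources: read a data source by URI (hypothetical URI).
read_resource = make_request("resources/read", {"uri": "file:///reports/q1.csv"})

# Prompts: fetch a pre-configured template (hypothetical prompt name and argument).
get_prompt = make_request(
    "prompts/get",
    {"name": "summarize_ticket", "arguments": {"ticket_id": "42"}},
)

for message in (call_tool, read_resource, get_prompt):
    # On the stdio transport, each message is written as a single line of JSON.
    print(json.dumps(message))
```

The three primitives share one envelope. Only `method` and `params` change, which is why a single client implementation can drive any server.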

The real-world benefit is immediate: standardization equals velocity. Instead of maintaining five integration codebases, developers maintain one MCP server. This is why adoption exploded—the value proposition is obvious and the pain point was universal.
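The "maintain one MCP server" idea can be sketched as a single request handler that any MCP client could talk to. This is a toy, stdlib-only illustration of the `tools/list` and `tools/call` message shapes. It is not the official MCP SDK: it skips the `initialize` handshake, transports, resources, and prompts. The `add` tool is a hypothetical example.

```python
import json

# Hypothetical tool registry: name -> (description, JSON Schema for the inputs, handler).
TOOLS = {
    "add": (
        "Add two integers",
        {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
        lambda args: str(args["a"] + args["b"]),
    ),
}


def _error(req_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for the tools/list or tools/call method."""
    req = json.loads(raw)
    req_id, method, params = req.get("id"), req.get("method"), req.get("params", {})

    if method == "tools/list":
        # Advertise every registered tool with its name, description, and input schema.
        result = {
            "tools": [
                {"name": name, "description": desc, "inputSchema": schema}
                for name, (desc, schema, _) in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            # -32602 is JSON-RPC 2.0's "Invalid params" code.
            return json.dumps(_error(req_id, -32602, f"Unknown tool: {params.get('name')}"))
        _, _, run = tool
        text = run(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        # -32601 is JSON-RPC 2.0's "Method not found" code.
        return json.dumps(_error(req_id, -32601, f"Method not found: {method}"))

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


# Example: the handler does not care which MCP client sent the request.
request = {"jsonrpc": "2.0", "id": 7, "method": "tools/call",
           "params": {"name": "add", "arguments": {"a": 2, "b": 3}}}
print(handle(json.dumps(request)))
```

Adding a capability means adding one registry entry. Every connected client discovers it through `tools/list` without any client-side changes.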

Microsoft’s Curriculum: Mainstream Acceptance Signal

Microsoft launched “MCP for Beginners” in early 2026—a free, open-source curriculum with hands-on examples in .NET, Java, TypeScript, JavaScript, Rust, and Python. The 9-module course covers basics through advanced topics, with guided exercises for building MCP-enabled applications. Furthermore, it’s trending on GitHub with over 100 stars and active development. The course focuses on practical techniques: “Building modular, scalable, secure AI workflows from session setup to service orchestration.”

This isn’t a side project. Microsoft also pushed Azure Functions MCP support to General Availability in January 2026, supporting .NET, Java, JavaScript, Python, and TypeScript with a self-hosted deployment option. When Microsoft invests in education infrastructure, it’s betting on long-term adoption. It did the same with TypeScript, building training, tooling, and ecosystem support. MCP is getting the same treatment.

The signal is clear: MCP has moved beyond early adopter experimentation to mainstream developer education. Curricula like this reduce learning curves and create standardized knowledge bases. That’s how you go from 97 million downloads to true ubiquity.

2026: From Experimentation to Enterprise Production

2026 marks the shift from experimentation to enterprise-wide deployment. All three major cloud providers now offer MCP support, though at different stages of maturity. Microsoft’s Azure Functions MCP support went GA in January. AWS released the AWS API MCP Server in developer preview in July 2025, enabling natural language AWS API calls. Google Cloud launched a gRPC transport package for MCP in February, plus fully-managed remote MCP servers with global endpoints. Each cloud provider is competing on deployment ease and service integrations.

The enterprise adoption drivers are real. Gartner predicts 40% of enterprise applications will include AI agents by the end of 2026, up from less than 5% in 2025. The MCP market was projected to reach $1.8 billion in 2025. In December 2025, Anthropic donated MCP to the Agentic AI Foundation under the Linux Foundation, which it co-founded with Block and OpenAI, with backing from Google, Microsoft, AWS, Cloudflare, and Bloomberg. That donation removes single-vendor concerns: no one company controls MCP’s future.

Cloud provider support is a major step toward production readiness. When AWS, Azure, and GCP all build hosting infrastructure for your protocol, enterprises get the reliability, security, and compliance foundations they require. The standardization race is on.

Challenges Ahead: Adoption Doesn’t Mean Maturity

Despite explosive adoption, MCP faces real developer pain points. Early implementations show installation friction—developers describe it as a “Byzantine process” involving copying JSON blobs and hard-coding API keys. Furthermore, tool management breaks down at scale: accumulating dozens or hundreds of tools creates discovery and organization problems with no good solution. The protocol lacks built-in workflow orchestration, so clients must manually manage multi-step task sequencing, which gets brittle fast.
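Nothing in the protocol decides which of hundreds of tools an agent should see. One workaround is for the client to pre-filter the `tools/list` output before handing it to the model. The sketch below uses naive keyword overlap. The heuristic, the `select_tools` helper, and the sample tool names are all hypothetical, and a production client might prefer embeddings or curated tool groups.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric words; underscores are not matched, so snake_case names split into words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_tools(tools: list[dict], task: str, k: int = 5) -> list[dict]:
    """Keep at most k tools whose name or description shares words with the task (hypothetical heuristic)."""
    task_words = tokenize(task)
    scored = []
    for tool in tools:
        tool_words = tokenize(tool["name"] + " " + tool.get("description", ""))
        overlap = len(task_words & tool_words)
        if overlap:
            scored.append((overlap, tool["name"], tool))
    # Highest overlap first; ties broken alphabetically by name so results are stable.
    scored.sort(key=lambda entry: (-entry[0], entry[1]))
    return [tool for _, _, tool in scored[:k]]


# Hypothetical entries in the shape returned by a tools/list response.
catalog = [
    {"name": "query_database", "description": "Run a read-only SQL query"},
    {"name": "send_email", "description": "Send an email message"},
    {"name": "list_tables", "description": "List database tables"},
]
print([t["name"] for t in select_tools(catalog, "query the orders database", k=2)])
```

The filtering happens entirely in the client. The server still advertises its full catalog, and the client controls how much of it reaches the model's context.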

Context bloat is another issue. MCP servers can flood conversations with excessive information, exhausting token limits and making interactions unwieldy. Additionally, developers report tool invocation complexity—crafting “secret incantations” to trigger the right functionality, treating AI assistants more like command-line interfaces than intelligent agents. Agent portability remains a challenge: users want their agents available across environments without constant reconfiguration.
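One client-side mitigation for context bloat is to cap how much tool output reaches the model. This sketch trims the text items in a `tools/call` result to a shared character budget. Using characters as a stand-in for tokens is a simplifying assumption, and `trim_tool_result` is a hypothetical helper, not part of any MCP SDK.

```python
def trim_tool_result(result: dict, max_chars: int = 2000) -> dict:
    """Return a copy of a tools/call result whose text items share a budget of max_chars characters.

    Characters stand in for tokens here (a simplifying assumption); the omission
    marker itself is not counted against the budget.
    """
    budget = max_chars
    trimmed = []
    for item in result.get("content", []):
        if item.get("type") != "text":
            trimmed.append(item)  # non-text items (e.g. images) pass through unchanged
            continue
        text = item["text"]
        kept = text[:budget]
        budget -= len(kept)
        if len(kept) < len(text):
            kept += f"\n[... {len(text) - len(kept)} characters omitted]"
        trimmed.append({**item, "text": kept})
    return {**result, "content": trimmed}


# Hypothetical oversized result from a tool that dumps a whole table.
big = {"content": [{"type": "text", "text": "row\n" * 5000}], "isError": False}
small = trim_tool_result(big, max_chars=200)
print(small["content"][0]["text"][-40:])
```

The marker tells the model that data was cut, so it can request a narrower query instead of assuming it saw everything.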

These are the 2026 focus areas. MCP solved the integration problem, but workflow orchestration, tool management, and scaling challenges remain unsolved. Therefore, adoption doesn’t equal maturity. Developers need to understand current limitations before committing to production deployments. The protocol gives you a universal pipe, but not the orchestration logic.
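MCP carries individual calls, not plans, so any multi-step sequencing lives in client code. Here is a minimal sketch of that glue, assuming a `call_tool(name, arguments)` function that returns MCP-style `tools/call` results. The names `run_pipeline`, `find_customer`, and `list_orders` are hypothetical, and the fake tool caller stands in for a real MCP client session.

```python
from typing import Callable

# Assumed shape: call_tool(name, arguments) returns an MCP-style tools/call result dict.
ToolCaller = Callable[[str, dict], dict]


def first_text(result: dict) -> str:
    """Return the first text item in a tools/call result, or an empty string."""
    for item in result.get("content", []):
        if item.get("type") == "text":
            return item["text"]
    return ""


def run_pipeline(call_tool: ToolCaller, steps: list[tuple[str, Callable[[str], dict]]]) -> list[str]:
    """Run tool calls in order, building each step's arguments from the previous step's text.

    MCP has no notion of a multi-step plan, so this client-side loop owns the
    sequencing and stops at the first step that reports an error.
    """
    outputs: list[str] = []
    previous = ""
    for name, build_args in steps:
        result = call_tool(name, build_args(previous))
        if result.get("isError"):
            raise RuntimeError(f"step {name!r} failed: {first_text(result)}")
        previous = first_text(result)
        outputs.append(previous)
    return outputs


def fake_call_tool(name: str, args: dict) -> dict:
    """Stand-in for a real MCP client session; both tool names are hypothetical."""
    if name == "find_customer":
        return {"content": [{"type": "text", "text": "cust-17"}], "isError": False}
    if name == "list_orders":
        return {"content": [{"type": "text", "text": f"3 orders for {args['customer_id']}"}],
                "isError": False}
    return {"content": [{"type": "text", "text": f"unknown tool {name}"}], "isError": True}


print(run_pipeline(fake_call_tool, [
    ("find_customer", lambda _prev: {"email": "ada@example.com"}),
    ("list_orders", lambda prev: {"customer_id": prev}),
]))
```

Even this toy shows where the brittleness comes from. Each step's argument builder assumes the exact text format of the previous tool's output, and nothing in the protocol checks that assumption.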

Key Takeaways

- MCP hit 97 million monthly downloads in 14 months—unprecedented adoption for an AI infrastructure protocol

- Microsoft’s “MCP for Beginners” curriculum signals mainstream acceptance and long-term strategic commitment

- Cloud providers (AWS, Azure, GCP) all support MCP with production-grade hosting and enterprise features

- Standardization is real: write one MCP server, and it works with ChatGPT, Claude, Copilot, Cursor, and Gemini

- Challenges remain: Tool management at scale, workflow orchestration, and installation friction need solving

- For developers: Learn MCP fundamentals now. The Microsoft curriculum is free and covers six languages. This is infrastructure formation, not hype.