Addy Osmani’s agent-skills is an open-source project that reached 32,800 GitHub stars in its first three months and gained 3,058 stars in the last 24 hours alone. The timing isn’t coincidental. With 41% of all new code now AI-generated and teams seeing 30-41% increases in technical debt within six months of AI tool adoption, the project addresses the industry’s AI code quality crisis head-on. It provides 20 production-grade workflows that force AI coding agents to follow the same discipline senior engineers apply to reliable software (specs, tests, reviews, and verification) instead of taking shortcuts that create technical debt.

The AI Code Quality Crisis Nobody’s Talking About

The data is damning. 88% of developers report at least one negative impact from AI coding tools. Pull requests containing AI-assisted code have 1.7 times more issues than human-written code. And perhaps most concerning: 76% of developers admit to generating code they don’t fully understand.

This creates what the industry calls “comprehension debt”—code you can’t debug, extend, or refactor tomorrow because you never built a mental model of how it works. GitClear tracked an 8-fold increase in code duplication during 2024. Stack Overflow summarized it bluntly: “AI can 10x developers…in creating tech debt.”

Organizations report technical debt increases of 30-41% within six months of widespread AI tool adoption. Teams experience a productivity paradox: churning out boilerplate at record speed while spending equal or greater time untangling “almost correct” AI suggestions that fail in production.

Process Over Prose: How Agent Skills Actually Works

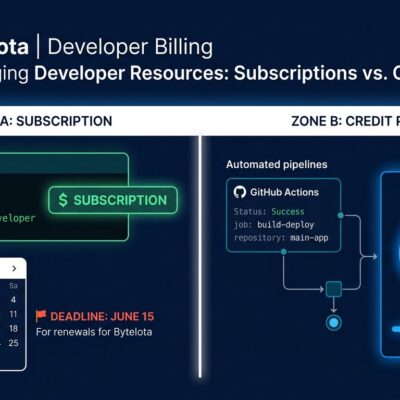

Agent Skills provides 20 structured workflows covering the full development lifecycle: Define → Plan → Build → Verify → Review → Ship. As Osmani explains: “Process over prose. Workflows over reference. Steps with exit criteria over essays without them.”

This isn’t documentation for agents to read—it’s executable workflows agents must follow. Each skill has explicit triggering conditions (slash commands like /spec, /test, /ship or automatic context detection), step-by-step processes, and anti-rationalization tables that prevent agents from skipping critical work.
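To make "steps with exit criteria" concrete, here is a minimal Python sketch of a gated workflow. The `Step` and `Skill` classes, the state keys, and the step names are hypothetical and chosen for illustration; this is not the actual file format agent-skills uses.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical model for illustration; not agent-skills' real format.
@dataclass
class Step:
    name: str
    # Must return True for the current state before the next step may start.
    exit_criterion: Callable[[dict], bool]


@dataclass
class Skill:
    name: str
    trigger: str  # e.g. a slash command such as "/spec"
    steps: list[Step]


def run_skill(skill: Skill, state: dict) -> list[str]:
    """Walk the steps in order and stop at the first unmet exit criterion."""
    completed = []
    for step in skill.steps:
        if not step.exit_criterion(state):
            break  # later steps are unreachable until this gate passes
        completed.append(step.name)
    return completed


# Assumed step breakdown for the spec workflow described in the article.
spec_skill = Skill(
    name="spec-driven-development",
    trigger="/spec",
    steps=[
        Step("write_spec", lambda s: bool(s.get("spec_written"))),
        Step("define_success_metrics", lambda s: bool(s.get("metrics"))),
        Step("get_approval", lambda s: s.get("approved") is True),
    ],
)
```

With only `{"spec_written": True}` in the state, `run_skill(spec_skill, ...)` returns `["write_spec"]`: the agent cannot reach approval, let alone coding, until the metrics gate passes.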

The spec-driven-development skill forces agents to write a Product Requirements Document with success metrics and stakeholder approval before coding. The test-driven-development skill enforces the Red-Green-Refactor cycle. The code-review-and-quality skill applies a five-axis evaluation framework (correctness, maintainability, security, performance, testing) with ~100-line PR sizing based on Google norms.
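As a rough illustration of how a five-axis review with a size limit could be checked mechanically, consider the Python sketch below. The function name, the dictionary input, and the treatment of 100 lines as a hard threshold are assumptions for the example, not the skill's real implementation.

```python
# The five axes named in the article.
REVIEW_AXES = ("correctness", "maintainability", "security", "performance", "testing")

# Approximate norm cited in the article; treated as a threshold here for simplicity.
MAX_PR_LINES = 100


def review_pr(changed_lines: int, axis_results: dict[str, bool]) -> list[str]:
    """Return blocking findings; an empty list means the PR passes the gate."""
    findings = []
    if changed_lines > MAX_PR_LINES:
        findings.append(f"PR too large: {changed_lines} lines (target ~{MAX_PR_LINES})")
    for axis in REVIEW_AXES:
        # An axis that was never evaluated counts as unsatisfied.
        if not axis_results.get(axis, False):
            findings.append(f"axis not satisfied: {axis}")
    return findings
```

Treating a missing axis as a failure is a deliberate choice in this sketch: it keeps a reviewer (human or agent) from passing the gate by simply not looking.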

Anti-rationalization tables explicitly block common shortcuts like “we can write tests later” or “we don’t need a spec for this small change.” These aren’t suggestions. They’re checkpoints agents can’t bypass.
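One way to picture an anti-rationalization table is as a lookup from known excuses to blocking responses. In the Python sketch below, the two excuses are quoted from the examples above, while the response wording and the `check_rationalization` helper are invented for illustration.

```python
from typing import Optional

# Excuses are quoted from the article; the responses are assumed wording.
ANTI_RATIONALIZATION = {
    "we can write tests later": "Blocked: write the failing test before the implementation.",
    "we don't need a spec for this small change": "Blocked: write a short spec before changing code.",
}


def check_rationalization(agent_message: str) -> Optional[str]:
    """Return the blocking response if the message contains a known excuse."""
    # Normalize case and curly apostrophes so simple substring matching works.
    normalized = agent_message.lower().replace("\u2019", "'")
    for excuse, response in ANTI_RATIONALIZATION.items():
        if excuse in normalized:
            return response
    return None
```

Substring matching is the simplest possible detector; how the real project recognizes a rationalization is not described in this article.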

Cross-Tool Compatibility and Getting Started

Agent Skills works with all major AI coding tools: Claude Code, Cursor, GitHub Copilot, Windsurf, and Gemini CLI. Installation is straightforward:

# Claude Code (recommended)

/plugin marketplace add addyosmani/agent-skills

/plugin install agent-skills@addy-agent-skills

# Cursor

git clone https://github.com/addyosmani/agent-skills.git

cp -r agent-skills/skills .cursor/rules/

# Gemini CLI

gemini skills install https://github.com/addyosmani/agent-skills.git --path skills

Once installed, skills activate via explicit commands (/spec starts spec-driven development) or automatically based on context (API design work triggers the api-and-interface-design skill). The MIT license means teams can customize and extend the skills for their own practices.
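The two activation paths, explicit command and context detection, can be sketched as a small routing function in Python. The `/spec` mapping and the api-and-interface-design skill name come from the description above; the keyword list and the `select_skill` function are hypothetical.

```python
from typing import Optional

# "/spec" -> spec-driven-development is stated in the article.
COMMANDS = {"/spec": "spec-driven-development"}

# Keyword lists are invented for illustration only.
CONTEXT_KEYWORDS = {
    "api-and-interface-design": ("api design", "endpoint", "interface"),
}


def select_skill(user_input: str) -> Optional[str]:
    """Pick a skill by explicit slash command first, then by context keywords."""
    text = user_input.strip().lower()
    first_word = text.split(maxsplit=1)[0] if text else ""
    if first_word in COMMANDS:
        return COMMANDS[first_word]  # explicit commands win over detection
    for skill, keywords in CONTEXT_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return skill
    return None
```

In this sketch, `select_skill("/spec add login flow")` returns `"spec-driven-development"`, and a request that mentions API design routes to `"api-and-interface-design"` without any command.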

Cross-tool compatibility means teams can standardize engineering practices regardless of which AI assistant individual developers prefer. A separate Skills Manager project provides unified skill management across multiple agents, enabling skill portability and team-wide consistency.

Google Engineering Culture, Now Accessible to Every Team

Agent Skills encodes specific practices from Google’s “Software Engineering at Google” book: Hyrum’s Law for API design, test pyramid principles, code review norms, Chesterton’s Fence for refactoring, trunk-based development, and the “Beyoncé Rule.”

These aren’t generic best practices; they’re battle-tested patterns from one of the world’s largest engineering organizations. Osmani’s background (Anthropic engineer, ex-Google Chrome DevRel) gives him direct access to this culture. Rather than inventing new practices, he’s making patterns already proven at scale accessible through AI agent workflows.

The code-review-and-quality skill enforces Google’s ~100-line PR sizing norm, backed by research showing that review thoroughness degrades with larger diffs. The test-driven-development skill implements a test pyramid structure that prevents the slow, flaky test suites common when AI agents write only E2E tests. The incremental-implementation skill promotes thin vertical slices with feature flags for progressive rollout.
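The test pyramid idea can be expressed as a simple shape check on test counts. This Python sketch assumes a three-layer split (unit, integration, E2E) and a strict ordering rule; both are simplifications for illustration, not the skill's actual criteria.

```python
def is_pyramid(unit: int, integration: int, e2e: int) -> bool:
    """True when each faster, lower layer has at least as many tests as the one above."""
    return unit > 0 and unit >= integration >= e2e


def pyramid_warning(unit: int, integration: int, e2e: int) -> str:
    """Describe the suite shape so an agent can be told what to add next."""
    if is_pyramid(unit, integration, e2e):
        return "ok"
    if unit == 0 and integration == 0 and e2e > 0:
        return "only E2E tests: add unit tests first"
    return "inverted or uneven pyramid: add tests at the lower layers"
```

A suite with 40 E2E tests and nothing underneath fails the check, which is the situation the paragraph above describes for agents that write only E2E tests.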

The Shift from Speed to Structure

The Hacker News discussion “Agents Need Control Flow, Not More Prompts” (230 points, 133 comments) reflects industry consensus: the problem isn’t that AI models lack capability—it’s that they lack discipline.

Junior engineers have the same issue: they can write code but skip the scaffolding that makes software reliable at scale. As Osmani frames it: “A senior engineer’s job is mostly the parts that don’t show up in the diff. Specs. Tests. Reviews. Scope discipline. AI coding agents skip all of it.”

The solution isn’t smarter models or better autocomplete—it’s explicit workflows with checkpoints and exit criteria that can’t be bypassed. Agent Skills signals industry maturation: developers want AI that works like senior engineers, not just AI that writes code fast.

The industry spent 2023-2024 chasing speed. But 2025-2026 revealed the cost: technical debt, comprehension debt, and quality issues. Teams realized that without structure, AI agents behave exactly like junior engineers who ship code that breaks at scale.

Key takeaways:

- AI code quality is a process problem, not a model problem

- Structure and discipline beat raw speed for production code

- Google’s engineering practices can scale to any team via agent workflows

- Install agent-skills today: /spec before coding, /code-simplify before PRs

- The future of AI coding tools isn’t more features; it’s better guardrails