FastAPI dominates Python API development with 4.5 million daily downloads and production deployments at OpenAI, Anthropic, and Microsoft. However, Litestar claims 2x faster performance using msgspec instead of Pydantic for serialization. For backend engineers choosing Python API frameworks in 2026, the question isn’t just “which is faster?”—it’s “when does performance matter enough to trade FastAPI’s mature ecosystem for raw speed?”

FastAPI’s reign as the default Python API framework faces its first credible performance challenger. The debate is no longer about features; it is about whether speed justifies leaving FastAPI’s ecosystem behind.

The Performance Gap: 2x Faster Isn’t Hype

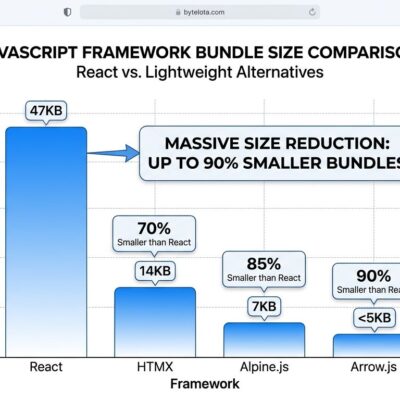

Litestar’s 2x performance advantage over FastAPI isn’t just marketing; it shows up in published benchmarks. The core difference lies in serialization: Litestar uses msgspec, which benchmarks show is 10-20x faster than Pydantic V2 and 150x faster than Pydantic V1. The exact ratio depends on payload shape: for simple data structures the measured gap is about 6x, and for nested structures it widens to 24x.
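Ratios like these vary with payload shape, so it is worth timing your own data before relying on published numbers. The sketch below is a minimal timing harness built only on the standard library. The `Order` payload and both encoders are hypothetical stand-ins, not the benchmark behind the figures above; to compare the real libraries you would register `msgspec.json.encode` and a Pydantic model’s `model_dump_json` as the candidates instead.

```python
import json
import timeit
from dataclasses import asdict, dataclass, field
from typing import Callable, Dict, List


@dataclass
class Order:
    """Hypothetical payload; replace with a shape taken from your own API."""
    id: int
    customer: str
    items: List[str] = field(default_factory=list)


def encode_with_asdict(order: Order) -> bytes:
    # Generic path: recursively convert the dataclass, then serialize.
    return json.dumps(asdict(order)).encode("utf-8")


def encode_by_hand(order: Order) -> bytes:
    # Specialized path: build the dict directly, skipping asdict's recursive copy.
    return json.dumps(
        {"id": order.id, "customer": order.customer, "items": order.items}
    ).encode("utf-8")


def seconds_per_call(
    encode: Callable[[Order], bytes],
    payload: Order,
    number: int = 20_000,
    repeat: int = 5,
) -> float:
    """Best-of-`repeat` average time per call, in seconds."""
    runs = timeit.repeat(lambda: encode(payload), number=number, repeat=repeat)
    return min(runs) / number


def compare(
    encoders: Dict[str, Callable[[Order], bytes]],
    payload: Order,
    number: int = 20_000,
) -> Dict[str, float]:
    """Time every encoder on the same payload."""
    return {name: seconds_per_call(fn, payload, number) for name, fn in encoders.items()}


if __name__ == "__main__":
    order = Order(id=1, customer="ada", items=["a", "b", "c"])
    results = compare({"asdict": encode_with_asdict, "by_hand": encode_by_hand}, order)
    baseline = results["asdict"]
    for name, secs in results.items():
        print(f"{name}: {secs * 1e6:.2f} us/call ({baseline / secs:.1f}x vs asdict)")
```

Taking the minimum over several repeats reduces noise from other processes. Run the comparison on both a flat and a deeply nested payload, since the gap reported above differs between the two.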

Real-world deployments point the same way: companies migrating from FastAPI to Litestar report 40-120% performance improvements in production. msgspec achieves its speed through aggressive no-copy optimization for strings and primitives, avoiding the overhead of creating new Python objects for data already in memory. Pydantic trades that speed for flexibility: custom validation, detailed error messages, and ecosystem integration with FastAPI, LangChain, and ORMs.

So when does this performance gap matter? High-throughput APIs handling thousands of concurrent requests see the difference immediately. For cost-sensitive deployments, doubling the throughput of each server means roughly half as many servers for the same load, provided that serialization and framework overhead, rather than the database or network, is the bottleneck. For latency-critical applications such as real-time systems, trading platforms, and gaming backends, the serialization overhead compounds across every request. FastAPI’s Pydantic works fine until it doesn’t, and at scale, that “fine” becomes expensive.
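How much of a serializer speedup reaches the server bill depends on how much of each request is actually spent serializing. The sketch below applies an Amdahl’s-law style estimate, which is a modeling assumption added here and not something measured in the benchmarks above. The function names, the 20-server fleet, and the 30% serialization share are all illustrative.

```python
import math


def overall_speedup(serialization_share: float, serializer_speedup: float) -> float:
    """End-to-end speedup when only the serialization part of a request gets faster.

    serialization_share: fraction of request time spent serializing (0.0 to 1.0).
    serializer_speedup: how many times faster the new serializer is.
    """
    if not 0.0 <= serialization_share <= 1.0:
        raise ValueError("serialization_share must be between 0 and 1")
    if serializer_speedup <= 0:
        raise ValueError("serializer_speedup must be positive")
    untouched = 1.0 - serialization_share
    accelerated = serialization_share / serializer_speedup
    return 1.0 / (untouched + accelerated)


def servers_needed(current_servers: int, speedup: float) -> int:
    """Servers required for the same load, assuming capacity scales with speedup."""
    return math.ceil(current_servers / speedup)


if __name__ == "__main__":
    # A true 2x end-to-end gain halves a hypothetical 20-server fleet.
    print(servers_needed(20, 2.0))  # 10

    # A 10x faster serializer when serialization is 30% of request time.
    gain = overall_speedup(0.30, 10.0)
    print(round(gain, 2), servers_needed(20, gain))  # 1.37 15
```

Under this model, a 10x faster serializer produces a 2x end-to-end gain only when serialization accounts for roughly 56% of request time. Profile a representative endpoint to find your own share before projecting savings.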

FastAPI’s Moat: The Ecosystem

FastAPI’s dominance isn’t accidental. With 70,000 GitHub stars and 4.5 million daily downloads, it’s the default choice for Python API frameworks. OpenAI, Microsoft, Netflix, and Uber run FastAPI in production. The ecosystem is massive: authentication libraries, database integrations, monitoring tools, and thousands of community packages. FastAPI’s automated OpenAPI documentation generation saves hours of manual work, and its intuitive design patterns let teams “ship production APIs rapidly with minimal configuration overhead.”

Pydantic’s side of the trade-off matters here. Its custom validation logic, cleaner developer experience, and better error messages make debugging faster. The ecosystem advantage also extends beyond code: FastAPI has abundant learning resources, active community support, and battle-tested patterns for common problems. When you hit a FastAPI issue, someone has probably already solved it. With Litestar, you might be the first.

Litestar’s Case: Built-In, Not Bolted-On

Litestar takes a different approach: include enterprise features out-of-the-box instead of relying on external dependencies. While FastAPI leans on third-party packages for most of this, Litestar bundles first-class SQLAlchemy support, client/server-side sessions, response caching, and OpenTelemetry integration. Built-in middlewares handle rate-limiting, CORS, CSRF, compression, and logging without third-party packages, and WebSocket support includes automatic data validation and serialization.

The Litestar documentation makes this explicit: “Unlike frameworks such as FastAPI, Starlette, or Flask, Litestar includes many functionalities out of the box needed for a typical modern web application, such as ORM integration, client- and server-side sessions, caching, and OpenTelemetry integration.” Fewer external dependencies mean faster setup, fewer version conflicts, and tighter integration. For teams that need these features, Litestar eliminates the “glue code” problem.

Litestar’s layered architecture and grouping constructs also offer flexibility that FastAPI’s more opinionated structure doesn’t. It supports dataclasses, Pydantic, msgspec, attrs, and custom integrations, so you can choose a serialization strategy per use case. That flexibility matters for teams with varied performance requirements across different endpoints.

Litestar vs FastAPI: Which Should You Choose?

This framework comparison isn’t “which is better” but “which fits your use case.” FastAPI and Litestar optimize for different priorities: ecosystem maturity vs raw performance, developer productivity vs server costs.

Choose FastAPI when you’re building MVPs or prototypes where development speed matters more than runtime performance. If your project needs extensive third-party integrations—authentication providers, database ORMs, monitoring services—FastAPI’s ecosystem saves weeks of work. Additionally, for teams new to async Python or projects where community support and learning resources matter, FastAPI’s maturity is worth the performance trade-off.

In contrast, choose Litestar when your APIs handle thousands of concurrent requests where serialization overhead compounds. For resource-constrained environments, such as startups optimizing cloud costs or edge deployments with limited resources, a 2x throughput gain can cut infrastructure spend by up to 50% when the framework is the bottleneck. If you’re building performance-critical applications where latency impacts business metrics, Litestar’s speed advantage is hard to pass up. Teams willing to build some integrations from scratch in exchange for built-in enterprise features and raw speed benefit most.

Migration between the frameworks is relatively straightforward because both are ASGI frameworks built on similar principles. The official migration guide covers key differences: HTTPException syntax changes, cookie handling patterns, and dependency injection adjustments. A pattern is emerging in the industry: “FastAPI for general use, Litestar for performance-critical applications.” It mirrors the Node.js/Express vs Go/Fiber trade-offs in other ecosystems.

Key Takeaways

- Litestar is 2x faster than FastAPI, driven by msgspec’s 10-20x serialization advantage over Pydantic V2

- FastAPI’s massive ecosystem (4.5M daily downloads, OpenAI/Microsoft/Netflix adoption) provides significant developer productivity advantages

- Choose FastAPI for rapid prototyping, extensive integrations, and ecosystem maturity

- Choose Litestar for high-throughput APIs, performance-critical applications, and cost optimization

- Migration is relatively straightforward: both are ASGI frameworks with similar principles, and an official migration guide covers the key differences

Performance vs Productivity: The Trade-Off Becomes Explicit

FastAPI’s dominance in Python API development isn’t threatened by Litestar’s performance advantage alone. The 4.5 million daily downloads, production deployments at OpenAI and Microsoft, and massive ecosystem provide a moat that raw speed can’t breach. However, Litestar proves that you don’t have to sacrifice enterprise features for performance—it’s possible to have both.

For most projects, FastAPI remains the right choice. Its ecosystem maturity, extensive integrations, and battle-tested stability outweigh the performance gap. For high-throughput APIs, cost-sensitive deployments, or latency-critical applications, however, Litestar’s 2x performance advantage makes it worth considering. The choice depends on whether your bottleneck is development speed or runtime performance.

The emergence of Litestar forces a useful rethinking of framework selection criteria. Performance isn’t just a nice-to-have; it’s a business decision. When server costs matter, when response times impact conversion rates, when scale compounds inefficiencies, Litestar’s speed advantage becomes strategic. The question isn’t whether Litestar will dethrone FastAPI; it won’t. The question is whether your use case is one of the growing number where performance justifies the trade-off.