The Standard Performance Evaluation Corporation released SPEC CPU 2026 on May 5, 2026, the first new version of its CPU benchmark suite in nine years. This matters because cloud instance types, server purchase decisions, and infrastructure investments routinely lean on these vendor-neutral metrics. When AWS markets Graviton 4 as offering “30% better price/performance,” when Intel claims Panther Lake beats AMD Bergamo by 20%, when your infrastructure team debates spending $10 million on a server refresh, SPEC CPU results are the common yardstick for checking those claims. After nine years, the benchmarks have finally caught up with reality.

29 New Benchmarks for Modern Workloads

SPEC CPU 2017 benchmarks used applications from the 2015-2016 era. They missed modern workloads entirely: no AI inference, no Python data science pipelines, no cloud-native patterns. The new suite brings 29 brand-new applications plus 14 updated from 2017, which together yield 52 benchmarks and 16.7 million lines of source code, more than double the 7.1 million in SPEC CPU 2017.

The new benchmarks include an LLVM optimizing compiler (reflecting modern toolchains everyone actually uses), a Python interpreter (critical for data science and machine learning workflows), a neural machine translation benchmark (AI inference workloads), the Stockfish chess engine (parallel search algorithms), a solar coronal magnetic field modeler (scientific computing), and a computer architecture simulator (hardware design validation). This is not incremental tweaking. Most benchmarks from the previous version were dropped entirely.

The technical upgrades run deep. Language standards moved from C99 to C18, C++03 to C++17, and Fortran 2003 to Fortran 2018. Memory requirements quadrupled from 16GB to 64GB to handle modern high-density workloads. Parallelism support improved dramatically: in SPEC CPU 2017, only one of the ten integer speed benchmarks was multithreaded. SPEC CPU 2026 has substantially more parallel content, reflecting the reality that nobody builds single-core server CPUs anymore.

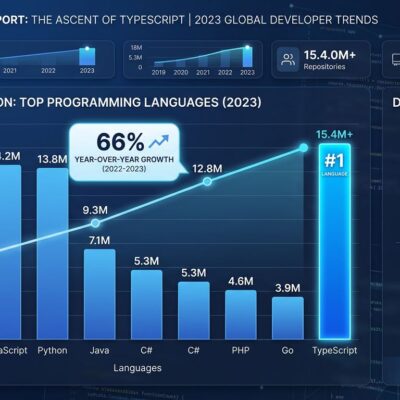

The practical impact is immediate. Cloud providers like AWS (Graviton 4), Microsoft (Cobalt), and Google (Axion) can now compare ARM server processors against x86 on fairer terms. Python-heavy workloads that dominate modern data pipelines and ML inference are finally represented. Modern compilers like LLVM 17 and GCC 13 get measured on modern code instead of two-decade-old C++03 patterns.

Why 9 Years: CPU Architecture Evolved Completely

The 2017 baseline was Intel Skylake (monolithic dies with a conventional DDR4 memory hierarchy), AMD Zen 1 (early chiplet experiments), and ARM Cortex-A75 (still primarily mobile-focused). The 2026 reality looks nothing like that world.

Chiplet architectures are now standard: AMD’s Zen 6 with 3D V-Cache stacking, Intel’s Foveros 3D packaging. Hybrid cores are mainstream: Intel P+E core designs, ARM big.LITTLE configurations now in servers, not just phones. AI acceleration is built into most new CPUs: NPUs, matrix engines, vector extensions. Memory technology leaped forward: DDR5, CXL interconnects, HBM for bandwidth-hungry applications. ARM servers went from experimental to production: AWS Graviton 4, Microsoft Cobalt, and Google Axion now power significant portions of cloud infrastructure.

The old benchmarks couldn’t measure any of this. They couldn’t stress diverse execution units across P+E cores or AI accelerators. They missed modern memory hierarchies with CXL interconnects and HBM. They used C++03 patterns when modern code relies on C++11 and C++17 concurrency features. And their largely single-threaded focus said little about multi-die chiplet designs, where inter-die communication matters as much as core speed.

SPEC has released a new CPU suite roughly once a decade lately: 2006, 2017, now 2026. Development takes years because the committee evaluated over 70 candidate applications before selecting the 38 that became the final 52 benchmarks. The committee includes AMD, Intel, Arm, and SiFive, a rare consensus in a competitive industry. That consensus matters: no single vendor can tilt the rules in its favor when all major players must agree on them.

Real-World Infrastructure Decisions

Cloud providers lean on SPEC CPU results when positioning instance types and pricing. Claims of “30% better price/performance” for AWS c7g (Graviton3, ARM) over c7i (Intel Sapphire Rapids, x86) are checked against these benchmarks. Fair ARM versus x86 comparison drives the economics of Graviton, Cobalt, and Axion adoption. If the benchmarks unfairly favor legacy x86 optimizations, AWS can’t justify its ARM pricing. SPEC CPU 2026 levels that playing field.
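
The arithmetic behind a “30% better price/performance” claim is simple once you have a score and a price for each instance. The following is a minimal sketch, assuming price/performance means benchmark score divided by hourly price. The instance names, scores, and prices are hypothetical placeholders, not SPEC CPU 2026 results or AWS list prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """An instance type with a benchmark score and an hourly price.

    The names and figures used with this class below are hypothetical
    placeholders, not published SPEC CPU 2026 results or real cloud prices.
    """

    name: str
    score: float           # SPEC-style score, higher is better (assumed input)
    price_per_hour: float  # on-demand price in USD per hour (assumed input)

    @property
    def score_per_dollar(self) -> float:
        """Benchmark score delivered per dollar of hourly price."""
        return self.score / self.price_per_hour


def price_performance_gain(candidate: Instance, baseline: Instance) -> float:
    """Fractional price/performance advantage of candidate over baseline.

    0.30 means the candidate delivers 30% more score per dollar.
    """
    return candidate.score_per_dollar / baseline.score_per_dollar - 1.0


if __name__ == "__main__":
    # Hypothetical numbers: the x86 instance scores higher but costs more.
    arm = Instance("arm-example", score=200.0, price_per_hour=1.00)
    x86 = Instance("x86-example", score=220.0, price_per_hour=1.43)
    gain = price_performance_gain(arm, x86)
    print(f"{arm.name} vs {x86.name}: {gain:+.0%} price/performance")
```

With these made-up inputs, the ARM instance comes out about 30% ahead even though the x86 instance posts the higher raw score. The same numbers also mean roughly 23% lower cost per unit of score (1 − 1/1.30), so it is worth checking which framing a marketing claim uses.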

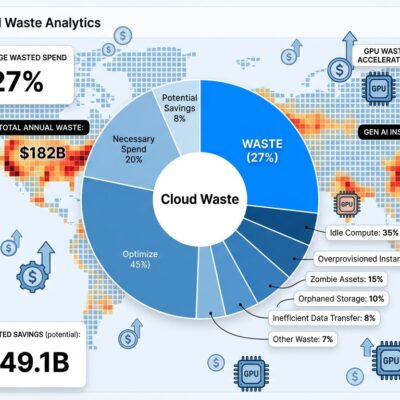

Infrastructure teams make server procurement decisions: should we buy AMD Bergamo with 128 cores or Intel Emerald Rapids with 64 cores? That’s a multi-million-dollar question. Cost optimization matters when there’s over $100 billion in cloud waste in 2026; accurate benchmarks help prevent overprovisioning. Migration validation requires evidence before moving production workloads to ARM, and SPEC CPU provides a vendor-neutral starting point.

Chip vendors launch products citing these scores. AMD Zen 6, Intel Panther Lake, and ARM Neoverse V4 announcements will reference SPEC CPU 2026 benchmarks. Competitive claims like “20% faster than competitor on SPEC 2026 Integer” carry weight because the metrics are vendor-neutral and trustworthy. Architecture teams validate that chiplet designs deliver expected performance before committing to production.

Developers choose instance types: which AWS, Azure, or GCP instance runs Python applications fastest per dollar? Compiler teams validate that GCC or LLVM optimization flags actually improve SPEC CPU 2026 scores. Performance testing catches regressions: did this code change hurt CPU performance? The benchmarks provide the baseline.
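
Checking per-benchmark results rather than only the composite score makes a regression in one workload harder to miss. The following is a minimal sketch, assuming you already have a ratio per benchmark (higher is better) from runs before and after a change. The benchmark names, the numbers, and the 2% threshold are hypothetical placeholders.

```python
def find_regressions(
    before: dict[str, float],
    after: dict[str, float],
    threshold: float = 0.02,
) -> dict[str, float]:
    """Return benchmarks whose ratio fell by more than `threshold`.

    `before` and `after` map a benchmark name to its ratio (higher is
    better). Values in the result are fractional changes, e.g. -0.05 for
    a 5% drop. Benchmarks missing from `after` are skipped.
    """
    regressions: dict[str, float] = {}
    for name, old_ratio in before.items():
        new_ratio = after.get(name)
        if new_ratio is None:
            continue  # not run in the new build; handle separately if needed
        change = new_ratio / old_ratio - 1.0
        if change < -threshold:
            regressions[name] = change
    return regressions


if __name__ == "__main__":
    # Hypothetical per-benchmark ratios before and after a code change.
    baseline = {"compiler": 10.0, "interpreter": 8.0, "chess": 12.0}
    candidate = {"compiler": 10.1, "interpreter": 7.4, "chess": 12.0}
    for name, change in find_regressions(baseline, candidate).items():
        print(f"REGRESSION {name}: {change:.1%}")
```

The threshold should sit above the run-to-run noise you measure on your own hardware. Otherwise, ordinary variation gets flagged as a regression.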

The cost impact is substantial. Organizations spend billions on cloud instances, and accurate benchmarks can save 10-20% through better resource matching. Enterprises making $10 million server refresh decisions rely on vendor-neutral validation. Developers choosing optimal instances save 30-40% on monthly compute costs compared to blindly picking the biggest instance.
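
Those savings come from matching capacity to need rather than defaulting to the largest instance. The following is a minimal sketch, assuming a benchmark score is a reasonable proxy for your workload's throughput, which you should verify with your own application benchmarks. The catalog entries, prices, and the 730-hour month are hypothetical placeholders or approximations.

```python
from dataclasses import dataclass
from typing import Optional

HOURS_PER_MONTH = 730  # common approximation: 8,760 hours / 12 months


@dataclass(frozen=True)
class Offer:
    name: str
    score: float           # benchmark score, assumed to track your workload
    price_per_hour: float  # USD per hour


def cheapest_meeting(offers: list[Offer], required_score: float) -> Optional[Offer]:
    """Cheapest offer whose score meets the requirement, or None if none does."""
    eligible = [o for o in offers if o.score >= required_score]
    return min(eligible, key=lambda o: o.price_per_hour, default=None)


def monthly_cost(offer: Offer) -> float:
    """Approximate monthly cost of running one instance continuously."""
    return offer.price_per_hour * HOURS_PER_MONTH


if __name__ == "__main__":
    # Hypothetical catalog; none of these are real instance types or prices.
    offers = [
        Offer("small", score=60.0, price_per_hour=0.40),
        Offer("medium", score=115.0, price_per_hour=0.80),
        Offer("large", score=220.0, price_per_hour=1.20),
    ]
    chosen = cheapest_meeting(offers, required_score=100.0)
    biggest = max(offers, key=lambda o: o.score)
    if chosen is not None:
        saving = 1.0 - monthly_cost(chosen) / monthly_cost(biggest)
        print(f"pick {chosen.name}: {saving:.0%} cheaper per month than {biggest.name}")
```

The savings figure depends entirely on the inputs. The point is the selection rule: filter by the required score first, then minimize price.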

Pricing and Availability

SPEC CPU 2026 launched May 5, 2026 and is available now for immediate download. Pricing runs $3,000 for new commercial customers, $2,000 for SPEC CPU 2017 upgrades (discount ends November 3, 2026), $750 for non-profit organizations, and free for accredited academic institutions (requires professor or staff request, not students).

The $3,000 barrier excludes startups and independent developers, which is controversial. But free academic access means the research community can validate vendor claims independently. Vendor results are published on the SPEC website, so anyone can review them without buying the suite. The ROI calculation is straightforward: one wrong server purchase costing $100,000 or more far exceeds the $3,000 benchmark cost.

The package includes full source code for all 52 benchmarks, run rules, documentation, FAQ, technical support from SPEC, and optional energy efficiency measurement tools. Buyers get the right to publish results publicly, which matters for competitive vendor comparisons.

What SPEC CPU Doesn’t Measure

SPEC CPU 2026 doesn’t measure GPU performance (covered by the separate SPEC ACCEL suite), your own applications (the benchmarks are derived from real programs but run fixed inputs), mixed workloads (like database plus web server plus background jobs), operating system overhead (Linux scheduler, Windows kernel), web workloads (JavaScript engines, browser rendering), or storage and network I/O (intentionally minimized to isolate CPU performance).

Benchmark gaming remains a concern. Vendors can tune compilers specifically for SPEC benchmarks instead of real-world code. The nine-year gap between releases means technology moves faster than the standard. Vendor influence is inherent: AMD, Intel, and Arm sit on the committee, creating potential conflicts of interest. Single-metric scoring oversimplifies complex tradeoffs between latency and throughput.
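
SPEC CPU 2017 scores each benchmark as a ratio of a reference machine's run time to the measured run time (rate metrics also multiply by the number of copies run). It then summarizes the suite with the geometric mean of those ratios. Assuming SPEC CPU 2026 keeps that approach, the sketch below shows how two very different CPUs can land on the same single number. The benchmark names and ratios are hypothetical.

```python
from statistics import geometric_mean


def ratio(reference_seconds: float, measured_seconds: float) -> float:
    """Speed ratio against a reference machine: >1 means faster than reference."""
    return reference_seconds / measured_seconds


def composite(ratios: dict[str, float]) -> float:
    """Single summary score: geometric mean of per-benchmark ratios."""
    return geometric_mean(list(ratios.values()))


if __name__ == "__main__":
    # Hypothetical ratios for two CPUs with very different profiles.
    balanced = {"compiler": 10.0, "interpreter": 10.0, "chess": 10.0, "physics": 10.0}
    lopsided = {"compiler": 20.0, "interpreter": 20.0, "chess": 5.0, "physics": 5.0}
    for label, ratios in (("balanced", balanced), ("lopsided", lopsided)):
        print(f"{label}: composite {composite(ratios):.2f}, "
              f"min {min(ratios.values()):.1f}, max {max(ratios.values()):.1f}")
```

Both profiles score 10.0 overall, yet the lopsided CPU is twice as fast as the balanced one on half the workloads and half as fast on the rest. When your workload resembles only part of the suite, the per-benchmark results tell you more than the headline number.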

These limitations are known and acknowledged. SPEC CPU measures CPU performance, not total system performance. It provides a standardized comparison point, not the complete picture. Infrastructure teams should use SPEC CPU alongside application-specific benchmarks, not as the sole decision factor.

What’s Next

Vendor results will flood in over the coming months. AMD Zen 6, Intel Panther Lake, and ARM Neoverse V4 launches will cite SPEC CPU 2026 scores. Cloud providers will update marketing materials with Graviton 4 versus x86 comparisons. Academic research will validate new architectures. By late 2026, SPEC CPU 2026 will be the industry standard, and SPEC CPU 2017 scores will fade into historical archives.

The next iteration, presumably SPEC CPU 2036, might include quantum-classical hybrid workloads, photonic computing benchmarks, neuromorphic processor tests, and edge AI inference patterns. For now, SPEC CPU 2026 defines the standard for the next decade of CPU performance measurement.

The benchmarks are available now. Download them, run them, validate vendor claims yourself. Or keep trusting marketing slides – your infrastructure budget will reflect that choice.