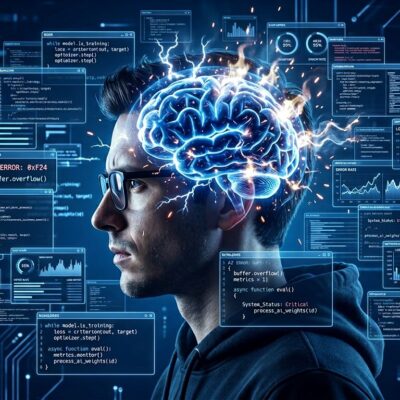

Stack Overflow’s 2025 Developer Survey reveals a critical paradox in AI tool adoption: while 84% of developers use or plan to use AI coding tools, 46% actively distrust their accuracy, far outnumbering the 33% who trust them. Only 3% highly trust AI outputs, and that figure drops to 2.6% among experienced developers. Positive sentiment has fallen from over 70% in 2023 and 2024 to just 60% in 2025, exposing a trust crisis that threatens the AI tooling market despite record adoption rates. The message is clear: developers are using tools they don’t trust.

The “Almost Right” Crisis: Why 66% Are Frustrated

Two-thirds of developers cite “AI solutions that are almost right, but not quite” as their biggest frustration. This isn’t a minor inconvenience; it’s a productivity killer. Partially correct code feeds directly into the second-biggest complaint: 45% report that debugging AI-generated code consumes excessive time. The time paradox is real: some developers report that tasks that once took five hours now take seven to eight hours with AI assistance, once debugging overhead is counted.

Production data validates the frustration. One industry study reports that 43% of AI-generated code changes require manual debugging in production, even after passing QA and staging tests. Newer LLMs can generate code that fails to perform as intended yet appears to run successfully, producing silent failures by removing safety checks or by generating fake output that matches the expected format. AI-generated code also shows three times as many readability issues as human-written code, 2.66 times as many formatting problems, and twice as many naming inconsistencies.

“Almost right” code is worse than obviously wrong code, because obviously wrong code fails fast and gets fixed. Syntax correctness doesn’t equal functional correctness. AI systematically omits edge cases (null checks, boundary conditions, error handling) while appearing to work perfectly. This “confidence trap” can waste more developer time than the AI saves, which explains why productivity claims like “55% faster coding” don’t match the reality of tasks taking longer overall.
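To make the “almost right” pattern concrete, here is a minimal Python sketch. The function names and the latency values are hypothetical, chosen only to illustrate the point: the first version is the kind of plausible happy-path code an assistant might produce, while the second handles the empty-input and missing-value edge cases explicitly.

```python
from typing import Optional, Sequence


def mean_almost_right(values: Sequence[float]) -> float:
    # Plausible happy-path code: correct for typical input,
    # but raises ZeroDivisionError when `values` is empty.
    return sum(values) / len(values)


def mean_checked(values: Sequence[Optional[float]]) -> Optional[float]:
    # Same calculation with edge cases handled explicitly:
    # missing readings (None) are skipped, and an empty input
    # returns None instead of crashing.
    present = [v for v in values if v is not None]
    if not present:
        return None
    return sum(present) / len(present)


if __name__ == "__main__":
    print(mean_almost_right([120.0, 80.0, 100.0]))  # 100.0
    print(mean_checked([120.0, None, 80.0]))        # 100.0
    print(mean_checked([]))                         # None
    try:
        mean_almost_right([])
    except ZeroDivisionError:
        print("almost-right version crashes on empty input")
```

Both versions agree on typical data, which is exactly why a quick glance or a single happy-path test would not tell them apart.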

Trust Collapse: From Hype to Skepticism in Two Years

The trust trajectory tells a sobering story. Positive sentiment toward AI coding tools dropped from over 70% in 2023-2024 to just 60% in 2025. Trust in AI code accuracy fell from 40% to 29% in one year. Most alarmingly, zero percent of engineering leaders describe themselves as “very confident” that AI-generated code will behave correctly when deployed. Read that again: zero percent.

The experience divide shows up at both ends of the scale. Only 2.6% of experienced developers highly trust AI outputs, versus 3% overall, and 20% of experienced developers highly distrust them, the highest rate of any group. Separately, 20% of all developers report declining confidence in their own problem-solving abilities due to AI over-reliance. Trust now lags adoption by 51 percentage points: 84% use or plan to use AI, but only 33% trust it.

This isn’t a temporary skepticism phase. When engineering leaders who approve budgets have zero confidence in AI code reliability, and when experienced developers who mentor juniors distrust AI at higher rates, the foundation for sustainable AI tool adoption crumbles. Enterprises can’t build long-term development workflows on tools their senior engineers don’t trust. The trust deficit isn’t a perception problem—it’s a structural crisis backed by production failure data.

The Enterprise Productivity Paradox

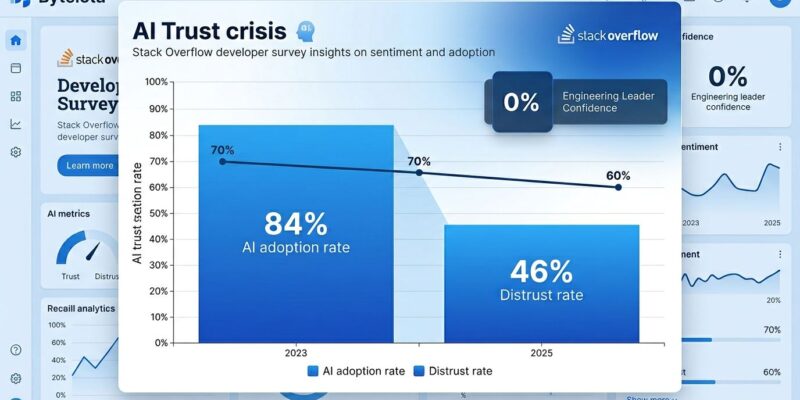

GitHub Copilot reached 4.7 million paid subscribers and 90% Fortune 100 adoption. The vendor claims are impressive: 55% faster coding, a 75% reduction in pull request time (from 9.6 to 2.4 days), and 3.6 hours saved per developer per week. Successful builds increased 84%. ROI arrives within three to six months. These numbers look great in enterprise reports.

However, these productivity figures measure code generation speed, not total task completion time including debugging. Ninety percent of developers commit AI-suggested code, retaining 88% of the suggested characters, but the downstream debugging costs are substantial. The gap between “code written” and “code shipped” is widening: code written fast isn’t code shipped fast when debugging consumes the time savings.

This is productivity theater. Enterprises making billion-dollar AI tool investments based on early productivity metrics may be overlooking the trust crisis emerging as developers spend more and more time validating and fixing AI output. The downstream costs aren’t captured in the headline numbers. When zero percent of engineering leaders feel “very confident” in AI code reliability despite 90% Fortune 100 adoption, the disconnect between vendor claims and developer experience reveals the paradox.
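The arithmetic behind the gap between “code written” and “code shipped” is easy to sketch. In the Python example below, the 9.6-to-2.4-day pull request figures come from the vendor claims above; the split between writing and debugging hours is a hypothetical illustration, loosely echoing the five-hours-versus-seven-to-eight-hours reports, not survey data.

```python
from dataclasses import dataclass


@dataclass
class TaskTime:
    """Hours spent on one task, split into writing and debugging."""
    writing_hours: float
    debugging_hours: float

    @property
    def total(self) -> float:
        return self.writing_hours + self.debugging_hours


def percent_change(before: float, after: float) -> float:
    """Signed percentage change; negative means a reduction."""
    return (after - before) / before * 100


# Vendor-style metric: pull request time from 9.6 to 2.4 days.
print(round(percent_change(9.6, 2.4), 1))  # -75.0

# Hypothetical split for a single task (assumed numbers, not survey data).
manual = TaskTime(writing_hours=4.0, debugging_hours=1.0)    # 5.0 hours total
assisted = TaskTime(writing_hours=1.5, debugging_hours=6.0)  # 7.5 hours total

print(percent_change(manual.writing_hours, assisted.writing_hours))  # -62.5
print(percent_change(manual.total, assisted.total))                  # 50.0
```

Under these assumed numbers, writing time drops 62.5% while total task time rises 50%, which is the kind of result a metric that counts only generation speed would never show.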

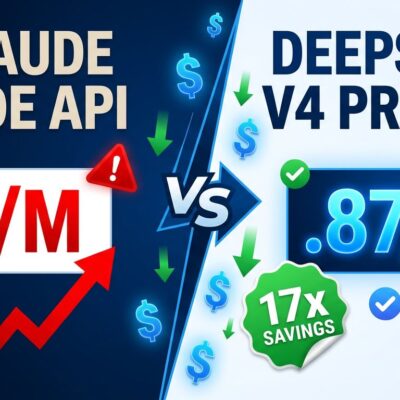

Why AI Code Fails: API Hallucination and Edge Case Blindness

AI coding tools exhibit three consistent technical failure patterns. First, API hallucination: LLMs confidently reference nonexistent methods and invent function signatures that match familiar patterns, and the mistake often surfaces only at runtime. Second, edge case blindness: AI systematically omits null checks, boundary conditions, and error handling. Third, “happy path bias”: code works under ideal conditions but fails on the messier inputs and states that production delivers.
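API hallucination is easiest to see in a dynamic language. In this hypothetical Python sketch, the suggested code calls `line.contains(...)`, which looks plausible because pandas offers a similarly named `Series.str.contains`, but Python’s built-in `str` has no `contains` method. Nothing fails when the function is defined, and a test that only passes an empty list still succeeds; the `AttributeError` appears only when real data reaches that line.

```python
def count_errors_hallucinated(lines: list[str]) -> int:
    # Hypothetical AI suggestion: built-in str has no `contains` method,
    # so this raises AttributeError, but only once a line is actually checked.
    return sum(1 for line in lines if line.contains("ERROR"))


def count_errors(lines: list[str]) -> int:
    # Working version: substring membership uses the `in` operator.
    return sum(1 for line in lines if "ERROR" in line)


logs = ["ERROR disk full", "ok", "ERROR timeout"]
print(count_errors(logs))             # 2
print(count_errors_hallucinated([]))  # 0: an empty-input test passes
try:
    count_errors_hallucinated(logs)
except AttributeError as exc:
    print(f"runtime failure: {exc}")
```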

Recent LLMs generate code that “fails to perform as intended but on the surface seems to run successfully, avoiding syntax errors or obvious crashes by removing safety checks.” This helps explain why AI code can pass CI/CD pipelines yet fail in production: test suites that exercise only the happy path catch syntax errors and crashes, but not the logic flaws AI introduces. The 43% production debugging rate isn’t an anomaly; it’s a symptom of AI optimizing for apparent correctness over actual correctness.
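The “removing safety checks” failure mode often looks like a broad exception handler that turns bad input into well-formatted output. The following Python sketch is hypothetical (function names and inputs are illustrative only): both versions pass the same happy-path check, but only the strict version surfaces invalid data instead of returning a plausible fake total.

```python
def format_total_silent(amounts: list[str]) -> str:
    # Hypothetical "almost right" version: the broad except swallows any
    # bad value and returns output in the expected format anyway.
    try:
        return f"{sum(float(a) for a in amounts):.2f}"
    except Exception:
        return "0.00"


def format_total_strict(amounts: list[str]) -> str:
    # Keeps the safety check: invalid input raises with context instead of
    # being disguised as a plausible total.
    total = 0.0
    for i, a in enumerate(amounts):
        try:
            total += float(a)
        except (TypeError, ValueError) as exc:
            raise ValueError(f"invalid amount at index {i}: {a!r}") from exc
    return f"{total:.2f}"


# Happy-path check: both versions pass.
assert format_total_silent(["10.25", "20.25"]) == "30.50"
assert format_total_strict(["10.25", "20.25"]) == "30.50"

# Bad input: one version fabricates a result, the other fails loudly.
print(format_total_silent(["10.25", "N/A"]))  # 0.00
try:
    format_total_strict(["10.25", "N/A"])
except ValueError as exc:
    print(exc)  # invalid amount at index 1: 'N/A'
```

A pipeline that runs only the happy-path assertions would mark both functions green; only an edge-case input reveals that the silent version reports a total of zero for data it could not read.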

Understanding these failure modes helps developers decide when to rely on AI and when to avoid it. Use AI for boilerplate code and scaffolding where edge cases are minimal. Avoid it for critical logic, security-sensitive code, and error handling, where edge cases matter most. The “happy path bias” is particularly insidious because it produces code that appears to work during development but fails under real-world conditions. This explains why developers double- and triple-check all AI-generated code despite time pressure.

What This Means for Developers

The survey data points to strategic AI use: boilerplate generation, test writing, and API exploration are low-risk wins. Features and refactoring require human review. Critical business logic, security code, and architectural decisions are best kept away from AI entirely. The workflow has shifted from “generate and ship” to “generate, review, test, debug.” “Trust but verify” has become “verify more than you trust.”

From developer discussions: “Use AI for boilerplate, hand-code architecture.” “Write tests first, then let AI implement—tests catch AI errors.” “Copilot feels like an incompetent colleague; Cursor feels like an overly eager junior refactoring everything.” The consensus is clear: AI accelerates initial code generation but requires constant human oversight. The time saved writing code gets spent debugging it.
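The “write tests first” advice can be sketched with Python’s standard `unittest` module. The `parse_port` function and its rules (ports 1 through 65535, surrounding whitespace allowed) are hypothetical; the idea is that a human writes the edge-case tests before asking an assistant for an implementation, then treats the generated code as a candidate that must pass them.

```python
import unittest


def parse_port(value: str) -> int:
    # Candidate implementation (e.g. AI-generated), checked against the
    # human-written tests below before it is accepted.
    text = value.strip()
    if not text.isdigit():
        raise ValueError(f"not a port number: {value!r}")
    port = int(text)
    if not 1 <= port <= 65535:
        raise ValueError(f"port out of range: {port}")
    return port


class ParsePortTests(unittest.TestCase):
    # Written first: the happy path plus the edge cases AI tends to skip.
    def test_happy_path(self):
        self.assertEqual(parse_port("8080"), 8080)

    def test_surrounding_whitespace(self):
        self.assertEqual(parse_port(" 443 "), 443)

    def test_boundaries(self):
        self.assertEqual(parse_port("1"), 1)
        self.assertEqual(parse_port("65535"), 65535)
        with self.assertRaises(ValueError):
            parse_port("0")
        with self.assertRaises(ValueError):
            parse_port("65536")

    def test_non_numeric(self):
        for bad in ["", "http", "-1", "80.5"]:
            with self.subTest(bad=bad), self.assertRaises(ValueError):
                parse_port(bad)


if __name__ == "__main__":
    unittest.main(argv=["parse_port_tests"], exit=False)
```

Run the file directly or with `python -m unittest <file>`; a generated implementation that skips the range or non-numeric checks fails here immediately instead of in production.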

Adjust expectations to match reality. AI is a productivity tool requiring human verification, not a reliable replacement for human judgment. The trust crisis validates what experienced developers already know: the more you code, the less you trust AI output. That’s not technophobia—it’s pattern recognition from production failures.

Key Takeaways

- The trust crisis is real and data-driven: 46% distrust AI code accuracy versus only 33% who trust it, with zero percent of engineering leaders “very confident” in AI code reliability

- “Almost right” code (66% frustration rate) wastes more time than it saves—debugging AI output consumes the productivity gains from faster code generation

- Use AI strategically: boilerplate and scaffolding are safe bets, but critical logic and security code belong in human hands

- Production debugging data (43% of AI-generated code changes need manual debugging in production) contradicts vendor productivity claims that measure only code generation speed, not total task completion time

- Experienced developers trust AI less, not more: a 20% strong-distrust rate among senior engineers signals a structural problem, not a training gap

The Stack Overflow 2025 survey doesn’t predict the future—it documents the present. Developers are voting with their skepticism while enterprises bet billions on tools their engineers don’t trust. The productivity gains are real for specific use cases, but so are the debugging costs. The question isn’t whether AI tools are useful; it’s whether the trust deficit will erode adoption gains as production reality replaces vendor marketing.