Cerebras Systems filed an amended S-1 with the SEC on May 4, 2026, targeting an IPO of up to $3.5 billion at a $26.6 billion valuation, which would be the largest tech IPO of 2026 if successful. The AI chip company is offering 28 million shares at $115-$125 each, with a mid-May Nasdaq listing under the ticker CBRS. This is Cerebras’ second IPO attempt: it first filed in September 2024 and withdrew that filing in October 2025. This time the company has a $20 billion OpenAI contract and a financial turnaround story: 76% revenue growth and an $87.9 million profit, versus a $485 million loss the prior year.
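The offer terms can be checked with simple arithmetic. The sketch below multiplies the share count by each end of the price range. The float estimate assumes the $26.6 billion valuation is struck at the top of the range, which the article does not state, and it ignores underwriting fees and any over-allotment option.

```python
# Offer terms as quoted in the article.
SHARES_OFFERED = 28_000_000
PRICE_LOW_USD = 115
PRICE_HIGH_USD = 125
VALUATION_USD = 26_600_000_000


def gross_proceeds(shares: int, price_usd: int) -> int:
    """Gross proceeds before fees: shares sold times price per share."""
    return shares * price_usd


proceeds_low = gross_proceeds(SHARES_OFFERED, PRICE_LOW_USD)
proceeds_high = gross_proceeds(SHARES_OFFERED, PRICE_HIGH_USD)

# Assumption (not stated in the article): the valuation corresponds to the
# top of the price range, so proceeds / valuation approximates the float.
float_fraction = proceeds_high / VALUATION_USD

print(f"Gross proceeds: ${proceeds_low / 1e9:.2f}B to ${proceeds_high / 1e9:.2f}B")
print(f"Implied share of the company sold: {float_fraction:.1%}")
```

Only the top of the range reaches the $3.5 billion headline figure; pricing at $115 would raise about $3.22 billion.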

For investors, this is the first public-market opportunity to bet on a credible Nvidia alternative. Cerebras’ wafer-scale chips are reported to run AI inference up to 21x faster than Nvidia’s flagship GPUs at 33% lower cost. The OpenAI partnership validates the technology at scale and provides multi-year revenue visibility, which is Cerebras’ answer to the customer concentration concerns that shelved the 2024 IPO.

The $20B OpenAI Deal That Makes (or Breaks) This IPO

OpenAI signed a $20 billion multi-year contract with Cerebras in January 2026 for 2 gigawatts of AI compute capacity through 2030. This is the largest high-speed inference deployment in history. The contract includes 250 megawatts per year from 2026 through 2028 (750 megawatts in total), with an option on a further 1.25 gigawatts through 2030. Cerebras received a $1 billion loan from OpenAI at 6% interest, while OpenAI received warrants for 33.4 million non-voting shares that fully vest only if OpenAI buys the full 2 gigawatts.
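The capacity figures in the contract add up as follows. This is a rough sketch using only the numbers quoted above. It assumes the $20 billion headline applies to the full 2 gigawatts and that the loan accrues simple interest on the full principal; neither assumption is confirmed by the article.

```python
# Contract terms as quoted in the article.
CONTRACT_VALUE_USD = 20e9
FIRM_MW_PER_YEAR = 250
FIRM_YEARS = (2026, 2027, 2028)
OPTION_MW = 1_250
LOAN_PRINCIPAL_USD = 1e9
LOAN_RATE = 0.06

firm_mw = FIRM_MW_PER_YEAR * len(FIRM_YEARS)   # capacity in the 2026-2028 schedule
total_mw = firm_mw + OPTION_MW                 # schedule plus the option
firm_share = firm_mw / total_mw                # fraction not dependent on the option

# Assumption: the $20B headline covers all 2 GW, option included.
usd_per_gw = CONTRACT_VALUE_USD / (total_mw / 1_000)

# Assumption: simple interest on the full principal for one year.
annual_interest_usd = LOAN_PRINCIPAL_USD * LOAN_RATE

print(f"Scheduled: {firm_mw} MW, option: {OPTION_MW} MW, total: {total_mw} MW")
print(f"Scheduled share of total capacity: {firm_share:.1%}")
print(f"Implied value per gigawatt: ${usd_per_gw / 1e9:.0f}B")
print(f"Annual loan interest: ${annual_interest_usd / 1e6:.0f}M")
```

On these figures, 62.5% of the headline capacity sits in the option rather than in the 2026-2028 schedule, which matters for how much of the contract counts as committed revenue.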

Here’s the catch: Cerebras’ S-1 filing states the OpenAI alliance “represents a substantial portion of our projected revenues over the next several years.” This is a “fool me once” situation for investors. The 2024 IPO failed because 87% of Cerebras’ revenue came from one customer (G42, an Abu Dhabi AI firm). Now G42 is down to 24% of revenue, but OpenAI is set to replace it as the dominant single customer in projected revenue. Different name, same dependency risk.

If OpenAI builds custom chips with Broadcom (rumored) or shifts strategy, the revenue behind Cerebras’ $26.6 billion valuation is at risk. The OpenAI contract is both the bull case and the biggest risk.

21x Faster Than Nvidia Blackwell—How Wafer-Scale Wins on Inference

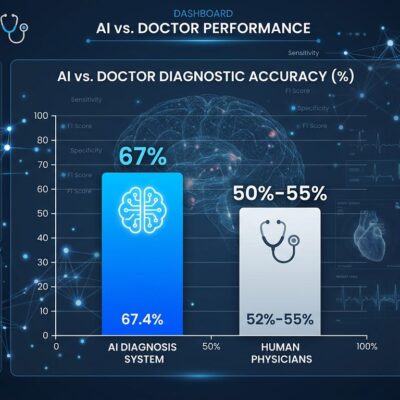

Cerebras’ WSE-3 chip delivers 1,800-2,100 tokens per second for AI inference, 10-21x faster than Nvidia H100 GPUs, which manage roughly 90-150 tokens per second. The latest benchmarks show Cerebras CS-3 systems running 21x faster, costing 33% less, and drawing 33% less power than Nvidia’s flagship DGX B200 Blackwell.
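A speedup quoted as a range depends on which ends of the two throughput ranges are paired. The sketch below computes the extreme pairings from the tokens-per-second figures above and the time to generate a response; the 1,000-token response length is a hypothetical value chosen for illustration.

```python
# Throughput ranges in tokens per second, as quoted in the article.
CEREBRAS_TPS = (1_800, 2_100)
H100_TPS = (90, 150)


def speedup_range(fast: tuple, slow: tuple) -> tuple:
    """(min, max) speedup when each system may sit anywhere in its range."""
    return fast[0] / slow[1], fast[1] / slow[0]


def seconds_to_generate(tokens: int, tokens_per_sec: float) -> float:
    """Wall-clock time to emit `tokens` at a steady generation rate."""
    return tokens / tokens_per_sec


min_speedup, max_speedup = speedup_range(CEREBRAS_TPS, H100_TPS)

RESPONSE_TOKENS = 1_000  # hypothetical response length
fastest = seconds_to_generate(RESPONSE_TOKENS, CEREBRAS_TPS[1])
slowest = seconds_to_generate(RESPONSE_TOKENS, H100_TPS[0])

print(f"Speedup from extreme pairings: {min_speedup:.1f}x to {max_speedup:.1f}x")
print(f"{RESPONSE_TOKENS} tokens: {fastest:.2f}s at best vs {slowest:.1f}s at worst")
```

The extreme pairings give roughly 12x to 23x, so the 10-21x headline range presumably reflects specific benchmark pairings rather than these endpoints.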

The architectural advantage is straightforward. Cerebras preserves the entire silicon wafer as a single chip: 46,225 mm² with 900,000 AI cores, compared to the Nvidia H100’s 814 mm². Model weights are held in 44 GB of on-chip SRAM, which removes the memory bandwidth bottleneck that limits GPU inference speed. Nvidia GPUs must stream billions of parameters from VRAM for each token generated. Cerebras keeps them on-chip.
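The bandwidth argument can be made concrete with a back-of-the-envelope model. The sketch below assumes a dense model at batch size 1, where every weight is read once per generated token, so throughput is capped at memory bandwidth divided by total weight bytes. The parameter counts, the 2-bytes-per-parameter precision, and the 3 TB/s bandwidth are hypothetical placeholders, not figures from the article, and the fit check ignores activations and the KV cache.

```python
# Figures quoted in the article.
WSE3_AREA_MM2 = 46_225
H100_AREA_MM2 = 814
SRAM_BYTES = 44e9  # 44 GB, decimal


def weight_bytes(num_params: float, bytes_per_param: float) -> float:
    """Total bytes needed to store the model weights."""
    return num_params * bytes_per_param


def fits_on_chip(num_params: float, bytes_per_param: float = 2.0,
                 sram_bytes: float = SRAM_BYTES) -> bool:
    """True if the weights alone fit in SRAM (activations/KV cache ignored)."""
    return weight_bytes(num_params, bytes_per_param) <= sram_bytes


def bandwidth_bound_tokens_per_sec(total_weight_bytes: float,
                                   bandwidth_bytes_per_sec: float) -> float:
    """Upper bound on tokens/s if each token requires reading all weights once."""
    return bandwidth_bytes_per_sec / total_weight_bytes


area_ratio = WSE3_AREA_MM2 / H100_AREA_MM2

# Hypothetical inputs for illustration only.
SMALL_MODEL_PARAMS = 8e9
LARGE_MODEL_PARAMS = 70e9
PLACEHOLDER_BANDWIDTH = 3e12  # bytes per second

print(f"Silicon area ratio: {area_ratio:.1f}x")
print(f"8B model fits at 2 bytes/param: {fits_on_chip(SMALL_MODEL_PARAMS)}")
print(f"70B model fits at 2 bytes/param: {fits_on_chip(LARGE_MODEL_PARAMS)}")
bound = bandwidth_bound_tokens_per_sec(
    weight_bytes(SMALL_MODEL_PARAMS, 2.0), PLACEHOLDER_BANDWIDTH)
print(f"Bandwidth-bound ceiling for the 8B model: {bound:.1f} tokens/s")
```

Under these assumptions, a model above about 22 billion parameters at 2 bytes each would exceed 44 GB, so larger models would need lower precision or more than one wafer.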

The breakthrough that made this possible: 100x defect tolerance. Cerebras designed 970,000 physical cores but activates only 900,000, with routing logic bypassing defective cores. By making each core about 0.05 mm², 100x smaller than traditional cores, it shrank the area lost per defect to almost nothing. This solved the “impossible” wafer-scale manufacturing problem.
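The redundancy budget follows directly from the core counts. The sketch below computes the spare-core fraction and compares the silicon lost to defects for small versus 100x larger cores. It assumes each defect disables exactly one distinct core, and the defect count of 1,000 is a hypothetical value, not a figure from Cerebras.

```python
# Figures quoted in the article.
PHYSICAL_CORES = 970_000
ACTIVE_CORES = 900_000
SMALL_CORE_MM2 = 0.05
LARGE_CORE_MM2 = SMALL_CORE_MM2 * 100  # a core 100x larger, for comparison
WAFER_AREA_MM2 = 46_225

spare_cores = PHYSICAL_CORES - ACTIVE_CORES
spare_fraction = spare_cores / PHYSICAL_CORES


def area_lost_mm2(defects: int, core_area_mm2: float) -> float:
    """Silicon disabled if each defect knocks out one whole, distinct core."""
    return defects * core_area_mm2


def still_meets_target(defective_cores: int) -> bool:
    """True if enough good cores remain to activate the advertised count."""
    return PHYSICAL_CORES - defective_cores >= ACTIVE_CORES


HYPOTHETICAL_DEFECTS = 1_000  # illustration only
lost_small = area_lost_mm2(HYPOTHETICAL_DEFECTS, SMALL_CORE_MM2)
lost_large = area_lost_mm2(HYPOTHETICAL_DEFECTS, LARGE_CORE_MM2)

print(f"Spare cores: {spare_cores:,} ({spare_fraction:.1%} of physical cores)")
print(f"Area lost, small cores: {lost_small:.0f} mm2 "
      f"({lost_small / WAFER_AREA_MM2:.2%} of wafer)")
print(f"Area lost, 100x larger cores: {lost_large:.0f} mm2 "
      f"({lost_large / WAFER_AREA_MM2:.1%} of wafer)")
```

The same number of defects costs 100x less silicon with the smaller cores, and about 7% of cores are held in reserve to absorb them.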

The emerging architecture: train large models on Nvidia GPUs (still best for training), deploy for inference on specialized Cerebras chips (optimized for speed). That’s why OpenAI chose Cerebras despite having access to Nvidia’s latest hardware.

Why the 2024 IPO Failed and How 2026 Is Different

Cerebras originally filed for an IPO in September 2024 but withdrew before pricing. The reason: customer concentration. In the first half of 2024, G42 accounted for 87% of revenue. By 2025 the customer mix had shifted more than it had diversified: Mohamed bin Zayed University of AI provided 62% of revenue and G42 dropped to 24%, so two customers still accounted for 86%.
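One way to compare the two periods is a concentration index. The sketch below uses the Herfindahl-Hirschman index (the sum of squared revenue shares), which is a technique introduced here for illustration and not a measure the article or the S-1 uses. Because only the largest customers are disclosed, the result is a lower bound on the true index.

```python
# Disclosed revenue shares as quoted in the article.
H1_2024 = {"G42": 0.87}
FY_2025 = {"MBZUAI": 0.62, "G42": 0.24}


def top_n_share(shares: dict, n: int) -> float:
    """Combined revenue share of the n largest disclosed customers."""
    return sum(sorted(shares.values(), reverse=True)[:n])


def hhi_lower_bound(shares: dict) -> float:
    """Sum of squared disclosed shares; undisclosed customers can only add to it."""
    return sum(s * s for s in shares.values())


for label, shares in (("H1 2024", H1_2024), ("FY 2025", FY_2025)):
    print(f"{label}: top-1 {top_n_share(shares, 1):.0%}, "
          f"top-2 {top_n_share(shares, 2):.0%}, "
          f"HHI >= {hhi_lower_bound(shares):.3f}")
```

By this measure concentration fell between the two periods, yet the top two customers in 2025 still account for nearly the same share that G42 alone did in early 2024.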

The financial turnaround strengthens the 2026 filing. Revenue hit $290.3 million in 2025 (76% YoY growth). The company turned profitable with $87.9 million net income, versus a $485 million loss in 2024. Valuation jumped from $22 billion (February 2026 Series H) to $26.6 billion in just three months.
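The turnaround figures imply a few numbers the article does not state directly. The sketch below derives them from the quoted values; the 2024 revenue is back-calculated from the growth rate and is therefore approximate.

```python
# Figures quoted in the article.
REVENUE_2025_USD = 290.3e6
REVENUE_GROWTH = 0.76
NET_INCOME_2025_USD = 87.9e6
NET_LOSS_2024_USD = 485e6
SERIES_H_VALUATION_USD = 22e9
IPO_VALUATION_USD = 26.6e9

# Back out prior-year revenue from the growth rate.
implied_revenue_2024 = REVENUE_2025_USD / (1 + REVENUE_GROWTH)

# Net income as a fraction of revenue.
net_margin_2025 = NET_INCOME_2025_USD / REVENUE_2025_USD

# Year-over-year change in the bottom line, from a loss to a profit.
bottom_line_swing = NET_INCOME_2025_USD + NET_LOSS_2024_USD

# Increase from the February 2026 round to the IPO valuation.
valuation_step_up = IPO_VALUATION_USD / SERIES_H_VALUATION_USD - 1

print(f"Implied 2024 revenue: ${implied_revenue_2024 / 1e6:.1f}M")
print(f"2025 net margin: {net_margin_2025:.1%}")
print(f"Bottom-line swing: ${bottom_line_swing / 1e6:.1f}M")
print(f"Valuation step-up since Series H: {valuation_step_up:.1%}")
```

The implied 2024 revenue of about $165 million means the 2024 loss was roughly three times that year's revenue, which puts the size of the swing in context.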

However, look closely at the S-1 disclosures. OpenAI now represents “a substantial portion of projected revenues over the next several years.” This is the 2024 G42 problem with better optics. Single-customer dependency remains the central risk. The difference: OpenAI is more stable and credible than G42 as an anchor customer.

Can Cerebras Challenge Nvidia’s 80% Market Dominance?

Nvidia dominates with 80%+ market share and $130B+ revenue. Cerebras reported $290 million in 2025. This isn’t David vs Goliath—it’s a specialized niche player coexisting alongside an incumbent giant.

Cerebras targets inference workloads where speed is paramount. Nvidia dominates training, general-purpose compute, and the CUDA software ecosystem. Nvidia has a 15-year head start with CUDA. Every ML framework is optimized for Nvidia. Cerebras’ software stack is newer and has a smaller community.

The realistic case for Cerebras: it doesn’t need to beat Nvidia everywhere. If the inference market splits from the training market (train on Nvidia, serve on Cerebras), there’s room for a $26.6 billion valuation even at under 10% market share. The risk: Nvidia Blackwell B200 is closing the inference performance gap. If Nvidia chips become “good enough” for inference, why would customers adopt specialized silicon?
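The market-share claim can be sanity-checked against the article's own numbers. The sketch below treats the $100 billion inference market cited elsewhere in this article as annual revenue and counts all Cerebras revenue as inference revenue; both are simplifying assumptions made here, not statements from the S-1.

```python
# Figures quoted in the article.
VALUATION_USD = 26.6e9
REVENUE_2025_USD = 290.3e6
INFERENCE_MARKET_USD = 100e9  # "$100B+" inference market, assumed annual


def revenue_at_share(market_share: float, market_usd: float) -> float:
    """Annual revenue implied by holding `market_share` of the market."""
    return market_share * market_usd


# Assumption: all 2025 revenue is inference revenue.
current_share = REVENUE_2025_USD / INFERENCE_MARKET_USD

revenue_at_10pct = revenue_at_share(0.10, INFERENCE_MARKET_USD)
multiple_at_10pct = VALUATION_USD / revenue_at_10pct

print(f"Current share of a $100B market: {current_share:.2%}")
print(f"Revenue at 10% share: ${revenue_at_10pct / 1e9:.0f}B")
print(f"Valuation / revenue at 10% share: {multiple_at_10pct:.2f}x")
```

At a 10% share the valuation would be under 3x revenue, but reaching that share from today's base would require revenue to grow more than thirtyfold.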

What Investors Need to Know About the Cerebras IPO

- IPO Details: Mid-May 2026 listing, ticker CBRS, 28 million shares at $115-$125, $3.5B raise at $26.6B valuation

- Bull Case: OpenAI validation, 21x performance advantage, $100B+ inference market TAM, “train on GPUs, serve on specialized chips” architecture emerging as industry standard

- Bear Case: 92x revenue multiple ($26.6B / $290M), single-customer dependency on OpenAI, TSMC manufacturing risk, Nvidia Blackwell closing performance gap, unproven economics beyond one contract

- Dependency Risk: OpenAI contract “represents a substantial portion of projected revenues” per S-1—same concentration problem that shelved 2024 IPO, different customer

- Key Question: Is specialized inference silicon the future, or will Nvidia GPUs be “good enough”?

At a $26.6 billion valuation on $290 million revenue, Cerebras requires flawless execution and zero customer churn to justify the price. The OpenAI contract provides a revenue floor, but the dependency risk is undeniable. This is a high-risk, high-reward bet on an emerging architecture shift where training and inference use different hardware. If that bet pays off, Cerebras could own a significant share of a $100 billion inference market. If it doesn’t, this valuation looks absurd.
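How long would the company need to grow into its price? The sketch below holds the valuation fixed and asks how many years of compounding revenue growth it takes for the revenue multiple to fall to a target level. The 10x target and the assumption that 76% growth persists are hypothetical inputs chosen for illustration, not forecasts.

```python
import math

# Figures quoted in the article.
VALUATION_USD = 26.6e9
REVENUE_2025_USD = 290.3e6
REVENUE_GROWTH = 0.76


def revenue_multiple(valuation_usd: float, revenue_usd: float) -> float:
    """Valuation divided by annual revenue."""
    return valuation_usd / revenue_usd


def years_to_multiple(current: float, target: float, growth: float) -> float:
    """Years of compounding growth until the multiple falls to `target`,
    holding the valuation constant."""
    return math.log(current / target) / math.log(1 + growth)


current_multiple = revenue_multiple(VALUATION_USD, REVENUE_2025_USD)

TARGET_MULTIPLE = 10.0  # hypothetical target
revenue_needed = VALUATION_USD / TARGET_MULTIPLE
years_needed = years_to_multiple(current_multiple, TARGET_MULTIPLE, REVENUE_GROWTH)

print(f"Current revenue multiple: {current_multiple:.1f}x")
print(f"Revenue needed for {TARGET_MULTIPLE:.0f}x: ${revenue_needed / 1e9:.2f}B")
print(f"Years at {REVENUE_GROWTH:.0%} growth: {years_needed:.1f}")
```

Under these assumptions it takes close to four years of uninterrupted 76% growth just to reach a 10x multiple at today's price.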