In a packed Oakland federal courtroom on May 1, Elon Musk admitted under oath that his AI company xAI had “partly” distilled OpenAI’s models to train Grok, a confession that drew audible gasps from the audience. The admission came during cross-examination in Musk’s $150 billion lawsuit against OpenAI, in which he accuses the company of betraying its nonprofit mission. The irony is hard to miss: Musk is suing OpenAI for alleged betrayal while admitting to conduct that OpenAI’s terms of service prohibit, since those terms bar using its outputs to build competing models.

The One-Word Admission That Weakens Musk’s Case

When OpenAI’s attorney William Savitt asked whether xAI had distilled OpenAI’s technology, Musk answered with one word: “Partly.” He then defended the practice, saying “it is standard practice to use other AIs to validate your AI.” Yet validating a model against another AI is not the same as training on its outputs, and standard practice or not, OpenAI’s terms of service explicitly prohibit using its model outputs to develop competing AI products, which is what Musk’s answer indicates xAI did with Grok.

The hypocrisy weakens Musk’s case. He donated $38 million to OpenAI when it was a nonprofit, and he is now suing for $150 billion, claiming the organization “stole the charity” by transitioning to a for-profit structure. Legal experts suggest that admitting to terms-of-service violations while accusing others of betrayal won’t play well with the jury. During testimony, Musk even admitted “I literally was a fool” for not scrutinizing the documents behind OpenAI’s nonprofit-to-for-profit transition. His credibility took another hit when it emerged that he had texted OpenAI president Greg Brockman just two days before trial seeking a last-minute settlement.

What Model Distillation Actually Is and Why Everyone Does It

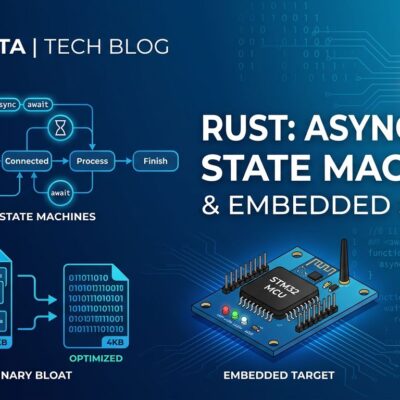

Model distillation is a technique in which a smaller “student” model is trained to mimic a larger, more capable “teacher” model. The teacher generates “soft targets” (probability distributions showing its confidence across possible answers), which provide far richer training signals than simple right/wrong labels. This lets the student run faster and cheaper while maintaining comparable performance.
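To make “soft targets” concrete, here is a minimal Python sketch of the classic temperature-scaled soft-target loss (the formulation popularized by Hinton et al.), assuming white-box access to both models’ raw scores (logits). The three-class example, the logit values, and the temperature of 2.0 are illustrative assumptions, not anything xAI or OpenAI has disclosed. Note that a chat API generally returns generated text (some also expose a few top token log-probabilities), so distilling from a competitor’s API usually means training the student on the teacher’s answers rather than on full distributions like these.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution.

    A temperature above 1 flattens the distribution, exposing how the
    model ranks the "wrong" answers as well as the right one.
    """
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q): how far the student's distribution q is from the teacher's p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """Soft-target loss: KL between temperature-softened distributions.

    Multiplied by temperature**2 so gradient magnitudes stay comparable
    across temperatures, as in the Hinton et al. formulation.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return temperature ** 2 * kl_divergence(p_teacher, p_student)


def hard_label_loss(student_logits, label):
    """Ordinary cross-entropy against a single correct answer (right/wrong only)."""
    return -math.log(softmax(student_logits)[label])


if __name__ == "__main__":
    teacher = [4.0, 2.5, -1.0]  # hypothetical scores for "cat", "dog", "car"
    student = [2.0, 0.5, 0.5]   # hypothetical, less confident student
    print("soft targets:", [round(p, 3) for p in softmax(teacher, temperature=2.0)])
    print("distillation loss:", distillation_loss(teacher, student))
    print("hard-label loss:", hard_label_loss(student, label=0))
```

A hard label for this example says only “cat”; the softened teacher distribution also tells the student that “dog” is a far more plausible mistake than “car”, which is the extra training signal soft targets carry.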

The controversy isn’t the technique itself. Anthropic has openly acknowledged that AI firms “routinely distill their own models to create smaller, cheaper versions.” Companies use distillation to bring the knowledge of powerful models to mobile devices and edge hardware. What makes Musk’s admission problematic is that xAI used a competitor’s proprietary model without permission. That isn’t self-improvement; it’s extracting knowledge from someone else’s expensive infrastructure.

OpenAI Bans Distillation But Can’t Stop Major Players

OpenAI’s terms of service ban using “Output to develop artificial intelligence models that compete with OpenAI’s products and services.” OpenAI monitors accounts and revokes access when it detects distillation. Developers on OpenAI’s forums report being banned without warning, sometimes waiting two weeks or more for a support response.

Yet Musk’s “standard practice” defense reveals the gap between the written rules and reality. OpenAI even offers official distillation tools through its API while banning others from distilling its models into competing products. Enforcement is selective: small developers get banned immediately, and Chinese companies like DeepSeek are accused of IP theft in memos to Congress. Well-funded US competitors like xAI, by contrast, operate openly, admit the practice in court, and face no consequences beyond cross-examination in a lawsuit Musk himself brought.

The DeepSeek Double Standard Exposed

Less than three months before Musk’s admission, on February 12, OpenAI warned Congress that Chinese AI company DeepSeek had “illegally” distilled US models. It claimed DeepSeek used “obfuscated third-party routers” to circumvent access restrictions and extract outputs at scale. Anthropic followed on February 24, accusing three Chinese companies of “industrial-scale” distillation campaigns. In April, the U.S. State Department issued a global warning about alleged Chinese AI theft.

Now Musk has admitted that xAI did the same thing. In both cases, the conduct described (using API outputs to train a competing model) is exactly what OpenAI’s terms prohibit. The difference? One is framed as a national security threat and IP theft; the other gets a shrug and “standard practice.” The contrast suggests that distillation enforcement may be driven more by geopolitics and competitive positioning than by principle.

What This Means for Developers and AI Training

The trial exposes a critical gray area: can you use API outputs to improve your own models? Under OpenAI’s terms, the answer is no if the resulting model competes with OpenAI. In practice, according to Musk, it is “standard practice.” As a result, developers face account bans for behavior that major companies admit openly in court.

Safer alternatives exist. Use open-weight models whose licenses allow this use, such as Mistral’s Apache 2.0 releases; read Llama’s community license carefully, since it attaches its own conditions. Distill your own models. Pay for OpenAI’s official fine-tuning services. The riskiest path is training on competitor API outputs while hoping selective enforcement doesn’t target you. Until courts draw clearer lines, developers operate in uncertainty: follow restrictive terms and fall behind, or adopt common practices and risk the consequences.

The trial continues this week with testimony from OpenAI president Greg Brockman. Musk’s admission has already put an open industry secret about AI training practices on the record. The outcome could reshape how API providers control downstream use of their outputs and whether “standard practice” becomes a valid legal defense.