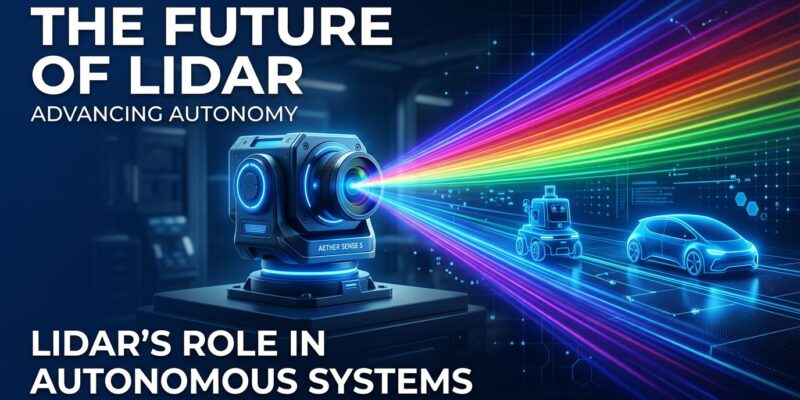

Ouster just solved what CEO Angus Pacala calls a “holy grail” problem: capturing color and depth in a single sensor. The company’s Rev8 lidar family, announced May 4, combines RGB imaging with 3D depth sensing at the silicon level—no software gymnastics required. Google, Volvo Autonomous Solutions, and dozens of robotics companies are already lined up to adopt it. If they’re right, we’re watching the beginning of the end for separate camera-plus-lidar setups.

The Sensor Fusion Mess

Every autonomous vehicle, robot, and drone faces the same problem: cameras capture color and texture but can’t judge distance accurately. Lidar measures depth precisely but sees the world in monochrome points. The industry’s answer for years has been to bolt both sensors onto a platform and fuse their data in software.

It’s uglier than it sounds. You need precise spatial calibration so the camera and lidar agree on what they’re looking at. You need temporal synchronization because they capture data at different rates. And you need complex processing pipelines to merge it all without adding latency. As one recent study put it: “A deficiency in any one of these areas will fundamentally undermine the integrity and safety of the entire autonomous system.”
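To make the software approach concrete, here is a minimal sketch of the step that calibration feeds: projecting a lidar point into a camera image to borrow its color. It assumes a simple pinhole camera with no lens distortion, an extrinsic rotation `R` and translation `t` from the lidar frame to the camera frame, and a point and image captured at the same instant, so synchronization has already been solved elsewhere. The names `transform` and `colorize_point` and the frame conventions are illustrative, not taken from any particular library.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[int, int, int]


def transform(R: Sequence[Sequence[float]], t: Vec3, p: Vec3) -> Vec3:
    """Apply the extrinsic calibration: p_cam = R @ p_lidar + t."""
    x, y, z = (sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))
    return (x, y, z)


def colorize_point(
    p_lidar: Vec3,
    R: Sequence[Sequence[float]],  # rotation, lidar frame -> camera frame
    t: Vec3,                       # translation, lidar frame -> camera frame (m)
    fx: float, fy: float,          # focal lengths in pixels (pinhole model)
    cx: float, cy: float,          # principal point in pixels
    image: List[List[RGB]],        # image[row][col], assumed captured at the same instant
) -> Optional[RGB]:
    """Return the pixel color a lidar point projects onto, or None if it misses."""
    x, y, z = transform(R, t, p_lidar)
    if z <= 0:
        return None  # point is behind the camera
    # Pinhole projection; lens distortion is not modeled in this sketch.
    col = int(round(fx * x / z + cx))
    row = int(round(fy * y / z + cy))
    if 0 <= row < len(image) and 0 <= col < len(image[0]):
        return image[row][col]
    return None  # point falls outside the camera's field of view


# Hypothetical frame conventions: lidar is x-forward, y-left, z-up;
# camera is x-right, y-down, z-forward. This R maps one to the other.
R_LIDAR_TO_CAM = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]
```

If `R` or `t` is even slightly off, every point samples the wrong pixel, which is the kind of error that vibration and temperature changes introduce over time.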

Calibration drifts over time from vibration and temperature changes. Sync errors accumulate. Processing overhead burns compute. And you’re paying for two sensors, two integration efforts, and two potential failure points.
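A back-of-the-envelope calculation shows why small errors matter. The sketch below uses illustrative inputs that do not come from Ouster or the cited study: it converts an angular calibration error into a lateral offset at range, and a timestamp mismatch into how far an object moves between the two sensors’ captures.

```python
import math


def rotation_error_offset(distance_m: float, angle_error_deg: float) -> float:
    """Lateral misplacement (m) at a given range caused by an angular calibration error."""
    return distance_m * math.tan(math.radians(angle_error_deg))


def sync_error_offset(speed_mps: float, time_offset_s: float) -> float:
    """Distance (m) an object moves between two sensors' capture times."""
    return speed_mps * time_offset_s


# Illustrative inputs (hypothetical, not from the source): 0.2 degree drift,
# 50 m target, 10 ms timestamp mismatch, 25 m/s (90 km/h) relative speed.
angular = rotation_error_offset(50.0, 0.2)
temporal = sync_error_offset(25.0, 0.010)
```

With these example values, a 0.2-degree extrinsic error misplaces a point at 50 meters by roughly 17 centimeters, and a 10-millisecond sync error at 90 km/h shifts an object by 25 centimeters.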

Hardware Fusion Cuts the Knot

Rev8 sidesteps all of this by doing fusion at the chip level. Ouster’s L4 silicon integrates Fujifilm color science directly into the sensor architecture. The result: a point cloud that arrives with color data already baked in. No calibration matrices. No extrinsic parameters. No sync pipeline.
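For a downstream developer, the difference shows up in the data structure. The sketch below assumes a hypothetical record in which each return carries both position and color; it is not Ouster’s SDK format, and the 8-bit channel scale is chosen only for readability. Querying by color becomes a plain filter, with no projection step or timestamp matching.

```python
import math
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class ColorPoint:
    """Hypothetical colored lidar return: geometry and color share one record."""
    x: float  # meters
    y: float
    z: float
    r: int    # color channels (8-bit scale here, for readability only)
    g: int
    b: int

    def range_m(self) -> float:
        """Straight-line distance from the sensor origin."""
        return math.sqrt(self.x ** 2 + self.y ** 2 + self.z ** 2)


def points_matching_color(
    cloud: Iterable[ColorPoint], target: Tuple[int, int, int], tol: int
) -> List[ColorPoint]:
    """Keep points whose color is within `tol` of `target` on every channel."""
    tr, tg, tb = target
    return [
        p for p in cloud
        if abs(p.r - tr) <= tol and abs(p.g - tg) <= tol and abs(p.b - tb) <= tol
    ]


# Usage: find orange-ish points (say, a traffic cone) and the nearest one's range.
cloud = [
    ColorPoint(3.0, 4.0, 0.0, 255, 128, 0),
    ColorPoint(6.0, 8.0, 0.0, 250, 120, 10),
    ColorPoint(1.0, 1.0, 1.0, 0, 0, 255),
]
orange = points_matching_color(cloud, (255, 128, 0), tol=15)
nearest = min(p.range_m() for p in orange)
```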

The specs don’t compromise. Rev8 delivers 48-bit color depth (16 bits per channel), matching or exceeding most cameras. The flagship OS1 Max sensor reaches 200 meters at 10 percent reflectivity and has a 500-meter maximum range, beyond that of typical lidar systems. It is rated for 116 dB of dynamic range and operates from near-darkness (1 lux) to extremely bright conditions (2 million lux, well above direct sunlight) without switching modes.
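The headline figures are easy to translate into ratios and levels. This sketch assumes the 20·log10 convention commonly used for image-sensor dynamic range and three equal-width color channels; neither assumption comes from Ouster’s materials.

```python
import math


def db_to_ratio(db: float) -> float:
    """Contrast ratio for a dynamic range in dB, assuming DR = 20*log10(max/min)."""
    return 10 ** (db / 20)


def ratio_to_db(ratio: float) -> float:
    """Inverse of db_to_ratio under the same 20*log10 convention."""
    return 20 * math.log10(ratio)


def levels_per_channel(total_bits: int, channels: int = 3) -> int:
    """Distinct values per channel if total color bits split evenly across channels."""
    return 2 ** (total_bits // channels)


dr_ratio = db_to_ratio(116)           # quoted dynamic range
lux_span_db = ratio_to_db(2e6 / 1.0)  # 1 lux to 2 million lux
levels = levels_per_channel(48)       # quoted 48-bit color
```

Under these assumptions, 116 dB is a contrast ratio of about 630,000:1, and 48-bit color gives 65,536 levels per channel. The 1 lux to 2 million lux span is a 2,000,000:1 ratio, or about 126 dB by the same convention, so the illuminance range and the dynamic-range rating likely describe different things.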

Under the hood, the L4 chip processes 20 trillion photons per second with picosecond timing precision, spitting out 10.4 million points per second across 256 channels. It’s not a camera with depth sensing tacked on, and it’s not lidar with color filters added. It’s a ground-up rethink of how sensors perceive the world.
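Two quick calculations put these numbers in context. Lidar ranging rests on round-trip time of flight, so range equals the speed of light times the elapsed time divided by two; by that relation, each picosecond of timing corresponds to about 0.15 millimeters of range. The remaining lines divide the quoted throughput by channel count and photon rate. The source does not describe how the L4 chip turns timing into distance estimates, so treat this as illustrative arithmetic only.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s, in vacuum


def range_from_tof(round_trip_s: float) -> float:
    """Range (m) from round-trip time of flight: the pulse travels out and back."""
    return SPEED_OF_LIGHT * round_trip_s / 2


def tof_from_range(range_m: float) -> float:
    """Round-trip time (s) for a target at the given range."""
    return 2 * range_m / SPEED_OF_LIGHT


# Range represented by one picosecond of timing, in millimeters.
mm_per_ps = range_from_tof(1e-12) * 1000
# Quoted throughput figures from the text.
points_per_channel = 10.4e6 / 256
photons_per_point = 20e12 / 10.4e6
```

With the quoted figures, that works out to 40,625 points per second per channel and roughly 1.9 million photons processed per output point, while a return from 500 meters arrives after about 3.3 microseconds.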

Who’s Buying In

Google and Volvo Autonomous Solutions don’t sign up for unproven tech. Their commitment to Rev8—alongside Field AI, Skydio, Flyability, PlusAI, and two dozen other robotics and autonomous vehicle companies—signals this is production-ready hardware, not a prototype fishing for funding.

The use cases span the autonomy spectrum. Robotaxis and self-driving trucks get simplified perception stacks. Warehouse robots lose calibration headaches. Drones (both indoor and outdoor) benefit from sensors that work in any lighting. Mining and construction equipment gain reliable sensing in dusty, vibration-heavy environments where constant shaking threatens camera-lidar calibration.

Ouster says Rev8 is shipping this quarter—Q2 2026. That timeline matters. Plenty of lidar announcements turn out to be vaporware or perpetually “coming soon.” Rev8 is available to order today, which means real-world deployments will start showing up in 2026 and 2027, not some theoretical future.

The Economics of Simplification

Current sensor costs tell the story. Individual cameras run $20 to $500, but autonomous vehicles need 8 to 12 of them. Mid-range lidar costs $200 to $1,000. High-end systems like those from Waymo and Cruise can hit $75,000 per unit. The total sensor stack for a Level 4 autonomous vehicle can exceed $100,000 in components alone.
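The camera figures above compound quickly. This small calculation uses only the price ranges quoted in this section and covers component prices, not integration, calibration, or maintenance.

```python
from typing import Tuple


def cost_range(unit_low: int, unit_high: int, count_low: int, count_high: int) -> Tuple[int, int]:
    """Return (low, high) total cost for a variable number of identical units."""
    return unit_low * count_low, unit_high * count_high


cameras = cost_range(20, 500, 8, 12)   # 8 to 12 cameras at $20 to $500 each
lidar = cost_range(200, 1_000, 1, 1)   # one mid-range lidar at $200 to $1,000
stack = (cameras[0] + lidar[0], cameras[1] + lidar[1])
```

That yields $160 to $6,000 for the cameras and $360 to $7,000 once a single mid-range lidar is added, which indicates the cameras are a small share of a six-figure Level 4 sensor stack.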

Rev8’s pricing isn’t public yet, but the value proposition is clear even without numbers. One sensor instead of two means single procurement, simpler integration, and lower maintenance costs. More importantly, it removes entire categories of engineering problems. No calibration drift means fewer field service calls. No sync issues means more reliable operation. Simpler software stacks mean faster development cycles.

For developers, that simplification is the real win. Every robotics team has burned weeks (or months) getting camera-lidar calibration to work reliably across temperature swings and vibration. Rev8 makes that entire problem category disappear. Point clouds arrive with color. That’s it.

What Happens to Cameras

The camera industry won’t vanish overnight. Cameras are cheap, well-understood, and already deployed in millions of systems. But Rev8 creates a credible path to camera displacement in autonomous applications over the next few years.

Early adopters will likely be new deployments: robotaxi fleets that haven’t yet committed to a sensor architecture, and warehouse automation projects that can design around Rev8 from day one. Teams with mature camera-lidar stacks won’t rip and replace them, but they’ll watch early adopters closely. If Rev8 delivers on reliability and simplicity, the next generation of autonomous platforms will look very different from today’s.

The timeline is aggressive but plausible. If Google and Volvo start deploying Rev8-equipped systems in late 2026 or early 2027, other companies will have real-world data to evaluate. By 2028, color lidar could be the default choice for new autonomous projects. Cameras would stick around for legacy systems and specialized use cases, but the sensor fusion era would be over.

Available Now

Ouster’s Rev8 isn’t a concept or a research project. It’s shipping this quarter to companies that include some of the biggest names in autonomy. That’s the detail that matters most. For developers working on robotics, autonomous vehicles, or any system that needs to perceive its environment, the architecture just got simpler.

No more calibration. No more sync. Just color point clouds, available today.