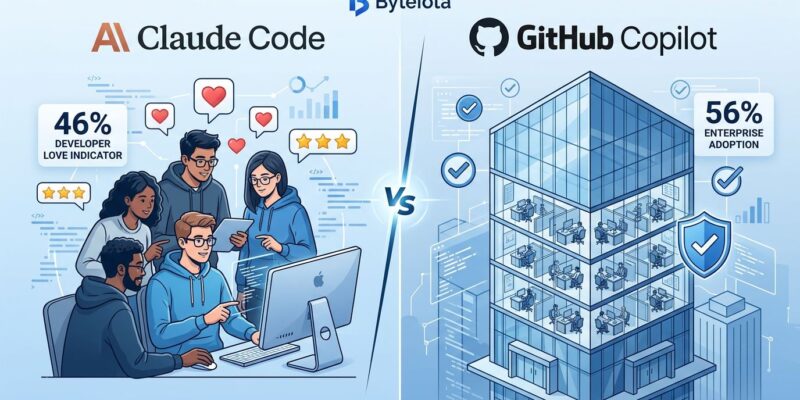

The AI coding tools market is consolidating fast in 2026, and the numbers tell a story most vendors won’t. Claude Code leads developer sentiment, with 46% naming it “most loved” in the Pragmatic Engineer’s February 2026 survey of 15,000 developers. That is more than double Cursor’s 19% and about five times GitHub Copilot’s 9%. Yet GitHub Copilot still holds 56% of enterprises with over 10,000 employees, per JetBrains’ research. This gap between what developers want and what procurement departments buy is reshaping the industry, with market analysts predicting consolidation to just 2-3 dominant players by Q3 2026. Meanwhile, 70% of engineers already run 2-4 tools simultaneously because no single option covers all use cases.

The Satisfaction-Adoption Paradox

According to JetBrains’ January 2026 survey of 10,000+ developers, GitHub Copilot leads workplace adoption at 29%, with Cursor and Claude Code tied at 18%. Flip to the Pragmatic Engineer’s February 2026 survey of 15,000 developers, however, and Claude Code runs away with developer sentiment: 46% “most loved” versus Cursor’s 19% and Copilot’s 9%. Meanwhile, Cursor reports $2B ARR despite its middle-ground position on both measures. Market share, revenue, and developer preference each tell a different story.

This isn’t just a metrics quirk. It is a retention crisis waiting to happen. Small companies under 100 employees overwhelmingly choose Claude Code (75% adoption), prioritizing its 91% customer satisfaction score and autonomous execution capabilities. Enterprises flip the script entirely: 56% opt for GitHub Copilot, trading developer happiness for IP indemnity protections and GitHub ecosystem integration. As a result, developers are pushed onto a tool that only 9% name as “most loved” while knowing an alternative scores 46%. That breeds shadow IT and turnover.

Claude Code’s 15x Surge in Under a Year

Claude Code grew from 4% adoption in May 2025 to 18% by January 2026 in JetBrains’ data, a 4.5x increase, and reached 63% in the Pragmatic Engineer’s February survey. The headline 15x figure compares the 4% starting point with that 63%, so it spans two different surveys and is best read as a rough indicator, not a like-for-like measurement. More impressive: it reached a $1 billion annualized run rate just six months after launch, the fastest in industry history. By early 2026, estimates put Claude Code’s ARR around $2.5 billion, representing 13% of Anthropic’s total of roughly $19 billion.
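The multiples in this section are easy to check by hand. The short sketch below uses only the figures quoted above; the variable names are illustrative, and the cross-survey ratio is shown alongside the same-survey ratio to make the difference visible.

```python
# Figures quoted in this section (survey percentages and ARR estimates).
jetbrains_may_2025 = 4       # % adoption, JetBrains, May 2025
jetbrains_jan_2026 = 18      # % adoption, JetBrains, January 2026
pragmatic_feb_2026 = 63      # % reported by the Pragmatic Engineer, February 2026

claude_code_arr_bn = 2.5     # estimated ARR, $ billions
anthropic_arr_bn = 19.0      # approximate company total, $ billions

# Growth within one survey versus growth measured across two surveys.
same_survey_multiple = jetbrains_jan_2026 / jetbrains_may_2025
cross_survey_multiple = pragmatic_feb_2026 / jetbrains_may_2025

# Claude Code's share of Anthropic's total ARR, as a percentage.
arr_share_pct = 100 * claude_code_arr_bn / anthropic_arr_bn

print(f"JetBrains to JetBrains: {same_survey_multiple:.1f}x")
print(f"JetBrains to Pragmatic Engineer: {cross_survey_multiple:.2f}x")
print(f"Share of Anthropic ARR: {arr_share_pct:.1f}%")
```

The same-survey multiple comes out at 4.5x, while the roughly 15x figure only appears when the two surveys are combined.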

The performance edge is real. Among developers using both Claude Code and Copilot, 61% rate Claude Code more accurate for complex debugging, while 73% give Copilot the speed crown for routine completions. The split shows up in task-level share too: Claude Code holds 44% for complex tasks, while Copilot holds 51% for simple autocomplete. Claude Code’s reported technical metrics back this up: 58.0% on SWE-bench Verified, 95% first-try correctness, and a 200K token context window (1M in beta) versus Copilot’s 64K limit. Meanwhile, JetBrains noted GitHub Copilot’s growth “stalled since 2025” and Cursor’s is “slowing.” Market momentum has shifted.

Consolidation Timeline: 2-3 Leaders by Q3 2026

The IDE-native AI coding category is heading for brutal consolidation. Market analysts predict just 2-3 dominant players by Q3 2026, with smaller entrants like Google Antigravity (6% adoption after its November 2025 launch) and Windsurf struggling for oxygen. For now, each leader is strongest on a different metric: GitHub Copilot owns adoption (29% workplace, 4.7M paid subscribers), Cursor is strong on revenue and paying users ($2B ARR, 1M+ paying users), and Claude Code dominates satisfaction (46% most loved, 91% CSAT).

Here’s what’s more interesting: 70% of engineers already use 2-4 AI coding tools simultaneously, and market analysts expect this multi-tool approach to become the enterprise standard by mid-2027. The most common pattern? Cursor for editing sessions plus Claude Code for complex refactoring. Enterprise procurement is shifting from “which tool wins?” to “which tool stack covers our use cases?” This makes sense. Copilot wins on autocomplete speed (73% of dual users), Claude Code wins on debugging accuracy (61%), and Cursor balances both, with a 1.42x productivity improvement over Copilot on complex features per CTO analysis. No single tool dominates every scenario.

The Verification Bottleneck Crushing “10x” Claims

AI coding tool vendors love to tout “10x productivity,” but the data tells a messier story. According to productivity research, teams with high AI adoption saw PR review time increase 91%, and 96% of developers report they don’t fully trust that AI-generated code is functionally correct. Reality check: productivity gains on routine tasks like boilerplate and test generation land at 25-39%, not 10x. For experienced developers tackling complex problems, AI tools can actually make them 19% slower because of prompting and debugging overhead.

Stack Overflow’s 2025 developer survey identified the top AI frustration: “solutions that look correct but are slightly wrong.” Nearly half of developers say debugging AI-generated code takes longer than writing it themselves. Security adds to the load: 48% of AI-generated code contains vulnerabilities, and 27% of production code is now AI-authored. The bottleneck has shifted from code generation (fast with AI) to code verification (slower, and dependent on deep review). Enterprises therefore need to invest in testing infrastructure, security scanning, and rigorous code review, especially for critical paths like authentication, payments, and PII handling. The higher accuracy ratings for Claude Code (61% of dual users rate it more accurate for complex debugging) matter precisely because they reduce this verification tax.
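One way to act on the critical-path advice is to flag AI-assisted changes that touch sensitive code so they get a deeper human review. The sketch below is a minimal illustration, not a recommended policy: the glob patterns, the `ai_assisted` flag, and the `needs_deep_review` helper are all hypothetical and would need adapting to a real repository and review workflow.

```python
from fnmatch import fnmatchcase

# Hypothetical glob patterns for critical paths; adjust to your repository layout.
CRITICAL_PATTERNS = ["*auth*", "*payment*", "*pii*"]


def needs_deep_review(changed_files, ai_assisted):
    """Return the changed files that should get extra human review.

    Only AI-assisted changes are flagged, and only when a file path
    matches one of the critical patterns (case-insensitive).
    """
    if not ai_assisted:
        return []
    return [
        path
        for path in changed_files
        if any(fnmatchcase(path.lower(), pattern) for pattern in CRITICAL_PATTERNS)
    ]


flagged = needs_deep_review(
    ["src/auth/login.py", "src/ui/button.py", "services/payments/charge.py"],
    ai_assisted=True,
)
print(flagged)
```

Substring-style patterns like these will over-match in some codebases, so in practice a team would likely maintain an explicit list of directories instead.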

Company Size Determines Your Tool

One-size-fits-all tool recommendations don’t work in this bifurcated market. Small companies under 100 employees pick Claude Code (75% adoption), maximizing developer satisfaction and autonomous capabilities despite less polished IDE integration. Enterprises with 10,000+ employees go with GitHub Copilot (56%), prioritizing IP indemnity and GitHub integration despite its 9% “most loved” share. Mid-market teams (5-30 developers) often land on Cursor as the balance play, achieving 1.42x productivity gains with its 1M token context window and multi-model flexibility.

The takeaway: your company size largely predicts your tool. Small teams can optimize for satisfaction. Enterprises must balance compliance requirements against the retention risk of forcing low-satisfaction tools on developers. Mid-market teams have the flexibility to choose based on workflow preferences. As consolidation accelerates toward 2-3 leaders by Q3 2026 and multi-tool strategies become the enterprise default by mid-2027, developers should match tools to task complexity instead of betting on a single platform. Copilot for quick autocomplete, Claude Code for complex refactoring, Cursor for balanced workflows: the market is segmenting by use case, and the smart play is embracing that reality instead of fighting it.
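The “match tools to task” idea can be written down as a simple lookup. The sketch below is only an illustration of the pairings this article describes; the task category names, the `suggest_tool` helper, and the choice of Cursor as the fallback for anything unlisted are assumptions, not an established taxonomy.

```python
# Task-to-tool pairings as described in this article. The category names
# are illustrative labels, not a standard classification.
TOOL_BY_TASK = {
    "autocomplete": "GitHub Copilot",
    "complex_refactoring": "Claude Code",
    "complex_debugging": "Claude Code",
    "editing_session": "Cursor",
}


def suggest_tool(task_type, default="Cursor"):
    """Return the tool paired with a task type, falling back to a default.

    The default is an assumption: Cursor is treated here as the
    "balanced" choice for task types the table does not list.
    """
    return TOOL_BY_TASK.get(task_type, default)


for task in ["autocomplete", "complex_refactoring", "code_review"]:
    print(f"{task}: {suggest_tool(task)}")
```

A team adopting a multi-tool stack would replace this table with its own categories and measured results.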