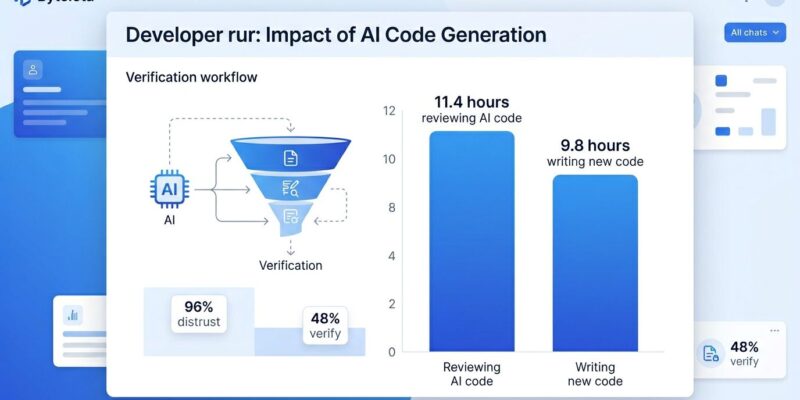

Developers now spend more time reviewing AI-generated code than writing new code—11.4 hours per week versus 9.8 hours, according to a 2026 survey of 2,847 developers. This reversal marks a fundamental shift in software engineering. AI promised productivity gains by accelerating code generation, and it delivered: coding velocity is up 50%. However, the productivity miracle came with a hidden cost—the AI verification bottleneck.

The Trust-Verification Gap

The numbers reveal a troubling paradox. The 2026 State of Code Developer Survey, conducted across 1,149 professional developers globally, found that 96% don’t fully trust AI-generated code output. Yet only 48% always verify it before committing. This disconnect between concern and action defines what researchers call the “verification gap.”

The gap widens under scrutiny. Among developers who have tried AI, 72% use it every day, and 95% spend at least some effort reviewing AI output. A majority, 59%, rate that verification effort as moderate or substantial. Furthermore, 59% of teams report verification has become an outright bottleneck, slowing development despite AI’s promise of speed.

Why don’t developers verify code they don’t trust? Time pressure is one factor. Workflow friction is another: old code review processes weren’t designed for AI velocity. But the core issue is simpler: developers assume AI-generated code is “close enough” and trust their tests to catch problems. That assumption fails in production.

What AI Code Lacks: Four Production Pillars

AI-generated code consistently misses four production pillars: error handling, idempotency, retry logic with backoff, and structured observability. These aren’t style issues or minor bugs. Instead, they’re the difference between code that works in demos and code that survives production.

Error handling is incomplete or absent. AI optimizes for the happy path, neglecting null checks and edge cases. Additionally, idempotency—ensuring operations are safe to retry—is rarely implemented. As one engineer put it: “If a tool call can create, refund, email, delete, or deploy, it must be safe to retry. Every write gets an idempotency key, and the gateway rejects duplicates. This one change prevents a huge class of production pain.” AI doesn’t make that change.
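The idempotency-key idea from the quote can be sketched in a few lines. This is a minimal illustration, not a production gateway: `IdempotentGateway`, `issue_refund`, and the key format are hypothetical names, and the in-memory dictionary stands in for the durable store a real system would need.

```python
from typing import Callable, Dict


class IdempotentGateway:
    """Hypothetical in-memory gateway; a real one would use a durable store."""

    def __init__(self) -> None:
        self._results: Dict[str, dict] = {}

    def execute(self, idempotency_key: str, operation: Callable[[], dict]) -> dict:
        if not idempotency_key:
            # Error handling: refuse writes that cannot be deduplicated.
            raise ValueError("every write needs an idempotency key")
        if idempotency_key in self._results:
            # Duplicate request: do not re-run the side effect,
            # hand back the result recorded for the first attempt.
            return self._results[idempotency_key]
        result = operation()
        self._results[idempotency_key] = result
        return result


# Usage: a retried refund must not pay out twice.
refunds_issued = []


def issue_refund() -> dict:
    refunds_issued.append("order-123")
    return {"status": "refunded", "order": "order-123"}


gateway = IdempotentGateway()
first = gateway.execute("refund-order-123", issue_refund)
retry = gateway.execute("refund-order-123", issue_refund)
```

Here the duplicate call replays the stored result rather than raising an error. Rejecting the duplicate outright, as the quote describes, is an equally valid choice; either way the side effect runs once.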

Retry logic and observability face similar neglect. AI skips exponential backoff and the structured logging that makes debugging possible. The result is code that looks right, passes casual review, and breaks in production when edge cases emerge.
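As a rough sketch of what these two pillars look like together, the function below retries an operation with capped exponential backoff and jitter, and emits one JSON log line per attempt. The names `TransientError`, `log_event`, and `retry_with_backoff`, along with the default delays, are assumptions made for illustration.

```python
import json
import logging
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("retry_sketch")


class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""


def log_event(event: str, **fields) -> None:
    # One JSON object per line so logs can be filtered by field.
    logger.info(json.dumps({"event": event, **fields}, sort_keys=True))


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            result = operation()
            log_event("operation_succeeded", attempt=attempt)
            return result
        except TransientError as exc:
            if attempt == max_attempts:
                log_event("operation_failed", attempt=attempt, error=str(exc))
                raise
            # The ceiling doubles each attempt and is capped; the actual
            # delay is drawn at random below it (full jitter).
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            delay = random.uniform(0, ceiling)
            log_event("operation_retrying", attempt=attempt,
                      delay_s=round(delay, 3), error=str(exc))
            sleep(delay)
    raise RuntimeError("unreachable")


# Usage: fails twice, then succeeds. Delays are recorded instead of slept.
calls = {"count": 0}


def flaky() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("upstream timeout")
    return "ok"


delays = []
outcome = retry_with_backoff(flaky, sleep=delays.append)
```

Only errors marked as transient are retried; anything else propagates immediately. Retrying a write this way is only safe when the operation is idempotent.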

Production Failures: The 43% Problem

The gap between “passes tests” and “works in production” defines the verification bottleneck. Lightrun’s 2026 State of AI-Powered Engineering Report—a survey of 200 senior SRE and DevOps leaders across the US, UK, and EU—found that 43% of AI-generated code changes require manual debugging in production, even after passing QA and staging tests.

Zero percent of engineering leaders surveyed described themselves as “very confident” that AI-generated code will behave correctly once deployed. In fact, most require multiple redeploy cycles: 88% need two to three attempts to verify AI fixes work correctly, while 11% require four to six cycles.

Real-world incidents underscore the risk. In March 2026, Amazon suffered high-profile outages traced to AI-assisted code changes deployed to production without proper approval. The result: millions of lost orders and a public reminder that AI code verification matters.

Security amplifies the problem. Between 40% and 62% of AI-generated code contains security vulnerabilities such as SQL injection, cross-site scripting, buffer overflows, and hardcoded credentials. AI-generated code now causes one in five security breaches, according to Aikido Security’s 2026 report. AI tools fail to prevent XSS in 86% of test cases and introduce log injection vulnerabilities in 88% of scenarios. QA catches syntax errors; it doesn’t catch security flaws hidden in “correct” code.
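To make “security flaws hidden in correct code” concrete, here is a small sketch using Python’s built-in `sqlite3` module. Both lookups return the same row for an ordinary username, so a happy-path test passes either way. The table, the function names, and the payload are hypothetical.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # The "?" placeholder passes the value separately from the SQL text.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

# Happy path: both versions agree, so a basic test cannot tell them apart.
same_on_happy_path = find_user_unsafe(conn, "alice") == find_user_safe(conn, "alice")

# Injection payload: the unsafe version returns every row, the safe one none.
payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(conn, payload)
blocked = find_user_safe(conn, payload)
```

A test suite that only checks known usernames would approve both functions, which is why security scanning and adversarial test inputs need to sit alongside functional tests.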

How Teams Are Adapting to the Verification Bottleneck

The most effective verification strategy is counterintuitive: write tests before AI generates implementation. When AI generates both code and tests, the tests validate the AI’s interpretation of requirements. However, when you write tests first, the tests validate actual requirements. That distinction matters.
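A minimal sketch of the tests-first pattern, using the standard `unittest` module. The requirement, the `apply_discount` function, and its rules are invented for illustration. The point is the ordering: the test class is written from the requirement, and the implementation underneath stands in for whatever an assistant generates afterwards.

```python
import unittest


# Written first, from a hypothetical requirement: "Discounts are 0 to 100
# percent, prices are integer cents, and negative totals are rejected."
class ApplyDiscountRequirements(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(10_000, 25), 7_500)

    def test_zero_and_full_discount(self):
        self.assertEqual(apply_discount(10_000, 0), 10_000)
        self.assertEqual(apply_discount(10_000, 100), 0)

    def test_rejects_out_of_range_percent(self):
        for bad in (-1, 101):
            with self.assertRaises(ValueError):
                apply_discount(10_000, bad)

    def test_rejects_negative_total(self):
        with self.assertRaises(ValueError):
            apply_discount(-1, 10)


# Stand-in for the implementation generated after the tests exist.
def apply_discount(total_cents: int, percent: int) -> int:
    if total_cents < 0:
        raise ValueError("total_cents must be non-negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return total_cents * (100 - percent) // 100


def run_requirements() -> unittest.TestResult:
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountRequirements)
    return unittest.TextTestRunner(verbosity=0).run(suite)


result = run_requirements()
```

Because the edge cases (out-of-range percentages, negative totals) come from the requirement rather than from the generated code, an implementation that handles only the happy path fails immediately.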

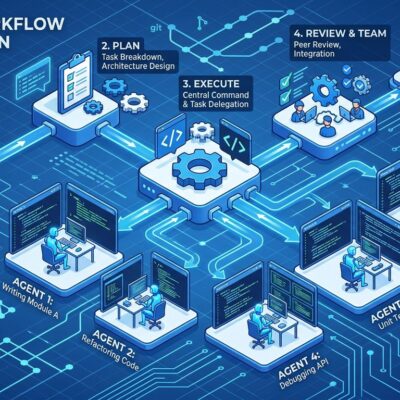

Teams are rebuilding workflows around AI’s strengths and weaknesses. The new pattern: AI review happens first, providing immediate analysis when a developer opens a pull request. The developer addresses AI-identified issues before requesting human review. Meanwhile, human reviewers focus on architecture, business logic, and user experience—not style inconsistencies or obvious bugs AI already caught.

This division of labor works, but it requires rigor. Teams without quality guardrails see a 35% to 40% increase in bug density within six months of adopting AI assistants. Effective guardrails include static analysis on every commit, security scanning in CI/CD pipelines, human review checkpoints for business logic, and production monitoring that specifically tracks AI-generated code quality.

Code ownership is critical. Every piece of AI-generated code needs a clear human owner: someone who can explain what it does, why it exists, and how to fix it when (not if) it breaks. Teams that skip this step discover verification gaps when incidents occur and no one understands the code.

The Developer Role Transformation

AI currently accounts for 42% of all committed code, a share expected to reach 65% by 2027. This isn’t a temporary trend. The developer’s job is transforming from writer to reviewer and verifier. The skill set is shifting: from “can I code this?” to “can I verify this?”

The implications are immediate. As of 2026, 92% of US developers use AI tools daily. The 11.4 hours per week spent reviewing AI code will increase as AI’s share of commits climbs toward 65%. Developers who master verification (writing effective tests, architecting verification workflows, identifying what AI consistently misses) will thrive. Those who resist the shift from writing to reviewing will struggle.

Developer sentiment reflects the tension. Thirty-five percent report average productivity boosts, and 54% report higher job satisfaction from using AI tools. However, the same developers spend more time verifying output than generating it. The productivity gains are real. So is the verification cost.

The AI verification bottleneck won’t resolve by building better AI models. Instead, it resolves by training developers on verification skills and building workflows designed for AI velocity. Teams that adapt to this reality by writing tests first, enforcing rigorous verification, and assigning code ownership will capture AI’s productivity gains. Those that don’t will watch 43% of their AI-generated changes need manual debugging in production and wonder why velocity didn’t translate to delivery.

Key Takeaways

- The time reversal is real: Developers spend 11.4 hours per week reviewing AI code versus 9.8 hours writing new code

- The trust-verification gap persists: 96% of developers don’t fully trust AI-generated code, but only 48% always verify it before committing

- Production failures are common: 43% of AI-generated code requires manual debugging in production, even after passing QA

- Adaptation strategies work: Writing tests before AI generates implementation is the most effective verification approach

- The role is transforming: As AI code climbs from 42% to 65% of commits, developers shift from writers to reviewers and verifiers