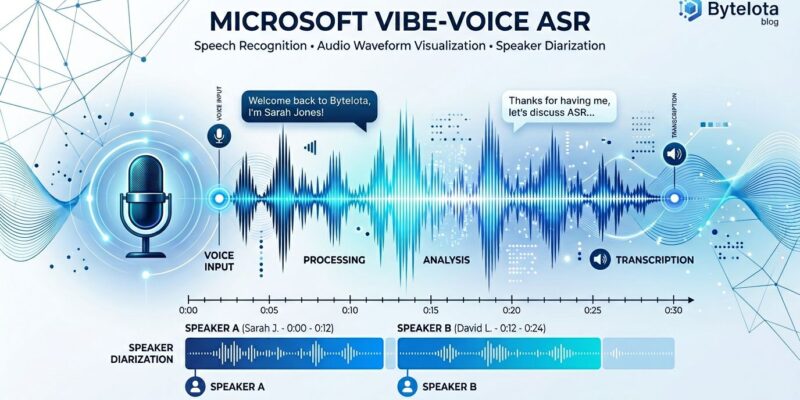

Long-form audio transcription traditionally means choosing between accuracy and convenience. Chunk your podcast into segments and lose quality at boundaries. Process the entire hour and wait while your pipeline stitches together transcripts, runs speaker diarization separately, then aligns timestamps manually. Microsoft’s VibeVoice ASR, open-sourced January 21, 2026 and integrated into Hugging Face Transformers in March, eliminates these trade-offs. It handles 60-minute recordings in one pass, delivering transcription, speaker identification, and timestamps simultaneously. MIT licensed and trending at 43,000 stars, it’s the first open-source ASR that makes long-form transcription genuinely practical.

What Makes VibeVoice Different From Whisper

Traditional speech recognition runs multi-stage pipelines. Whisper transcribes audio. Pyannote identifies speakers. Forced alignment tools map timestamps. You stitch the outputs together and hope speaker IDs match across segments.

VibeVoice uses a unified architecture combining acoustic and semantic tokenizers with an LLM decoder. One inference pass outputs WHO (speaker ID), WHEN (timestamps), and WHAT (transcription) simultaneously. No pipeline complexity. No quality loss at chunk boundaries. Consistent speaker tracking across the full hour.

Simon Willison tested this on a 99.8-minute podcast using a 128GB M5 Max MacBook Pro. Processing took 8 minutes 45 seconds, roughly 11.4x faster than real time. The output JSON identified three distinct speakers and separated the host’s intro voice from the conversation. Memory peaked at 61.5GB during prefill, settling to 18GB during generation.
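To make the WHO/WHEN/WHAT output concrete, here is a minimal sketch of post-processing diarized segments. The key names (`speaker`, `start`, `end`, `text`) and the sample data are assumptions for illustration only, not the documented VibeVoice schema, so adjust them to whatever the model actually returns.

```python
from collections import defaultdict

# Hypothetical segments: the key names and values are illustrative
# assumptions, not the documented VibeVoice output format.
segments = [
    {"speaker": 0, "start": 0.0, "end": 12.5, "text": "Welcome to the show."},
    {"speaker": 1, "start": 12.5, "end": 30.0, "text": "Thanks for having me."},
    {"speaker": 0, "start": 30.0, "end": 41.0, "text": "Let's get started."},
]


def speaking_time(segs):
    """Return total seconds spoken, keyed by speaker ID."""
    totals = defaultdict(float)
    for seg in segs:
        totals[seg["speaker"]] += seg["end"] - seg["start"]
    return dict(totals)


def fmt(seconds):
    """Format a time offset in seconds as MM:SS (fractions truncated)."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


def to_lines(segs):
    """Render segments as readable transcript lines."""
    return [
        f"[{fmt(s['start'])}-{fmt(s['end'])}] Speaker {s['speaker']}: {s['text']}"
        for s in segs
    ]


if __name__ == "__main__":
    print(speaking_time(segments))
    print("\n".join(to_lines(segments)))
```

Because speaker, timing, and text arrive together in one structure, summaries like per-speaker talk time need no cross-referencing between separate tool outputs.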

Quick Start: Install and Transcribe in 60 Seconds

The Transformers integration makes deployment straightforward. Install the library, load the model, transcribe. No Docker containers, no vLLM plugins unless you want them.

```python
from transformers import pipeline

# Load the ASR pipeline with the VibeVoice checkpoint
asr_pipeline = pipeline("automatic-speech-recognition",
                        model="microsoft/VibeVoice-ASR")

# Transcribe a local audio file
result = asr_pipeline("podcast_episode.mp3")
print(result)
```

This handles audio up to 60 minutes in a single call. The default max_tokens setting of 8,192 processes roughly 25 minutes. For a full hour-long transcription, increase it manually to 32,768 tokens. Output formats include “parsed” for JSON with speaker IDs and timestamps, or “transcription_only” for plain text.
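The two figures above (8,192 tokens for roughly 25 minutes) imply about 328 tokens per minute of audio. The sketch below scales that linearly and rounds up to a power of two. Both the linear scaling and the 1.5x headroom factor are assumptions made here for illustration. Real token usage depends on how dense the speech is, so treat the result as a starting point rather than a guarantee.

```python
import math

DEFAULT_TOKENS = 8192   # default budget quoted above
DEFAULT_MINUTES = 25    # approximate audio length that budget covers


def estimate_token_budget(audio_minutes, headroom=1.5):
    """Rough token budget for a recording of the given length.

    Assumes token usage grows linearly with duration (an assumption,
    not a documented property) and applies a safety margin.
    """
    tokens_per_minute = DEFAULT_TOKENS / DEFAULT_MINUTES
    needed = audio_minutes * tokens_per_minute * headroom
    # Round up to the next power of two, never going below the default
    rounded = 2 ** math.ceil(math.log2(needed))
    return max(DEFAULT_TOKENS, rounded)


if __name__ == "__main__":
    for minutes in (10, 25, 60):
        print(minutes, estimate_token_budget(minutes))
```

With these assumptions, a 60-minute file lands on the same 32,768 figure recommended above.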

File format compatibility is solid. Simon’s test used both WAV and MP3 without issues. The model accepts common audio formats without preprocessing.

When to Choose VibeVoice Over Whisper

VibeVoice isn’t a Whisper replacement. It’s a complementary tool for different use cases. Whisper posts an 8.06% word error rate (WER), a common reference point for speech recognition accuracy. VibeVoice gives up some accuracy (its exact WER is unpublished) in exchange for built-in diarization and unified output.

Choose VibeVoice when you need speaker diarization for podcasts, meetings, or interviews. The unified pipeline eliminates the complexity of running Whisper for transcription, then pyannote for diarization, then a separate alignment tool for timestamps. You get everything in one API call.

Choose Whisper when accuracy matters more than pipeline simplicity. If you’re processing short clips under 10 minutes, don’t need speaker identification, or run on limited RAM (Whisper’s base model fits in 4GB), stick with Whisper. For audio exceeding 60 minutes, Whisper has no duration limit while VibeVoice requires manual splitting.

Cost becomes relevant at scale. Self-hosting VibeVoice on an AWS g5.2xlarge costs about $0.90 per hour of GPU compute. The Whisper API charges $0.006 per minute of audio ($0.36/hour). The Deepgram API runs $0.0218 per minute ($1.31/hour). The break-even point sits around 40 hours of transcription per month. Beyond that threshold, the savings justify the complexity of running your own infrastructure.
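Note that the GPU price is per hour of compute while the API prices are per hour of audio, so any break-even figure depends on how fast the GPU processes audio and on fixed monthly overhead (setup, storage, idle time). Neither number is given here, so the sketch below takes both as parameters. The function names and the sample values are illustrative assumptions, not measured data.

```python
def api_monthly_cost(audio_hours, price_per_minute):
    """Monthly API bill for a given number of audio hours."""
    return audio_hours * 60 * price_per_minute


def self_host_monthly_cost(audio_hours, gpu_price_per_hour, speedup,
                           fixed_monthly=0.0):
    """Monthly self-hosting cost for an on-demand GPU.

    speedup is audio hours processed per GPU hour (an assumed value);
    fixed_monthly covers overhead that does not scale with volume.
    """
    gpu_hours = audio_hours / speedup
    return fixed_monthly + gpu_hours * gpu_price_per_hour


def break_even_hours(gpu_price_per_hour, speedup, api_price_per_minute,
                     fixed_monthly):
    """Audio hours per month at which self-hosting matches the API.

    Returns None when the GPU cost per audio hour is not lower than
    the API price, since self-hosting then never catches up.
    """
    saving = api_price_per_minute * 60 - gpu_price_per_hour / speedup
    if saving <= 0:
        return None
    return fixed_monthly / saving


if __name__ == "__main__":
    # Prices from the paragraph above; speedup and overhead are placeholders
    print(break_even_hours(0.90, speedup=10.0, api_price_per_minute=0.006,
                           fixed_monthly=27.0))
    print(break_even_hours(0.90, speedup=1.0, api_price_per_minute=0.006,
                           fixed_monthly=27.0))
```

At real-time speed (a speedup of 1.0), $0.90 per GPU hour never undercuts $0.36 per audio hour, so the comparison only favors self-hosting when the GPU runs well ahead of real time. The 11.4x figure quoted earlier was measured on a MacBook, not a g5.2xlarge, so measure your own throughput before relying on any break-even number.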

Production Deployment: GPU Requirements and API Wrapper

Running VibeVoice in production requires planning. You need 16GB VRAM minimum for short audio, 24GB+ for 60-minute recordings. Memory usage runs 18-60GB RAM depending on audio length. Cloud options include AWS g5.2xlarge (A10G, 24GB), Azure NC6s_v3 (V100, 16GB), or GCP instances with T4 GPUs (16GB). The 16GB cards only meet the short-audio minimum.

A basic FastAPI wrapper handles async transcription:

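```python
import tempfile

from fastapi import FastAPI, UploadFile
from fastapi.concurrency import run_in_threadpool
from transformers import pipeline

app = FastAPI()

# Load the model once at startup so every request reuses it
asr_pipeline = pipeline("automatic-speech-recognition",
                        model="microsoft/VibeVoice-ASR",
                        device="cuda:0")

@app.post("/transcribe")
async def transcribe(file: UploadFile):
    # Write the upload to a temp file so the pipeline can read it by path
    with tempfile.NamedTemporaryFile(suffix=".mp3") as tmp:
        tmp.write(await file.read())
        tmp.flush()  # make sure the bytes are on disk before inference
        # Run blocking inference in a worker thread to keep the event loop free
        result = await run_in_threadpool(
            asr_pipeline, tmp.name, max_new_tokens=32768
        )
    return {"transcription": result}
```

For audio exceeding 60 minutes, split into 55-minute chunks with 5-minute overlap. Manually realign speaker IDs across segments afterward. The 60-minute hard limit is architectural, so it’s not changing without a model redesign.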
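The chunking rule above can be sketched as a small helper that computes where each chunk starts and ends. This is one interpretation, assuming that "55-minute chunks with 5-minute overlap" means each chunk begins 50 minutes after the previous one. The function name is hypothetical, and cutting the audio itself is left to whatever tool you already use.

```python
def chunk_bounds(total_seconds, chunk_seconds=55 * 60, overlap_seconds=5 * 60):
    """Return (start, end) offsets in seconds for overlapping chunks.

    Consecutive chunks share overlap_seconds of audio, so each chunk
    starts chunk_seconds - overlap_seconds after the previous one.
    """
    if overlap_seconds >= chunk_seconds:
        raise ValueError("overlap must be shorter than the chunk length")
    if total_seconds <= 0:
        return []

    step = chunk_seconds - overlap_seconds
    bounds = []
    start = 0
    while True:
        end = min(start + chunk_seconds, total_seconds)
        bounds.append((start, end))
        if end >= total_seconds:
            break
        start += step
    return bounds


if __name__ == "__main__":
    # A two-hour recording, expressed in seconds
    for start, end in chunk_bounds(2 * 60 * 60):
        print(f"{start / 60:.0f} min -> {end / 60:.0f} min")
```

The shared five minutes between neighboring chunks gives you a window where the same speech appears in both transcripts, which is what makes matching speaker IDs across segments feasible.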

Microsoft warns against commercial use without further testing. The community disagrees. Real-world deployments show production-ready performance for English and Chinese audio. Other languages remain unreliable. The model cannot handle overlapping speech or background music.

Is VibeVoice Production-Ready Despite Microsoft’s Warning?

Microsoft’s “not recommended for production” disclaimer conflicts with community reports. Developers describe running it successfully at scale, and the warning likely reflects legal caution rather than technical limitation. English and Chinese transcription works reliably. Speaker diarization performs consistently. The 60-minute limit and high RAM requirements are real constraints, not bugs.

The bigger limitation is language support. Non-English/Chinese languages produce unreliable output. If your use case involves multilingual content beyond those two languages, Whisper handles 99 languages more reliably.

Key Takeaways

VibeVoice solves a specific problem Whisper doesn’t: unified transcription and diarization for long-form audio. The 60-minute limit and 18-60GB RAM requirements restrict deployment scenarios, but for podcasters, meeting transcribers, and anyone processing multi-speaker content at scale, it’s the best open-source option available. The cost math works when you exceed 40 hours monthly. Below that threshold, commercial APIs remain simpler.

This isn’t a Whisper killer. It’s a complementary tool. Use VibeVoice for long-form content with speaker identification. Use Whisper when accuracy or multilingual support matters more than pipeline simplicity. The right choice depends on your constraints, not the hype cycle.