On April 24, 2026, Meta announced a multibillion-dollar deal with AWS to deploy tens of millions of Graviton5 ARM cores, becoming one of AWS’s largest Graviton customers. The move signals a strategic shift: while GPUs dominate model training, ARM CPUs are winning AI inference and agentic workflows. For developers, the “just add more GPUs” era is over.

Why CPUs Matter in Agentic AI

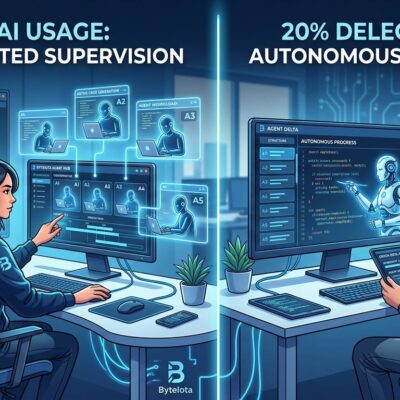

Agentic AI workloads break GPU-centric assumptions. Unlike model training, which is dominated by GPU-friendly matrix multiplication, agents spend much of their time orchestrating: coordinating models, managing control flow, handling branching logic, and executing real-time decisions. These are CPU-bound tasks.

AMD research shows the ratio of CPUs to GPUs shifting from 1:8 in training-focused deployments to 1:1 for agentic AI, which works out to roughly 86-120 CPU cores per GPU. The bottleneck has moved from parallel computation to sequential orchestration, where CPUs excel.

Meta’s “tens of millions of cores” deployment validates this. Real-time AI agents coordinating searches, generating code, making API calls, and reasoning through workflows need fast single-core performance and low inter-core latency. Graviton5 delivers both.

Graviton5 Technical Advantages

AWS Graviton5 packs 192 Arm Neoverse V3 cores on a 3nm process and 180MB of L3 cache (5x larger than Graviton4), with quoted performance improvements of 25-60%. Versus Graviton4, databases run 30% faster, web apps 35% faster, and ML workloads 35% faster.

Key specs for AI inference: 33% lower inter-core latency despite a doubled core count, 115-120 GB/s memory bandwidth (vs 60-90 GB/s for x86), and 300W TDP (vs 500W for comparable x86). Memory bandwidth matters for LLM serving because token generation is usually limited by how fast model weights can be read from memory. Massive core counts and low inter-core latency benefit multi-agent systems, where many orchestration threads run side by side.
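To make the bandwidth point concrete, here is a rough sketch of the usual memory-bound ceiling for single-stream token generation: if every weight has to be read once per token, throughput cannot exceed bandwidth divided by model size. The function name and the 7B, 8-bit model are illustrative assumptions, and the estimate ignores KV-cache traffic, batching, and compute limits, so treat it as an upper bound rather than a benchmark.

```python
def max_decode_tokens_per_second(bandwidth_gb_s: float,
                                 params_billions: float,
                                 bytes_per_param: float) -> float:
    """Upper bound on single-stream decode speed when memory-bound.

    Assumes every weight is read from memory once per generated token,
    so the ceiling is bandwidth divided by model size.
    """
    # 1e9 parameters * bytes per parameter = size in GB
    model_gb = params_billions * bytes_per_param
    return bandwidth_gb_s / model_gb


# Hypothetical 7B-parameter model with 8-bit weights,
# using the 115 GB/s figure quoted above.
ceiling = max_decode_tokens_per_second(115, 7, 1)
print(f"ceiling: {ceiling:.1f} tokens/s")
```

Halving the bytes per parameter (for example with 4-bit quantization) doubles the ceiling, which is why bandwidth and weight precision are usually considered together when sizing CPU inference.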

The Economics: 20-40% Cost Savings

ARM’s cost advantage over x86 is amplified for AI inference. Google’s Arm-based Axion CPUs deliver 2x better price-performance than Intel/AMD x86. AWS Lambda on Graviton2 achieves up to 34% better price-performance with 20% lower costs.

AWS case studies document 35% LLM inference cost reductions when switching from x86 to ARM64. At Meta’s $125 billion AI capex scale, even 20% efficiency gains mean billions saved, which justifies the Graviton5 commitment.

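ARM’s RISC design uses simpler instructions and lower power per core, enabling more cores per watt and per dollar. Saving 200W per server adds up at fleet scale: assuming one 192-core chip per server, tens of millions of cores means on the order of a hundred thousand servers or more, and tens of megawatts of reduced power demand.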
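The fleet-level effect is easy to sanity-check with a few lines of arithmetic. This sketch uses the article's figures (192 cores per Graviton5 chip, 200W saved per server) and assumes one chip per server, which is a simplification rather than a known detail of Meta's deployment. The function name is hypothetical.

```python
def fleet_power_saving_mw(total_cores: int,
                          cores_per_server: int = 192,
                          watts_saved_per_server: float = 200.0) -> float:
    """Estimate total power saved, in megawatts, across a fleet.

    Assumes one chip per server, so servers = cores / cores_per_server.
    """
    servers = total_cores / cores_per_server
    return servers * watts_saved_per_server / 1e6  # watts -> megawatts


# Two illustrative fleet sizes within "tens of millions of cores".
for cores in (10_000_000, 50_000_000):
    print(f"{cores:,} cores -> {fleet_power_saving_mw(cores):.1f} MW")
```

The result scales linearly with both inputs, so the server-density assumption matters as much as the per-server saving.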

Meta’s Diversified Chip Strategy

Meta’s strategy diversifies across vendors: ~$50B to Nvidia (Blackwell/Rubin GPUs, Grace CPUs), ~$60B to AMD (MI450 GPUs), multi-billion to AWS (Graviton5), plus custom MTIA chips (RISC-V). Nvidia and AMD handle training. Graviton5 handles inference and agentic orchestration. MTIA handles specialized inference.

The lesson: profile workloads and choose compute accordingly. Training foundation models? GPUs required. Real-time chatbot inference? ARM CPUs deliver better cost-per-token. Multi-agent orchestration? High-core-count CPUs with low latency. One-size-fits-all is dead.

ARM Migration Is Straightforward

Pure Python, Node.js, and Java code runs on ARM64 unchanged. Docker supports multi-arch builds with `docker buildx build --platform linux/amd64,linux/arm64`. Lambda functions migrate by changing the architecture setting.

Native dependencies (numpy, pandas, Pillow, psycopg2) once caused friction, but most packages now ship ARM64 wheels. Testing locally in Docker with `--platform linux/arm64` catches edge cases.
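One cheap check during that kind of local testing is to have the process report which architecture it is actually running on, since an emulated or mis-tagged image is easy to miss. The sketch below uses only the standard library; the helper names are hypothetical. Linux reports ARM64 as `aarch64` while macOS reports `arm64`, so the names are normalized to Docker's platform vocabulary.

```python
import platform

# Map kernel-reported machine names to Docker/OCI platform names.
_ARCH_ALIASES = {
    "aarch64": "arm64",
    "arm64": "arm64",
    "x86_64": "amd64",
    "amd64": "amd64",
}


def normalize_arch(machine: str) -> str:
    """Return a Docker-style architecture name for a machine string."""
    key = machine.lower()
    # Unknown values are passed through (lowercased) rather than guessed.
    return _ARCH_ALIASES.get(key, key)


def current_arch() -> str:
    """Architecture of the interpreter's host, in Docker-style naming."""
    return normalize_arch(platform.machine())


if __name__ == "__main__":
    print(f"running on {current_arch()}")
```

Logging this value at service startup also makes it simple to split metrics by architecture once both variants are deployed.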

Recommended path: start with stateless workloads (Lambda, containerized microservices, API gateways). Test in staging with canaries. Measure savings. Expand gradually. The migration friction is small relative to the 20-40% cost savings on offer.
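The canary step can be sketched as deterministic, hash-based routing: a fixed percentage of requests goes to the ARM pool, and the same request ID always lands in the same pool so results are comparable. The pool names and function names below are hypothetical, and in practice this logic usually lives in a load balancer or service mesh rather than in application code.

```python
import hashlib


def in_canary(request_id: str, canary_percent: int) -> bool:
    """Deterministically decide whether a request goes to the canary pool."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Use the first 4 bytes as an integer and fold it into buckets 0..99.
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < canary_percent


def pick_pool(request_id: str, canary_percent: int = 5) -> str:
    """Return the (hypothetical) pool name that should serve this request."""
    if in_canary(request_id, canary_percent):
        return "arm64-canary"
    return "x86-baseline"


if __name__ == "__main__":
    sample = [pick_pool(f"req-{i}") for i in range(1000)]
    print(sample.count("arm64-canary"), "of 1000 requests routed to ARM")
```

Raising `canary_percent` in small steps while watching latency and error rates gives a gradual expansion path that can be rolled back by setting it to zero.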

AI Infrastructure Fragmentation Accelerates

Every hyperscaler now builds custom silicon: AWS Graviton/Trainium, Google TPU/Axion, Azure Maia/Cobalt. Arm predicts that the share of Arm-based CPUs in custom AI servers will jump from 25% in 2025 to 90% in 2029. At gigawatt scale, ARM’s power efficiency is estimated to save $10B in capex versus x86.

Developers face new complexity: multi-arch builds, cross-platform testing, vendor-specific optimizations. But opportunities emerge: cost arbitrage between compute types, negotiating leverage with providers, performance optimization through workload profiling.

What Developers Should Do

Audit GPU usage. Training justifies GPUs. Inference often doesn’t, especially for agentic AI that spends more time orchestrating than computing. Experiment with ARM instances for stateless workloads. AWS Graviton, Google Axion, and Azure Cobalt offer trials.

Profile infrastructure costs. For LLM serving or AI agents, compare ARM CPU and GPU costs at equivalent throughput. Economics often favor ARM by 2-5x for inference. That can be the difference between profitability and cash burn.
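A simple way to put both options on the same footing is cost per million tokens, derived from an instance's hourly price and its measured throughput. In the sketch below the instance names, prices, and throughputs are placeholders, not measurements or published pricing. Substitute figures from your own benchmarks.

```python
def cost_per_million_tokens(hourly_price_usd: float,
                            tokens_per_second: float) -> float:
    """Dollars spent to generate one million tokens at steady throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_price_usd / tokens_per_hour * 1_000_000


# Placeholder inputs for illustration only.
candidates = {
    "arm-cpu-instance": {"hourly_price_usd": 1.50, "tokens_per_second": 400},
    "gpu-instance": {"hourly_price_usd": 12.00, "tokens_per_second": 2000},
}

for name, spec in candidates.items():
    cost = cost_per_million_tokens(**spec)
    print(f"{name}: ${cost:.2f} per 1M tokens")
```

The comparison is only meaningful if throughput is measured on the same model, at the same latency target, and at realistic utilization. An idle GPU and a saturated CPU both distort the number.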

Stop thinking “just throw GPUs at it.” Meta’s Graviton5 deal proves smart AI teams optimize for workload types, not blindly scale one architecture. That’s AI infrastructure maturity.