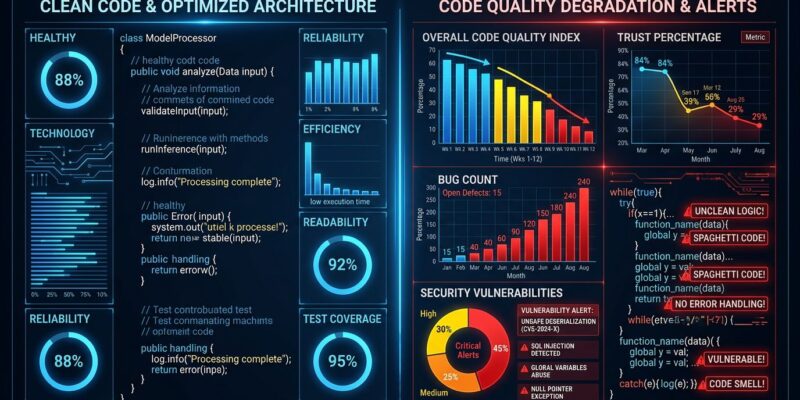

Eighty-four percent of developers use AI coding tools in 2026, but only 29 percent trust them, with trust down 11 percentage points from 2024. This isn’t paranoia. CodeRabbit’s analysis of millions of pull requests shows AI-generated code contains 1.7 times more issues than human-written code: 10.83 issues per PR versus 6.45 for human code. The industry promised AI would make developers faster and better. It delivered faster. The better part still needs work.

The Quality Data: Real Benchmarks, Real Problems

Multiple 2026 benchmark reports confirm what developers suspected. AI-generated pull requests average 10.83 issues each compared to 6.45 for human-generated PRs. That’s 1.7 times more problems when AI is involved. Moreover, critical issues are 1.4 times more common, and logic errors appear 1.75 times more frequently—194 per 100 AI pull requests.
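The headline ratio can be checked directly from the two per-PR figures quoted above. The short calculation below uses only those numbers; the variable names are illustrative.

```python
# Per-PR issue counts quoted in the text above.
ai_issues_per_pr = 10.83
human_issues_per_pr = 6.45

# How many times more issues an AI-generated PR carries on average.
ratio = ai_issues_per_pr / human_issues_per_pr

# The same gap expressed as extra issues across 100 pull requests.
extra_per_100_prs = (ai_issues_per_pr - human_issues_per_pr) * 100

print(f"ratio: {ratio:.2f}x")                      # ratio: 1.68x
print(f"extra issues per 100 PRs: {extra_per_100_prs:.0f}")  # 438
```

Rounded to one decimal place, 1.68 gives the 1.7 figure reported in the benchmarks.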

The problems run deeper than bugs. A Stanford and MIT joint study published in March 2026 analyzed over two million AI-generated code snippets and found 14.3 percent contained at least one security vulnerability, compared to 9.1 percent in human-written code. Performance inefficiencies appear eight times more frequently in AI code. Furthermore, GitClear’s research across 211 million lines found heavy AI users generate nine times more code churn than developers who don’t rely on AI.

The “almost right” problem frustrates developers most. Sixty-six percent name AI solutions that are almost, but not quite, correct as their biggest complaint. Forty-five percent say debugging AI code takes more time than debugging did before they adopted AI tools. Ninety-six percent struggle to trust that AI-generated code is functionally correct, yet only 48 percent always verify it before committing. That is a dangerous verification gap.

Why AI Code Quality Falls Short

AI coding tools pattern-match rather than understand. They generate code that compiles and looks reasonable but lacks awareness of system-wide context, edge cases, and security implications. This creates specific failure modes that plague AI-generated code.

Silent failures are particularly dangerous. Recent large language models often generate code that runs without syntax errors or obvious crashes but doesn’t perform as intended. The code might remove safety checks to “make it work” or create fake output instead of real functionality. It passes basic tests but fails in production.
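A small, hypothetical illustration of a silent failure: the two functions below are invented for this example and do not come from any of the cited reports. The first swallows bad input to “make it work”, while the second keeps the safety check. A happy-path test cannot tell them apart.

```python
def parse_amount_silent(raw: str) -> float:
    """Hypothetical 'make it work' version: hides bad input by returning 0.0."""
    try:
        return float(raw)
    except ValueError:
        return 0.0  # silent failure: a malformed amount becomes a zero charge


def parse_amount_strict(raw: str) -> float:
    """Version that keeps the safety check and surfaces bad input."""
    try:
        return float(raw)
    except ValueError as exc:
        raise ValueError(f"invalid amount: {raw!r}") from exc


# A basic test passes for both implementations.
assert parse_amount_silent("19.99") == parse_amount_strict("19.99") == 19.99

# Only an edge-case input (comma as decimal separator) exposes the difference.
print(parse_amount_silent("19,99"))  # prints 0.0 instead of raising
```

The silent version never crashes, which is exactly why it can pass review and basic tests and still misbehave in production.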

Over-editing compounds the problem. AI modifies code beyond the necessary scope, introducing unnecessary changes and reducing efficiency. Meanwhile, code duplication increases because AI doesn’t find existing functions—it creates duplicates instead. This leads to inconsistent business logic scattered across the codebase. Update one function and miss the duplicate, and you have conflicting implementations.
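One way a team might surface exact duplicates is to fingerprint function bodies with Python’s standard `ast` module. The sketch below is a minimal illustration under that assumption: `find_duplicate_functions` and the sample functions are hypothetical names, and the check only catches functions whose parameters and bodies are structurally identical.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(source: str) -> list[list[str]]:
    """Group function names whose parameters and bodies are structurally identical."""
    groups = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # ast.dump omits line numbers by default, so identical code placed
            # at different positions produces the same fingerprint.
            fingerprint = ast.dump(node.args) + "".join(
                ast.dump(stmt) for stmt in node.body
            )
            groups[fingerprint].append(node.name)
    return [names for names in groups.values() if len(names) > 1]


SAMPLE = '''
def net_price(amount, rate):
    return amount * (1 - rate)

def discounted_total(amount, rate):
    return amount * (1 - rate)

def gross_price(amount, rate):
    return amount * (1 + rate)
'''

print(find_duplicate_functions(SAMPLE))  # [['net_price', 'discounted_total']]
```

Near-duplicates with renamed variables or reordered statements would slip past this check, so it is a starting point, not a full clone detector.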

The Verification Burden: Time Reviewing, Not Writing

Developers complete 21 percent more tasks with AI and merge 98 percent more pull requests. That sounds like productivity. However, when you check the review data, pull request review time increased 91 percent. The bottleneck shifted from writing code to verifying it.

In 2024, developers spent 9.8 hours per week writing new code. In 2026, they spend 11.4 hours per week reviewing AI-generated code—more time reviewing than writing. The net result contradicts the productivity narrative. Experienced developers were 19 percent slower with AI tools despite reporting they felt 20 percent faster. Feeling productive isn’t the same as being productive.

Projects that lean too heavily on AI produce 41 percent more bugs. The speed gains evaporate when those bugs hit production. Therefore, quality processes must scale with AI adoption, or the verification burden cancels any time saved during code generation.

When to Use AI Coding Tools and When to Avoid Them

AI excels at boilerplate code such as CRUD endpoints, server setup, and repetitive patterns, where errors are easy to catch and have minimal consequences. It also works well for rapid prototyping, test scaffolding with human verification, and explaining existing codebases.

Avoid AI for complex business logic, security-critical features like authentication and encryption, and sensitive codebases in healthcare, finance, or defense where sending code to cloud APIs isn’t an option. AI lacks strategic judgment for architectural decisions and fails on projects requiring deep context.

The best approach combines both. Use AI selectively for what it handles well, and verify rigorously. Hold AI code to higher test coverage: 85 to 90 percent, versus 70 to 80 percent for human code. Layer the verification: AI review first, human review second, comprehensive testing third. Track AI-related defects systematically instead of treating them as anecdotal.
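The differing coverage targets could be enforced as a simple merge gate. The sketch below assumes the team labels AI-assisted changes and uses the lower bound of each range quoted above as the threshold. `ChangeSet`, `passes_coverage_gate`, and the constants are hypothetical names, not part of any specific tool.

```python
from dataclasses import dataclass

# Lower bounds of the coverage ranges quoted above; a team would tune these.
MIN_COVERAGE_AI = 85.0
MIN_COVERAGE_HUMAN = 70.0


@dataclass
class ChangeSet:
    name: str
    coverage_percent: float
    ai_generated: bool  # assumption: AI-assisted changes are labeled as such


def passes_coverage_gate(change: ChangeSet) -> bool:
    """Apply the stricter threshold when the change is AI-generated."""
    required = MIN_COVERAGE_AI if change.ai_generated else MIN_COVERAGE_HUMAN
    return change.coverage_percent >= required


human_change = ChangeSet("refactor-billing", coverage_percent=78.0, ai_generated=False)
ai_change = ChangeSet("generated-crud", coverage_percent=78.0, ai_generated=True)

print(passes_coverage_gate(human_change))  # True
print(passes_coverage_gate(ai_change))     # False
```

The same 78 percent coverage passes for a human-written change and fails for an AI-generated one, which is the asymmetry the guideline asks for.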

Meta’s just-in-time testing demonstrates the path forward. Instead of relying on static test suites, it generates tests during code review using LLMs, mutation testing, and intent-aware workflows. This approach improved bug detection fourfold in AI-assisted development.
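Mutation testing, one of the ingredients named above, is easy to show in miniature. The sketch below is not Meta’s implementation; it is a generic toy with a hypothetical `is_adult` function, one mutation operator that turns `>=` into `>`, and two test functions of different strength.

```python
import ast

SOURCE = '''
def is_adult(age):
    return age >= 18
'''


class FlipGtE(ast.NodeTransformer):
    """Mutation operator: replace every `>=` comparison with `>`."""

    def visit_Compare(self, node):
        self.generic_visit(node)
        node.ops = [ast.Gt() if isinstance(op, ast.GtE) else op for op in node.ops]
        return node


def load(tree):
    """Compile a module AST and return its is_adult function."""
    namespace = {}
    exec(compile(tree, "<mutant>", "exec"), namespace)
    return namespace["is_adult"]


def weak_test(fn):
    # Checks only values far from the boundary.
    return fn(30) is True and fn(5) is False


def strong_test(fn):
    # Adds the boundary case that the mutation changes.
    return weak_test(fn) and fn(18) is True


original = load(ast.parse(SOURCE))
mutant = load(ast.fix_missing_locations(FlipGtE().visit(ast.parse(SOURCE))))

print("weak test kills mutant:", not weak_test(mutant))      # False
print("strong test kills mutant:", not strong_test(mutant))  # True
```

A mutant that survives, as it does under the weak test here, points to a missing test case. In this toy the gap is the boundary value 18.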

2026: From Speed to Quality

The industry is shifting. 2025 was the year of AI speed. 2026 is the year of AI quality. Companies now track AI-related defects with the same rigor as security incidents, and quality metrics are replacing speed metrics: defect density matters more than cycle time.

Multi-agent validation workflows are emerging. One agent writes code, another critiques it, a third tests it, a fourth validates compliance. This replaces single-agent generation with systematic verification. The reality check is simple: AI code can be four times faster but ten times riskier. Early adopters hit quality walls. Enterprises now demand reliability over velocity.

The trust gap between 84 percent adoption and 29 percent trust isn’t a perception problem. It’s a quality problem backed by benchmark data. AI coding tools work when used strategically—for the right tasks, with proper verification, and realistic expectations. The promise of faster and better code is half-fulfilled. Speed arrived. Quality is still catching up.