Hono, a Web Standards-based framework that runs identically on Cloudflare Workers, Deno, Bun, AWS Lambda, and Node.js, surged to 29,449 GitHub stars with 26.6% month-over-month NPM download growth in March 2026. At just 14KB minified and 402,820 operations per second in benchmarks, it fills the edge computing framework gap that Express and Fastify can't. Edge computing crossed from experimental to production-ready in 2026, and Hono provides the developer experience that makes edge deployment feel as simple as traditional serverless, but with global distribution, 0ms cold starts, and substantially lower costs for high-volume APIs.

Why Edge APIs Need a New Framework

Express and Fastify are Node.js-only frameworks. Running them on edge runtimes like Cloudflare Workers requires polyfills and compatibility layers that bloat bundles and add complexity. Hono takes the opposite approach. It is built on Web Standards: the Fetch API's `Request` and `Response`, plus the shared runtime APIs that the WinterCG community group works to align. Cloudflare Workers, Deno, and Bun implement these APIs natively. As a result, the same codebase runs everywhere without modification.
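To make that shared contract concrete, here is a minimal sketch of a handler written only against Web Standard `Request` and `Response` objects, with no framework. The `/hello` route and its JSON body are illustrative assumptions. A Workers module exports an object of this shape as its default export. A Hono app exposes the same `fetch(request)` method, which is what lets one codebase move between runtimes.

```typescript
// A framework-free handler that uses only Web Standard APIs
// (URL, Request, Response), so it runs unchanged on runtimes that
// implement them: Cloudflare Workers, Deno, Bun, and Node.js 18+.
const handler = {
  fetch(request: Request): Response {
    const url = new URL(request.url)

    // Hypothetical route, for illustration only
    if (request.method === 'GET' && url.pathname === '/hello') {
      const name = url.searchParams.get('name') ?? 'world'
      return new Response(JSON.stringify({ message: `Hello, ${name}!` }), {
        headers: { 'content-type': 'application/json' },
      })
    }

    return new Response('Not Found', { status: 404 })
  },
}

// On Workers or Bun this object is the module's default export;
// on Deno it could be served with Deno.serve(handler.fetch).
export default handler
```

Calling `handler.fetch(new Request('https://example.com/hello?name=Hono'))` returns a 200 JSON response. Any other path returns a 404. No runtime-specific import is involved in either case.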

The performance difference is stark. Express weighs 572KB minified, while Hono weighs 14KB. Express on AWS Lambda suffers 100-1000ms cold starts while Lambda initializes a fresh execution environment. Hono on Cloudflare Workers has 0ms cold starts because Workers run code in V8 isolates instead of containers. In Hono's published Workers benchmark, it reaches 402,820 ops/sec, ahead of the other routers tested. This isn't a marginal improvement. It's a different execution model.

Express made sense when backends ran on dedicated servers as long-lived processes that stayed up for days or months. Edge computing, however, demands lightweight, globally distributed code that initializes instantly. That's why frameworks built for edge runtimes, rather than retrofitted Node.js tools, are winning adoption.

Deploy an Edge API in Under 5 Minutes

Forget VPS provisioning, DNS configuration, and SSL certificate setup. Hono's create-hono starter scaffolds a production-ready API and deploys it to 300+ global edge locations in under 5 minutes. Run these commands, choosing the `cloudflare-workers` template when prompted:

```shell
npm create hono@latest my-api
cd my-api
npm install
npm run deploy
```

Your API is now live at a *.workers.dev subdomain or custom domain, distributed globally with 0ms cold starts. No server to provision. No regions to select. No CDN to configure. In other words, Cloudflare Workers handle global distribution automatically. This is why edge deployment is eating into traditional VPS and serverless markets: the barrier to entry collapsed.

Production Patterns: Auth, Rate Limiting, Caching

Production APIs require authentication, rate limiting, request validation, and caching. Hono provides built-in middleware and integrates cleanly with Cloudflare’s platform services. The production API gateway pattern popularized in 2026 follows this flow: Auth → Rate Limit → Cache Check → Proxy → Log, with total overhead under 20ms.
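The ordering matters because each stage can end the request early. Below is a minimal, framework-free sketch of that flow as an onion-style middleware chain. It is similar in spirit to how Hono runs `app.use` middleware, but it is not Hono's implementation. The `Ctx` shape, the in-memory `Map` cache, and the stubbed proxy are all illustrative assumptions. The logger sits outermost so that it runs last, once the response status is known.

```typescript
// Illustrative request context (not Hono's Context type)
interface Ctx {
  url: string
  headers: Record<string, string>
  trace: string[] // records which stages ran, in order
  status?: number
  body?: string
}
type Next = () => Promise<void>
type Middleware = (ctx: Ctx, next: Next) => Promise<void>

// Run middleware in order; each one decides whether to call next()
function compose(stack: Middleware[]): (ctx: Ctx) => Promise<void> {
  const dispatch = (ctx: Ctx, i: number): Promise<void> =>
    i < stack.length ? stack[i](ctx, () => dispatch(ctx, i + 1)) : Promise.resolve()
  return (ctx) => dispatch(ctx, 0)
}

const cache = new Map<string, string>() // stand-in for the Cache API

const log: Middleware = async (ctx, next) => {
  await next() // outermost: logs after every inner stage has finished
  ctx.trace.push(`log ${ctx.status}`)
}
const auth: Middleware = async (ctx, next) => {
  ctx.trace.push('auth')
  if (!ctx.headers['authorization']) {
    ctx.status = 401 // short-circuit: later stages never run
    return
  }
  await next()
}
const rateLimit: Middleware = async (ctx, next) => {
  ctx.trace.push('rate-limit') // a real limiter could set 429 and return here
  await next()
}
const cacheCheck: Middleware = async (ctx, next) => {
  const hit = cache.get(ctx.url)
  if (hit !== undefined) {
    ctx.trace.push('cache hit')
    ctx.status = 200
    ctx.body = hit
    return // skip the proxy entirely
  }
  ctx.trace.push('cache miss')
  await next()
  if (ctx.status === 200 && ctx.body !== undefined) cache.set(ctx.url, ctx.body)
}
const proxy: Middleware = async (ctx) => {
  ctx.trace.push('proxy') // stub for forwarding to the upstream service
  ctx.status = 200
  ctx.body = `upstream response for ${ctx.url}`
}

const handle = compose([log, auth, rateLimit, cacheCheck, proxy])
```

Here is how the three cases play out:

* A first authenticated request traces `auth, rate-limit, cache miss, proxy, log 200`.
* A repeat request for the same URL stops at `cache hit`.
* An unauthenticated request ends at `auth` with a 401.

Swapping `auth` and `cacheCheck` would let cache hits skip authentication too. That is only safe for public responses.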

JWT Authentication (Web Crypto API, No Polyfills)

Hono’s built-in JWT middleware uses the Web Crypto API—the same standard implemented across Workers, Deno, and Bun. No jsonwebtoken package needed (which requires Node.js polyfills on edge runtimes):

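```typescript
import { jwt } from 'hono/jwt'

// c.env is only available per request, so build the middleware inside a handler
app.use('/api/*', (c, next) => jwt({ secret: c.env.JWT_SECRET, alg: 'HS256' })(c, next))
```

Invalid or missing tokens return 401 automatically. Verified token payloads are stored on the request context, where downstream handlers read them with `c.get('jwtPayload')`. Overhead: 1-2ms per request.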
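To show what "Web Crypto, no polyfills" means in practice, here is a minimal sketch of HS256 signing and verification written directly against `crypto.subtle`. Workers, Deno, Bun, and recent Node.js releases all provide that API. This is not Hono's implementation. `signHs256` and `verifyHs256` are hypothetical helpers, and the sketch checks only the signature, the `alg` header, and `exp`.

```typescript
const encoder = new TextEncoder()
const decoder = new TextDecoder()

// base64url helpers: JWTs use the URL-safe alphabet without padding
function toBase64Url(bytes: Uint8Array): string {
  let binary = ''
  for (let i = 0; i < bytes.length; i++) binary += String.fromCharCode(bytes[i])
  return btoa(binary).replace(/\+/g, '-').replace(/\//g, '_').replace(/=+$/, '')
}
function fromBase64Url(text: string) {
  const base64 = text.replace(/-/g, '+').replace(/_/g, '/')
  const padded = base64 + '='.repeat((4 - (base64.length % 4)) % 4)
  return Uint8Array.from(atob(padded), (ch) => ch.charCodeAt(0))
}

function hmacKey(secret: string): Promise<CryptoKey> {
  return crypto.subtle.importKey('raw', encoder.encode(secret), { name: 'HMAC', hash: 'SHA-256' }, false, ['sign', 'verify'])
}

export async function signHs256(payload: Record<string, unknown>, secret: string): Promise<string> {
  const header = toBase64Url(encoder.encode(JSON.stringify({ alg: 'HS256', typ: 'JWT' })))
  const body = toBase64Url(encoder.encode(JSON.stringify(payload)))
  const signature = await crypto.subtle.sign('HMAC', await hmacKey(secret), encoder.encode(`${header}.${body}`))
  return `${header}.${body}.${toBase64Url(new Uint8Array(signature))}`
}

// Returns the payload if the token is valid, otherwise null
export async function verifyHs256(token: string, secret: string): Promise<Record<string, unknown> | null> {
  const parts = token.split('.')
  if (parts.length !== 3) return null
  const [header, body, signature] = parts
  try {
    // Reject any other algorithm (including "none") before checking the signature
    if (JSON.parse(decoder.decode(fromBase64Url(header))).alg !== 'HS256') return null
    // Let Web Crypto recompute the HMAC over header.body and compare it to the signature
    const valid = await crypto.subtle.verify('HMAC', await hmacKey(secret), fromBase64Url(signature), encoder.encode(`${header}.${body}`))
    if (!valid) return null
    const payload = JSON.parse(decoder.decode(fromBase64Url(body)))
    if (typeof payload.exp === 'number' && payload.exp <= Date.now() / 1000) return null // expired
    return payload
  } catch {
    return null // malformed base64 or JSON
  }
}
```

A token signed with one secret verifies only with that secret. Expired, malformed, or `alg: "none"` tokens come back as `null`, which a middleware would turn into a 401.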

Rate Limiting with Durable Objects

Cloudflare's Durable Objects provide globally consistent state coordination, which makes them a natural fit for distributed rate limiting. In this design, each user gets a Durable Object instance that tracks requests using a sliding window algorithm. The sliding window avoids the fixed-window burst problem, where a client spends its full quota at the end of one window and again at the start of the next:

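```typescript
import { rateLimiter } from 'hono-rate-limiter'

// Mounted on /api/* after the JWT middleware, so the token payload is available
app.use('/api/*', rateLimiter({
  windowMs: 60_000, // 60-second window
  limit: 100, // 100 requests per window, per key
  standardHeaders: true, // send RateLimit-* headers so clients can back off
  // Key on the authenticated user ID, not the client IP
  keyGenerator: (c) => c.get('jwtPayload').sub,
}))
```

To back these counts with Durable Objects as described above, pass a Durable Object-backed `store`. The `@hono-rate-limiter/cloudflare` package provides one, and its documentation covers the binding setup.

Critical gotcha: Don't rate limit by client IP. Many users share one address behind NATs, mobile carriers, and corporate proxies, while abusive clients can rotate addresses cheaply. Use authenticated user IDs or API keys for production systems. Durable Objects overhead: 5-15ms per request, but counts stay globally consistent even across 300+ edge locations.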
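The middleware hides the counting logic. The sketch below shows the per-user state a sliding-window limiter keeps, using the sliding-log variant: one timestamp per allowed request, counted only while it sits inside the trailing window. `SlidingWindowLimiter` is a hypothetical, in-memory class. It is not the `hono-rate-limiter` API or Durable Object code, but a Durable Object per user would hold the equivalent of one key's array. The log is exact but grows with the limit. A sliding-window counter that interpolates between two fixed windows trades a little accuracy for less memory.

```typescript
// Sliding-log rate limiter: remembers when each allowed request happened
// and counts only the ones inside the trailing window.
class SlidingWindowLimiter {
  private readonly windowMs: number
  private readonly limit: number
  private readonly hits = new Map<string, number[]>() // key -> timestamps (ms)

  constructor(windowMs: number, limit: number) {
    this.windowMs = windowMs
    this.limit = limit
  }

  // Returns true if the request is allowed. `now` is injectable for testing.
  tryConsume(key: string, now: number = Date.now()): boolean {
    const cutoff = now - this.windowMs
    // Drop timestamps that have slid out of the window
    const recent = (this.hits.get(key) ?? []).filter((t) => t > cutoff)
    const allowed = recent.length < this.limit
    if (allowed) recent.push(now) // only allowed requests count toward the limit
    this.hits.set(key, recent)
    return allowed
  }
}
```

Take `new SlidingWindowLimiter(1000, 3)` as an example:

* Requests at 997, 998, and 999 ms pass.
* A fourth request at 1000 ms is rejected, even though a fixed one-second window would have just reset.
* The key frees up again as those timestamps age out.

The production settings above correspond to `new SlidingWindowLimiter(60_000, 100)`, called with the user ID as the key.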

Caching with Cache API

The Workers Cache API provides sub-5ms response times for cached GET requests. Check the cache first, serve immediately on hit, process and store on miss:

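```typescript
// Hypothetical read-only endpoint; processRequest stands in for your own handler logic
app.get('/api/products', async (c) => {
  const cache = caches.default // Cloudflare-specific default cache
  const cachedResponse = await cache.match(c.req.url)

  if (cachedResponse) {
    // Headers objects don't spread into plain objects, so copy them explicitly
    const headers = new Headers(cachedResponse.headers)
    headers.set('X-Cache', 'HIT')
    return new Response(cachedResponse.body, { status: cachedResponse.status, headers })
  }

  // Cache miss: process the request, then store successful responses
  const response = await processRequest(c)
  if (response.ok) {
    // waitUntil lets the cache write finish after the response is sent
    c.executionCtx.waitUntil(cache.put(c.req.url, response.clone()))
  }
  return response
})
```

On a cache hit, the request skips the proxy step and upstream processing entirely. For read-heavy APIs, this drops p95 latency from 20ms to under 5ms. Placing the cache check ahead of authentication and rate limiting saves even more, but it is only safe for public responses that are identical for every caller.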

Validation with Zod

Type-safe request validation with Zod middleware catches malformed requests before handlers execute:

```typescript
import { zValidator } from '@hono/zod-validator'
import { z } from 'zod'

app.post('/api/users',
  zValidator('json', z.object({
    email: z.string().email(),
    password: z.string().min(8),
  })),
  async (c) => {
    const { email, password } = c.req.valid('json') // typed as { email: string; password: string }
    // Create the user with email and password...
    return c.json({ email }, 201) // handlers must return a Response
  }
)
```

Invalid data returns 400 with detailed error messages. No manual validation logic needed. TypeScript knows the validated shape downstream.

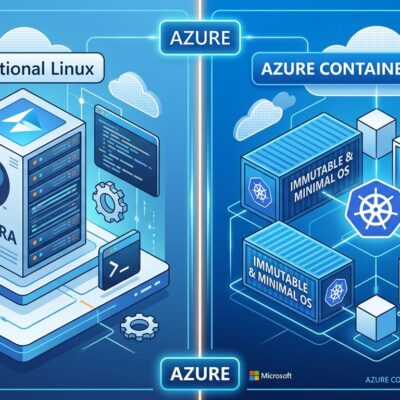

When to Choose Edge vs Traditional Serverless

Edge computing isn't universally better. Workers excel at high-volume, low-processing workloads. CPU-intensive tasks, however, cost more on Workers. At list prices, a millisecond of Workers CPU time costs more than a millisecond of Lambda execution at 1GB of memory, and the gap grows with CPU time. The decision framework: latency requirements + processing time + request volume.

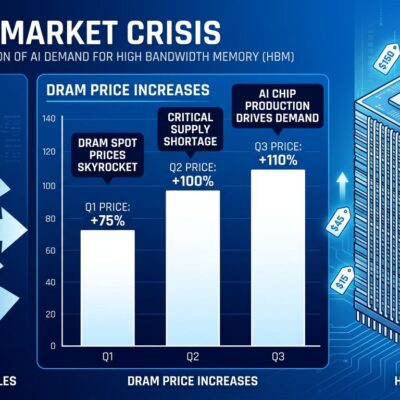

Cost comparison for 10 billion monthly requests with 15ms CPU time each:

* Cloudflare Workers: $6,000 (requests: $3,000, CPU: $3,000).
* AWS Lambda + API Gateway: $12,000 (Lambda requests: $2,000, API Gateway: $10,000). This leaves out Lambda's duration charges, which would only widen the gap.

Workers cost half as much for high-volume APIs. But flip the workload to 1 million requests with 500ms CPU time each. Workers cost $10.30, while Lambda at 1GB of memory costs $8.53 before any API Gateway fees. Lambda wins for CPU-intensive processing. All figures use list prices and ignore free allowances.
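The arithmetic behind these figures can be reproduced with a short calculator. The unit prices are assumptions taken from published list prices:

* Workers: $0.30 per million requests and $0.02 per million CPU-milliseconds.
* Lambda: $0.20 per million requests and $0.0000166667 per GB-second.
* API Gateway HTTP API: $1.00 per million requests.

The calculator ignores free allowances, tiered discounts, and the Workers $5 monthly base fee, so check current pricing pages before relying on it. Workers bills CPU time while Lambda bills wall-clock duration. The sketch treats them as equal, which holds only for CPU-bound work.

```typescript
// Assumed list prices in USD; verify against current pricing pages
const WORKERS_PER_MILLION_REQUESTS = 0.3
const WORKERS_PER_MILLION_CPU_MS = 0.02
const LAMBDA_PER_MILLION_REQUESTS = 0.2
const LAMBDA_PER_GB_SECOND = 0.0000166667
const API_GATEWAY_PER_MILLION_REQUESTS = 1.0

// Workers: request fee plus CPU-time fee (free allowances and base fee ignored)
function workersCost(requests: number, cpuMsPerRequest: number): number {
  const millions = requests / 1e6
  return millions * WORKERS_PER_MILLION_REQUESTS + millions * cpuMsPerRequest * WORKERS_PER_MILLION_CPU_MS
}

// Lambda: request fee plus duration fee (GB-seconds), optionally with API Gateway in front
function lambdaCost(requests: number, durationMsPerRequest: number, memoryGb: number, withApiGateway: boolean): number {
  const millions = requests / 1e6
  const gbSeconds = requests * (durationMsPerRequest / 1000) * memoryGb
  const gateway = withApiGateway ? millions * API_GATEWAY_PER_MILLION_REQUESTS : 0
  return millions * LAMBDA_PER_MILLION_REQUESTS + gbSeconds * LAMBDA_PER_GB_SECOND + gateway
}
```

The calculator reproduces both scenarios:

* `workersCost(10e9, 15)` gives $6,000.
* `lambdaCost(10e9, 15, 0.125, true)` gives about $12,312.50 at 128MB of memory. That is the $12,000 figure plus roughly $312 of duration charges, so Workers still costs about half.
* `workersCost(1e6, 500)` gives $10.30.
* `lambdaCost(1e6, 500, 1, false)` gives about $8.53.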

Choose Workers when: You’re serving millions of requests with lightweight processing (< 50ms CPU), need global distribution, or handle spiky traffic patterns.

Choose Lambda when: Requests involve heavy CPU work (> 50ms), integrate deeply with AWS services, or fit the generous free tier (1M requests/month).

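Skip edge entirely for database-heavy queries or long-running tasks. When the database lives in one region, every query from a distant edge location pays a long round trip, which erases the latency advantage. Centralized compute (Lambda, traditional servers) placed next to the data handles those patterns better. Edge computing is optimized for request routing, auth checks, API proxying, and serving cached content, not data science workloads.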
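The rules of thumb above can be encoded as a small helper, mainly to make the order of the checks explicit. First rule out edge for long-running or database-heavy work. Then use the 50ms CPU threshold and AWS integration to choose between Lambda and Workers. The `Workload` fields and `chooseRuntime` are hypothetical. The 50ms cutoff is the heuristic from the text, not a platform limit.

```typescript
type Runtime = 'workers' | 'lambda' | 'centralized'

interface Workload {
  cpuMsPerRequest: number
  longRunning: boolean // batch jobs, long computations
  databaseHeavy: boolean // many round trips to a single-region database
  deepAwsIntegration: boolean
}

const CPU_THRESHOLD_MS = 50 // heuristic from the text, not a platform limit

function chooseRuntime(w: Workload): Runtime {
  // Edge gains vanish when most time goes to a central database or long jobs:
  // keep those near the data (Lambda or a traditional server)
  if (w.longRunning || w.databaseHeavy) return 'centralized'
  // Heavy CPU work favors Lambda's pricing; AWS-native apps benefit from staying in AWS
  if (w.cpuMsPerRequest > CPU_THRESHOLD_MS || w.deepAwsIntegration) return 'lambda'
  // Lightweight, high-volume, globally distributed traffic: the edge's sweet spot
  return 'workers'
}
```

A 5ms request handler with no special constraints maps to `'workers'`. Raising its CPU time to 500ms or flagging deep AWS integration maps it to `'lambda'`. Marking it database-heavy or long-running maps it to `'centralized'`.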

Gotchas and Limitations

Hono's ecosystem is smaller than Express's. Fewer tutorials exist, and many npm libraries lack edge runtime compatibility. Hono is only a few years old, versus Express's 15+ years of maturity. As a result, expect to write more custom middleware instead of pulling it from npm.

Cloudflare Workers cap bundle size after compression (3MB on the Free plan, 10MB on Paid), so large ORMs like Prisma may not fit. Edge runtimes offer no persistent local file system, so use object storage (R2) or key-value stores (KV) instead. Additionally, CPU time billing means inefficient code costs money directly. Profile and optimize hot paths.

Full-stack architectures that pair Hono with an SSR framework typically need a monorepo setup. In that arrangement, the SSR layer usually calls the API over HTTP instead of as an in-process function call. That network hop adds latency compared with integrated full-stack frameworks.

These aren’t reasons to avoid Hono. They’re trade-offs. You gain performance, portability, and edge deployment. You trade ecosystem maturity and bundle size flexibility. Know the trade before you build.

Key Takeaways

- Hono’s 29K GitHub stars and 26.6% monthly NPM growth signal edge computing’s shift from experimental to production-ready, with Web Standards-based architecture enabling identical code deployment across Cloudflare Workers, Deno, Bun, Lambda, and Node.js

- Express and Fastify require polyfills to run on edge runtimes, but Hono’s native Web Standards foundation achieves 402K ops/sec at 14KB minified with 0ms cold starts versus 100-1000ms for containerized Lambda functions

- Production API patterns (JWT auth at 1-2ms overhead, Durable Objects rate limiting at 5-15ms, Cache API responses under 5ms when cached, and Zod validation) combine for under 20ms total gateway overhead

- Edge computing economics favor high-volume, low-processing APIs (Workers 50% cheaper than Lambda + API Gateway at 10B requests/month) but CPU-intensive workloads cost more due to per-millisecond billing—choose based on processing time, not hype

- Trade-offs include a smaller ecosystem than Express, Workers bundle size limits, no persistent file system, and monorepo complexity for full-stack apps; gains in performance and portability come at the cost of maturity and flexibility