While OpenAI, Anthropic, and Google pour billions into scaling large language models, one of the godfathers of deep learning just raised $1.03 billion to prove they’re all wrong.

On March 10, Yann LeCun announced that Advanced Machine Intelligence Labs (AMI Labs) had secured one of the largest seed rounds ever. The Turing Award winner and former Meta Chief AI Scientist is betting that large language models are “a dead-end for superintelligence.”

LeCun’s thesis: LLMs predict the next word, while AMI Labs is building world models that predict the next state of the world. The difference matters, he argues. World models learn from video and can pick up physics and causality. LLMs learn from text and capture only statistical patterns in language.

The twist: Nvidia co-led the $1.03 billion round—the same company making billions from LLM training chips. When the AI gold rush’s biggest winner hedges its bets, pay attention.

What World Models Actually Do

LLMs are impressive text predictors. Ask them what happens when you drop a glass, and they’ll describe it—but they don’t understand gravity, fragility, or consequences.

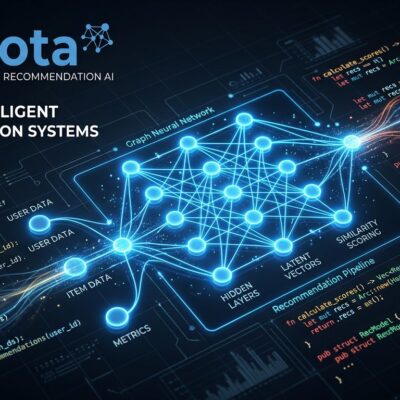

World models work differently. AMI Labs uses JEPA (Joint Embedding Predictive Architecture), LeCun’s framework that learns from video frames instead of text. It predicts in an abstract representation space, focusing on essential features while ignoring irrelevant details.

Feed JEPA sequential video frames, and it learns causality: actions lead to consequences, objects obey physics, time flows forward. Meta published V-JEPA and I-JEPA research, with PyTorch implementations on GitHub.
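To make "predicting in representation space" concrete, here is a toy sketch in plain Python. It is not AMI's or Meta's code. The `encode` and `predict_latent` functions are hand-written stand-ins for what would be learned neural networks in a real JEPA, and the four-number "frame" (position, velocity, two noise values) is a made-up assumption for illustration. The point it shows: a loss computed on the embedding can ignore unpredictable detail that a loss on the raw input cannot.

```python
import random

def encode(frame):
    """Stand-in for a learned encoder: keep position and velocity, drop the rest."""
    return frame[:2]

def predict_latent(z, dt=1.0):
    """Stand-in for a learned predictor: advance position by velocity * dt."""
    pos, vel = z
    return [pos + vel * dt, vel]

def squared_error(a, b):
    """Sum of squared differences between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def make_frame(pos, vel, rng):
    """Hypothetical frame: two essential values plus two random 'texture' values."""
    return [pos, vel, rng.random(), rng.random()]

rng = random.Random(0)
frame_t = make_frame(0.0, 2.0, rng)      # object at 0, moving at 2 units per step
frame_next = make_frame(2.0, 2.0, rng)   # one step later, with fresh noise

# JEPA-style objective: compare the prediction with the target in embedding space.
latent_loss = squared_error(predict_latent(encode(frame_t)), encode(frame_next))

# Naive input-space baseline: predict the essentials and copy the noise forward.
input_space_guess = predict_latent(encode(frame_t)) + frame_t[2:]
input_loss = squared_error(input_space_guess, frame_next)

print("latent-space loss:", latent_loss)
print("input-space loss:", input_loss)
```

The latent-space loss is zero because the motion is fully predictable once the noise is discarded, while the input-space loss stays positive because no model can guess random texture. In a real system the encoder has to learn which details are safe to drop, which is the hard part.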

The architecture is action-conditioned—it can plan physical actions and predict outcomes. That’s why AMI targets robotics, aerospace, and industrial control, where “predict the next word” doesn’t work.
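A rough illustration of what "action-conditioned" buys you: if a model can predict the next state given an action, a planner can try out action sequences in imagination and pick the best one. The sketch below uses a made-up one-dimensional world and exhaustive search over short action sequences, a simple form of model-predictive planning. `world_model`, `ACTIONS`, and the cost function are all hypothetical and stand in for a learned model and a real task objective.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # hypothetical action set: step left, stay, step right

def world_model(state, action):
    """Hypothetical one-step model: predicted next position given an action."""
    return state + action

def rollout(state, actions):
    """Apply the model repeatedly to predict where a sequence of actions ends up."""
    for action in actions:
        state = world_model(state, action)
    return state

def plan(state, goal, horizon=3):
    """Search every action sequence of the given length and return the one whose
    predicted end state is closest to the goal, preferring less movement on ties."""
    def cost(seq):
        distance = abs(rollout(state, seq) - goal)
        effort = sum(abs(a) for a in seq)
        return (distance, effort)
    return min(product(ACTIONS, repeat=horizon), key=cost)

best = plan(state=0, goal=2, horizon=3)
print("chosen actions:", best)
print("predicted end state:", rollout(0, best))
```

Exhaustive search only works for tiny action sets and short horizons. Practical planners sample or optimize over action sequences instead, but the loop is the same: propose actions, predict outcomes with the model, score them, act.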

LeCun argues LLMs have fatal flaws: no physical understanding, no deliberate reasoning, and scaling makes them “more talkative but not more understanding.” AMI’s first partner is Nabla, a health AI startup, signaling that healthcare applications need more than plausible text.

The Nvidia Paradox

Nvidia’s H100 and H200 chips power much of the LLM boom. OpenAI and most other major labs train on Nvidia silicon, although Google relies heavily on its own TPUs. The company’s AI chip dominance has generated tens of billions of dollars in revenue.

Yet Nvidia co-led AMI’s round, funding a startup that claims Nvidia’s biggest customers built the wrong technology.

Why would Nvidia bankroll the anti-LLM bet? Hardware fragmentation risk. If multiple AI paradigms emerge—world models, hybrid systems, alternatives not yet invented—hyperscalers might design custom chips, eroding Nvidia’s market share. Hedging both sides means Nvidia wins regardless of which approach dominates.

Other backers tell a similar story: Bezos Expeditions, Eric Schmidt, Tim Berners-Lee, Samsung, and Toyota. These aren’t venture capitalists chasing hype—they’re tech luminaries and industrial giants betting on fundamental AI architecture shifts.

Reality Check: 3-5 Years Is Forever

AMI’s timeline is aggressive. LeCun expects “fairly universal intelligent systems” deployable across domains in 3-5 years. That’s from a four-month-old company with zero products.

Meanwhile, LLMs aren’t standing still. Claude Opus 4.6 launched with a 1 million token context. Gemini 3.1 Pro’s reasoning scores doubled in one generation. OpenAI, Anthropic, and Google are addressing LeCun’s critiques with improved reasoning, grounding, and multimodality.

The race is on: Can AMI deliver before LLMs solve physical grounding? AMI has no revenue plans, focusing on R&D and testing with partners. Raising $1 billion buys patience, but aerospace and healthcare need proven reliability, not research demos.

What Developers Should Do

The emerging consensus: this isn’t replacement, it’s integration. Hybrid systems will combine LLMs for language understanding with world models for physical planning.

The practical take: Keep using LLMs for code generation and text tasks. But watch JEPA research on GitHub. If you’re building robotics or autonomous systems, world models are becoming critical. For enterprise apps, LLMs still dominate.

LeCun pioneered convolutional neural networks and helped make backpropagation practical for training them, foundational work behind modern AI. When he says the industry took a wrong turn, people listen.

But declaring LLMs dead while LLM-based coding assistants are part of everyday development on GitHub is premature. Nobody knows which approach wins. Hedge your bets. Learn both paradigms. Use LLMs today. Prepare for world models tomorrow. And watch Nvidia’s next move: the company is betting on all sides, which tells you how uncertain this race really is.