Mistral AI launched Forge yesterday at NVIDIA’s GTC conference. The platform lets enterprises train custom AI models from scratch rather than only fine-tune existing ones. While OpenAI and Anthropic compete for viral consumer growth, the French startup is betting that enterprises will pay premium prices for full control over training. Mistral is on track to hit $1 billion in annual recurring revenue (ARR) this year, with customers including Ericsson, the European Space Agency, and ASML.

The launch marks a fundamental strategic split in AI. Mistral is making an AWS-style infrastructure play, providing the training rails so enterprises don’t have to build them. But the uncomfortable question remains: is this actually necessary, or is it the new Kubernetes, powerful but overkill?

Why Mistral Claims Fine-Tuning Isn’t Enough

Mistral argues that fine-tuning and retrieval-augmented generation (RAG) are insufficient for true enterprise customization. CEO Arthur Mensch frames it bluntly: “Fine-tuning gets you to a proof-of-concept state. Whenever you want the performance you’re targeting, you need to go beyond.” The quote, from TechCrunch’s coverage, captures the company’s core positioning.

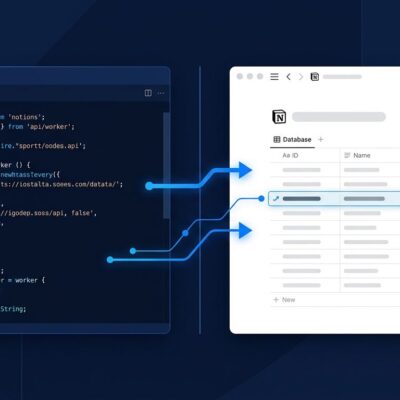

Forge supports the full training lifecycle: pre-training on internal datasets, post-training refinement, and reinforcement learning to align models with internal policies. The platform also packages Mistral’s internal methodologies, including the data-mixing strategies and distributed-computing optimizations the company developed while building its own flagship models.

The use cases reveal the target market. Government agencies need models for specific languages and policy frameworks. Financial institutions require strict compliance alignment. Manufacturers possess domain-specific knowledge buried in decades of internal documentation. These are the organizations where, Mistral argues, fine-tuning falls short because the knowledge they need differs fundamentally from anything in public training data.

The Debate: Necessary or Expensive Overkill?

The prevailing industry view cuts the other way: fine-tuning is widely held to cover roughly 90% of enterprise use cases. It achieves solid results with 1,000 to 10,000 examples and takes weeks. Training from scratch needs millions of examples, takes months, and costs 10 to 100 times more.

For most enterprises, it’s the equivalent of building your own data center when AWS exists: technically possible, occasionally necessary, usually wasteful. We’ve seen this pattern before with Kubernetes: powerful infrastructure that 80% of adopters didn’t actually need, leading to expensive complexity.

The core question is whether you can achieve 90% of the desired results with fine-tuning plus RAG at 10% of the cost. Mistral is betting that the remaining 10% of cases where the answer is “no” (European governments, highly regulated institutions, specialized manufacturers) will generate enough revenue to justify building enterprise AI infrastructure.
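The trade-off in that question is simple arithmetic. The Python sketch below treats the article’s rough figures (about 90% of the quality, and a 10x to 100x cost premium for training from scratch) as illustrative inputs rather than measured data; `relative_value` is a hypothetical helper, not part of any Mistral tooling.

```python
def relative_value(quality_fraction: float, cost_multiple: float) -> float:
    """Quality per dollar of the cheaper approach, relative to the expensive one.

    quality_fraction: share of the expensive approach's quality the cheap one reaches.
    cost_multiple: how many times more the expensive approach costs.
    """
    # Expensive approach (training from scratch): quality 1.0 at normalized cost 1.0.
    expensive = 1.0 / 1.0
    # Cheap approach (fine-tuning + RAG): partial quality at 1/cost_multiple of the cost.
    cheap = quality_fraction / (1.0 / cost_multiple)
    return cheap / expensive


# Illustrative inputs only: ~90% of the quality, at each end of the 10-100x cost range.
for premium in (10, 100):
    print(f"{premium}x premium: fine-tuning + RAG yields "
          f"{relative_value(0.9, premium):.0f}x more quality per dollar")
```

Under these assumptions, training from scratch only pays off when the last 10% of quality is worth far more than the rest, which is precisely the niche Mistral is targeting.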

Two Paths to AI Dominance: Infrastructure vs Consumer

While OpenAI and Anthropic chase consumer mindshare through ChatGPT and Claude, Mistral is selling picks and shovels. This is the AWS playbook: provide infrastructure while others mine for gold. So far the numbers support the strategy. Mistral hit $400 million ARR in January 2026, up from $16 million at the end of 2024. That’s 25x growth in about 13 months, driven by enterprise customers.
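The growth figures are easy to check. This Python sketch uses the article’s two ARR data points and assumes a 13-month span, since the source gives only “end of 2024” and “January 2026”; the helper names are illustrative.

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger `end` is than `start`."""
    return end / start


def implied_monthly_rate(multiple: float, months: int) -> float:
    """Constant month-over-month growth rate that compounds to `multiple`."""
    return multiple ** (1 / months) - 1


start_arr = 16e6   # ARR at end of 2024, USD (figure from the article)
end_arr = 400e6    # ARR in January 2026, USD (figure from the article)
months = 13        # assumption: end of December 2024 to end of January 2026

multiple = growth_multiple(start_arr, end_arr)
rate = implied_monthly_rate(multiple, months)
print(f"{multiple:.0f}x growth, about {rate:.0%} per month compounded")
```

Shortening the window to 12 months would push the implied monthly rate slightly higher; the 25x multiple itself does not depend on the window.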

History suggests infrastructure plays can be more defensible than consumer platforms. AWS outlasted countless consumer web services. Snowflake built a data warehouse empire while flashier startups collapsed. Mistral is also doubling down with €1.2 billion invested in a European AI data center, positioning itself as the infrastructure provider for organizations that will never use public AI services due to data sovereignty or regulatory requirements.

Who Mistral Forge Is For (And Who Should Skip It)

Forge isn’t for typical startups. It’s designed for organizations with four specific requirements: (1) millions of proprietary data points in unique domains, (2) strict regulatory requirements preventing use of public AI, (3) budgets to justify 10-100x higher costs, and (4) internal ML expertise to manage ongoing training.
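Taken together, the four criteria work as an all-or-nothing checklist. Here is a minimal Python sketch, assuming a hypothetical `Organization` record and an arbitrary one-million cutoff for “millions of proprietary data points”; none of these names or thresholds come from Mistral or Forge.

```python
from dataclasses import dataclass

# Hypothetical cutoff standing in for "millions of proprietary data points".
MIN_DATA_POINTS = 1_000_000


@dataclass
class Organization:
    # All fields are hypothetical inputs for illustration.
    proprietary_data_points: int      # size of unique, in-domain data
    public_ai_prohibited: bool        # regulation rules out public AI services
    can_absorb_10_to_100x_cost: bool  # budget justifies the training premium
    has_in_house_ml_team: bool        # staff to manage ongoing training


def unmet_forge_requirements(org: Organization) -> list[str]:
    """Return which of the article's four requirements the organization misses."""
    checks = {
        "millions of proprietary data points": org.proprietary_data_points >= MIN_DATA_POINTS,
        "regulatory bar on public AI": org.public_ai_prohibited,
        "budget for a 10-100x premium": org.can_absorb_10_to_100x_cost,
        "in-house ML expertise": org.has_in_house_ml_team,
    }
    return [name for name, met in checks.items() if not met]


# A typical startup: modest data, no regulatory barrier, limited budget, some ML staff.
startup = Organization(50_000, False, False, True)
print(unmet_forge_requirements(startup))
```

An empty list means all four criteria are met; any entry in the list suggests that fine-tuning plus RAG is the better fit.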

The customer list fits the pattern. Government agencies need models trained on specific languages and regulatory texts that foundation models don’t understand. The European Space Agency needs AI trained on space mission data. ASML trains models on semiconductor manufacturing processes. Ericsson needs telecommunications infrastructure knowledge embedded at the model level.

If you’re not a government agency, a highly regulated financial institution, or a specialized manufacturer with a multimillion-dollar AI budget, you probably don’t need Forge. Fine-tuning plus RAG will likely deliver 90% of the value at 10% of the cost. Knowing when not to use cutting-edge technology is as valuable as knowing when to use it.

Does This Strategy Win Long-Term?

Mistral is betting that AI infrastructure follows the cloud infrastructure pattern—a few providers own the rails. But there’s a counter-argument: open-source training frameworks could commoditize what Mistral is selling, just as open-source software challenged proprietary platforms.

The winner depends on whether “training from scratch” becomes a common enterprise need or remains niche. The market will likely segment, with specialized industries adopting Forge while typical companies stick to fine-tuning and RAG. And as foundation models improve, the gap between fine-tuned and custom-trained models may narrow, further shrinking Forge’s addressable market.

For now, Mistral has found customers willing to pay for full control. Whether that’s a $1 billion annual business or a temporary advantage before open-source alternatives catch up remains an open question.