NVIDIA GTC 2026 (March 16-19) revealed a two-architecture roadmap that will reshape AI hardware over the next three years. The Vera Rubin platform (2026-2027) combines seven chips engineered as one AI supercomputer for agentic workloads. The Feynman generation (2028) introduces the Rosa CPU alongside a custom 3D die-stacked GPU design. CEO Jensen Huang projects $1 trillion in orders through 2027, double the previous $500 billion estimate, driven by enterprise agentic AI deployment. The pace signals accelerating evolution: new architectures now arrive roughly every 2 years (down from roughly 3), forcing developers to rethink infrastructure planning.

Vera Rubin: Seven Chips, One Agentic AI Supercomputer

Vera Rubin isn’t just a new GPU lineup—it’s seven chips co-designed to work as one unified AI supercomputer. The platform includes Vera CPU (88 cores, 1.2 TB/s bandwidth), Rubin GPU (50 petaFLOPS, 22 TB/s HBM), NVLink 6 (3.6 TB/s GPU-to-GPU bandwidth), ConnectX-9 (1.6 Tb/s networking), BlueField-4 (storage and security), Spectrum-6 (scale-out networking), and Groq 3 LPU (ultra-low latency inference). The architecture targets agentic AI workloads—MoE models with dynamic communication, reasoning pipelines interleaving tool use, and long-running training sessions—not just dense matrix multiplication.

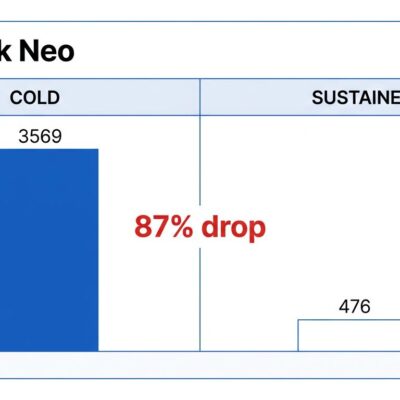

NVIDIA’s performance claims reflect this specialized design. Compared to Blackwell, the company cites one-tenth the cost per million tokens for agentic AI, 4x fewer GPUs needed to train MoE models, and 10x more performance per watt. The design philosophy prioritizes adaptive execution across compute-heavy kernels, memory-intensive attention, and communication-bound expert dispatch. As a result, GPUs stay productive across all execution phases instead of being tuned for only one type of workload.

Cloud adoption validates the approach. AWS announced plans to deploy more than 1 million NVIDIA GPUs starting in 2026, spanning Blackwell, Rubin, and Groq 3 LPU architectures. Microsoft Azure became the first hyperscaler to deploy Vera Rubin NVL72 systems, adding them to its existing liquid-cooled Grace Blackwell infrastructure. Oracle integrated GPU-accelerated vector index builds through Oracle Private AI Services Container. This isn’t speculative infrastructure; it’s production deployment at hyperscale.

Feynman 2028: Why Two Architectures in Three Years?

The Feynman generation (2028) reveals more than NVIDIA’s next product: it exposes a fundamental shift in AI hardware cadence. The timeline runs Blackwell (2024-2025) → Vera Rubin (2026-2027) → Feynman (2028), compressing architecture cycles from roughly 3 years to roughly 2. This acceleration isn’t arbitrary. Computing demand “increased 1 million times over the last few years,” according to Jensen Huang, and agentic AI workloads require different infrastructure than pure training did.

Feynman introduces custom silicon integration beyond commodity components. The architecture features NVIDIA’s first 3D die-stacked GPU design, custom HBM (not off-the-shelf HBM4), and the Rosa CPU (named after DNA structure pioneer Rosalind Franklin), which is purpose-built for data and token movement. The LP40 LPU, developed jointly with Groq, connects via NVLink for efficient GPU collaboration. The platform stack also includes the BlueField-5 DPU, NVLink-8 interconnect, Spectrum-7 networking, and CX10 connectivity.

The shift to custom memory integration matters. Commodity HBM works for general compute, but agentic AI’s communication patterns—expert routing in MoE models, tool-use coordination, persistent context management—benefit from memory architectures tuned specifically for those access patterns. NVIDIA is betting that AI workload diversity now justifies custom silicon beyond GPUs alone. That’s an expensive bet, and one that raises barriers for competitors trying to match performance.

$1 Trillion Orders: Market Demand Backs Architectural Pace

Jensen Huang’s $1 trillion order projection through 2027 is double the previous $500 billion estimate through 2026. This isn’t just confidence; it’s backed by concrete deployment commitments. AWS committed to deploying over 1 million NVIDIA GPUs across global regions starting in 2026. Microsoft deployed hundreds of thousands of liquid-cooled Grace Blackwell GPUs and was first to power Vera Rubin NVL72 systems. Oracle announced OCI Supercluster with Vera Rubin integration.

NVIDIA Cloud Partners have cumulatively deployed more than 1 million GPUs representing 1.7+ gigawatts of AI capacity, doubling their AI factory footprint year-over-year. Sovereign AI initiatives compound this demand: Germany’s Sovereign AI Factory Frankfurt (powered by Polarise), Malaysia’s use of Nemotron 3 for its localized ILMU LLM family, and the European SOOFI consortium’s training of sovereign open source models. Agentic AI isn’t replacing training infrastructure. It’s additive, requiring different compute profiles for real-time inference, reasoning chains, and tool orchestration.
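Dividing the two Cloud Partner figures gives a rough sense of scale. The sketch below is back-of-the-envelope arithmetic only: the function name is hypothetical, both inputs are treated as exact even though the source states them as lower bounds, and the capacity figure presumably covers more than the GPUs themselves (cooling, networking, host systems), so the result should be read as facility power per GPU rather than chip power.

```python
def kw_per_gpu(capacity_gw: float, gpu_count: float) -> float:
    """Average facility power per GPU in kilowatts (1 GW = 1e6 kW).

    Assumption: capacity_gw is total AI capacity, not GPU board power.
    """
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return capacity_gw * 1e6 / gpu_count


if __name__ == "__main__":
    # Figures from the text: 1.7+ GW across more than 1 million GPUs.
    print(f"{kw_per_gpu(1.7, 1_000_000):.2f} kW per GPU")
```

On those inputs the average comes out to about 1.7 kW per deployed GPU, which helps explain why power availability appears among the adoption constraints.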

The question isn’t whether $1 trillion in orders is plausible—hyperscaler commitments already substantiate significant portions. The question is whether enterprises can deploy and utilize this capacity fast enough to justify continued exponential spending. Infrastructure lead times, power constraints, and talent scarcity may cap adoption rates regardless of chip availability.

DGX Station: Desktop Supercomputing Goes Mainstream

DGX Station brings data-center-class AI to developers’ desks: a GB300 Grace Blackwell Ultra with 748GB of coherent memory, 20 petaFLOPS of AI compute, a 72-core Neoverse V2 CPU, and a 900 GB/s CPU-GPU interconnect via NVLink-C2C. The system supports models of up to 1 trillion parameters and ships with a pre-configured NVIDIA AI software stack, enabling local development of autonomous agents followed by deployment to cloud or data center.
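A quick calculation shows how the 1 trillion parameter figure relates to 748GB of memory. The article does not say which numeric precision the claim assumes, so the sketch below simply compares a few common ones. The helper names and the precision table are illustrative assumptions, and the estimate counts weights only, ignoring KV cache, activations, and runtime overhead.

```python
# Bytes of storage per parameter at common precisions (assumed, weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weights_gb(num_params: float, precision: str) -> float:
    """Weight footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: float, precision: str, memory_gb: float = 748.0) -> bool:
    """True if the weights alone fit in memory_gb."""
    return weights_gb(num_params, precision) <= memory_gb


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        size = weights_gb(1e12, precision)
        print(f"{precision}: {size:.0f} GB, fits in 748 GB: {fits(1e12, precision)}")
```

Under these assumptions, a 1 trillion parameter model needs about 2,000 GB at 16-bit, 1,000 GB at 8-bit, and 500 GB at 4-bit, so only the 4-bit case fits in 748GB with room left for working memory. One plausible reading is that the headline figure refers to low-precision inference rather than full-precision training.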

Systems are shipping now from ASUS, Dell Technologies, GIGABYTE, MSI, and Supermicro, with HP joining later. This matters for several reasons beyond raw specs. First, local development removes cloud dependency for sensitive workloads: healthcare, finance, and defense applications benefit from air-gapped development environments. Second, cost models shift from ongoing cloud opex to one-time capex for teams running continuous training or inference. Third, pairing DGX Station with the NemoClaw open source stack provides a complete platform for building long-running autonomous agents without external dependencies.
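The opex-to-capex argument comes down to a break-even point. The following is a minimal sketch of that comparison, not a pricing model: every dollar figure is a hypothetical placeholder rather than a quoted price, and the function name and parameters are invented for illustration.

```python
def breakeven_hours(capex_usd: float, cloud_usd_per_hour: float,
                    local_usd_per_hour: float = 0.0) -> float:
    """Hours of use after which buying hardware beats renting equivalent cloud capacity.

    local_usd_per_hour covers running costs of the owned machine
    (power, maintenance); all inputs here are hypothetical.
    """
    saving_per_hour = cloud_usd_per_hour - local_usd_per_hour
    if saving_per_hour <= 0:
        raise ValueError("cloud rate must exceed local running cost")
    return capex_usd / saving_per_hour


if __name__ == "__main__":
    # Placeholder inputs: $50,000 purchase, $11/hour cloud, $1/hour local running cost.
    hours = breakeven_hours(capex_usd=50_000, cloud_usd_per_hour=11.0,
                            local_usd_per_hour=1.0)
    print(f"break-even after {hours:.0f} hours of use")
```

With these placeholder inputs the purchase pays for itself after 5,000 hours, or roughly seven months of continuous use. A team that uses the machine only a few hours a week would take years to reach the same point, which is why the capex case is strongest for continuous training or inference.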

The skeptical take: desktop supercomputing still means expensive workstations for large teams, not true democratization. Fair point. But the precedent matters. AI compute at this scale historically required data center access; now it fits under a desk. If that trajectory continues, today’s $50K workstation becomes tomorrow’s $5K developer machine. The shift from “possible only in the cloud” to “possible on a high-end workstation” unlocks new use cases regardless of current price points.

Key Takeaways

- NVIDIA’s two-architecture roadmap (Vera Rubin 2026-2027, Feynman 2028) compresses refresh cycles from 3 years to 2 years, accelerating AI hardware evolution and shortening planning horizons for developers

- Vera Rubin’s 7-chip platform delivers 10x token cost reduction and 4x fewer GPUs for agentic AI workloads compared to Blackwell, validated by AWS deploying 1+ million GPUs and Microsoft deploying liquid-cooled infrastructure

- Feynman 2028 introduces custom 3D die-stacked design and custom HBM (beyond commodity components), signaling NVIDIA’s bet that AI workload diversity justifies full-stack custom silicon integration

- $1 trillion order projection through 2027 (2x previous $500B estimate) is backed by concrete hyperscaler commitments: AWS (1M+ GPUs), Microsoft (Vera Rubin NVL72), Oracle (OCI Supercluster)

- DGX Station (20 petaFLOPS, 748GB memory, 1T parameter support) enables desktop supercomputing for local agent development, shifting from cloud-only to workstation-viable AI infrastructure