Cerebras went public last week. The IPO raised $5.55 billion, the largest U.S. tech IPO since Uber, and the stock surged 90% on its first day of trading. Every financial outlet covered it. Here’s the story that actually matters for developers: Cerebras offers a public inference API that delivers up to 2,700 tokens per second on models like Llama 4 Scout and GPT-OSS 120B. That’s 5–8x faster than Groq and roughly 20–30x faster than standard GPU cloud inference. It’s OpenAI-compatible, and the free tier is available today with no credit card required.

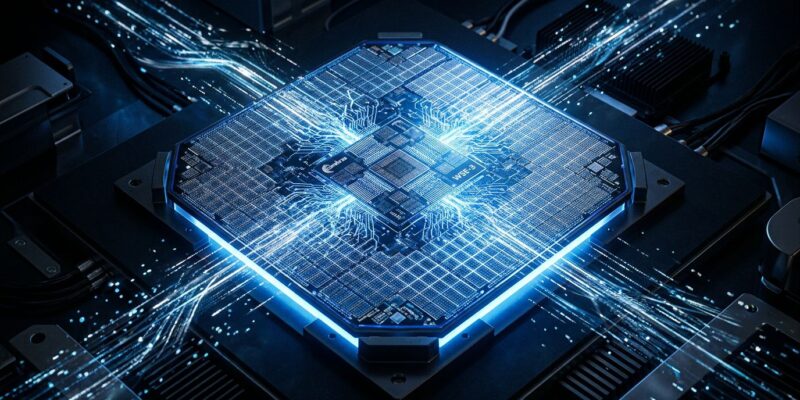

What the WSE-3 Actually Does

The hardware behind this is the Wafer Scale Engine 3, a chip that spans an entire 300mm silicon wafer. It packs 900,000 AI-optimized cores and 44 GB of on-chip SRAM, with 21 petabytes per second of internal memory bandwidth. The critical difference from a GPU: model weights live on-chip. A GPU has to stream its weights in from off-chip HBM on every forward pass, and for large models that round-trip, not the arithmetic, is the bottleneck. The WSE-3 eliminates it, so inference is bound by compute rather than by memory access.
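The memory-bottleneck argument can be checked with back-of-envelope arithmetic. At batch size 1, every generated token requires reading all the weights once, so memory bandwidth divided by model size caps single-stream decode speed. This sketch assumes 16-bit weights (2 bytes per parameter) and roughly 8 TB/s of HBM bandwidth for a B200; that bandwidth figure is an approximation not taken from this article, and the sketch ignores KV-cache traffic and batching. The helper names are hypothetical.

```python
import math


def decode_ceiling_tokens_per_s(params_billion: float, bytes_per_param: float,
                                bandwidth_tb_per_s: float) -> float:
    """Upper bound on single-stream decode speed when each generated token
    requires reading every weight once from memory (batch size 1)."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_per_s * 1e12 / weight_bytes


def wafers_needed(params_billion: float, bytes_per_param: float,
                  sram_gb_per_wafer: float = 44.0) -> int:
    """How many 44 GB SRAM budgets the raw weights alone would fill
    (ignores KV cache and activations)."""
    weight_gb = params_billion * bytes_per_param
    return math.ceil(weight_gb / sram_gb_per_wafer)


# Assumed inputs: a 70B-parameter model with 16-bit weights and
# ~8 TB/s of HBM bandwidth (approximate B200 figure).
print(f"{decode_ceiling_tokens_per_s(70, 2, 8.0):.0f} tokens/s ceiling")
print(wafers_needed(70, 2), "wafers' worth of SRAM for the weights")
```

The same arithmetic suggests a 70B model at 16-bit precision does not fit in one wafer’s 44 GB, so larger models are presumably spread across several systems. GPU clouds push past the single-GPU ceiling by quantizing weights and sharding a model across several GPUs.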

In benchmark terms, Cerebras reports that the CS-3 system running Llama 3 70B reasoning workloads hits 21x the throughput of an NVIDIA B200 at 32% lower total cost of ownership. For GPT-OSS 120B, Cerebras reaches 2,700+ tokens per second versus 900 on the B200. The speed holds up outside vendor material: community benchmarks posted on Hacker News and r/LocalLLaMA report roughly 2,600 tokens per second on the free tier.
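To check throughput figures yourself, time one request and divide by the completion tokens the API reports. This is a minimal sketch, assuming the Cerebras endpoint returns a standard OpenAI-style `usage` object through the OpenAI Python client; `measure_output_speed` is a hypothetical helper and the prompt is arbitrary. Because the timer wraps the whole call, network round-trip and prompt processing are included, so expect a number somewhat below decode-only benchmarks.

```python
import os
import time


def measure_output_speed(client, model: str, prompt: str) -> float:
    """Completion tokens per second of wall-clock time for one request.

    The timer covers the whole call (network, queueing, prompt processing),
    so the result is a lower bound on pure decode speed.
    """
    start = time.perf_counter()
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    elapsed = time.perf_counter() - start
    return response.usage.completion_tokens / elapsed


# Live run only when a key is configured; otherwise this block does nothing.
if __name__ == "__main__" and os.environ.get("CEREBRAS_API_KEY"):
    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.cerebras.ai/v1",
        api_key=os.environ["CEREBRAS_API_KEY"],
    )
    speed = measure_output_speed(
        client,
        "llama-4-scout-17b-16e-instruct",
        "Explain wafer-scale integration in about 500 words.",
    )
    print(f"{speed:,.0f} tokens/s (wall clock, single request)")
```

Short answers understate throughput because fixed overhead dominates; ask for a long completion and average several runs.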

Two Lines to Switch

The Cerebras Inference API is fully compatible with the OpenAI client library. Here’s the complete migration:

```python
import os

from openai import OpenAI

# Point the standard OpenAI client at Cerebras instead of api.openai.com.
client = OpenAI(
    base_url="https://api.cerebras.ai/v1",
    api_key=os.environ["CEREBRAS_API_KEY"],  # raises KeyError if the key is unset
)

response = client.chat.completions.create(
    model="llama-4-scout-17b-16e-instruct",
    messages=[{"role": "user", "content": "Your prompt here"}],
)

print(response.choices[0].message.content)
```

Change the base URL. Change the API key. That’s it. The Cerebras Inference documentation covers additional SDK options, including native Python and TypeScript clients. Available models include Llama 4 Scout 17B, Llama 3.3 70B, Qwen3 32B, and the recently released GPT-OSS 120B. The free tier gives you 1 million tokens per day with a 30 requests/minute cap and an 8,192-token context window, enough for most prototyping workloads.
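Batch scripts and agent loops can blow through a 30 requests/minute cap in seconds. A minimal client-side guard is a sliding-window limiter that blocks until another request fits. This is a sketch, not part of any Cerebras or OpenAI SDK; `SlidingWindowLimiter` is a hypothetical class, it does not track the daily token budget, and it only limits the process it runs in.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` calls in any `window_s`-second window."""

    def __init__(self, max_requests=30, window_s=60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.max_requests = max_requests
        self.window_s = window_s
        self.clock = clock  # injectable for testing
        self.sleep = sleep
        self.stamps = deque()  # start times of requests still inside the window

    def acquire(self) -> None:
        """Block until another request fits in the window, then record it."""
        while True:
            now = self.clock()
            # Drop requests that have aged out of the window.
            while self.stamps and now - self.stamps[0] >= self.window_s:
                self.stamps.popleft()
            if len(self.stamps) < self.max_requests:
                self.stamps.append(now)
                return
            # Wait until the oldest recorded request leaves the window.
            self.sleep(self.window_s - (now - self.stamps[0]))


limiter = SlidingWindowLimiter()  # defaults match the free tier's 30 requests/minute
# Call limiter.acquire() immediately before each client.chat.completions.create(...).
```

Injecting `clock` and `sleep` keeps the limiter testable without real waiting; for several worker processes you would need a shared limiter instead.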

Why Inference Speed Is an Engineering Problem

Inference speed isn’t just a bragging-rights metric. Agentic workflows compound latency across every step: a 10-call reasoning chain at 2 seconds per step means 20 seconds of dead air. At Cerebras speeds, that same chain completes in under 4 seconds. The threshold between “feels like software” and “feels like waiting for an API” is roughly 500ms, and that bar only gets harder to clear as agent architectures add more sequential calls.
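The chain arithmetic is easy to model: each sequential call pays a fixed overhead plus its generation time. The workload below is hypothetical (170 output tokens per step, 0.3 s of per-call overhead for network and prompt processing, 100 tokens/s for a GPU cloud and 2,500 tokens/s for Cerebras); it is chosen only so the GPU case matches the 2-seconds-per-step figure above.

```python
def chain_latency_s(steps: int, tokens_per_step: int,
                    tokens_per_s: float, overhead_s: float) -> float:
    """Wall-clock time for a strictly sequential agent loop.

    Every call pays a fixed overhead (network, queueing, prompt processing)
    plus the time to generate its output tokens.
    """
    return steps * (overhead_s + tokens_per_step / tokens_per_s)


# Hypothetical workload: 10 sequential calls, 170 output tokens each.
gpu = chain_latency_s(10, 170, tokens_per_s=100, overhead_s=0.3)
wafer = chain_latency_s(10, 170, tokens_per_s=2500, overhead_s=0.3)
print(f"GPU cloud: {gpu:.1f} s, Cerebras: {wafer:.2f} s")
```

The model gives about 20 s versus about 3.7 s, and 3 s of the faster run is fixed overhead. Once decode is this fast, cutting per-call overhead (or the number of sequential calls) matters as much as raw tokens per second.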

There’s also a cost dimension. Industry estimates commonly put inference at 80–90% of the total lifetime cost of a production AI system. Faster inference means more requests served per hardware-hour, which means better unit economics at every stage of growth. Speed isn’t vanity; it’s margin.
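The margin argument reduces to one division: hourly hardware cost over tokens served per hour. The prices and throughputs below are invented for illustration, not Cerebras or GPU list prices, and `cost_per_million_tokens` is a hypothetical helper.

```python
def cost_per_million_tokens(hourly_cost_usd: float,
                            aggregate_tokens_per_s: float) -> float:
    """Serving cost per 1M output tokens for hardware billed by the hour.

    `aggregate_tokens_per_s` is total throughput across all concurrent
    requests, not the speed a single user sees.
    """
    tokens_per_hour = aggregate_tokens_per_s * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical: the same $36/hour of hardware at two aggregate throughputs.
for tps in (5_000, 10_000):
    print(f"{tps:>6,} tok/s -> ${cost_per_million_tokens(36.0, tps):.2f} per 1M tokens")
```

Doubling aggregate throughput on the same hardware halves cost per token. Note the distinction in the docstring: the per-request speeds in the table below describe latency for one user, while unit economics depend on aggregate throughput, which batching can raise even when single-stream speed stays flat.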

| Provider | Output speed (tokens/s) | Notes |
|---|---|---|
| Cerebras WSE-3 | 2,100–2,700 | Model-dependent |
| Groq LPU | 300–500 | Next fastest |
| SambaNova | 200–400 | Good for batch |
| Standard GPU cloud | 40–150 | Most providers |

The Enterprise Path: AWS Bedrock (H2 2026)

In March 2026, AWS and Cerebras announced a multi-year partnership. The architecture pairs Cerebras CS-3 systems with AWS Trainium3 chips and Elastic Fabric Adapter networking. The key technique is inference disaggregation: Trainium handles the prefill stage (processing your prompt) while the WSE-3 handles decode (generating tokens). The partnership targets 5x faster inference than current Bedrock options, launching in the second half of 2026 through the standard Bedrock API — no hardware management required.
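A rough latency model shows why splitting the stages makes sense: prefill cost scales with prompt length and parallelizes well, while decode cost scales with output length and is where per-token speed matters. This sketches generic prefill/decode disaggregation, not AWS’s implementation; the stage rates, the KV-cache transfer time (presumably over the EFA link), and `request_latency_s` are all hypothetical.

```python
def request_latency_s(prompt_tokens: int, output_tokens: int,
                      prefill_tokens_per_s: float, decode_tokens_per_s: float,
                      kv_transfer_s: float) -> float:
    """End-to-end latency when prefill and decode run on different hardware.

    Prefill processes the whole prompt at once and produces a KV cache; the
    cache is shipped to the decode hardware, which generates tokens one by one.
    """
    prefill_s = prompt_tokens / prefill_tokens_per_s
    decode_s = output_tokens / decode_tokens_per_s
    return prefill_s + kv_transfer_s + decode_s


# Invented numbers: 8,000-token prompt, 500-token answer.
latency = request_latency_s(8_000, 500, prefill_tokens_per_s=20_000,
                            decode_tokens_per_s=2_500, kv_transfer_s=0.05)
print(f"{latency:.2f} s end to end")
```

With these numbers the long prompt costs 0.4 s of prefill and the answer costs 0.2 s of decode. Disaggregation lets each term be handled by the hardware best suited to it, as long as the handoff cost stays small.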

For developers already running production workloads on AWS, this is the path worth waiting for. You get Cerebras inference speeds without managing Cerebras directly.

The Risks Worth Knowing

The financial story has one detail developers should register: 86% of Cerebras’ 2025 revenue came from two UAE entities — Mohamed bin Zayed University of Artificial Intelligence (62%) and G42 (24%). This triggered a CFIUS national security review in 2024, which was resolved before the IPO, but the customer concentration remains. On the supply side, all WSE-3 wafers are manufactured by TSMC, with no long-term supply commitment. NVIDIA and Apple take priority on TSMC allocation. If that allocation tightens, so does Cerebras’ output.

The verdict: excellent for latency-sensitive workloads, agentic backends, and high-throughput batch inference. Use it for prototyping starting now. Don’t build sole-source production dependencies on it until the AWS Bedrock integration ships and the customer concentration improves.
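Because the API is OpenAI-compatible, avoiding a sole-source dependency can be as cheap as keeping a second OpenAI-compatible provider behind the same code path and failing over when Cerebras errors. This is a minimal sketch; `complete_with_fallback`, the provider list, and the backup endpoint are hypothetical, and production code would narrow the `except` clause and add retries with backoff.

```python
from typing import Sequence


def complete_with_fallback(prompt: str,
                           providers: Sequence[tuple[str, object, str]]) -> tuple[str, str]:
    """Try each (name, client, model) in order; return (name, reply) from the first success."""
    last_error = None
    for name, client, model in providers:
        try:
            response = client.chat.completions.create(
                model=model,
                messages=[{"role": "user", "content": prompt}],
            )
            return name, response.choices[0].message.content
        except Exception as exc:  # in real code, narrow to openai.APIError
            last_error = exc
    raise RuntimeError("all providers failed") from last_error


# Example provider order (clients built with OpenAI(base_url=..., api_key=...)):
# providers = [
#     ("cerebras", cerebras_client, "llama-4-scout-17b-16e-instruct"),
#     ("backup", backup_client, "<model id on the backup provider>"),
# ]
```

Returning the provider name alongside the reply makes it easy to log how often the fallback path actually fires.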

Where to Start

Get an API key at inference-docs.cerebras.ai and browse working code at the Cerebras inference examples repository. One million tokens per day is enough to run meaningful load tests and integration work. When the AWS Bedrock integration lands in H2 2026, the path to production becomes significantly cleaner. The wafer-scale bet is real — Cerebras built the fastest inference hardware available, and it’s now accessible to any developer with an email address.