On March 9, 2026, Anthropic sued the Pentagon after being blacklisted as a “supply-chain risk to national security”—a label historically reserved for foreign adversaries like Huawei and Kaspersky. The conflict started when Anthropic refused to accept the Pentagon’s demand for “all lawful use” of its Claude AI, drawing two “redlines”: no mass domestic surveillance of Americans and no fully autonomous weapons. Hours after negotiations collapsed, OpenAI signed a Pentagon deal accepting the same terms Anthropic rejected. When $200 million in contracts met principles, principles lost—twice.

Selective Morality Theater: The Palantir Paradox

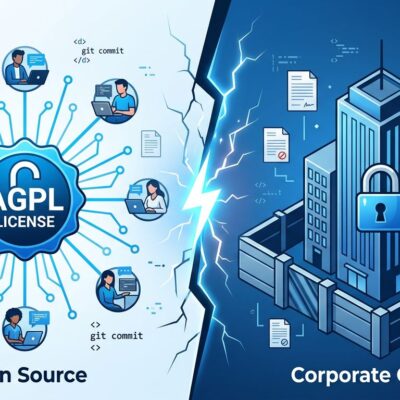

Anthropic positions itself as the “AI safety company” founded on Constitutional AI principles. Yet in November 2024, Anthropic partnered with Palantir, a defense and intelligence contractor with deep ICE contracts and surveillance work, to provide Claude to U.S. intelligence and defense agencies. The company has also accepted $2 billion from Google (with its Project Maven history) and $8 billion from Amazon (AWS GovCloud, CIA contracts). Even so, it draws the line at direct Pentagon contracts.

This isn’t principled ethics; it looks more like selective morality theater. If you’re comfortable with Palantir using Claude for intelligence agencies, why reject the Pentagon directly? Anthropic’s “redlines” appear less like ethical boundaries and more like arbitrary negotiating positions. The distinction between intelligence-connected investor money, Palantir partnerships, and Pentagon contracts is a technicality, not a principle.

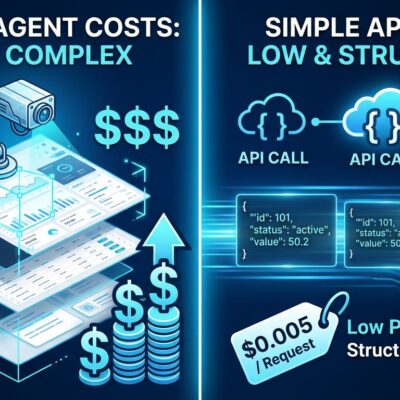

OpenAI Proved Ethics Are Negotiable

Hours after Anthropic’s negotiations collapsed on February 27, OpenAI swooped in. The company signed a Pentagon deal accepting the “all lawful use” terms Anthropic rejected. However, after employee and public backlash, OpenAI amended the contract on March 2 to add restrictions on surveillance and autonomous weapons. CEO Sam Altman admitted the rollout “looked opportunistic and sloppy.”

The amended terms exclude intelligence agencies like the NSA and prohibit mass domestic surveillance. Yet the full contract text remains unpublished. Privacy advocates at the Electronic Frontier Foundation titled their analysis “Weasel Words,” arguing that vague language creates surveillance loopholes. The pattern is clear: sign first, add restrictions after backlash, keep contract details private. When billions are at stake, ethics become negotiable, and they are negotiated in secret.

Financial Punishment as Compliance Tool

The Pentagon’s “supply-chain risk” designation carries severe financial consequences. Anthropic estimates losses of “hundreds of millions, or even multiple billions” in 2026 revenue. More than 100 enterprise customers contacted Anthropic with concerns after the March 4 designation. Defense tech companies immediately dropped Claude, and federal agencies have 180 days to remove Anthropic products.

The label itself sends a message. Historically, “supply-chain risk” has been reserved for foreign adversaries: Huawei from China, Kaspersky from Russia. Now the Pentagon applies it to a U.S. AI company that refused contract terms. The designation doesn’t just block government contracts; it stigmatizes the company across the enterprise market. The message is hard to miss: refuse Pentagon demands, get blacklisted, lose revenue, watch competitors take your contracts. It’s economic pressure designed to create compliance without legal compulsion.

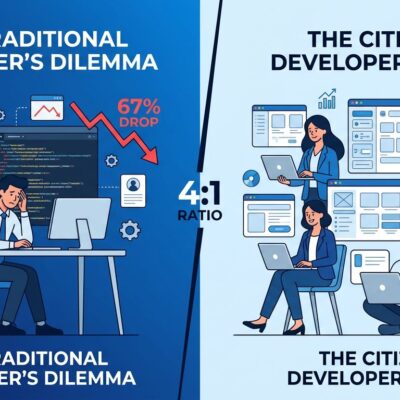

The Developer Rebellion Won’t Change Anything

More than 30 employees from Google DeepMind and OpenAI, Anthropic’s direct competitors, filed an amicus brief supporting Anthropic’s lawsuit. Google chief scientist Jeff Dean signed. The brief warns that the Pentagon blacklist “threatens to damage the entire American AI industry.” Microsoft filed a separate brief. Former military leaders and civil rights organizations joined.

The amicus brief demonstrates unusual solidarity: employees at competing companies are publicly opposing their own employers’ competitive positions and potential contract opportunities. But it follows a familiar pattern: internal dissent leads to a public brief, and the company proceeds anyway. Google and OpenAI employees supporting Anthropic while their own companies pursue Pentagon contracts shows the limits of developer agency. More than thirty employees signed a brief. Thousands remain employed building the same technology.

The Race-to-the-Bottom Logic

The Pentagon justifies the blacklist by arguing that Anthropic’s restrictions harm U.S. competitiveness against China. China’s “Military-Civil Fusion” doctrine mandates that all civilian AI development be applicable to military use, with no ethical restrictions on surveillance or autonomous weapons. The Pentagon’s position follows from that: if China doesn’t restrict AI, the U.S. can’t afford to either.

This is race-to-the-bottom logic. The United States doesn’t lower its standards to match China’s on human rights, privacy protections, or free speech. The argument that we must abandon AI ethics because an authoritarian adversary has none could justify abandoning any democratic principle. Sixty countries endorsed norms for ethical AI use, the U.S. among them; China opted out. The Pentagon now argues those norms are a competitive disadvantage.

Key Takeaways

- Anthropic’s “ethics” are selective: it partners with Palantir for intelligence agencies and accepts Google and Amazon money (both have defense contracts), but draws an arbitrary line at direct Pentagon contracts. This is negotiation, not principle.

- OpenAI demonstrated that economics win: it signed a Pentagon deal accepting the terms Anthropic rejected, amended it after backlash, and kept the full contract private. Ethics became negotiable when billions were at stake.

- The Pentagon’s “supply-chain risk” designation, historically reserved for foreign adversaries like Huawei and Kaspersky, is now weaponized against a U.S. company that refused contract terms, creating financial punishment worth hundreds of millions to billions in lost revenue.

- 30+ Google DeepMind and OpenAI employees filed an amicus brief supporting Anthropic while their own companies pursue Pentagon contracts. This shows internal conflict, but also how limited developer agency is once company direction is set.

- The Pentagon’s “China doesn’t restrict AI” justification is race-to-the-bottom logic: the U.S. doesn’t lower its standards to match authoritarian states on human rights or privacy, so why abandon AI ethics for competitive advantage?