SDL (Simple DirectMedia Layer), the foundational game development library used by thousands of cross-platform games and shipped as part of the Steam Runtime, banned all AI-generated code contributions on April 10-11, 2026. Maintainer Ryan C. Gordon created an AGENTS.md policy file explicitly rejecting code from ChatGPT, Claude, Copilot, Grok, or any other LLM. The policy states bluntly: “The SDL project does not accept contributions that are in any part created by AI agents.”

This isn’t a soft guideline. SDL modified its pull request template to warn every contributor upfront, and the reasoning is blunt: unknown AI training sources threaten SDL’s Zlib license compatibility, LLMs hallucinate non-existent bugs at alarming rates, and open source requires clear human authorship. For a library embedded in commercial games through the Steam Runtime, licensing contamination isn’t a theoretical risk. It’s potential legal exposure for thousands of studios.

The Ban: No AI-Generated Code, No Exceptions

SDL’s policy arrived after a GitHub issue the previous week asked whether the project had guidelines for AI contributions. Gordon’s response was decisive: create AGENTS.md and update the PR template with 20 lines of policy text. Contributors must now attest that their code is entirely human-authored.

The policy draws a clear line. AI can identify bugs—that’s fine. Humans must write the fixes. Copilot autocomplete sneaking into a PR? Rejected. ChatGPT-generated feature implementation? Rejected. Any amount of AI-generated code disqualifies the contribution.

SDL refined the policy language on April 11 based on early feedback, but the core message didn’t budge. This is an absolute ban, not a disclosure requirement. The enforcement mechanism? Contributor attestation in the PR template. It’s an honor system with teeth—violate it and your PR gets closed.

Why SDL Banned AI Code: Licensing, Hallucinations, Authorship

The licensing minefield is SDL’s primary concern. AI training data sources are opaque: an LLM could have ingested GPL code, MIT code (whose license still requires its copyright notice to travel with the code), or proprietary codebases during training. If a model reproduces code close to an incompatibly licensed source, SDL could accept licensing contamination without knowing it. For a Zlib-licensed library that closed-source commercial games can link statically, that’s an unacceptable risk.

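Anthropic’s $1.5 billion settlement with book authors, announced in August 2025 and billed as the largest copyright recovery in U.S. history, showed that building training sets from pirated content has real legal consequences. The EU AI Act’s obligations for general-purpose AI models, including a public summary of training content, began applying on August 2, 2025, and the Commission’s power to enforce them starts August 2, 2026. Those summaries are high-level, though: in April 2026, a project like SDL still can’t trace what a given model was trained on. SDL can’t risk it.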

The quality problem compounds the licensing risk. One widely cited study of code-generating LLMs found that 19.7% of the packages they recommended don’t exist, and studies of AI-generated code have reported security vulnerabilities in roughly 29% to 45% of samples. GitHub Copilot users have reported a “measurable quality decline” since late 2025, with community discussions describing suggestions as “hallucinatory, complicating, wrong.” Gordon specifically cited earlier SDL reviews involving Copilot and suspected LLM-written contributions as motivation for the ban.

For a small maintainer team, reviewing high volumes of AI-generated “slop” is exhausting. The ban shifts the burden from maintainers (who must verify every line) to contributors (who must write human code). That’s a defensible trade-off for critical infrastructure.

SDL Joins Tiny Minority: Only 5 of 112 Projects Ban AI

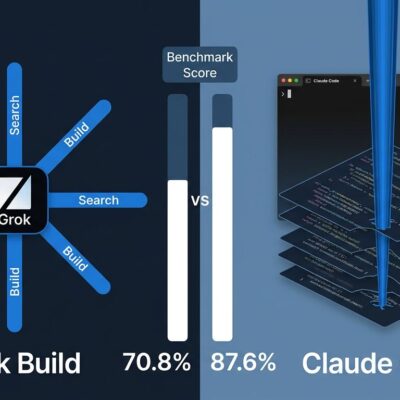

SDL picked the nuclear option. Of 112 open-source projects surveyed in 2026, only four had outright bans on AI contributions before SDL: Zig, NetBSD, GIMP, and QEMU. SDL is the fifth, and arguably the most prominent, given its reach through the Steam Runtime.

Most of the surveyed projects (77 to 86 of them, by RedMonk’s count) have adopted disclosure-based policies instead. The Electronic Frontier Foundation allows AI-assisted contributions if contributors mark them clearly. The Linux kernel restricts AI only indirectly, through its requirement that contributors understand the code they submit. The dominant approach is transparency, not prohibition.

The timing matters. SDL’s ban arrived the same week in April 2026 that r/programming banned AI-generated content, citing the need to protect its community from a flood of AI-generated articles and tutorials. Both fit a broader 2026 trend: “AI fatigue” is real, and maintainers are pushing back.


SDL’s ban makes sense given its constraints: licensing sensitivity, small maintainer team, critical infrastructure status. It’s a legitimate choice for projects that can’t handle the AI review burden. But it’s the exception, not the emerging standard.

The Practical Impact: Disable Copilot for SDL Contributions

Developers contributing to SDL must disable GitHub Copilot, Amazon Q Developer (formerly CodeWhisperer), Claude Code, and any other AI coding assistant while working on their changes. The workflow adjustment is straightforward: turn off AI tools, write the code manually, submit the PR.
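If you work in VS Code, one way to handle the “turn off AI tools” step is a workspace-level setting in your SDL checkout, so Copilot stays available for other projects. The sketch below is a minimal, hypothetical helper, assuming the GitHub Copilot extension and its `github.copilot.enable` setting (a map from language ID to on/off, with `"*"` covering every language); the function name `disable_copilot_for_workspace` is illustrative, and other editors and assistants need their own switches. Turning off suggestions is a convenience, not a guarantee: the attestation still covers anything pasted in from a chat window.

```python
"""Hypothetical helper: turn off GitHub Copilot suggestions for one workspace.

Assumes VS Code with the GitHub Copilot extension, which reads the
"github.copilot.enable" setting: a map from language ID to bool, where
"*" covers every language. Check the extension's docs for your version.
"""
import json
import tempfile
from pathlib import Path


def disable_copilot_for_workspace(repo_root: Path) -> Path:
    """Set Copilot to off in repo_root/.vscode/settings.json, keeping other keys."""
    settings_path = repo_root / ".vscode" / "settings.json"
    settings_path.parent.mkdir(parents=True, exist_ok=True)

    settings: dict = {}
    if settings_path.exists():
        text = settings_path.read_text(encoding="utf-8")
        if text.strip():
            try:
                settings = json.loads(text)
            except json.JSONDecodeError as err:
                # VS Code tolerates comments in settings.json; plain json does not,
                # so refuse to overwrite a file we cannot parse safely.
                raise RuntimeError(f"{settings_path} is not plain JSON; edit it by hand") from err
            if not isinstance(settings, dict):
                raise RuntimeError(f"{settings_path} does not contain a JSON object")

    # Disable inline suggestions for all languages in this workspace only.
    settings["github.copilot.enable"] = {"*": False}
    settings_path.write_text(json.dumps(settings, indent=2) + "\n", encoding="utf-8")
    return settings_path


if __name__ == "__main__":
    # Demo on a throwaway directory standing in for an SDL checkout.
    with tempfile.TemporaryDirectory() as tmp:
        print(disable_copilot_for_workspace(Path(tmp)).read_text(encoding="utf-8"))
```

Run it once against your own clone, for example `disable_copilot_for_workspace(Path("~/src/SDL").expanduser())` (the path is illustrative). Scoping the change to one workspace matches the shape of SDL’s policy, which applies per project rather than to your whole setup.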

Some cases look like gray areas but resolve cleanly. Can you use ChatGPT to understand SDL’s architecture and then write the code yourself? Yes; using AI for research is allowed. Can you let Copilot point to a likely bug location, then write the fix yourself? Yes; AI can identify issues as long as humans write the solutions. The rule is simple: no AI-generated code in the submission, period.

Enforcement relies on contributor honesty. There’s no reliable technical way to detect AI-generated code, and any detector would misfire on human-written code that happens to resemble AI output. SDL is betting on attestation plus maintainer judgment. Break that trust and your contributions get rejected.

For developers who’ve integrated AI tools into daily workflows, this creates friction. Some will adapt by disabling AI for SDL work. Others may skip contributing to SDL entirely. The policy accepts that trade-off. Code quality and licensing clarity trump contributor convenience for a library embedded in thousands of commercial games.

Key Takeaways

- SDL banned all AI-generated code contributions on April 10-11, 2026, citing licensing risks from unknown training data, AI hallucination rates (19.7% fabricated packages), and the need for clear human authorship under open-source licensing terms.

- The policy is absolute: AGENTS.md and PR template changes reject any code created by LLMs like ChatGPT, Claude, or Copilot. AI can identify bugs, but humans must write the fixes. Enforcement relies on contributor attestation.

- SDL joins only four other projects (Zig, NetBSD, GIMP, QEMU) with outright AI bans; those four are 3.6% of the 112 surveyed projects. Most open-source projects allow AI-assisted code with disclosure requirements, making SDL’s ban the exception, not the rule.

- The ban makes sense for SDL’s specific constraints: Zlib license sensitivity (commercial games depend on it), small maintainer team (can’t handle AI “slop” review burden), and critical infrastructure status (licensing contamination could affect thousands of downstream projects).

- Developers must disable AI coding tools when contributing to SDL. The practical workflow: use AI for research if needed, write code manually, submit only human-authored code. Violations result in PR rejection.