Google is planning to put AI data centers in orbit. Not metaphorically: actual satellites at 650 kilometers altitude, carrying custom TPUs in a sun-synchronous orbit and linked to one another by lasers. The project is called Suncatcher, the prototype partner is Planet Labs, the first two prototype satellites go up in 2027, and SpaceX is now reportedly in talks to handle the rockets for a larger rollout. This is either the most rational response to a terrestrial data center crisis that has run out of good options, or the most expensive infrastructure moonshot since, well, the actual moonshot. Probably some of both.

The Crisis That Makes Orbital Sound Sane

The context matters here. Nearly half of the 12 gigawatts of US data center capacity planned for 2026 has been cancelled or delayed, and only 5 GW is actually under construction. The bottleneck is not chips or funding; it is the mundane reality of electrical infrastructure. Transformer lead times that used to run 12 to 18 months have stretched to 36 to 48 months. The US faces a projected 45 GW data center power shortfall by 2028, with 72 GW of new capacity needed through 2030, roughly the output of 72 large (about 1 GW) nuclear power plants built in less than five years.
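To make that scale concrete, here is a back-of-envelope sketch of the build rate those figures imply. It assumes, as the nuclear comparison does implicitly, that one large reactor is about 1 GW, and it treats “less than five years” as a five-year window; both are simplifying assumptions, not figures from a specific forecast.

```python
# Back-of-envelope build-rate check for the figures above.
# Assumptions (not from a specific forecast): ~1 GW per large nuclear
# plant, and a five-year window for the 72 GW build-out.

NEEDED_GW = 72.0     # new data center capacity needed through 2030
PLANT_GW = 1.0       # assumed net output of one large reactor
WINDOW_YEARS = 5.0   # assumed build window


def plants_equivalent(needed_gw: float, plant_gw: float) -> float:
    """How many plant_gw-sized reactors match needed_gw of capacity."""
    return needed_gw / plant_gw


def build_rate(needed_gw: float, years: float) -> tuple[float, float]:
    """Capacity that must come online per year and per month."""
    per_year = needed_gw / years
    return per_year, per_year / 12.0


plants = plants_equivalent(NEEDED_GW, PLANT_GW)
per_year, per_month = build_rate(NEEDED_GW, WINDOW_YEARS)
print(f"{plants:.0f} plant-equivalents, {per_year:.1f} GW/year, "
      f"{per_month:.1f} GW/month")
```

Under these assumptions that is about 14.4 GW per year, or a little more than one large power plant's worth of capacity coming online every month.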

Power, land, and cooling are all constrained simultaneously. When your transformer has a four-year lead time and you cannot get permitted land near reliable grid capacity, “put it in space” stops sounding like a punchline and starts sounding like an engineering option worth exploring seriously.

What Project Suncatcher Actually Is

Google announced Project Suncatcher in November 2025 through its research blog. The vision: compact constellations of solar-powered satellites carrying Google’s Trillium-generation TPUs (the same V6e chips used in Google Cloud), connected to each other via free-space optical links — lasers, essentially — and communicating with ground stations when needed.

The target is an 81-satellite constellation operating at 650 km altitude in a dawn-dusk sun-synchronous orbit, with neighboring satellites flying just 100 to 200 meters apart. That formation is tighter than any existing constellation. The orbital choice is deliberate: a dawn-dusk sun-synchronous orbit follows the boundary between day and night, so the satellites see near-continuous sunlight. Solar panels there are potentially up to 8x more productive than on Earth, with minimal reliance on batteries to bridge overnight gaps.
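“Sun-synchronous” has a precise meaning that the altitude pins down. Earth’s equatorial bulge (the J2 term) makes an orbit’s plane slowly rotate, and a sun-synchronous orbit uses the inclination at which that rotation is exactly one turn per year, so the plane keeps a fixed angle to the Sun. The sketch below computes that inclination and the orbital period at 650 km using the standard first-order J2 formula for a circular orbit and textbook Earth constants; it illustrates the orbit type and is not a reproduction of Google’s mission design.

```python
import math

# First-order J2 sketch of a circular sun-synchronous orbit.
# Constants are textbook Earth values, not figures from Google's paper.

MU_EARTH = 398_600.4418   # Earth's gravitational parameter, km^3/s^2
R_EARTH = 6_378.137       # equatorial radius, km
J2 = 1.08263e-3           # oblateness coefficient (dimensionless)
# The orbit plane must turn 360 degrees per year to track the Sun.
SSO_NODE_RATE = 2 * math.pi / (365.2422 * 86_400)  # rad/s


def orbital_period_min(altitude_km: float) -> float:
    """Period of a circular orbit at the given altitude, in minutes."""
    a = R_EARTH + altitude_km
    return 2 * math.pi * math.sqrt(a**3 / MU_EARTH) / 60


def sso_inclination_deg(altitude_km: float) -> float:
    """Inclination whose J2 nodal drift equals one revolution per year."""
    a = R_EARTH + altitude_km
    n = math.sqrt(MU_EARTH / a**3)  # mean motion, rad/s
    # Nodal drift: dOmega/dt = -1.5 * n * J2 * (R/a)^2 * cos(i)
    cos_i = -SSO_NODE_RATE / (1.5 * n * J2 * (R_EARTH / a) ** 2)
    return math.degrees(math.acos(cos_i))


alt = 650.0
print(f"period ~ {orbital_period_min(alt):.1f} min, "
      f"SSO inclination ~ {sso_inclination_deg(alt):.1f} deg")
```

At 650 km this gives a period of roughly 98 minutes and an inclination of about 98 degrees: a near-polar, slightly retrograde orbit, which is what lets the plane precess in step with the Sun.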

The hardware has already had initial radiation testing. Google exposed its V6e TPU and AMD host server to a 67 MeV proton beam to simulate low Earth orbit (LEO) radiation. The High Bandwidth Memory subsystems, the most sensitive components, held up well past the expected five-year mission dose before showing irregularities, and there were no hard failures up to 15 krad(Si). That is a meaningful result: it suggests the chips can tolerate that radiation environment. Still, the 2027 milestone is two satellites, not 81. This is a proof-of-concept phase, nothing more.

The SpaceX Angle Is About More Than Rockets

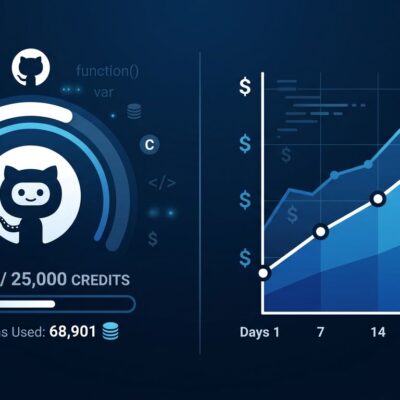

SpaceX’s involvement is worth unpacking. In February 2026, SpaceX acquired xAI in an all-stock deal valued at a combined $1.25 trillion — the largest merger in history. The combined entity now controls Colossus 2, the world’s first gigawatt-scale AI training cluster (live as of January 2026 at 1 GW, targeting 1.5 GW by April), alongside Starlink’s satellite infrastructure, SpaceX’s launch capability, and xAI’s Grok models.

Last week, Anthropic signed a deal to lease all of Colossus 1’s capacity: 300 megawatts across 220,000 Nvidia GPUs. The same deal included exploratory discussions about orbital compute. Now SpaceX is in talks with Google about launching orbital data center satellites. The pattern is SpaceX positioning its combined launch-and-compute capability as a unique moat ahead of its planned $1.75 trillion IPO later this year. When SpaceX tells investors that “space is the cheapest compute in a few years,” keep that incentive structure in mind.

The Reality Check the Headlines Are Skipping

The economics of orbital compute are, right now, not competitive. A 1 GW orbital data center constellation is estimated at $42 to $51 billion, compared with $16 to $20 billion for a terrestrial hyperscale facility of the same capacity. Energy delivery in orbit costs roughly $14,700 per kilowatt per year via Starlink, versus $570 to $3,000 per kilowatt per year on the ground. Reaching cost parity would require launch prices of around $500 per kilogram, and orbital compute only clearly wins at around $200 per kilogram. Analysts put that in the 2030s, not 2027.
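The quoted ranges are easier to compare as multiples. This sketch uses only the article’s own estimates and reports the best-case and worst-case ratio of orbital to terrestrial cost, plus the annual energy bill those per-kilowatt prices imply for a 1 GW deployment. The helper name `ratio_range` is just for illustration.

```python
# Orbital vs terrestrial cost multiples, using the ranges quoted above.
# Each range is (low, high); no other data is assumed.

KW_PER_GW = 1_000_000


def ratio_range(orbital: tuple[float, float],
                ground: tuple[float, float]) -> tuple[float, float]:
    """Best-case and worst-case orbital/ground cost multiples."""
    best = orbital[0] / ground[1]   # cheapest orbit vs priciest ground
    worst = orbital[1] / ground[0]  # priciest orbit vs cheapest ground
    return best, worst


capex = ratio_range((42e9, 51e9), (16e9, 20e9))       # $ per 1 GW build
energy = ratio_range((14_700, 14_700), (570, 3_000))  # $ per kW per year

# Annual energy cost of running 1 GW, in billions of dollars.
orbit_energy_bn = 14_700 * KW_PER_GW / 1e9
ground_energy_bn = (570 * KW_PER_GW / 1e9, 3_000 * KW_PER_GW / 1e9)

print(f"build cost: {capex[0]:.1f}x to {capex[1]:.1f}x")
print(f"energy cost: {energy[0]:.1f}x to {energy[1]:.1f}x")
print(f"1 GW energy bill: ${orbit_energy_bn:.1f}B/yr in orbit vs "
      f"${ground_energy_bn[0]:.2f}B to ${ground_energy_bn[1]:.1f}B/yr on the ground")
```

On those numbers the build cost is roughly 2 to 3 times higher in orbit, but the energy line is where the gap is widest: about 5 to 26 times, or roughly $14.7 billion a year to power 1 GW in orbit versus $0.57 to $3 billion on the ground.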

There is also a thermal problem that deserves more attention. In a vacuum there is no convection, so every watt of waste heat has to leave the satellite as thermal radiation, governed by the Stefan-Boltzmann law. Radiated power scales with the fourth power of the radiator’s absolute temperature, so the required area depends heavily on how hot the panels can run; at plausible radiator temperatures, rejecting 1 megawatt of waste heat takes roughly 1,200 square meters of radiator, about a 35-by-35 meter panel. Scaled to a 1 GW constellation, that is on the order of 1.2 square kilometers of radiators, a significant engineering and mass constraint.
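The radiator figure follows from the Stefan-Boltzmann law, P = εσA(T⁴ − T_sink⁴), solved for the area A. The inputs in this sketch are assumptions, not figures from the article or from Google: emissivity 0.9, a radiator running at about 358 K (roughly 85 °C), one radiating face, a 3 K deep-space sink, and no absorbed sunlight or Earth infrared. Under those assumptions it lands close to the 1,200 m² per megawatt quoted above, and the loop shows how strongly the answer moves with temperature.

```python
import math

# Stefan-Boltzmann radiator sizing sketch. Assumed inputs (illustrative,
# not from the article): emissivity 0.9, one radiating face, a 3 K
# deep-space sink, and no absorbed sunlight or Earth infrared.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9,
                     sink_k: float = 3.0, faces: int = 1) -> float:
    """Panel area needed to reject heat_w watts by thermal radiation alone."""
    # Net radiated flux per square meter of panel.
    flux = faces * emissivity * SIGMA * (temp_k**4 - sink_k**4)
    return heat_w / flux


area = radiator_area_m2(1e6, temp_k=358.0)   # 1 MW at ~85 C
print(f"1 MW: {area:,.0f} m^2, about {math.sqrt(area):.0f} m on a side")
print(f"1 GW: {area * 1_000 / 1e6:.2f} km^2")

# Radiated flux goes as T^4, so modest temperature changes matter a lot.
for t in (300.0, 358.0, 400.0):
    print(f"T = {t:.0f} K -> {radiator_area_m2(1e6, t):,.0f} m^2 per MW")
```

Running the panels hotter shrinks them quickly, but the radiator has to stay cooler than the chips it is cooling, which caps that lever; a two-sided panel (`faces=2`) halves the area. The T⁴ term is why a figure like 1,200 m² per megawatt is best read as an order of magnitude rather than a fixed constant.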

The hardware refresh cycle is a separate issue. TPUs and GPUs iterate every 18 to 24 months. Satellites last five to ten years. Whatever hardware launches in 2027 is whatever hardware runs until that satellite deorbits. There is no rack swap in orbit.
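The mismatch is easy to quantify with the article’s own ranges. This small sketch counts how many accelerator generations would ship on the ground while one satellite’s hardware stays fixed; the function name is hypothetical, and the calculation assumes a steady release cadence.

```python
# How many accelerator generations ship during one satellite's lifetime.
# Inputs are the ranges quoted above; a steady release cadence is assumed.


def generations_missed(lifetime_years: float, cadence_months: float) -> float:
    """Hardware generations released while the satellite's chips stay fixed."""
    return lifetime_years * 12 / cadence_months


fewest = generations_missed(5, 24)   # short mission, slow release cadence
most = generations_missed(10, 18)    # long mission, fast release cadence
print(f"{fewest:.1f} to {most:.1f} generations behind by end of life")
```

That works out to roughly 2.5 to 6.7 generations, so late in a long mission the orbital TPUs would be several chip generations older than what is racked on the ground.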

What to Actually Watch For

The 2027 prototype launch is the signal that matters. If Google’s two Planet Labs satellites demonstrate stable thermal management, reliable laser inter-satellite links, and acceptable TPU performance in actual radiation, that meaningfully de-risks the full constellation path. If they encounter problems — and thermal management is the engineering concern most frequently cited — the timeline slides further right.

In the near term, the real story remains terrestrial: Anthropic locking up Colossus 1, GPU supply pressure, cloud pricing, and the transformer lead-time crunch. The orbital data center is serious engineering, motivated by real infrastructure constraints, and likely to matter eventually. That “eventually” is probably the 2030s. That is a reasonable outcome; it should just be stated clearly rather than buried under launch-day excitement.