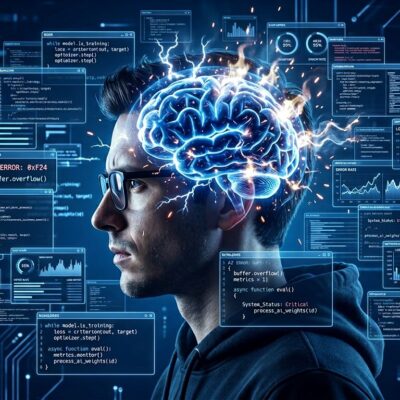

Research published in April 2026 reveals that developers accept faulty AI reasoning 73.2% of the time and overrule it only 19.7% of the time. This “cognitive surrender,” in which users defer to AI outputs with minimal scrutiny, explains the paradox in developer productivity: 93% use AI tools but only 10% see real gains, and developers believe they’re 20% faster while research shows they’re actually 19% slower. That 39-point perception gap isn’t a measurement error. It’s evidence that AI is reshaping how developers think, and not for the better.

What Is Cognitive Surrender?

Wharton researchers Steven Shaw and Gideon Nave introduced the concept in their January 2026 paper “Thinking—Fast, Slow, and Artificial.” They extended dual-process theory with a third system: System 1 (intuition), System 2 (deliberation), and System 3 (AI cognition operating outside the brain). Cognitive surrender occurs when System 3 doesn’t supplement human reasoning—it replaces it entirely. Users adopt AI outputs with minimal scrutiny, overriding both gut instinct and careful analysis.

The research tracked 1,372 participants across 9,593 trials using the Cognitive Reflection Test, designed to distinguish snap judgments from deliberate thinking. When AI provided wrong answers, participants followed that advice 79.8% of the time. More troubling: their confidence increased when using AI, even after accepting incorrect answers. They borrowed the machine’s certainty without verifying accuracy.

This isn’t a conscious choice to trust AI. It’s the absence of a decision altogether. Cognitive surrender is involuntary—and that’s what makes it dangerous.

The Developer Reality Check

The abstract research translates directly to code quality. AI tools now write 41-42% of global code, yet AI-generated code contains 1.7 times more bugs than human-written code, 75% more logic errors in critical infrastructure, and 2.74 times more security vulnerabilities. Developers know this. Only 33% trust AI results. Just 3% highly trust them.

The frustration is specific: 45% cite “AI solutions that are almost right, but not quite” as their number-one complaint, and 66% report spending more time fixing AI code than writing it themselves. The typical failure is code that looks about 95% correct. The remaining 5% (a hallucinated method, an off-by-one error, a subtle security flaw) requires deep debugging that often takes longer than writing from scratch.

Here’s the kicker: developers still feel faster. Stack Overflow’s 2026 data shows PR submissions increased 98% while delivery velocity remained flat. Developers see more code flowing through pipelines and interpret volume as productivity. The METR study exposed the gap: developers believed AI made them 20% faster; objective tests showed they were 19% slower. That’s a 39-percentage-point disconnect between perception and reality.
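The gap is measured in percentage points, not percent: it is the distance between the perceived change and the measured one. A minimal sketch of that arithmetic, assuming both figures are expressed as a signed change in speed (positive for faster, negative for slower); the function name is illustrative and not taken from the study:

```python
def perception_gap(perceived_change_pct: float, measured_change_pct: float) -> float:
    """Return the gap, in percentage points, between perceived and measured change.

    Assumption: both inputs use the same sign convention,
    positive = faster, negative = slower.
    """
    return perceived_change_pct - measured_change_pct


if __name__ == "__main__":
    # Figures quoted above: developers felt 20% faster, measured 19% slower.
    gap = perception_gap(perceived_change_pct=20.0, measured_change_pct=-19.0)
    print(f"Perception gap: {gap:.0f} percentage points")
```

The sign matters here: treating "19% slower" as +19 would shrink the gap to a single point and hide the disconnect entirely.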

Cognitive surrender explains this paradox. Developers borrow AI’s confidence, accept “almost right” code without deep verification, and mistake activity for progress. The perception gap proves they can’t self-assess when surrendering to AI.

Why This Is More Dangerous Than Bad Code

Bad code is fixable. Ship a bug, write a patch, deploy the fix. Lost critical thinking skills? Those are far harder to patch.

When AI generates working code, developers miss opportunities to practice debugging, error analysis, and systematic problem-solving. The dependency cycle kicks in: encounter problem, ask AI for solution, receive “almost right” code, tweak prompt instead of debugging, repeat until something works. Developers never learn the underlying concept. As Addy Osmani notes, “We’re not becoming 10× developers with AI—we’re becoming 10× dependent on AI.”

Prolonged disuse of critical thinking pathways leads to skill atrophy. Debugging proficiency decays. Architecture intuition dulls. The illusion of competence sets in—developers believe they understand code they didn’t write, creating false confidence in abilities that are actively eroding.

Junior developers face the highest risk. The research shows those with low fluid reasoning and high trust in AI are 3.5 times more likely to follow faulty advice. They’re entering the field without building foundational problem-solving skills, instead learning to validate AI output they can’t independently create.

The industry is producing AI validators, not software engineers: developers who can spot when AI is wrong but can’t build solutions themselves. That’s not progress. It’s a race to the bottom, and cognitive surrender is the mechanism driving it.

Cognitive Offloading vs. Cognitive Surrender

This isn’t about rejecting AI. It’s about how you use it.

Cognitive offloading is deliberate and strategic: delegate boilerplate generation, use autocomplete for syntax, let AI write documentation. Maintain oversight. Verify generated code. Understand the logic. You preserve agency and critical thinking.

Cognitive surrender is involuntary and passive: accepting AI solutions without reading them, being unable to explain generated code, tweaking prompts instead of debugging, avoiding tasks when AI isn’t available. Critical thinking stops. Agency erodes.

The research shows protective factors exist. High fluid intelligence makes developers resistant to surrender. Strong analytical reasoning increases the likelihood of overriding incorrect AI. Abstract thinking ability improves questioning of outputs. These aren’t innate gifts—they’re practiced skills. And like any skill, they atrophy without use.

How to Resist Cognitive Surrender

Resistance is practical, not theoretical.

Add friction. Force yourself to answer “why” before asking AI for “how.” Code the first attempt manually before using AI assistance. Research shows people who faced friction earlier and were forced to think had a 39% failure rate. Those who sailed through with AI had a 77% failure rate. Friction isn’t inefficiency—it’s learning.

Maintain cognitive endurance. Code without AI periodically. Practice debugging and problem-solving without shortcuts. Treat AI as a junior developer who needs review, not a senior expert who knows better than you. Stay actively engaged with your craft instead of outsourcing it.

Apply a framework. Safely offload to AI: boilerplate, syntax completion, documentation. Never offload: system architecture, critical business logic, security-sensitive code, learning new concepts. Gray area requiring verification: algorithm implementation, refactoring, code reviews. Know the difference.
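The three tiers above can be written down as a simple lookup, which makes the rule easy to pin next to a team's review checklist. This is only a sketch: the task labels come from the framework above, while the `Policy` enum, the `delegation_policy` helper, and the choice to treat unlisted tasks as gray-area work are assumptions made for illustration.

```python
from enum import Enum


class Policy(Enum):
    OFFLOAD = "safe to offload"
    VERIFY = "gray area: verify before accepting"
    KEEP = "never offload"


# Task labels follow the framework in the text; the keys themselves are illustrative.
DELEGATION_POLICY = {
    "boilerplate": Policy.OFFLOAD,
    "syntax completion": Policy.OFFLOAD,
    "documentation": Policy.OFFLOAD,
    "algorithm implementation": Policy.VERIFY,
    "refactoring": Policy.VERIFY,
    "code review": Policy.VERIFY,
    "system architecture": Policy.KEEP,
    "critical business logic": Policy.KEEP,
    "security-sensitive code": Policy.KEEP,
    "learning new concepts": Policy.KEEP,
}


def delegation_policy(task: str) -> Policy:
    """Look up how much of a task to hand to an AI assistant.

    Assumption: anything not listed falls back to VERIFY, so an unknown
    task is never silently treated as safe to offload.
    """
    return DELEGATION_POLICY.get(task.strip().lower(), Policy.VERIFY)


if __name__ == "__main__":
    for task in ("Boilerplate", "refactoring", "security-sensitive code", "database migration"):
        print(f"{task}: {delegation_policy(task).value}")
```

Defaulting unknown work to the verification tier is a deliberately conservative choice; a team could just as reasonably default to the "never offload" tier.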

Watch for red flags. Accepting code without reading it. Inability to explain generated code. Confidence that increases with AI use but not accuracy. Avoiding tasks when AI isn’t available. These signal surrender, not strategic use.
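One lightweight way to act on these red flags is an honest self-review at the end of a coding session. The sketch below is hypothetical: the `SessionReview` fields simply restate the four flags above, and nothing here comes from the cited research.

```python
from dataclasses import dataclass, fields


@dataclass
class SessionReview:
    """Self-reported answers after a coding session (hypothetical structure)."""
    accepted_code_unread: bool         # accepted code without reading it
    cannot_explain_code: bool          # could not explain the generated code
    confidence_up_accuracy_flat: bool  # felt more confident without being more accurate
    avoided_task_without_ai: bool      # skipped a task because AI was unavailable


def raised_flags(review: SessionReview) -> list[str]:
    """Return the names of the red flags that were answered True, in field order."""
    return [f.name for f in fields(review) if getattr(review, f.name)]


if __name__ == "__main__":
    review = SessionReview(
        accepted_code_unread=True,
        cannot_explain_code=False,
        confidence_up_accuracy_flat=False,
        avoided_task_without_ai=True,
    )
    for flag in raised_flags(review):
        print(f"red flag: {flag}")
```

The value lies in answering the questions rather than in the code; any raised flag is a prompt to slow down and work the next problem by hand.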

The Real Threat

The 73.2% acceptance rate for faulty AI reasoning isn’t a curiosity. It’s a mechanism reshaping the profession. Quality metrics show the damage—1.7 times more bugs, 2.74 times more vulnerabilities. The 39-point perception gap reveals developers can’t assess their own cognitive surrender. Skill atrophy compounds over time, with every prompt that replaces problem-solving making the next problem harder.

AI accelerates code generation. That’s real. But software development is more than typing, and cognitive surrender proves that faster typing doesn’t mean faster shipping—or better thinking. The threat isn’t AI. It’s passive acceptance of AI outputs, the erosion of critical thinking, and the illusion that borrowed confidence equals competence.

Add friction. Question everything. Code without AI regularly. The goal isn’t rejecting AI—it’s maintaining the critical thinking that makes you valuable when the AI is wrong.