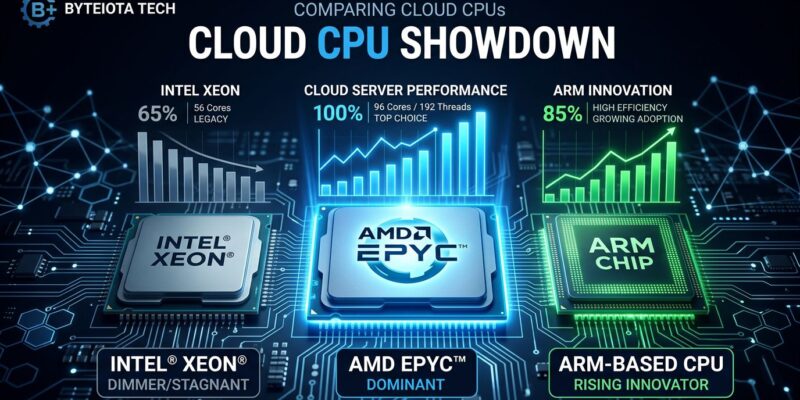

New cloud VM benchmarks published in February 2026 tested 44 instances across seven major providers and revealed a seismic shift in cloud compute. AMD EPYC Turin demolishes Intel Xeon 6 Granite Rapids by 24% while running cheaper. More surprising: Google’s ARM-based Axion processor beat x86 in multiple categories for the first time in cloud history. With cloud costs rising 5-10% in 2026, choosing the right VM just became mission-critical.

AMD Dominates: 24% Faster, and Cheaper

Phoronix ran nearly 500 benchmarks comparing AMD’s EPYC 9755 (128-core Turin) against Intel’s Xeon 6980P (128-core Granite Rapids). The results weren’t close. AMD delivered a 1.243x geometric mean performance advantage, ran up to 1.93x faster in CPU cache tests, and peaked at 500 watts of power draw versus 547 watts for Intel.
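Phoronix condenses hundreds of individual results into a single geometric mean. The short Python sketch below shows how that kind of summary works. The per-test ratios are hypothetical placeholders, not Phoronix's data. Each ratio is assumed to be normalized so that higher means AMD did better.

```python
import math


def geometric_mean(ratios):
    """Geometric mean of per-test speedup ratios (AMD result / Intel result).

    Ratios are assumed to be "higher is better"; for lower-is-better metrics
    such as run time, invert them (Intel time / AMD time) first.
    Averaging logs avoids overflow when many ratios are multiplied together.
    """
    if not ratios:
        raise ValueError("need at least one ratio")
    if any(r <= 0 for r in ratios):
        raise ValueError("ratios must be positive")
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical per-test ratios for illustration only, NOT Phoronix's numbers.
sample_ratios = [1.93, 1.10, 0.95, 1.30, 1.05]
print(f"geometric mean speedup: {geometric_mean(sample_ratios):.3f}x")
```

A geometric mean keeps wins and losses symmetric. A 2x win and a 2x loss (ratio 0.5) combine to exactly 1.0. An arithmetic mean of the same pair would report 1.25 and overstate the lead.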

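Here’s the kicker: AMD’s 192-core EPYC 9965, with 50% more cores than the chip Phoronix tested, lists at $14,813. Intel’s 128-core Xeon 6980P? $17,800, about a 20% premium. And because a 1.243x AMD lead means Intel delivers only about 80% of the EPYC 9755’s throughput, you’re paying more for roughly a fifth less work. That’s not a competitive gap – it’s a rout.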
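Percentages get slippery here, because "24% faster" and "24% slower" are not the same thing. Below is a minimal Python sketch of the arithmetic using the article's figures. Note that the price comparison pits the 192-core EPYC 9965 against Intel's 128-core chip, while the 1.243x result comes from the 128-core EPYC 9755.

```python
def percent_more(a, b):
    """How much larger a is than b, as a percentage of b."""
    return (a / b - 1) * 100


def percent_less(a, b):
    """How much smaller a is than b, as a percentage of b."""
    return (1 - a / b) * 100


amd_speedup = 1.243      # EPYC 9755 vs Xeon 6980P, geometric mean (from the article)
intel_price = 17_800     # Xeon 6980P list price, USD (from the article)
amd_9965_price = 14_813  # 192-core EPYC 9965 list price, USD (a different chip than the one benchmarked)

# If AMD is 1.243x faster, Intel delivers 1/1.243 of AMD's throughput.
intel_relative = 1 / amd_speedup
print(f"Intel delivers {intel_relative:.1%} of AMD's throughput "
      f"({percent_less(intel_relative, 1.0):.1f}% less)")
print(f"AMD is {percent_more(amd_speedup, 1.0):.1f}% faster")
print(f"Intel price premium over EPYC 9965: "
      f"{percent_more(intel_price, amd_9965_price):.1f}%")
```

Run as-is, it reports three results:

- Intel delivers about 80.5% of AMD's throughput, or 19.5% less.
- AMD is 24.3% faster.
- Intel carries a 20.2% price premium.

A 24% lead on one side is roughly a 20% deficit on the other.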

The DEV Community benchmark study, which tested 44 VMs across providers including AWS, GCP, Azure, Hetzner, and Oracle, confirmed AMD’s “clear performance lead” in single-thread workloads. Multi-thread scaling? Even more brutal. A single-socket AMD EPYC 9755 matched the performance of a dual-socket Intel system. Half the physical CPUs, same output.

For CPU-intensive workloads – think Kubernetes clusters, PostgreSQL with complex joins, FFmpeg video encoding, or KVM virtualization hosts – AMD Turin isn’t just faster. It’s the only logical choice unless you’re contractually locked to Intel.

ARM’s Breakthrough: Google Axion Beats x86

The bigger story might be ARM. Google’s Axion processor, based on ARM Neoverse-V2 cores and launched in January 2026, didn’t just compete with x86. It won.

Google claims Axion delivers up to 50% better performance than current-generation x86 instances. Independent Phoronix testing backs this up. In database workloads, Axion showed 50% better price-performance than x86 alternatives and 2x better transactional throughput than AWS Graviton4. For AI inference, MLPerf DLRMv2 benchmarks showed 3x better full-precision performance versus x86.

Web server performance? NGINX on Axion (4 vCPUs) delivered 40% higher request throughput than the Intel instance and 194% higher than the AMD instance. Google also cites up to 60% better energy efficiency than comparable x86 instances.

AWS Graviton4 also performed well. AWS rates it up to 30% faster than Graviton3 for web applications and up to 40% faster for databases. It also showed a 15.2% geometric mean advantage over AMD’s EPYC Genoa generation in HPC workloads. On comparably sized AWS M8 instances, Graviton4 runs at $0.718 per hour compared to $0.974 for AMD Turin and $0.847 for Intel Granite Rapids.
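To put those hourly rates in monthly terms, here is a small Python sketch. It assumes 730 billable hours per month, a common approximation (8,760 hours divided by 12). It uses only the three on-demand prices quoted above, so it compares list price, not price-performance.

```python
HOURS_PER_MONTH = 730  # approximation: 8,760 hours per year / 12

# On-demand hourly prices quoted in the article for comparable AWS M8 instances
m8_prices = {
    "Graviton4": 0.718,
    "Intel Granite Rapids": 0.847,
    "AMD Turin": 0.974,
}


def savings_vs(baseline, candidate):
    """Percent by which candidate is cheaper than baseline."""
    return (1 - candidate / baseline) * 100


arm = m8_prices["Graviton4"]
for name, price in m8_prices.items():
    monthly = price * HOURS_PER_MONTH
    line = f"{name:22s} ${price:.3f}/h  ~${monthly:,.0f}/month"
    if name != "Graviton4":
        line += f"  (Graviton4 is {savings_vs(price, arm):.1f}% cheaper)"
    print(line)
```

With these inputs, Graviton4 is about 15% cheaper than the Intel instance and about 26% cheaper than the AMD one. In monthly terms that is roughly $524, versus $618 and $711.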

ARM is no longer experimental. For databases, web servers, containerized microservices, and Java applications, ARM processors are production-ready and cost 20-30% less than x86 while matching or exceeding performance.

Provider Pricing: Hetzner and Oracle Crush AWS

The DEV Community study didn’t pull punches on cloud provider pricing: “AWS is definitely the worst value on-demand, with their Turin being the best they can do.”

Hetzner and Oracle Cloud Infrastructure topped the value rankings for on-demand instances. Hetzner’s shared-core instances start at €3.49 per month with strong performance-per-dollar ratios. Oracle matches Hetzner’s value with Turin processors and adds a generous free tier (two AMD-based VMs included in Always Free).

For reserved instances (1-3 year commitments), GCP’s Turin offerings provide competitive value. Oracle maintains price parity across all regions, making budget forecasting simpler.

Spot and preemptible pricing? GCP dominates with fixed discounts of 60-91% off on-demand rates. AWS spot pricing is market-based and volatile – less predictable, harder to budget.
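A discount band translates into a price range rather than a single number. Below is a minimal Python sketch that assumes a hypothetical $1.00/hour on-demand rate purely for readability; the 60-91% band is the one cited above for GCP.

```python
def spot_price_range(on_demand_hourly, min_discount=0.60, max_discount=0.91):
    """Return (lowest, highest) hourly spot price for a discount band.

    The default 60-91% band is the range the article cites for GCP;
    the discount you actually get depends on machine type and region.
    """
    if not 0 <= min_discount <= max_discount < 1:
        raise ValueError("discounts must satisfy 0 <= min <= max < 1")
    return (on_demand_hourly * (1 - max_discount),
            on_demand_hourly * (1 - min_discount))


# Hypothetical on-demand rate, chosen only to make the arithmetic easy to read
low, high = spot_price_range(1.00)
print(f"spot cost: ${low:.2f}-${high:.2f} per hour")
```

At that rate the band works out to roughly $0.09 to $0.40 per hour. Spot capacity can still be reclaimed by the provider, so a budget should allow for some work falling back to on-demand.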

The takeaway: don’t default to AWS without running the numbers. The convenience of AWS comes with a significant price penalty, especially for on-demand workloads.

Workload-Specific Recommendations

The “best” cloud VM depends entirely on what you’re running.

Choose AMD EPYC Turin for:

- Containerized workloads (Docker, Kubernetes) needing high core density

- Complex database operations (large joins, analytics, OLAP)

- Video encoding and transcoding (FFmpeg, media pipelines)

- Virtualization hosts (Proxmox, KVM with multiple VMs)

Choose ARM (Google Axion or AWS Graviton4) for:

- Database workloads (Graviton4 up to 40% faster than Graviton3; Axion with 50% better price-performance than x86)

- Web servers (NGINX sees 40%+ throughput gains)

- Cloud-native microservices and API backends

- AI inference workloads (3x better for certain models)

- Cost-sensitive deployments (20-30% savings over x86)

Choose Intel Granite Rapids only if:

- You’re contractually locked to Intel

- Running Intel-specific optimized software

- Prioritizing stable boost-clock behavior over raw performance

Choose Hetzner or Oracle if:

- Budget is the primary constraint

- A smaller regional footprint is acceptable (Hetzner runs far fewer locations than the hyperscalers)

- Running small to medium workloads
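The CPU lists above can be read as a simple lookup. The Python sketch below encodes them that way. The enum names and the function `recommend_cpu` are hypothetical. The mapping simplifies the article's guidance and does not replace benchmarking your own workload.

```python
from enum import Enum, auto


class Workload(Enum):
    CONTAINERS = auto()      # Docker/Kubernetes needing high core density
    ANALYTICS_DB = auto()    # large joins, analytics, OLAP
    VIDEO_ENCODING = auto()  # FFmpeg, media pipelines
    VIRT_HOST = auto()       # Proxmox, KVM with multiple VMs
    GENERAL_DB = auto()      # general database workloads
    WEB_SERVER = auto()      # NGINX and similar
    MICROSERVICES = auto()   # cloud-native services, API backends
    AI_INFERENCE = auto()    # inference for models that benefit


AMD_TURIN = "AMD EPYC Turin"
ARM = "ARM (Google Axion or AWS Graviton4)"
INTEL = "Intel Granite Rapids"

# Hypothetical mapping distilled from the lists above; a simplification, not a rule.
CPU_BY_WORKLOAD = {
    Workload.CONTAINERS: AMD_TURIN,
    Workload.ANALYTICS_DB: AMD_TURIN,
    Workload.VIDEO_ENCODING: AMD_TURIN,
    Workload.VIRT_HOST: AMD_TURIN,
    Workload.GENERAL_DB: ARM,
    Workload.WEB_SERVER: ARM,
    Workload.MICROSERVICES: ARM,
    Workload.AI_INFERENCE: ARM,
}


def recommend_cpu(workload: Workload, *, intel_locked: bool = False,
                  intel_optimized_software: bool = False,
                  prefer_boost_stability: bool = False) -> str:
    """Return a CPU family for a workload, checking the Intel exceptions first."""
    if intel_locked or intel_optimized_software or prefer_boost_stability:
        return INTEL
    return CPU_BY_WORKLOAD[workload]


print(recommend_cpu(Workload.WEB_SERVER))
print(recommend_cpu(Workload.VIDEO_ENCODING, intel_locked=True))
```

The Intel exceptions are checked before the lookup because the article treats them as overrides rather than workload categories.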

Intel’s Decline Continues

Intel improved Granite Rapids over the previous Emerald Rapids generation, particularly around boost clock stability. But stability improvements don’t fix the fundamental problem: Granite Rapids is slower and more expensive than AMD’s equivalent.

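The 128-core Xeon 6980P costs $17,800 and delivers roughly 20% less performance than AMD’s 128-core EPYC 9755, the flip side of AMD’s 1.243x lead. Even AMD’s 192-core EPYC 9965 lists for less, at $14,813. Power consumption? Intel peaks higher (547W vs 500W) while delivering less work. And when the DEV Community study called AWS “definitely the worst value on-demand,” it noted that Turin, not Intel, was the best AWS could offer.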

Intel’s server CPU dominance is eroding. Don’t assume Intel equals best anymore. The data says otherwise.

Implications for Infrastructure Decisions

Cloud infrastructure costs are rising 5-10% in 2026 as underlying server prices increase 15-25%. The global cloud market will see $600 billion in capital expenditures this year, up 36% year-over-year, with $450 billion tied directly to AI infrastructure.

In this environment, choosing the right VM matters. The performance delta between AMD Turin and Intel Granite Rapids is 24%. The price-performance gap between Hetzner and AWS can exceed 100%. Make the wrong choice and you’re burning money at scale.
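Comparing price-performance means dividing a benchmark score by what you actually pay. Below is a minimal Python sketch. The two VM options and their scores and costs are hypothetical placeholders, chosen to show how a slower but much cheaper VM can open a gap above 100%.

```python
from dataclasses import dataclass


@dataclass
class VmOption:
    name: str
    score: float         # your own benchmark result, higher is better
    monthly_cost: float  # what you'd actually pay, same currency for every option

    @property
    def perf_per_dollar(self) -> float:
        return self.score / self.monthly_cost


def price_performance_gap(better: VmOption, worse: VmOption) -> float:
    """Percent by which `better` delivers more work per dollar than `worse`."""
    return (better.perf_per_dollar / worse.perf_per_dollar - 1) * 100


# Hypothetical placeholder numbers, not measurements from the study.
options = [
    VmOption("provider-a-vm", score=1000, monthly_cost=40.0),
    VmOption("provider-b-vm", score=1100, monthly_cost=95.0),
]
ranked = sorted(options, key=lambda o: o.perf_per_dollar, reverse=True)
for o in ranked:
    print(f"{o.name}: {o.perf_per_dollar:.1f} points per dollar")
print(f"gap: {price_performance_gap(ranked[0], ranked[-1]):.0f}%")
```

Here the cheaper option scores lower, yet it delivers about 116% more work per dollar. To rank real candidates, plug in your own benchmark results and the prices you'd actually pay: on-demand, reserved, or spot.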

The benchmarks are clear: AMD leads for CPU-intensive work, ARM wins for databases and web workloads, and provider selection matters as much as CPU architecture. Intel only makes sense if you’re locked in. AWS only makes sense if convenience outweighs cost.

The infrastructure decisions you make today lock in costs for years. Choose based on data, not defaults.