Developers using AI coding tools believe they’re working 20% faster. Research shows they’re actually 19% slower. That’s a 39-percentage-point gap between perception and reality—and it reveals the fundamental crisis in how we measure AI productivity in 2026. With 93% of developers using AI tools but only 10% seeing measurable gains, the industry is waking up to an uncomfortable truth: speed metrics lie, and quality is collapsing under the weight of “almost-right” AI code.

The Perception Trap

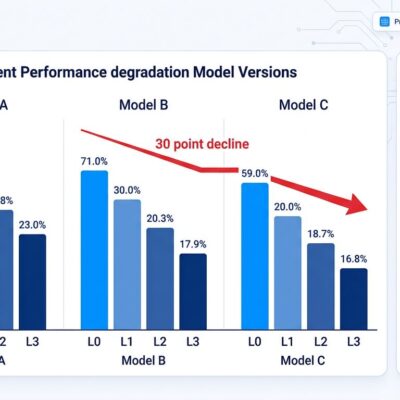

The numbers are stark. METR’s February 2026 study tracked 16 experienced open-source developers across 246 real-world tasks in mature codebases averaging 22,000+ stars and over a million lines of code. Before starting, developers predicted AI would make them 24% faster. After completing the study, they believed they were 20% faster. The actual result? They were 19% slower.
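To make the gap concrete, here is a minimal sketch of the arithmetic. It assumes "20% faster" means 20% less time per task and "19% slower" means 19% more time; the 60-minute baseline task is hypothetical and does not come from the study.

```python
def perception_gap(perceived_speedup_pct: float, measured_slowdown_pct: float) -> float:
    """Gap, in percentage points, between a perceived speedup and a measured slowdown."""
    return perceived_speedup_pct + measured_slowdown_pct


def task_minutes(baseline_minutes: float, change_pct: float) -> float:
    """Task duration after a percentage change in completion time (negative = faster)."""
    return baseline_minutes * (1 + change_pct / 100)


BASELINE_MINUTES = 60.0  # hypothetical task length, chosen only for illustration

gap_points = perception_gap(20, 19)                   # believed 20% faster, measured 19% slower
felt_minutes = task_minutes(BASELINE_MINUTES, -20)    # how long the task felt
actual_minutes = task_minutes(BASELINE_MINUTES, +19)  # how long it actually took

print(f"gap: {gap_points} points")
print(f"felt like {felt_minutes:.1f} min, took {actual_minutes:.1f} min")
```

Under those assumptions, a task that would take an hour unaided feels like 48 minutes with AI but takes a little over 71.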

This isn’t a rounding error. It’s a fundamental disconnect between how productivity feels and what measurement shows. The illusion comes from volume: PR submissions increased 98% while delivery velocity remained flat. Developers see more code flowing through the pipeline and interpret that as speed. But volume isn’t completion; it’s just motion.

The hidden cost shows up in the cleanup. Developers now spend 9% of task time reviewing and cleaning AI output, which translates to roughly four hours in a 40-hour week. That’s about two workdays per month spent acting as forensic auditors instead of engineers. AI shifts bottlenecks rather than removing them, and teams mistake activity for progress until the metrics expose the gap.
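The conversion from a share of task time to hours is easy to check. The sketch below assumes a 40-hour week, an 8-hour workday, and 52/12 weeks per month; none of those constants come from the study, so adjust them to your own schedule.

```python
HOURS_PER_WEEK = 40.0      # assumption: full-time week
HOURS_PER_WORKDAY = 8.0    # assumption: standard workday
WEEKS_PER_MONTH = 52 / 12  # average weeks in a calendar month


def review_overhead(share_of_time: float) -> dict[str, float]:
    """Convert a fraction of working time into weekly and monthly review cost."""
    weekly_hours = share_of_time * HOURS_PER_WEEK
    monthly_hours = weekly_hours * WEEKS_PER_MONTH
    return {
        "weekly_hours": weekly_hours,
        "monthly_hours": monthly_hours,
        "monthly_workdays": monthly_hours / HOURS_PER_WORKDAY,
    }


overhead = review_overhead(0.09)  # the 9% share cited above
for name, value in overhead.items():
    print(f"{name}: {value:.2f}")
```

With these inputs the 9% share comes to 3.6 hours a week and about 15.6 hours a month, or just under two eight-hour workdays.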

The “Almost Right” Quality Collapse

The top developer frustration in 2026 isn’t that AI code is wrong—it’s that it’s almost right. 45% cite “AI solutions that are almost right, but not quite” as their number-one pain point. Meanwhile, 66% report spending more time fixing AI-generated code than writing it themselves.

AI often produces code that’s roughly 95% correct. The remaining 5% (a hallucinated library method, an off-by-one error, a subtle security flaw) requires deep debugging that often takes longer than writing from scratch. Worse, it forces a mental shift from creator mode to auditor mode, a transition developers describe as “exhausting and inefficient.”

The quality metrics back this up. Opsera’s 2026 benchmark report, analyzing over 250,000 developers across 60+ enterprises, found that AI-generated code contains 1.7 times more bugs than human-written code and 75% more logic errors in critical infrastructure. Code duplication rose from 10.5% to 13.5%. Defect rates increased 15-18%, and those issues typically appear after release, not during testing.

Trust reflects the damage. Only 33% of developers trust AI results. Just 3% “highly trust” them. The promise of AI-assisted development was acceleration. The reality is surveillance: constant verification of output you can’t rely on.

The Sustainable Threshold

Here’s the insight teams are missing: there’s an optimal range for AI code generation, and most organizations are exceeding it. Right now, 41-42% of global code is AI-generated. Industry benchmarks place the sustainable range at 25-40%, with 30% as the sweet spot that delivers 10-15% productivity gains while keeping review overhead and quality standards manageable.

Beyond 40%, quality degrades sharply. Rework increases 20-30%. Bug rates climb. Review times extend. Above 50%, urgent reduction is recommended because the technical debt compounds faster than teams can service it.
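A team that tracks its AI-generated share could encode these bands as a simple check. This is a sketch, not an established tool: the function name and labels are made up for illustration, and the "below range" label for shares under 25% is an assumption, since the benchmarks above only describe what happens from 25% upward.

```python
def classify_ai_share(ai_share_pct: float) -> str:
    """Map the percentage of AI-generated code to the bands described above."""
    if not 0 <= ai_share_pct <= 100:
        raise ValueError("ai_share_pct must be between 0 and 100")
    if ai_share_pct > 50:
        return "urgent reduction"  # debt compounds faster than it can be serviced
    if ai_share_pct > 40:
        return "degrading"         # rework, bug rates, and review times rise
    if ai_share_pct >= 25:
        return "sustainable"       # 25-40% band, with 30% as the sweet spot
    return "below range"           # assumption: the article sets no rule here


for share in (10, 30, 41.5, 55):
    print(f"{share}% -> {classify_ai_share(share)}")
```

How the share itself is measured (by lines, commits, or accepted suggestions) is left open here, and the choice will shift which band a team lands in.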

Opsera’s data shows the mechanism: time-to-pull-request accelerated 48-58% with AI tools, but organizations sacrificed quality and security for speed without realizing the trade-off. The acceleration was real: code generation sped up. But software development isn’t just code generation. It’s writing, reviewing, debugging, integrating, and maintaining. AI only accelerates the first part, and the downstream costs eat the gains.

The Metric Shift

Speed no longer differentiates high-performing teams from struggling ones. Quality does. That’s why 2026 marks the industry’s pivot from velocity metrics to quality metrics.

In 2025, teams measured cycle time, deployment frequency, PR count, and lines of code. Those metrics rewarded volume. In 2026, the focus has shifted to defect density (benchmark: below 1%), merge confidence scores, test coverage (benchmark: above 80%), code churn (benchmark: below 10%), and long-term maintainability.
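The three numeric benchmarks lend themselves to an automated gate. The sketch below is illustrative only: the `QualitySnapshot` type and its field names are hypothetical, every metric is assumed to be already expressed as a percentage, and merge confidence and maintainability are left out because no benchmark is given for them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualitySnapshot:
    """Hypothetical per-release metrics, each expressed as a percentage."""
    defect_density_pct: float
    test_coverage_pct: float
    code_churn_pct: float


def failed_benchmarks(snapshot: QualitySnapshot) -> list[str]:
    """Return the names of the metrics that miss the benchmarks quoted above."""
    failures = []
    if snapshot.defect_density_pct >= 1.0:  # benchmark: below 1%
        failures.append("defect density")
    if snapshot.test_coverage_pct <= 80.0:  # benchmark: above 80%
        failures.append("test coverage")
    if snapshot.code_churn_pct >= 10.0:     # benchmark: below 10%
        failures.append("code churn")
    return failures


healthy = QualitySnapshot(defect_density_pct=0.6, test_coverage_pct=85.0, code_churn_pct=7.5)
strained = QualitySnapshot(defect_density_pct=1.4, test_coverage_pct=72.0, code_churn_pct=7.5)

print(failed_benchmarks(healthy))
print(failed_benchmarks(strained))
```

Returning the list of failing metrics, rather than a single pass/fail flag, keeps the report actionable when a release misses more than one benchmark.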

The change isn’t philosophical—it’s survival. When AI tools pushed PR volumes up 98% without increasing delivery velocity, teams discovered that velocity metrics were tracking motion, not outcomes. Defect density reveals whether the code shipped actually works. Merge confidence scores show whether reviews catch real issues or rubber-stamp submissions. Test coverage exposes whether teams verified correctness or assumed it.

What works in practice: multi-agent workflows where one agent writes, another critiques, and a third tests; third-party validation tools that provide objective quality assessments the generating AI can’t deliver; and governance frameworks that set explicit policies on AI usage patterns, documentation requirements, and percentage thresholds.

Where AI Actually Delivers

The paradox resolves when you separate controlled tasks from integrated development. In controlled experiments (writing a function, generating tests, producing boilerplate), AI tools like GitHub Copilot deliver 30-55% speed improvements. One study found developers completed an HTTP server task in 1 hour 11 minutes with Copilot versus 2 hours 41 minutes without, a 55.8% reduction in completion time.
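It helps to be precise about what that percentage measures. The sketch below computes both the reduction in completion time and the equivalent speedup factor from the whole-minute times quoted above; the function names are illustrative.

```python
def time_reduction_pct(with_tool_min: float, without_tool_min: float) -> float:
    """Percentage of completion time saved relative to working without the tool."""
    return (without_tool_min - with_tool_min) / without_tool_min * 100


def speedup_factor(with_tool_min: float, without_tool_min: float) -> float:
    """How many times faster the task was completed with the tool."""
    return without_tool_min / with_tool_min


WITH_COPILOT_MIN = 1 * 60 + 11     # 1 hour 11 minutes
WITHOUT_COPILOT_MIN = 2 * 60 + 41  # 2 hours 41 minutes

reduction = time_reduction_pct(WITH_COPILOT_MIN, WITHOUT_COPILOT_MIN)
speedup = speedup_factor(WITH_COPILOT_MIN, WITHOUT_COPILOT_MIN)

print(f"time saved: {reduction:.1f}%")
print(f"speedup: {speedup:.2f}x")
```

With the rounded times this gives about 55.9% less time, or roughly a 2.27x speedup. The small difference from the reported 55.8% presumably comes from rounding the durations to whole minutes.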

But controlled tasks aren’t software development. Real-world work involves integrating that function into a system, handling edge cases, debugging interactions, maintaining consistency across modules, and ensuring the change doesn’t introduce regressions. That’s where the 19% slowdown appears. Review overhead, integration testing, and debugging eat the code generation gains.

The net effect with disciplined adoption: modest 10-15% productivity improvements. Without discipline—letting AI code percentages exceed 40%, measuring velocity instead of quality, skipping governance—teams see negative productivity and collapsing quality.

Quality Over Speed

The 39-point perception gap isn’t just a research curiosity. It’s the mechanism behind the quality crisis. Teams feel faster, so they don’t notice the hidden costs accumulating until defects spike, incidents increase, and rework consumes the supposed time savings.

The solution isn’t rejecting AI—it’s using it sustainably. Stay within the 25-40% AI code range. Measure defect density and merge confidence, not velocity and cycle time. Implement governance and validation. Accept that quality, not speed, is the competitive differentiator in 2026.

AI accelerates code writing. That’s real. But software development is more than writing code, and the industry is learning—slowly, expensively—that faster typing doesn’t mean faster shipping. The gap closes when we measure what actually matters.