ETH Zurich researchers have put numbers behind what many developers suspected: AGENTS.md context files don’t work the way the AI industry promised. Published February 12, 2026, the study ran 438 coding tasks across four agent and model configurations and found that LLM-generated context files lowered resolution rates by 3% while raising inference costs by at least 20%. Even human-written files improved results by only 4%, barely worth the effort. The kicker? Anthropic, Cursor, and OpenAI all recommend these files as best practice. Millions of developers followed that guidance. The data suggests that guidance was wrong.

The Numbers Don’t Lie

The ETH Zurich team tested Claude Code (Sonnet-4.5), Codex (GPT-5.2, GPT-5.1 mini), and Qwen Code (Qwen3-30b-coder) across 438 real-world coding tasks. LLM-generated context files decreased resolution rates by 3% on average while increasing inference costs by 20-23%. Human-written files fared marginally better—a 4% performance boost—but still drove costs up 19%.

The study revealed why. Context files pushed agents to take 2.45-3.92 more steps per task. Reasoning tokens increased 14-22% for GPT models. Agents explored more, tested more carefully, and followed the instructions they were given. They just didn’t succeed any more often. In one telling experiment, when researchers removed all documentation from the repositories, LLM-generated context files improved performance by 2.7%, which suggests that in a documented repository they mostly duplicate what’s already there.

Industry Guidance Contradicts Research

Here’s where it gets uncomfortable. Anthropic’s official best practices recommend CLAUDE.md files containing “coding standards, architecture decisions, preferred libraries, and review checklists.” Cursor promotes .cursorrules with setup commands, build scripts, and coding style. Both suggest files under 300 lines. The research says even minimal files barely help.

The contradiction is stark. Vendors tell developers context files are essential. The research finds that LLM-generated files hurt performance and that both kinds of file cost more money. Someone’s wrong, and it’s not the peer-reviewed study with 438 test cases.

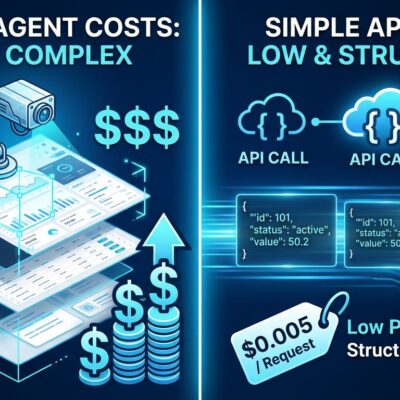

This Costs Real Money

A 20% cost increase doesn’t sound dramatic until you scale it. Consider an enterprise team running 1,000 agent tasks daily. That 20% penalty is the cost equivalent of 200 extra runs. At an average $0.50 per run, that’s $100 daily or $36,500 yearly, per team. Multiply that across organizations with dozens of engineering teams using AI coding agents.
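The arithmetic above can be reproduced with a few lines of Python. This is a minimal sketch: the function name `overhead_cost` is hypothetical, and the 1,000 runs per day and $0.50 per run are the article's illustrative figures, not measurements from the study.

```python
def overhead_cost(runs_per_day: int, cost_per_run: float,
                  overhead: float, days: int = 365) -> tuple[float, float]:
    """Return (daily, yearly) extra spend caused by a fractional cost overhead."""
    # Baseline daily spend times the overhead fraction gives the extra daily cost.
    daily = runs_per_day * cost_per_run * overhead
    return daily, daily * days


# Figures from the example above: 1,000 runs/day, $0.50/run, 20% overhead.
daily, yearly = overhead_cost(1_000, 0.50, 0.20)
print(f"${daily:,.2f} per day, ${yearly:,.2f} per year")
```

Swapping in your own run volume and per-run cost shows quickly whether the overhead matters for your team.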

The performance hit compounds the waste. Pay 20% more for 3% worse results with LLM-generated files, or 19% more for a marginal 4% gain with human-written ones. Developers following vendor guidance are unknowingly funding worse outcomes at scale.
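One way to see how the two effects compound is to look at cost per resolved task. The sketch below assumes both percentages are relative changes against the no-context-file baseline, which the article does not specify. If the study reports percentage-point changes instead, the result would also depend on the baseline resolution rate. The function name is hypothetical.

```python
def cost_per_resolved_factor(cost_change: float, success_change: float) -> float:
    """Factor by which cost per resolved task changes.

    Both arguments are assumed to be relative changes,
    e.g. 0.20 for +20% cost and -0.03 for -3% resolution rate.
    """
    return (1 + cost_change) / (1 + success_change)


llm_generated = cost_per_resolved_factor(0.20, -0.03)
human_written = cost_per_resolved_factor(0.19, 0.04)
print(f"LLM-generated: {llm_generated:.3f}x, human-written: {human_written:.3f}x")
```

Under that assumption, each resolved task costs roughly 24% more with LLM-generated files and roughly 14% more with human-written ones.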

And here’s the question nobody wants to ask: who benefits from 20% higher token usage? API providers. More tokens mean more revenue. Whether that influenced industry recommendations is speculation, but the incentive alignment is impossible to ignore.

What Actually Works for AI Coding Agents

The ETH Zurich researchers were blunt: “Unnecessary requirements from context files make tasks harder.” Their recommendation? Omit LLM-generated files entirely and limit human-written ones to “only minimal requirements”—non-discoverable details like custom tooling or unusual build commands.

Good examples: “Use pnpm, not npm” or “Run tests with pytest --no-cov.” Bad examples: “This project uses React with TypeScript” (obvious from package.json) or “Follow clean code principles” (vague and unactionable).

Some developers are going further. On Hacker News, one engineer described replacing AGENTS.md entirely with programmatic enforcement—TypeScript AST validation, pre-commit hooks, deterministic linters. The reason? “Even explicit instructions are routinely ignored.” If agents don’t reliably follow markdown guidance and the guidance hurts anyway, why not enforce rules through code?
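As a rough illustration of what programmatic enforcement can look like, here is a minimal sketch using Python's standard `ast` module. It is not the Hacker News engineer's setup, which was described as TypeScript-based. The two rules (no bare `except:` and no `print()` calls) and the names `RuleChecker`, `check_source`, and `main` are hypothetical, chosen only to show the pattern.

```python
import ast


class RuleChecker(ast.NodeVisitor):
    """Collects violations of two illustrative (hypothetical) project rules."""

    def __init__(self) -> None:
        self.violations: list[tuple[int, str]] = []

    def visit_ExceptHandler(self, node: ast.ExceptHandler) -> None:
        # A handler with no exception type is a bare 'except:'.
        if node.type is None:
            self.violations.append((node.lineno, "bare 'except:' is not allowed"))
        self.generic_visit(node)

    def visit_Call(self, node: ast.Call) -> None:
        # Flag direct calls to the built-in name 'print'.
        if isinstance(node.func, ast.Name) and node.func.id == "print":
            self.violations.append((node.lineno, "use logging instead of print()"))
        self.generic_visit(node)


def check_source(source: str) -> list[tuple[int, str]]:
    """Parse source code and return (line, message) violations sorted by line."""
    checker = RuleChecker()
    checker.visit(ast.parse(source))
    return sorted(checker.violations)


def main(paths: list[str]) -> int:
    """Check each file; return 1 if any violation is found, else 0."""
    failed = False
    for path in paths:
        with open(path, encoding="utf-8") as handle:
            for lineno, message in check_source(handle.read()):
                print(f"{path}:{lineno}: {message}")
                failed = True
    return 1 if failed else 0


# Small demonstration on an in-memory snippet.
sample = "try:\n    print('hi')\nexcept:\n    pass\n"
for lineno, message in check_source(sample):
    print(f"line {lineno}: {message}")
```

A hook that passes the staged file paths as arguments could call `sys.exit(main(sys.argv[1:]))`, so a violation blocks the commit whether or not the agent read any markdown guidance.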

The Industry Moved Too Fast

This isn’t the first time the AI industry promoted a practice based on assumptions rather than data. Context helps humans, so it must help AI agents, right? Except nobody tested that at scale before declaring it best practice. Vendors rushed guidance out, developers trusted it, and costs ballooned.

The ETH Zurich study is part of a necessary correction phase. As AI coding tools mature, we’re learning what actually works versus what sounds right. Sometimes the answer is less—fewer tokens, less context, less complexity. The agents are already good at exploration. Over-specifying their work creates problems that didn’t exist.

Question the Guidance

If you’re using AGENTS.md files, delete the LLM-generated ones. Pare human-written files down to the absolute minimum—specific tooling only. Consider programmatic enforcement for anything that matters. And next time a vendor recommends a best practice, ask whether they tested it rigorously or assumed it would work.

The data is clear. Context files cost more and deliver little or nothing in return, the opposite of what the industry told us. Trust the research, not the sales pitch.