A critical security flaw in Ollama lets unauthenticated attackers steal API keys, system prompts, and active conversation data from exposed servers — with no credentials, no errors logged, and no trace in the server’s activity. The vulnerability, codenamed Bleeding Llama (CVE-2026-7482, CVSS 9.1), requires exactly three API calls to execute. A patch has been available since February, but a breakdown in responsible disclosure meant most operators had no idea they needed it until May.

What the Bug Actually Does

The vulnerability lives in Ollama’s GGUF model loader. When a client submits a GGUF file to the /api/create endpoint, Ollama reads the file’s declared tensor dimensions without verifying that they fit within the file’s actual length. An attacker crafts a GGUF file whose declared tensor size points well past the end of the file data. During quantization, Ollama’s Go code reads past the end of the allocated heap buffer. It can do so because the loader uses Go’s unsafe package, which bypasses the language’s usual bounds checks and memory safety guarantees.
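The missing check is easy to state in code. What follows is a minimal sketch, not Ollama's actual patch: the function name tensor_fits, its parameters, and the two-entry ELEMENT_SIZE table are all hypothetical and exist only to illustrate the idea of comparing a declared tensor extent against the real file length before reading.

```python
import math

# Bytes per element for two unquantized tensor types (illustrative subset only).
ELEMENT_SIZE = {"F32": 4, "F16": 2}


def tensor_fits(file_size: int, data_offset: int, dims: tuple[int, ...], dtype: str) -> bool:
    """Return True only if the declared tensor lies entirely inside the file."""
    if dtype not in ELEMENT_SIZE:
        return False  # unknown type: refuse rather than guess a size
    if data_offset < 0 or any(d < 0 for d in dims):
        return False  # negative offsets or dimensions are never valid
    declared_bytes = math.prod(dims) * ELEMENT_SIZE[dtype]
    # Python ints cannot overflow; a Go or C version would also need an
    # explicit overflow check on the multiplication above.
    return data_offset + declared_bytes <= file_size
```

A loader that ran a check like this before touching tensor data would reject the crafted file up front instead of reading whatever happens to sit beyond the buffer.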

Here’s where it gets clever: the exploit uses a lossless float-16 to float-32 conversion to preserve the stolen bytes in readable form, rather than garbling them through lossy quantization. The leaked heap contents — whatever the Ollama process had in memory at that moment — get folded into the resulting model artifact. The attacker then calls /api/push to ship that poisoned model to an attacker-controlled registry and reads out the stolen data at leisure. No errors appear in Ollama’s logs. The entire sequence looks identical to a routine model creation workflow.
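Why does the float-16 to float-32 step matter? Every float-16 value is exactly representable as a float-32, so widening loses nothing and the original two bytes can be recovered afterwards. The sketch below only illustrates that numeric property using Python's standard struct module; it is not the exploit, and the function names are hypothetical. NaN bit patterns are skipped because Python's struct conversion does not preserve NaN payloads.

```python
import struct


def widen_then_narrow(half_bits: bytes) -> bytes:
    """Convert 2 raw bytes float16 -> float32 -> float16 and return the result."""
    (value,) = struct.unpack("<e", half_bits)   # interpret the bytes as a float16
    as_f32 = struct.pack("<f", value)           # widen to float32 (always exact)
    (value_back,) = struct.unpack("<f", as_f32)
    return struct.pack("<e", value_back)        # narrow back to float16


def is_nan_pattern(bits: int) -> bool:
    # Exponent field all ones (0x7C00) with a nonzero mantissa encodes NaN.
    return (bits & 0x7C00) == 0x7C00 and (bits & 0x03FF) != 0


def count_exact_round_trips() -> int:
    """Count the 16-bit patterns that survive the widen/narrow round trip."""
    exact = 0
    for bits in range(0x10000):
        if is_nan_pattern(bits):
            continue  # NaN payloads are not preserved by Python's struct module
        raw = bits.to_bytes(2, "little")
        if widen_then_narrow(raw) == raw:
            exact += 1
    return exact
```

Contrast this with a lossy quantization format, where many distinct inputs collapse onto the same output value and the original bytes cannot be reconstructed.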

What’s in that heap memory? Environment variables. API keys. System prompts. And the conversation data of whoever else was using the server at the time. Researchers at Cyera, who discovered and named the vulnerability, describe it as “Heartbleed for AI servers” — same attack class as the 2014 OpenSSL bounds-check failure, different ecosystem, higher stakes for proprietary AI workloads.

Why 300,000 Servers Are Exposed

Ollama’s default configuration binds to 127.0.0.1 (localhost only), which is safe. The problem is the documented OLLAMA_HOST=0.0.0.0 setting, which opens Ollama to every network interface with no authentication whatsoever — no username, no API key, no IP allowlist. This configuration is effectively required for any multi-machine setup: homelab servers, cloud GPU instances, team deployments. Ollama’s own documentation points developers toward it.
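For operators auditing a fleet, a rough classifier for OLLAMA_HOST values can flag risky bindings. This is a sketch under assumptions: the helper name bind_is_loopback_only is hypothetical, and the value formats it handles (bare address, host:port, optional scheme, bracketed IPv6) are guesses at what appears in practice rather than a statement of what Ollama's parser accepts. Anything it cannot recognize as loopback is treated as exposed.

```python
import ipaddress


def bind_is_loopback_only(ollama_host: str) -> bool:
    """Rough check: does this OLLAMA_HOST value keep the API on loopback?"""
    host = ollama_host.strip()
    if "://" in host:
        host = host.split("://", 1)[1]      # drop an optional scheme
    host = host.split("/", 1)[0]            # drop any trailing path
    if host.startswith("["):                # bracketed IPv6, e.g. [::1]:11434
        host = host[1:].split("]", 1)[0]
    elif host.count(":") == 1:              # host:port
        host = host.rsplit(":", 1)[0]
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False                        # empty or hostname: assume exposed


if __name__ == "__main__":
    for value in ("127.0.0.1", "0.0.0.0", "0.0.0.0:11434", "[::1]:11434"):
        print(value, "->", "loopback only" if bind_is_loopback_only(value) else "EXPOSED")
```

A loopback-only result says nothing about port forwarding, container port mappings, or reverse proxies in front of the process, so it complements a network-level check rather than replacing one.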

According to SecurityWeek and Cyera’s Shodan telemetry, more than 300,000 internet-facing Ollama servers are currently running vulnerable versions. The /api/create and /api/push endpoints at the center of this attack require no authentication in the upstream distribution.

The Disclosure That Didn’t Happen

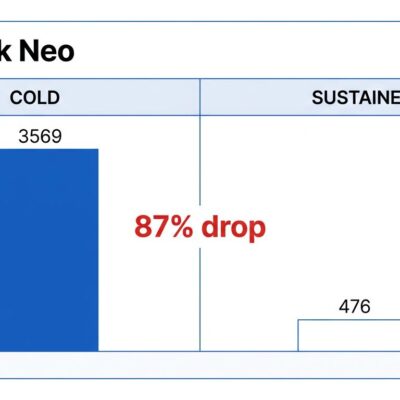

Here’s the part that should make everyone angry: the patch shipped on February 25, 2026 in Ollama v0.17.1. There was no security advisory. No mention in the release notes that a critical CVE was being addressed. MITRE took more than two months to respond to the CVE request. The result was a nearly three-month window where the vulnerability was technically patched but practically invisible — no CVE number, no scanner alert, no automated notification to prompt an upgrade.

The public CVE disclosure only arrived in May 2026. Operators running versions before 0.17.1 had no reason to know they were sitting on a CVSS 9.1 vulnerability. This is not how responsible disclosure is supposed to work, and the AI tooling ecosystem is moving too fast for a CVE pipeline that was already struggling with traditional software.

And There Are Two More CVEs

The same week Bleeding Llama went public, researchers at Striga disclosed two additional Ollama vulnerabilities: CVE-2026-42248 and CVE-2026-42249 (both CVSS 7.7) target the Windows auto-updater. Chained together, they let an attacker on the same local network deliver a persistent executable to the Windows Startup folder — remote code execution on every login, with no user interaction required.

A fix was merged into Ollama’s main branch on May 11 but has not yet shipped in a tagged release. Windows users remain exposed until it does.

Fix It Now

The steps are straightforward:

- Upgrade immediately: Update to Ollama v0.17.1 or later — this patches Bleeding Llama (CVE-2026-7482).

- Rebind to localhost: Set OLLAMA_HOST=127.0.0.1 unless you have a specific reason to expose Ollama to other machines.

- Firewall port 11434: Block it from external access at the network level regardless of your Ollama config.

- Add a reverse proxy: If you need network access, put Nginx or Caddy in front with authentication enabled.

- Windows users: Disable auto-download updates in the Ollama tray app settings until the Windows CVE patch ships in a release.

To check exposure: run curl http://your-server:11434/api/tags from a machine outside your network, replacing your-server with the server’s public address. If you get a response, attackers can reach your server too.
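The same check can be scripted and extended to report the running version. The sketch below assumes the server answers GET /api/version with a JSON object containing a "version" string; treat that response shape, the helper names, and the your-server placeholder as assumptions to verify against your own deployment. The 0.17.1 threshold comes from the patch release named above.

```python
import json
import urllib.error
import urllib.request

PATCHED = (0, 17, 1)  # first release reported to contain the Bleeding Llama fix


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '0.17.1' or 'v0.17.1-rc2' into a comparable tuple of ints."""
    core = text.strip().lstrip("v").split("-", 1)[0].split("+", 1)[0]
    return tuple(int(part) for part in core.split("."))


def is_patched(version: str) -> bool:
    # Pre-release suffixes are ignored, so an rc build of 0.17.1 counts as patched.
    return parse_version(version) >= PATCHED


def probe(base_url: str, timeout: float = 5.0) -> str:
    """Report whether base_url answers and, if so, whether it looks patched."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/version", timeout=timeout) as resp:
            version = json.load(resp)["version"]
        patched = is_patched(version)
    except (urllib.error.URLError, OSError, ValueError, KeyError, TypeError):
        return "unreachable or not an Ollama API"
    status = "patched" if patched else "VULNERABLE to CVE-2026-7482"
    return f"reachable, version {version}: {status}"


if __name__ == "__main__":
    # Replace the placeholder with your server's public address.
    print(probe("http://your-server:11434"))
```

Run it from outside your network. A "reachable" result is a problem even on a patched version, because it means the unauthenticated API is open to anyone who finds it.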

Bleeding Llama is a case study in what happens when AI infrastructure security is treated as an afterthought. Ollama is the most popular way for developers to run local LLMs, and it shipped the fix for a critical memory-disclosure vulnerability silently, with no advisory, relying on operators to notice a routine version bump. The community needs to demand security advisories as a first-class artifact, separate from release notes, for any framework running with this level of system access.