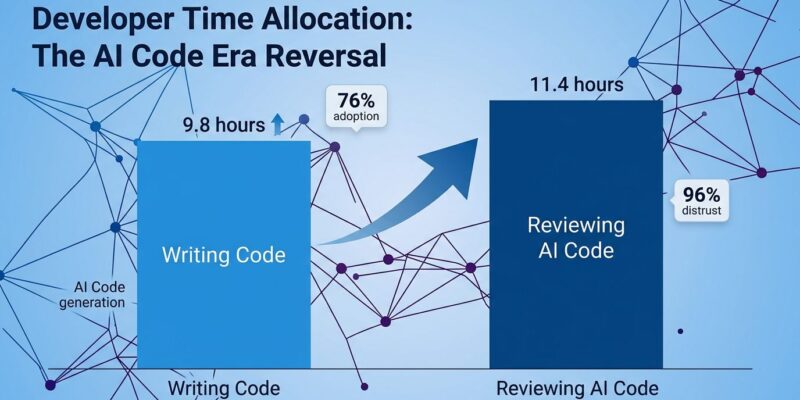

Developers are spending 11.4 hours per week reviewing AI-generated code versus 9.8 hours writing new code—a complete reversal of the 2024 pattern, according to multiple 2026 developer surveys from Stack Overflow, SonarSource, and Nitor. While AI coding tool adoption has surged to 76% (up from 44% in 2024), a critical “verification bottleneck” has emerged. The productivity paradox: AI promises a 35% boost, but 96% of developers don’t fully trust the output, creating a new burden that’s fundamentally transforming what it means to be a software developer.

This isn’t about whether AI tools work—they’re clearly being adopted at breakneck speed. It’s about the hidden cost nobody’s talking about: developers are shifting from code writers to code reviewers, and the implications for careers, junior developers, and software quality are profound.

The Trust Gap That’s Breaking Development

Here’s the uncomfortable reality: 96% of developers don’t fully trust the functional accuracy of AI-generated code, yet 72% of those who have tried AI tools use them every day. Making matters worse, only 48% always verify AI code before committing it. This creates a dangerous “trust gap” where code nobody fully trusts enters production anyway.

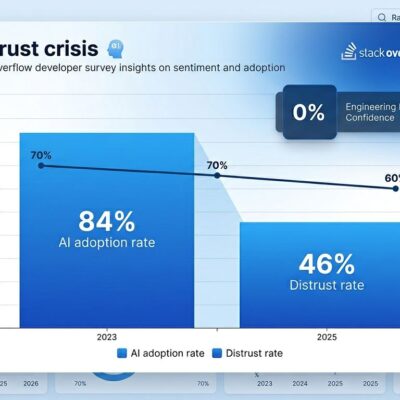

Stack Overflow’s 2025 survey revealed that while 84% use AI tools, 46% don’t trust the output. Developer sentiment tells the story: positive feelings about AI dropped from over 70% in 2023-2024 to just 60% in 2025. Trust is declining even as adoption accelerates.

The top frustration? “AI solutions that are almost right, but not quite,” cited by 66% of developers. That “almost” is the killer. Code that looks correct but harbors subtle bugs or inefficiencies compounds over time. On top of that, 45% say debugging AI-generated code takes more time than writing the code themselves. When three-quarters of developers say they wouldn’t trust AI for critical decisions, we have a systemic problem.

The Productivity Paradox Nobody Expected

AI delivers a reported 35% productivity boost and 54% of developers report higher job satisfaction—numbers that sound fantastic until you dig deeper. The reality? 95% of developers spend significant time reviewing, testing, and correcting AI output. One developer on Hacker News put it bluntly: “You should be spending something like 5-15X the time the model takes to implement a feature on reviewing and making it fix its errors and inefficiencies.”

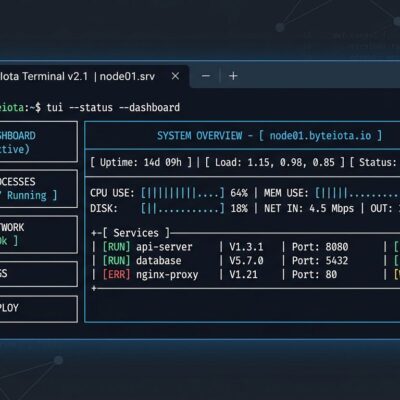

SonarSource’s research confirms what developers are experiencing: “Increased speed in code generation hasn’t automatically translated to faster deployment, and for many teams, new bottlenecks for AI code review and verification have been created.” The net effect isn’t pure efficiency gain—it’s role transformation.

Organizations expecting linear productivity gains are misunderstanding what’s happening. You’re not getting “2X developers.” You’re getting developers who work differently. Sprint planning, budget allocation, and performance metrics all need to adapt to a reality where verification, not generation, is the bottleneck.
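A back-of-the-envelope model makes the non-linearity easier to see. The sketch below is illustrative only: the function name `effective_speedup`, its parameters, and every number in the example are assumptions made for this illustration, not figures from any of the surveys cited here. It treats the time for one feature as writing time plus verification time, then asks what happens when AI shrinks the first while growing the second.

```python
def effective_speedup(write_hours: float, verify_hours: float,
                      generation_speedup: float, verify_growth: float) -> float:
    """Ratio of baseline time to AI-assisted time for a single feature.

    All inputs are hypothetical planning estimates:
      write_hours        - time to write the feature by hand
      verify_hours       - time to review and test hand-written code
      generation_speedup - how many times faster the code gets produced with AI
      verify_growth      - how many times longer verification takes for AI output
    """
    if generation_speedup <= 0 or verify_growth <= 0:
        raise ValueError("speedup and growth factors must be positive")
    baseline = write_hours + verify_hours
    assisted = write_hours / generation_speedup + verify_hours * verify_growth
    return baseline / assisted


# Hypothetical feature: 6 hours to write, 2 hours to verify by hand.
# Generation gets 3x faster, but verification takes 2x as long.
modest = effective_speedup(6, 2, generation_speedup=3, verify_growth=2)

# Same feature, but verification takes 3x as long: the gain disappears.
flat = effective_speedup(6, 2, generation_speedup=3, verify_growth=3)

print(f"{modest:.2f}x, {flat:.2f}x")
```

In this toy setup, a threefold generation speedup yields only about a 1.33x overall gain, and none at all once verification triples. The model is deliberately crude, but it shows why the verification term, not the generation term, ends up dominating the estimate.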

From Code Writer to Code Architect

The developer role is fundamentally changing. Your job title might still say “Software Engineer,” but your daily reality in 2026 is closer to “Code Reviewer and Systems Architect.” The AI writes syntax. You own logic, security, and architecture.

Skills that matter now: architecture design, code review expertise, security knowledge, testing strategy. Skills declining in value: syntax mastery, memorizing APIs, writing boilerplate, raw implementation speed. A new role is even emerging: the “AI Code Auditor,” someone who reviews AI-generated code full-time.

As one Hacker News commenter observed: “More and more engineers are merging changes that they don’t really understand.” That sentence should terrify anyone who cares about software quality. When the bottleneck shifts from writing to reviewing and review quality suffers under time pressure, we’re not moving faster. We’re accumulating technical debt at machine speed.

The Junior Developer Crisis

AI is fundamentally disrupting how junior developers learn and enter the industry. The bootcamp graduate who could land a junior role in 2023? In 2026, they’re competing with AI that writes better code and doesn’t need salary or benefits.

Traditional “learning by writing” is being replaced by “learning by reviewing”—a much harder task requiring deep understanding. Junior developers historically learned by writing simple features. Now they’re expected to review AI code and spot the subtle bugs seniors might miss. The career ladder that took juniors to senior roles is being eliminated.

The question nobody has answered: If junior developers can’t learn by doing, how will the next generation of senior developers emerge? This could create a generational gap in fundamental coding knowledge that the industry will pay for in 5-10 years.

What This Means Going Forward

Is the verification bottleneck temporary or permanent? That’s the billion-dollar question. Optimists believe AI will improve enough that trust catches up with capability. Pessimists argue humans will always be skeptical of black-box code generation, and the trust gap is structural, not temporary.

What the numbers show: AI accounts for 42% of all committed code in 2026, and adoption is projected to reach 85-90% by 2027. The time allocation reversal, with more hours spent reviewing than writing, isn’t an anomaly. It’s the new normal.

Organizations need to stop treating AI tools as simple productivity multipliers and start adapting to the new reality. That means adjusting sprint timelines to account for verification overhead, training developers on effective AI code review, and rethinking how we measure productivity. It means grappling with the junior developer question before we wake up to a talent crisis we should have seen coming.
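One way to picture “adjusting sprint timelines” is to pad estimates for AI-generated work with an explicit verification allowance. The following is a minimal sketch under stated assumptions: the `Task` class, the `verify_ratio` parameter, and the sample numbers are all hypothetical, and a real team would calibrate the ratio from its own review data.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Task:
    name: str
    build_hours: float   # estimated time to produce the change
    ai_generated: bool   # True if most of the change comes from an AI tool


def padded_estimate(task: Task, verify_ratio: float) -> float:
    """Build estimate plus verification time for AI-generated work.

    verify_ratio is a hypothetical team-level setting: hours of review
    and testing budgeted per hour of AI-assisted build time.
    """
    if verify_ratio < 0:
        raise ValueError("verify_ratio must not be negative")
    extra = task.build_hours * verify_ratio if task.ai_generated else 0.0
    return task.build_hours + extra


def fits_in_sprint(tasks: Iterable[Task], capacity_hours: float,
                   verify_ratio: float) -> bool:
    """Check whether the padded estimates fit within the sprint capacity."""
    total = sum(padded_estimate(t, verify_ratio) for t in tasks)
    return total <= capacity_hours


# Hypothetical backlog: one AI-generated task and one hand-written task.
backlog = [
    Task("export endpoint", build_hours=4, ai_generated=True),
    Task("schema migration", build_hours=6, ai_generated=False),
]

# With 1.5 review hours budgeted per AI build hour, the 4-hour task
# is planned as 10 hours, so the backlog needs 16 hours, not 10.
print(fits_in_sprint(backlog, capacity_hours=16, verify_ratio=1.5))
print(fits_in_sprint(backlog, capacity_hours=15, verify_ratio=1.5))
```

The point of making the ratio an explicit input is that it forces the verification cost into the plan instead of leaving it to surface as schedule slip.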

The developer who survives 2026 isn’t the one who codes best—it’s the one who architects best, validates best, and orchestrates best. That’s not the future. That’s now.