A global wave of social media bans is sweeping Western democracies in April 2026. Australia (under-16), France (under-15), Greece (under-15), Denmark (under-15), and US states like Massachusetts (under-14) are rushing to prohibit children from accessing platforms. While governments frame this as child protection, tech experts call it what it really is: “lazy” policy and an admission that governments failed to regulate tech giants properly for over a decade.

Governments Chose the Easy Way Out

After a decade of refusing to properly regulate tech platforms, governments are taking the easy path: banning users instead of fixing platforms. Australia implemented the first under-16 ban in December 2025, removing 4.7 million accounts by mid-December. France followed: Macron declared “The brains of our children are not for sale,” yet France passed a ban instead of regulating platforms. Massachusetts joined in April 2026 with an under-14 restriction.

Self-regulation failed spectacularly. Facebook’s Oversight Board, created in 2020 to serve as an “appellate review system” for content decisions, proved an “ineffective substitute for meaningful external accountability.” Governments stalled on actual regulation while tech companies violated privacy laws for years. Now governments are shifting blame to parents and platforms rather than holding companies accountable for algorithmic manipulation, privacy violations, and harmful design choices.

This sets a dangerous precedent. When governments can’t regulate companies, they ban users instead. Developers and platforms inherit the compliance burden for government failure. This is policy theater that doesn’t address root causes.

Age Verification: Pick Your Poison

Age verification is either ineffective or a privacy nightmare. The Electronic Frontier Foundation states bluntly: “There is fundamentally no tool that can verify a user’s age without inherently violating privacy.” The technical reality exposes the fraud.

Self-reporting is trivial to bypass—22-47% of kids already use fake birthdays. Australia’s implementation shows the absurdity: children reportedly “fooled” age estimation AI by drawing on facial hair. VPNs trivially circumvent geographic restrictions. Every teen with basic internet knowledge can defeat these systems in seconds.
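To make the weakness concrete, here is a minimal sketch of a self-reported birthdate gate. The function names, the example dates, and the 16-year threshold (borrowed from Australia's rule) are illustrative assumptions, not any platform's actual implementation.

```python
from datetime import date

MIN_AGE = 16  # assumption: the under-16 threshold discussed above


def age_on(birthdate: date, today: date) -> int:
    """Whole years elapsed between birthdate and today."""
    had_birthday = (today.month, today.day) >= (birthdate.month, birthdate.day)
    return today.year - birthdate.year - (0 if had_birthday else 1)


def self_report_gate(claimed_birthdate: date, today: date) -> bool:
    """Allow signup when the *claimed* birthdate meets the minimum age.

    Nothing here can tell a real birthdate from an invented one.
    """
    return age_on(claimed_birthdate, today) >= MIN_AGE


today = date(2026, 4, 15)
real_birthdate = date(2013, 6, 1)   # a 12-year-old's actual birthdate
fake_birthdate = date(2000, 1, 1)   # what the same child can type instead

honest_result = self_report_gate(real_birthdate, today)    # blocked
bypassed_result = self_report_gate(fake_birthdate, today)  # allowed
```

The gate only ever sees the value the user typed, so the check is exactly as strong as the user's honesty.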

The alternative is worse. Government ID collection creates surveillance infrastructure and breach targets. Discord’s third-party vendor 5CA was breached in 2025, exposing 70,000 government ID photos. Biometric age estimation requires facial scanning, and a face, unlike a password, cannot be changed after it leaks. Furthermore, 15 million Americans lack government-issued ID, disproportionately affecting Black, Hispanic, disabled, and immigrant communities. Age verification marginalizes the vulnerable while failing to protect children.

Developers face an impossible choice: build ineffective age gates that kids bypass instantly, or build privacy-invasive systems collecting government IDs and biometrics. Either way, the ban fails while creating new risks.

Bans Push Kids to Discord and Telegram

When mainstream platforms ban children, they don’t stop using social media—they migrate to Discord, Telegram, and foreign apps with less oversight. In March 2026, Florida’s Attorney General launched an investigation into Discord citing 19.7 million tips to the National Center for Missing and Exploited Children between March 2025 and March 2026. Discord is “increasingly used by child predators,” according to the AG’s office.

Telegram faces similar scrutiny. The UK’s Ofcom launched an investigation in April 2026 under the Online Safety Act, examining whether Telegram meets its duties to prevent child sexual abuse material. Penalties under the Act can reach £18 million or 10% of qualifying worldwide revenue, whichever is greater. End-to-end encryption, which Telegram offers in its opt-in Secret Chats, makes moderation of those conversations nearly impossible, creating dark corners where oversight disappears entirely.

One in three Australian teens surveyed said they’d look for workarounds. Discord delayed its full age verification rollout to late 2026 after privacy backlash from users. The pattern is clear: bans create a false sense of security while actually making children less safe. Parents assume kids are “protected” when they’ve just moved to platforms with weaker moderation, encryption that blocks oversight, and predators who follow them there.

Mental Health Harms Are Real, Bans Aren’t the Solution

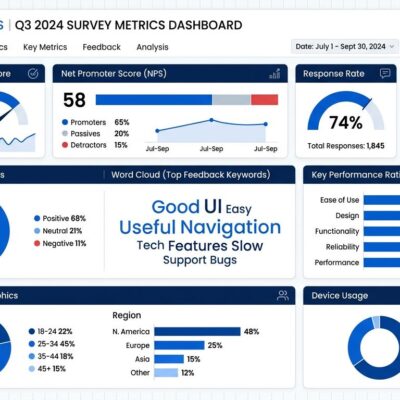

Social media does harm children’s mental health. Imperial College London research in 2026 found adolescents spending 3+ hours daily on social media are twice as likely to experience poor mental health outcomes. The primary mechanism is sleep disruption: evening social media use reduces sleep, causing lasting mental health impacts. The World Happiness Report found that the shift of social life onto social media platforms in the early 2010s was a “substantial contributor” to population-level increases in depression and anxiety.

However, experts argue blanket bans prevent digital literacy development. 94% of teens surveyed want media literacy taught in schools instead of prohibition. Children with higher digital skills are better equipped to recognize unhealthy relationships with technology, identify harmful content, block abusive users, and report exploitation. Bans don’t teach these skills—they just push usage underground.

The harms are real, but the solution isn’t banning users—it’s regulating platforms. Fix algorithmic manipulation targeting children. Limit data collection on minors. Require protective design defaults. Teach digital literacy. Bans are the lazy alternative to actual solutions.

The Real Solutions Governments Won’t Pursue

Effective regulation exists but requires political will governments lack. COPPA (US) and GDPR (EU) already restrict collecting children’s data without parental consent, but enforcement is weak. Governments could mandate algorithm transparency, require age-appropriate design defaults, limit data harvesting from minors, and invest in comprehensive digital literacy education. These target platforms, not users. They’re also harder than bans.

International experts from academia and children’s rights organizations advocate for “new strategies for digital safety,” not bans. Better platform design with protective defaults, age-appropriate AI tools, and meaningful parental controls would address root causes. A digital literacy curriculum covering platform design, algorithms, and commercial incentives would empower children to navigate online spaces safely.
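For developers wondering what “protective defaults” might look like in practice, the sketch below is one hypothetical reading. Every setting name is an assumption made up for illustration; no regulation or platform cited here defines this schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountDefaults:
    """Hypothetical per-account settings applied at signup."""
    private_profile: bool
    personalized_recommendations: bool
    targeted_ads: bool
    overnight_notifications: bool
    messages_from_strangers: bool


def defaults_for(is_minor: bool) -> AccountDefaults:
    """Return the most protective settings for minors.

    Adults get the permissive defaults; either group could still change
    individual settings later (not modeled here).
    """
    if is_minor:
        return AccountDefaults(
            private_profile=True,
            personalized_recommendations=False,
            targeted_ads=False,
            overnight_notifications=False,  # aimed at the sleep-disruption harm
            messages_from_strangers=False,
        )
    return AccountDefaults(
        private_profile=False,
        personalized_recommendations=True,
        targeted_ads=True,
        overnight_notifications=True,
        messages_from_strangers=True,
    )


minor_defaults = defaults_for(is_minor=True)
adult_defaults = defaults_for(is_minor=False)
```

The design choice is that safety does not depend on a child or parent finding the right toggle: the protective state is the starting point, and the platform carries the burden of justifying anything looser.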

Governments chose the easy path instead. Ban users, mandate impossible age verification, fine platforms for non-compliance, and declare victory. The alternative—holding companies accountable for design choices that prioritize engagement over safety—requires sustained regulatory effort and political courage. Bans let politicians claim action while avoiding confrontation with tech giants.

What This Means for Developers

Developers and platforms bear the compliance burden for government policy failure. Australia threatens fines of up to roughly A$50 million for platforms that don’t enforce bans. Meta and TikTok warned that enforcement is “difficult” and signaled they will comply minimally. Developers must choose between ineffective solutions (self-report) and privacy-invasive systems (ID/biometrics), creating new attack surfaces through ID storage and biometric databases.
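If an ID check is mandated anyway, one way to shrink the attack surface is to keep only the outcome of the check. The sketch below assumes a hypothetical flow in which a verified birthdate is available in memory for a moment; the record schema and function name are illustrative, not a real vendor API.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass(frozen=True)
class AgeCheckRecord:
    """The only data retained after a check (hypothetical schema)."""
    user_id: str
    meets_minimum_age: bool
    checked_on: date


def record_age_check(user_id: str, verified_birthdate: date,
                     minimum_age: int, today: date) -> AgeCheckRecord:
    """Reduce a verified birthdate to a yes/no outcome."""
    had_birthday = (today.month, today.day) >= (
        verified_birthdate.month, verified_birthdate.day)
    age = today.year - verified_birthdate.year - (0 if had_birthday else 1)
    # Only the outcome goes into the record; the birthdate and any ID
    # image are never written to it.
    return AgeCheckRecord(user_id, age >= minimum_age, today)


record = record_age_check("user-123", date(2005, 9, 30), 16, date(2026, 4, 15))
stored_fields = [f.name for f in fields(record)]
```

A breach of this table would leak a yes/no flag and a date rather than ID photos. It does not remove the risk at whichever party inspects the ID in the first place, so it narrows the problem rather than solving it.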

This isn’t about child safety—it’s about governments shifting blame. Platforms become scapegoats for regulatory failures. Developers inherit impossible technical requirements. Users face privacy invasion or ineffective theater. And children? They’re no safer, just on different platforms.

Key Takeaways

- Social media bans for children are governments admitting they failed to regulate tech giants for over a decade, shifting responsibility to parents and platforms instead of holding companies accountable

- Age verification either doesn’t work (22-47% of kids bypass self-reporting) or invades privacy catastrophically (government IDs, biometrics, creating breach targets like Discord’s 70,000 exposed IDs)

- Bans push kids to riskier platforms like Discord (19.7 million child safety tips to NCMEC) and Telegram (under UK investigation), making children less safe while creating false security

- Social media harms are real (3+ hours daily is linked to twice the likelihood of poor mental health outcomes), but 94% of teens want digital literacy education instead of bans, which keep them from learning healthy tech use

- Effective solutions exist but require political will: regulate platform design, mandate algorithm transparency, enforce existing privacy laws (COPPA/GDPR), invest in digital literacy—harder than bans but actually effective

Bans are policy theater. Governments failed to regulate, so they’re banning users instead. Kids won’t be safer—they’ll just be on platforms with less oversight. This is lazy policy disguised as protection.