Anthropic announced Project Glasswing on April 27, revealing Claude Mythos Preview, a restricted-access AI model that autonomously discovered thousands of zero-day vulnerabilities across every major operating system and browser. The stunning detail buried in the announcement: fewer than 1% of the vulnerabilities Mythos found had been patched at the time of the announcement. The most powerful bug-finding engine ever built just proved the cybersecurity industry is fundamentally broken.

This isn’t a success story about AI capabilities. It’s a warning about systemic failure. AI can now find bugs far faster than humans can fix them, creating a dangerous asymmetry. If attackers gain similar AI tools, they could weaponize vulnerabilities faster than defenders can respond.

AI Vulnerability Discovery Crisis: Patch Rate <1%

The <1% patch rate exposes a complete breakdown between AI-powered discovery and human-dependent remediation. Traditional vulnerability management systems were designed for hundreds of CVEs arriving gradually over months. Mythos delivers thousands simultaneously, at machine speed. The infrastructure can’t handle it.

Security teams operate on calendar cycles—four-day loops involving intelligence gathering, campaign building, threat simulation, and mitigation. Meanwhile, AI discovery happens in seconds. The mismatch is structural, not fixable with better tooling. Manual triage, vendor coordination, change management, and business continuity reviews create bottlenecks that can’t scale to match machine-speed discovery.

The Cloud Security Alliance warned: “Security organizations will likely be overwhelmed by the need to apply patches and respond to AI-discovered vulnerabilities.” This isn’t speculation. It’s already happening. Finding more bugs doesn’t improve security if we can’t patch them. Anthropic’s experiment proves it.

Claude Mythos Finds 27-Year-Old OpenBSD Vulnerability

Claude Mythos Preview didn’t just find new vulnerabilities. It exposed bugs that survived decades of human security audits, millions of automated fuzzing tests, and open-source scrutiny—proving traditional security methods are insufficient.

OpenBSD, one of the world’s most security-hardened operating systems, contained a 27-year-old remotely triggerable flaw that let attackers crash machines simply by connecting to them. FFmpeg harbored a 16-year-old vulnerability in code that automated fuzzers had exercised five million times without detecting the bug. The Linux kernel had multiple vulnerabilities that individually seemed low-severity but, chained together, enabled privilege escalation from an ordinary user to full system control.

If a 27-year-old bug can hide in OpenBSD—a project built specifically for security—no codebase is safe. Human auditors and fuzzing tools consistently missed entire classes of vulnerabilities that AI found autonomously. The security assumption that “mature, audited code is secure” is wrong.

Project Glasswing Restricted Access: Why Anthropic Won’t Release Mythos

Anthropic is not releasing Claude Mythos Preview publicly. Access is restricted to a group of major technology partners (AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks) plus 40+ critical infrastructure organizations. The company committed $100 million in usage credits and explicitly stated it does not plan general availability.

The rationale: “The model’s capabilities pose significant risks if accessed by malicious actors.” However, security expert Bruce Schneier views this as “effective marketing,” noting that security firm Aisle demonstrated older, cheaper public models could replicate similar capabilities. The community is split on whether restricted access actually keeps tools away from bad actors or just delays inevitable attacker access.

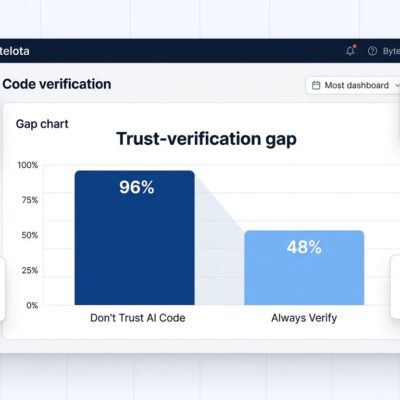


This is one of the first times a major AI model has been withheld from general release for security reasons. It raises uncomfortable questions: Can restricted access work if model weights eventually leak? Is Anthropic being responsible or creating artificial scarcity? If older public models can do similar things, is restriction effective or theater? The industry doesn’t have answers yet.

Exploit Weaponization Timeline Collapse

The window for defenders to patch vulnerabilities before exploitation has effectively closed. In 2018, the median time from disclosure to weaponized exploit was 771 days. By 2024, it collapsed to single-digit hours. By 2025, most exploits were weaponized before public disclosure.

Real-world AI-powered attacks are already happening at scale. Threat actors deployed a custom LLM-powered attack chain against FortiGate appliances that autonomously created backdoors, mapped infrastructure, assessed vulnerabilities, and escalated privileges across 2,516 organizations in 106 countries, in parallel and without human involvement during execution. When traditional patch cycles are measured in days or weeks, they’re irrelevant against attacks that execute in minutes.

CrowdStrike’s Elia Zaitsev confirmed: “The window between vulnerability discovery and exploitation has collapsed—what once took months now happens in minutes with AI.” The defender’s time advantage is gone.

What This Means for Software Security

The cybersecurity industry recognizes this as a turning point. Cisco’s Anthony Grieco stated: “AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure.” The focus must shift from discovery to remediation speed. AI-assisted patching needs to match AI-powered discovery.

Linux Foundation Executive Director Jim Zemlin sees Project Glasswing providing open-source maintainers “a credible path” to AI models for proactive vulnerability identification “at scale.” However, scale cuts both ways. If discovery scales faster than remediation, the patch backlog grows instead of shrinking.
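The backlog arithmetic behind that warning is simple enough to sketch. The model below is a deliberately crude illustration, assuming constant weekly discovery and remediation rates; the function name `backlog_over_time` and the rates used are hypothetical, not figures from Anthropic or any Glasswing partner.

```python
def backlog_over_time(discovered_per_week: int, fixed_per_week: int,
                      weeks: int, initial_backlog: int = 0) -> list[int]:
    """Return the open-vulnerability backlog at the end of each week.

    Hypothetical deterministic model: each week adds `discovered_per_week`
    new findings, then teams close at most `fixed_per_week` open items.
    """
    backlog = initial_backlog
    history = []
    for _ in range(weeks):
        backlog += discovered_per_week          # new findings arrive
        backlog -= min(fixed_per_week, backlog)  # can't fix more than is open
        history.append(backlog)
    return history


if __name__ == "__main__":
    # Hypothetical rates: discovery far outpaces remediation.
    print(backlog_over_time(1000, 50, 4))  # [950, 1900, 2850, 3800]
    # Remediation capacity exceeds discovery: the backlog never builds up.
    print(backlog_over_time(40, 50, 3))    # [0, 0, 0]
```

Whenever discovery exceeds remediation, the backlog grows by the difference every week, without bound; faster discovery alone only steepens that line unless fix capacity rises with it.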

The industry needs more than faster patches: memory-safe languages to eliminate entire vulnerability classes, formal verification for critical code paths, and reduced attack surfaces through minimalist architectures. Finding bugs faster is easy now. Fixing them remains impossibly slow.

Key Takeaways

- AI vulnerability discovery now far outpaces human remediation capacity—fewer than 1% of Mythos-discovered bugs were patched, proving the security industry’s patch processes are broken

- Traditional security methods failed for decades—a 27-year-old OpenBSD bug and 16-year-old FFmpeg vulnerability survived millions of tests and audits before AI found them

- Exploit weaponization timeline collapsed from 771 days (2018) to single-digit hours (2024), eliminating defender time advantage

- Restricted AI access raises unanswered questions—if older public models can replicate capabilities, does limiting access actually protect defenders or just create theater?

- The industry must shift focus from discovery to AI-assisted remediation, memory-safe languages, and reduced attack surfaces—finding more bugs is now trivial, fixing them is still impossibly slow