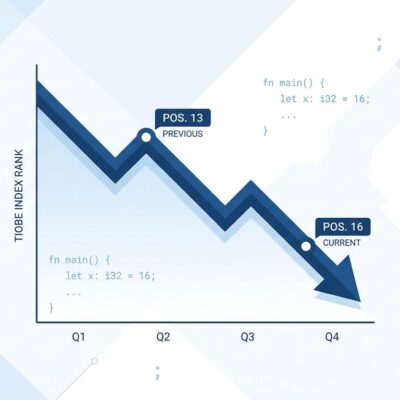

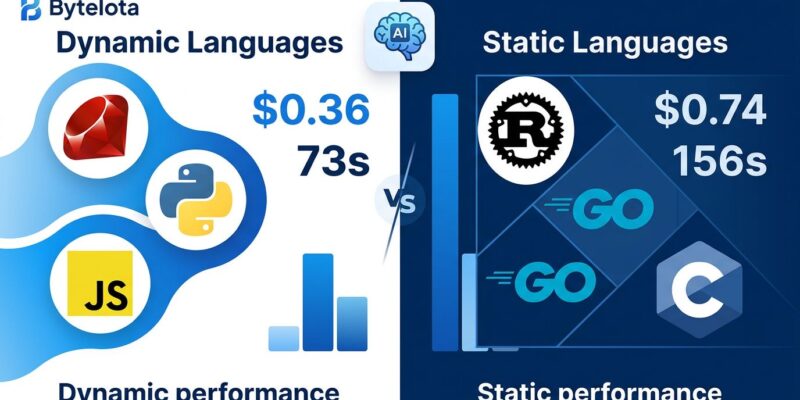

Ruby committer Yusuke Endoh ran a 600-trial benchmark in which Claude Code implemented a simplified Git in 13 programming languages. The results defy conventional developer wisdom: dynamic languages (Ruby, Python, JavaScript) beat static languages by 1.4 to 2.6x on cost, speed, and stability. Ruby averaged $0.36 per run and 73 seconds, while Go cost $0.50 and took 102 seconds, Rust $0.54 and 114 seconds, and C $0.74 and 156 seconds. Type checking imposed even harsher penalties: adding mypy to Python slowed generation by 1.6-1.7x, while Ruby with Steep type checking was 2.0-3.2x slower than plain Ruby.

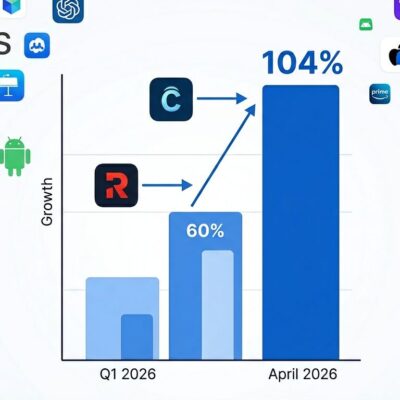

This matters because AI reportedly generates 42% of committed code today, a share projected to reach 65% by 2027. If language choice changes AI generation cost and speed by 2x, developers who ignore it are deciding on pre-AI assumptions. The conventional wisdom that “static typing saves time long-term” may be obsolete for AI-generated code.

The Benchmark Results: Dynamic Languages Dominated

Across 600 runs, Ruby ($0.36/73s), Python ($0.38/75s), and JavaScript ($0.39/81s) consistently outperformed all static languages. Go ($0.50/102s) was the best static language but was still about 39% more expensive and 40% slower than Ruby. Rust ($0.54/114s) and C ($0.74/156s) lagged even further behind. Variance tells a similar story: Ruby’s ±4.2 second standard deviation crushed Rust’s ±54.8 seconds, and predictability matters for automated workflows.
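The relative gaps can be recomputed from the averages quoted above. The following is a minimal sketch; the `BENCHMARK` table and the `overhead_vs` helper are illustrative names, not part of Endoh's benchmark tooling, and the table only holds the six languages whose figures appear in this article.

```python
# Per-run averages quoted in the article: (cost in USD, time in seconds).
BENCHMARK = {
    "Ruby": (0.36, 73),
    "Python": (0.38, 75),
    "JavaScript": (0.39, 81),
    "Go": (0.50, 102),
    "Rust": (0.54, 114),
    "C": (0.74, 156),
}


def overhead_vs(baseline: str, language: str) -> tuple[float, float]:
    """Return (extra cost %, extra time %) of `language` relative to `baseline`."""
    base_cost, base_secs = BENCHMARK[baseline]
    cost, secs = BENCHMARK[language]
    return (cost / base_cost - 1) * 100, (secs / base_secs - 1) * 100


if __name__ == "__main__":
    for lang in BENCHMARK:
        cost_pct, time_pct = overhead_vs("Ruby", lang)
        print(f"{lang:<11} +{cost_pct:5.1f}% cost  +{time_pct:5.1f}% time")
```

Keeping the baseline as a parameter makes it easy to compare against Python or JavaScript instead of Ruby.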

Only 3 failures occurred across 600 runs (2 in Rust, 1 in Haskell), all in statically typed languages. Type systems didn’t prevent generation failures; they only slowed generation down.

The differences aren’t small. At 1,000 AI generations per month, Ruby costs $360 versus $740 for C, a gap of $380 per month or about $4,560 per year per developer. Speed widens the gap: if runs execute back to back, a developer using Ruby completes 394 generations per 8-hour day versus 184 for C, a 2.1x output difference. For agencies billing by deliverable or startups racing to market, language choice directly affects profitability. More details in the original benchmark study and InfoQ’s analysis.


Why Dynamic Languages Win: The Type System Penalty

Type checking imposed severe overhead on AI generation. Python with mypy strict mode ran 1.6-1.7x slower than plain Python. Ruby with Steep type checking was 2.0-3.2x slower than plain Ruby. Similarly, TypeScript cost $0.62 versus JavaScript’s $0.39—59% more expensive despite similar code length.

Endoh attributes this to “thinking token overhead.” AI models spend more tokens reasoning about type constraints instead of implementing logic. Type systems create decision trees the model must navigate. Every type annotation, every interface, every generic constraint adds cognitive load. In fact, the model burns tokens satisfying the type checker rather than solving the problem.

This challenges the “gradual typing gives you the best of both worlds” narrative. If adding types to Python makes AI 1.6x slower, teams face a choice: generate without types (fast) or with types (slow). The sweet spot isn’t TypeScript—it’s generating dynamic code and adding types during human review, avoiding the generation penalty while gaining type safety when it counts. Research on token-efficient programming languages corroborates these findings.

The Business Implications at Scale

At enterprise scale, language choice has a significant business impact. Ten thousand AI generations per month cost $3,600 in Ruby versus $7,400 in C, a $3,800 monthly difference per developer, or $45,600 per year. For a 10-developer team, that’s $38,000 per month in API costs saved by choosing Ruby over C.
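The cost arithmetic is easy to get wrong when monthly and annual figures are mixed, so it can help to make the run count explicit. This is a rough sketch using the per-run averages quoted in this article; `cost_for_runs` and `gap_for_runs` are hypothetical helper names, and real API pricing will vary with model and task.

```python
# Average cost per run in USD, as quoted in the article.
COST_PER_RUN = {"Ruby": 0.36, "Go": 0.50, "Rust": 0.54, "C": 0.74}


def cost_for_runs(language: str, runs: int) -> float:
    """Total cost in USD of `runs` generations in `language`."""
    return round(COST_PER_RUN[language] * runs, 2)


def gap_for_runs(cheaper: str, pricier: str, runs: int, developers: int = 1) -> float:
    """Extra USD spent by `developers` people using `pricier` instead of `cheaper`."""
    per_dev = cost_for_runs(pricier, runs) - cost_for_runs(cheaper, runs)
    return round(per_dev * developers, 2)


if __name__ == "__main__":
    runs = 10_000  # the period these runs span (month or year) is up to the caller
    print(cost_for_runs("Ruby", runs), cost_for_runs("C", runs))
    print(gap_for_runs("Ruby", "C", runs))
    print(gap_for_runs("Ruby", "C", runs, developers=10))
```

The functions deliberately take a plain run count. Whatever period those 10,000 runs cover, the Ruby versus C gap is $3,800 per developer for that period; if the volume is monthly, multiply by 12 for the annual figure.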

Time differences compound productivity gaps. An 8-hour workday equals 28,800 seconds. Ruby at 73 seconds per run yields 394 generations daily. Go at 102 seconds drops to 282 generations, 28% fewer. C at 156 seconds falls to 184 generations, 53% fewer. Developers using C for AI generation therefore complete roughly half as many generations as Ruby developers in the same timeframe, assuming runs execute back to back.
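The daily throughput figures follow from integer division of the workday by the average run time. The sketch below reproduces them; it assumes runs execute back to back with no review or idle time, so the results are an upper bound rather than a measured output. The function names are illustrative.

```python
SECONDS_PER_WORKDAY = 8 * 60 * 60  # 28,800 seconds


def runs_per_day(seconds_per_run: int) -> int:
    """Whole generations that fit in one workday of back-to-back runs."""
    return SECONDS_PER_WORKDAY // seconds_per_run


def percent_fewer(baseline_runs: int, runs: int) -> float:
    """How many percent fewer runs than the baseline."""
    return (1 - runs / baseline_runs) * 100


if __name__ == "__main__":
    ruby = runs_per_day(73)
    for name, secs in [("Ruby", 73), ("Go", 102), ("C", 156)]:
        n = runs_per_day(secs)
        print(f"{name}: {n} runs/day, {percent_fewer(ruby, n):.0f}% fewer than Ruby")
```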

These aren’t theoretical numbers. Consultancies maximizing margin, agencies billing hourly, and startups validating MVPs all face the same math: faster, cheaper AI generation means higher profit or faster iteration. Language choice is now a business decision, not just a technical preference. The real cost of AI coding in 2026 makes this clear.

The Limitations Nobody Should Ignore

Endoh acknowledges critical limitations: the benchmark tests 200-line prototypes, not production codebases. It creates greenfield projects, not modifications of existing code. Moreover, it excludes scenarios where static typing shines—refactoring 100,000-line systems, onboarding new team members, catching bugs during compilation instead of runtime.

“The task is too small. Static typing should shine at larger scales,” Endoh notes. He’s right. Static types provide navigation (jump to definition works), validation (compiler catches mistakes before deployment), and documentation (types self-document contracts). These advantages emerge when codebases grow beyond prototype size.

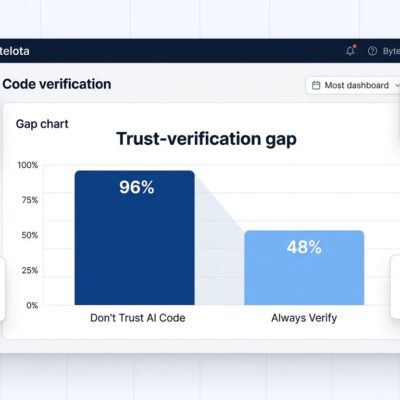

Furthermore, GitHub and YUV.AI research argues types become MORE important with AI, not less. A 2025 academic study found 94% of LLM-generated compilation errors were type-check failures. Their argument: AI-generated code needs external validation precisely because humans didn’t write it. Types serve as specification for code you didn’t author.

This creates tension: Endoh’s benchmark shows types slow generation by 1.6-3.2x, but the study cited above found that 94% of LLM-generated compilation errors were type-check failures, exactly the class of error a type checker catches. The resolution? Types matter for VALIDATION (catch errors), but slow GENERATION (AI reasoning overhead). Optimal workflow: generate without types (fast), validate with types (safe). Speed plus safety.
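As a small illustration of that workflow, here is the same function twice: first as an agent might emit it without annotations, then after a reviewer has added type hints. The function itself is a made-up example, not taken from the benchmark, and the behavior of both versions is identical.

```python
# Step 1: generated without annotations (hypothetical agent output).
def count_by_extension(paths):
    counts = {}
    for path in paths:
        # Files without a dot are grouped under the empty extension.
        ext = path.rsplit(".", 1)[-1] if "." in path else ""
        counts[ext] = counts.get(ext, 0) + 1
    return counts


# Step 2: the same logic after review, annotated so a checker can validate callers.
def count_by_extension_typed(paths: list[str]) -> dict[str, int]:
    counts: dict[str, int] = {}
    for path in paths:
        ext = path.rsplit(".", 1)[-1] if "." in path else ""
        counts[ext] = counts.get(ext, 0) + 1
    return counts


if __name__ == "__main__":
    sample = ["main.py", "utils.py", "README"]
    print(count_by_extension(sample))
    print(count_by_extension_typed(sample))
```

The annotated version can then be checked with a tool such as mypy in strict mode. Whether adding types after the fact recovers all the safety of generating with them is an open question the benchmark does not address.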


The Decision Framework: When to Choose Each

Language choice now has dual criteria: human readability plus AI efficiency. The decision depends on three factors.

First, AI authorship ratio. If AI generates more than 50% of your codebase, dynamic languages win on speed and cost. However, if humans write more than 70%, static typing’s long-term maintenance benefits outweigh generation overhead. Measure what percentage of your commits are AI-generated versus human-written.

Second, codebase scale. Prototypes and MVPs favor dynamic languages—fast iteration matters more than type safety. Meanwhile, codebases exceeding 50,000 lines favor static types—refactoring tools, compile-time validation, and team onboarding justify the generation penalty.

Third, bottleneck analysis. If AI generation takes 73 seconds but human review takes 30 minutes, optimize for easy review: types help. Conversely, if generation takes 156 seconds but review takes 5 minutes, optimize for fast generation: dynamic wins. Profile your workflow before choosing.
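The three factors above can be sketched as small helpers. The thresholds come straight from the text; the function names, the `is_prototype` flag, and the "unclear" result for ranges the article does not cover are assumptions made for illustration. The bottleneck helper only reports the share of cycle time spent generating and leaves the judgment to the reader.

```python
def lean_from_authorship(ai_share: float) -> str:
    """ai_share is the fraction of commits that are AI-generated (0.0 to 1.0)."""
    if ai_share > 0.50:
        return "dynamic"
    if ai_share < 0.30:  # humans write more than 70%
        return "static"
    return "unclear"  # assumption: no guidance is given for 30-50%


def lean_from_scale(lines_of_code: int, is_prototype: bool) -> str:
    if lines_of_code > 50_000:
        return "static"
    if is_prototype:
        return "dynamic"
    return "unclear"  # assumption: mid-sized, non-prototype code is not covered


def generation_share(generation_s: float, review_s: float) -> float:
    """Fraction of one generate-then-review cycle spent on generation."""
    return generation_s / (generation_s + review_s)


if __name__ == "__main__":
    print(lean_from_authorship(0.6), lean_from_scale(2_000, is_prototype=True))
    print(f"{generation_share(73, 30 * 60):.1%}")   # review-bound example
    print(f"{generation_share(156, 5 * 60):.1%}")   # generation matters more here
```

In the two scenarios from the text, generation is about 4% of the cycle in the first and about 34% in the second, which is why the advice flips between them.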

The hybrid approach works best for most teams: use dynamic languages for AI-generated scaffolding, boilerplate, and tests. Use static languages for core business logic, performance-critical paths, and stable APIs. Generate without types to avoid the 1.6-3.2x penalty, then add types during code review to gain safety where it matters.

Key Takeaways

- Dynamic languages (Ruby, Python, JavaScript) beat static languages by 1.4-2.6x on AI generation cost and speed across 600 rigorous benchmark runs—$0.36/73s versus $0.50-$0.74/102-156s.

- Type checking imposes severe AI generation penalties: mypy slows Python by 1.6-1.7x, Steep slows Ruby by 2.0-3.2x, TypeScript costs 59% more than JavaScript—AI models burn thinking tokens satisfying type constraints instead of solving problems.

- At enterprise scale (10,000 generations/month), language choice drives a $3,800 monthly cost difference per developer (about $45,600 per year) and a 2.1x productivity gap: a business impact, not just a technical preference.

- Benchmark limitations matter: 200-line prototypes don’t reflect 100,000-line production systems where static typing provides refactoring, navigation, and validation advantages at scale.

- The resolution: generate without types for speed (dynamic languages), validate with types for safety (add during review)—optimal workflow balances both, matching language to AI authorship ratio and codebase scale.

The question isn’t “dynamic versus static” anymore. It’s “what’s your AI authorship ratio?” Measure your workflow, choose accordingly, and stop optimizing for pre-AI assumptions.