Cloud waste has reversed a five-year decline, hitting 29% in 2026—approximately $262 billion of the $905 billion cloud market wasted on overprovisioned resources, idle GPUs, and underutilized commitments. The culprit isn’t organizational neglect: 71% of enterprises now have Cloud Centers of Excellence, 63% run dedicated FinOps teams, and shift-left initiatives have embedded cost guardrails into CI/CD pipelines. The problem is AI workloads—22% of cloud spend—which break every traditional FinOps assumption about predictable, linear resource consumption.

AI Workloads Break Traditional FinOps Assumptions

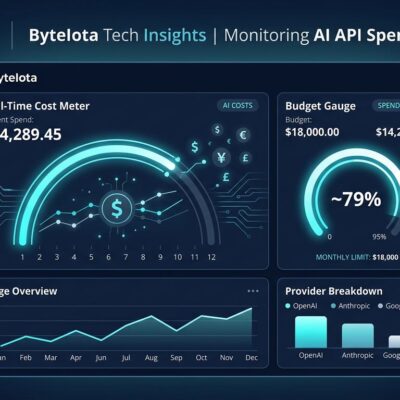

AI and machine learning workloads now represent 22% of cloud costs, but they don’t behave like traditional SaaS infrastructure. Conventional applications follow predictable usage curves that FinOps teams can model and optimize. AI training runs spike unpredictably, and inference workloads scale non-linearly. One in five organizations misses its AI spend forecasts by more than 50%.

The commitment discount strategies that worked for steady-state workloads (Reserved Instances and Savings Plans, both designed around consistent capacity) fail for bursty AI training jobs that might consume hundreds of GPUs for three days and then sit idle for a week. Meanwhile, every surveyed organization now reports using GenAI, with 45% reporting extensive use (up from 36% last year). FinOps teams are tracking costs at token-level and GPU-level granularity just to understand where the money is going, let alone control it.
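To make the mismatch concrete, here is a minimal sketch of the break-even arithmetic behind a commitment discount. It assumes a simplified model in which a commitment bills every hour at a discounted rate while on-demand bills only the busy hours. The 40% discount and the $3.00 hourly rate are hypothetical illustration values, not provider pricing.

```python
def breakeven_utilization(discount: float) -> float:
    """Utilization above which a commitment beats on-demand pricing.

    Simplified model (an assumption): the commitment bills every hour at
    (1 - discount) * rate, while on-demand bills only the busy hours.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return 1.0 - discount


def compare_costs(rate: float, discount: float, busy_hours: float, total_hours: float):
    """Return (on_demand_cost, committed_cost) for one GPU over the period."""
    on_demand = rate * busy_hours
    committed = rate * (1.0 - discount) * total_hours
    return on_demand, committed


if __name__ == "__main__":
    # Bursty pattern from the text: three days busy, seven days idle.
    busy, total = 3 * 24, 10 * 24
    on_demand, committed = compare_costs(rate=3.00, discount=0.40,
                                         busy_hours=busy, total_hours=total)
    print(f"utilization: {busy / total:.0%}")
    print(f"break-even utilization: {breakeven_utilization(0.40):.0%}")
    print(f"on-demand: ${on_demand:.2f}  committed: ${committed:.2f}")
```

Under these assumptions the job runs at 30% utilization against a 60% break-even, so the commitment costs roughly twice as much as paying on demand (about $432 versus $216 per GPU over the ten days).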

GPU Waste Reaches 30-50% From Idle Resources

The GPU waste problem is staggering and specific: 30-50% of GPU spending is wasted on resources left running during debugging sessions, meetings, or overnight. H100 rental costs have stabilized around $2.10-$3.50 per hour, but when training frontier models requires thousands of GPU-hours, even small inefficiencies compound into massive losses.
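A rough calculation shows how quickly idle time compounds. The sketch below uses the rate and waste ranges quoted above. The cluster size (64 GPUs) and the 30-day billing window are hypothetical values chosen only for illustration.

```python
def wasted_spend(gpu_count: int, hourly_rate: float, hours: float,
                 idle_fraction: float) -> float:
    """Dollars spent on GPU-hours that did no useful work."""
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    return gpu_count * hourly_rate * hours * idle_fraction


if __name__ == "__main__":
    GPUS = 64          # hypothetical cluster size
    HOURS = 30 * 24    # hypothetical 30-day billing window

    # Low end: $2.10/hour with 30% idle. High end: $3.50/hour with 50% idle.
    low = wasted_spend(GPUS, 2.10, HOURS, 0.30)
    high = wasted_spend(GPUS, 3.50, HOURS, 0.50)
    print(f"monthly waste: ${low:,.0f} to ${high:,.0f}")
```

For this hypothetical cluster, the waste lands between roughly $29,000 and $81,000 per month.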

Teams that tackle GPU waste directly report 40-70% cost reductions by right-sizing GPU tiers and implementing automated idle detection. The low-hanging fruit is obvious—shut down GPUs that aren’t actively training—but organizations struggle to enforce it. With HBM prices forecast to rise another 30-40% in 2026, every wasted GPU-hour will cost even more.

Shift-Left FinOps Promised Control, Delivered Complexity

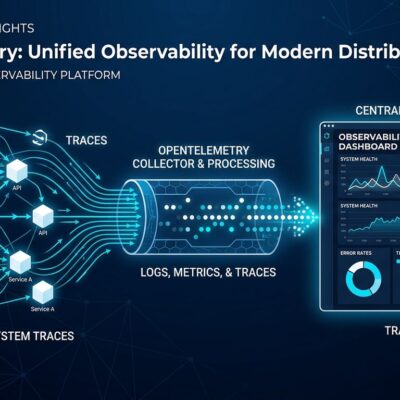

The shift-left movement promised to solve cloud cost problems by bringing cost awareness to developers early in the lifecycle. Infrastructure-as-Code templates now include built-in cost guardrails. Pull requests trigger automatic cost evaluations. CI/CD pipelines preview cost impacts before deployment. Developers get pre-commit, pre-merge, and pre-deploy policy checks.

Despite widespread adoption of these practices, cloud waste just hit its highest level in five years. Shift-left added complexity—more tools, more alerts, more policy checks—without reducing waste. Developers experience alert fatigue and route around guardrails designed for predictable workloads when they’re trying to train a model that might need 10x the resources tomorrow. The philosophy was sound; the implementation assumed a world where AI wasn’t rewriting the rules.

More Teams Involved, Same Waste Problem

Organizations responded to rising costs by spreading responsibility. Software Asset Management (SAM) team involvement jumped from 6% to 15%. Business unit involvement increased from 20% to 25%. Seventy-one percent of enterprises have Cloud Centers of Excellence, and 63% run dedicated FinOps teams.

More cooks in the kitchen haven’t improved the outcome. Cloud waste is rising despite cross-functional engagement, and 84% of organizations report struggling to manage cloud spend. The data suggests that diffused accountability creates gaps where no single team owns the problem. When SAM, FinOps, business units, and engineering all share responsibility, critical waste, like those idle GPUs burning $3/hour, falls through the cracks.

Commitment Discounts Remain Underutilized

The most basic FinOps lever, commitment-based discounts such as Reserved Instances and Savings Plans, is still underutilized. For each cloud provider, fewer than half of organizations use any given commitment discount program, despite a modest uptick in adoption. This is low-hanging fruit that organizations haven’t picked even as they invest in sophisticated shift-left tooling and cross-functional governance structures.

What Actually Works: Real-Time Control Over Theoretical Guardrails

Organizations achieving cost control for AI workloads share common practices. They implement real-time GPU tracking with automated idle shutdown, eliminating the 30-50% waste from forgotten resources. They right-size GPU tiers based on actual workload requirements rather than defaulting to premium hardware. They adopt weekly or monthly forecasting cadences for fast-moving AI projects instead of quarterly planning cycles. They track costs at token-level and GPU-level granularity and set budget thresholds that trigger alerts before spending spirals.
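The idle-shutdown practice can be sketched as a simple rule over utilization readings. This is an illustrative sketch, not a production agent: it assumes one reading per minute per GPU, and the 5% threshold, the 30-minute limit, and the function names are all hypothetical. Collecting the readings and calling the cloud provider to stop the instance are left out.

```python
from typing import Sequence


def trailing_idle_readings(utilization: Sequence[float],
                           threshold: float = 5.0) -> int:
    """Count consecutive readings at the end of the series below threshold."""
    count = 0
    for value in reversed(utilization):
        if value >= threshold:
            break  # the most recent busy reading ends the idle run
        count += 1
    return count


def should_shut_down(utilization: Sequence[float],
                     threshold: float = 5.0,
                     idle_limit: int = 30) -> bool:
    """True when the GPU has been idle for at least idle_limit readings.

    With one reading per minute (an assumption), idle_limit=30 means
    thirty consecutive idle minutes.
    """
    return trailing_idle_readings(utilization, threshold) >= idle_limit


if __name__ == "__main__":
    training = [92.0] * 60                   # busy for the whole hour
    forgotten = [92.0] * 20 + [0.5] * 40     # job ended 40 minutes ago
    print(should_shut_down(training))
    print(should_shut_down(forgotten))
```

Looking only at the trailing run, rather than average utilization, avoids shutting down a GPU that was idle earlier but has just picked up a new job.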

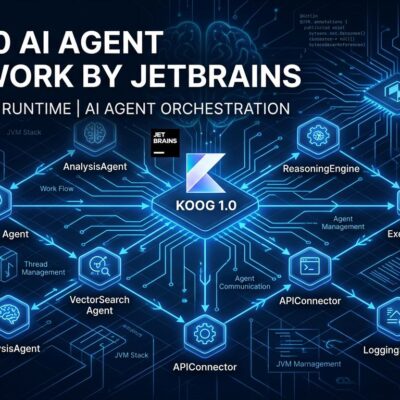

These approaches accept AI’s unpredictability rather than trying to force it into traditional FinOps frameworks. Automated controls—shutdowns, budget limits, right-sizing recommendations—work better than manual policy enforcement because they operate at the speed and scale AI workloads demand.
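One way to express a budget threshold as an automated control is to fire an alert only when spend newly crosses a threshold, so repeated checks do not re-alert. The sketch below is one possible shape for that rule. The threshold fractions (50%, 80%, 100%) and the function name are hypothetical.

```python
from typing import List, Sequence


def newly_crossed(previous_spend: float, current_spend: float, budget: float,
                  thresholds: Sequence[float] = (0.5, 0.8, 1.0)) -> List[float]:
    """Return threshold fractions crossed since the previous check.

    A threshold counts as crossed when previous_spend was below it and
    current_spend is at or above it.
    """
    if budget <= 0:
        raise ValueError("budget must be positive")
    crossed = []
    for fraction in sorted(thresholds):
        limit = budget * fraction
        if previous_spend < limit <= current_spend:
            crossed.append(fraction)
    return crossed


if __name__ == "__main__":
    # A training run pushes spend from $4,000 to $8,500 between two checks.
    for fraction in newly_crossed(4_000, 8_500, budget=10_000):
        print(f"ALERT: spend passed {fraction:.0%} of budget")
```

Because AI spend can jump past several thresholds between checks, the function returns every threshold crossed in the interval rather than only the highest one.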

The Core Problem: FinOps Built for the Wrong Workload

Traditional FinOps assumes workloads are predictable, capacity planning is possible, and usage trends follow consistent patterns. AI training and inference break all three assumptions. The five-year decline in cloud waste represented FinOps maturity for traditional workloads. The 2026 reversal represents AI exposing the limits of that framework.

Shift-left philosophy remains sound—engineers should understand cost implications of their infrastructure decisions. But the tools were designed for steady-state applications, not workloads where next week’s compute needs might be 10x or 0.1x this week’s. Organizations need AI-specific FinOps strategies: granular real-time tracking, automated controls that respond faster than humans can, and acceptance that forecasting AI costs means building in significant variance rather than optimizing for precision.
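Building variance into a forecast can be as simple as reporting a range instead of a single number. The sketch below derives a band from recent weekly spend using the mean and sample standard deviation. The spend history, the multiplier `k`, and the function name are hypothetical, and a real forecast would likely use a richer model.

```python
import statistics
from typing import Sequence, Tuple


def forecast_band(weekly_spend: Sequence[float],
                  k: float = 2.0) -> Tuple[float, float, float]:
    """Return (low, expected, high) for next week's spend.

    expected is the historical mean; the band is k sample standard
    deviations wide on each side, floored at zero.
    """
    if len(weekly_spend) < 2:
        raise ValueError("need at least two weeks of history")
    expected = statistics.mean(weekly_spend)
    spread = k * statistics.stdev(weekly_spend)
    return max(0.0, expected - spread), expected, expected + spread


if __name__ == "__main__":
    # Hypothetical history with the 10x swings typical of training bursts.
    history = [10_000, 40_000, 12_000, 90_000, 8_000, 20_000]
    low, expected, high = forecast_band(history)
    print(f"plan for ${low:,.0f} to ${high:,.0f} (expected ${expected:,.0f})")
```

A wide band is the point here: it tells budget owners how much headroom the workload needs instead of implying a precision the data cannot support.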

The 29% waste rate won’t decline until FinOps evolves past its linear-workload assumptions and builds frameworks that match AI’s reality.