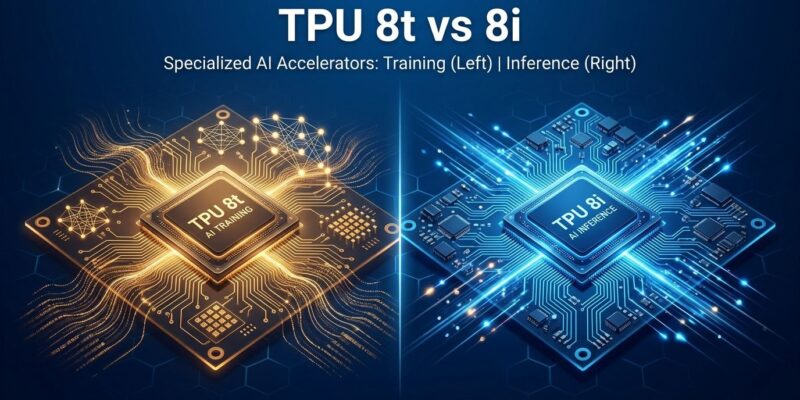

Google unveiled its eighth-generation Tensor Processing Units at Cloud Next 2026, splitting them into two distinct chips: TPU 8t for massive model training and TPU 8i for lightning-fast inference. The move breaks with NVIDIA’s generalist approach and signals a broader shift toward specialized AI hardware—choosing the right chip for the workload rather than paying for performance you don’t need.

The Strategic Split: Why Google Divided Its TPUs

This is Google’s first time splitting TPUs by workload. TPU 8t handles training—pre-training foundation models, generating embeddings, and reinforcement learning. TPU 8i handles inference—serving deployed models, running AI agents, and powering real-time applications. NVIDIA’s H100 and H200 chips do both, which sounds convenient until you’re paying premium prices for training hardware you never use during inference.

Google isn’t alone in betting on specialization. Microsoft launched Maia 200, an inference-only chip, in January 2026. AWS offers Trainium for training. The pattern is clear: hyperscalers are abandoning one-size-fits-all chips in favor of workload-specific silicon.

Performance Numbers That Matter

The TPU 8t delivers 2.7x the performance-per-dollar of Google’s seventh-generation Ironwood TPU for large-scale training. A single superpod packs 9,600 chips, yielding 121 exaflops and 2 petabytes of shared memory.

The TPU 8i claims 80% better performance-per-dollar for low-latency inference, especially for Mixture-of-Experts (MoE) models, an architecture that frontier models such as GPT-4 are widely reported, though not officially confirmed, to use. It triples on-chip SRAM compared to Ironwood, cutting memory access latency. Pods of 1,152 chips deliver 11.6 FP8 exaflops.
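To put the pod-level figures in per-chip terms, here is a rough back-of-envelope sketch. It assumes compute and memory are spread evenly across chips and uses decimal (SI) units. The helper name `per_chip` is illustrative. Note that the article does not state the numeric precision behind the 121-exaflop figure, so the two per-chip compute numbers may not be directly comparable.

```python
# Back-of-envelope per-chip figures derived from the pod-level numbers quoted above.
# Assumption: resources are divided evenly across chips; units are decimal (SI).

EXA = 10**18
PETA = 10**15
GIGA = 10**9


def per_chip(total: float, chips: int) -> float:
    """Divide a pod-level total evenly across its chips."""
    if chips <= 0:
        raise ValueError("chips must be positive")
    return total / chips


# TPU 8t superpod: 9,600 chips, 121 exaflops, 2 PB of shared memory (article figures).
tpu8t_pflops = per_chip(121 * EXA, 9_600) / PETA
tpu8t_mem_gb = per_chip(2 * PETA, 9_600) / GIGA

# TPU 8i pod: 1,152 chips, 11.6 FP8 exaflops (article figures).
tpu8i_pflops = per_chip(11.6 * EXA, 1_152) / PETA

print(f"TPU 8t: ~{tpu8t_pflops:.1f} PFLOPS and ~{tpu8t_mem_gb:.0f} GB of pod memory per chip")
print(f"TPU 8i: ~{tpu8i_pflops:.1f} FP8 PFLOPS per chip")
```

These are derived estimates, not published per-chip specifications.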

Both chips connect through Google’s Virgo Network, delivering 47 petabits per second of non-blocking bandwidth. Virgo links 134,000 TPU 8t chips in a single fabric with 4x the bandwidth per accelerator versus the previous generation.

Agentic AI Drives Inference Demand

Google designed these chips for the “agentic era”—AI systems that reason through multi-step workflows rather than answer single questions. Traditional AI inference is simple: one user query, one model call, one response. Agentic AI flips this. A single user task might trigger 10, 20, or 50 inference calls as the agent plans, retrieves data, reasons, and iterates.

This changes the economics. Training is largely a one-time cost per model, and inference was historically cheap per request because one trained model serves millions of requests. When every agent task requires dozens of inference calls, however, inference costs grow in proportion. TPU 8i’s 80% performance-per-dollar improvement applies to every one of those calls, so the absolute savings per task scale with the number of calls.
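The scaling argument can be sketched with a simple linear cost model. This is a minimal illustration, assuming every call costs the same and that an 80% performance-per-dollar gain means each call costs 1/1.8 of the baseline. The function name and the `BASELINE_COST_PER_CALL` value are hypothetical, not real pricing.

```python
def cost_per_task(calls_per_task: int, cost_per_call: float,
                  perf_per_dollar_gain: float = 0.0) -> float:
    """Cost of one agent task under a linear model.

    perf_per_dollar_gain=0.8 means 80% better performance-per-dollar,
    so each call costs 1 / 1.8 of the baseline.
    """
    if calls_per_task < 0 or cost_per_call < 0 or perf_per_dollar_gain <= -1:
        raise ValueError("invalid cost model inputs")
    return calls_per_task * cost_per_call / (1.0 + perf_per_dollar_gain)


BASELINE_COST_PER_CALL = 0.002  # hypothetical dollars per inference call

for calls in (1, 10, 20, 50):
    before = cost_per_task(calls, BASELINE_COST_PER_CALL)
    after = cost_per_task(calls, BASELINE_COST_PER_CALL, perf_per_dollar_gain=0.8)
    print(f"{calls:>2} calls: ${before:.4f} -> ${after:.4f} (saves ${before - after:.4f})")
```

Under this model the percentage saved stays constant at about 44%, while the dollars saved per task grow linearly with the number of calls.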

Competition: NVIDIA, Microsoft, AWS

NVIDIA’s H100 and H200 remain the default for most AI builders, partly because of CUDA’s ecosystem lock-in and partly because a single GPU handles both training and inference. That flexibility comes at a cost: you pay for training performance even when you’re only serving models.

Microsoft’s Maia 200, announced in January 2026, delivers 3x the FP4 performance of AWS Trainium3 and already powers OpenAI’s GPT-5.2 models. Meanwhile, AWS Trainium targets training, competing with TPU 8t. The hyperscalers are converging on specialization.

What Developers Should Know

Both TPU 8t and TPU 8i support PyTorch (via TorchTPU, currently in preview), JAX (native and optimized), and vLLM for fast inference. General availability is slated for later in 2026, with early access available now by request.

Use TPU 8t if you’re training foundation models, generating embeddings at scale, or running reinforcement learning. Use TPU 8i if you’re serving AI agents, running low-latency LLM inference, or handling high-concurrency production workloads. Stay on NVIDIA if your code is tightly coupled to CUDA.
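The guidance above can be written down as a small lookup, which some teams may find handy in capacity-planning scripts. This is only a sketch of the article's rule of thumb; the `Workload` enum and `recommend_chip` function are hypothetical names, not part of any Google or NVIDIA API.

```python
from enum import Enum


class Workload(Enum):
    PRETRAINING = "pre-training foundation models"
    EMBEDDINGS = "generating embeddings at scale"
    REINFORCEMENT_LEARNING = "reinforcement learning"
    AGENT_SERVING = "serving AI agents"
    LOW_LATENCY_INFERENCE = "low-latency LLM inference"
    HIGH_CONCURRENCY_SERVING = "high-concurrency production serving"


# Workloads the article assigns to the training chip; everything else is inference.
TRAINING_WORKLOADS = {
    Workload.PRETRAINING,
    Workload.EMBEDDINGS,
    Workload.REINFORCEMENT_LEARNING,
}


def recommend_chip(workload: Workload, cuda_coupled: bool = False) -> str:
    """Return the article's suggested hardware for a workload."""
    if cuda_coupled:
        # Code tightly coupled to CUDA stays on NVIDIA regardless of workload.
        return "NVIDIA GPU"
    return "TPU 8t" if workload in TRAINING_WORKLOADS else "TPU 8i"


print(recommend_chip(Workload.AGENT_SERVING))
print(recommend_chip(Workload.PRETRAINING, cuda_coupled=True))
```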

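The cost optimization is real, though the headline multipliers need translating. For a fixed amount of work, 2.7x the performance-per-dollar means roughly 63% lower training cost, and 80% better performance-per-dollar means roughly 44% lower inference cost, each relative to the baseline it was measured against. Depending on your mix of training and inference, splitting the two across specialized chips could cut your AI infrastructure bill by around half.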
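A quick way to check the "cut in half" estimate is to convert each performance-per-dollar multiplier into a cost reduction and blend the two by workload share. The sketch below assumes the quoted multipliers carry over fully to your workloads and that your baseline matches the one Google measured against. Both function names are illustrative.

```python
def cost_reduction(perf_per_dollar_multiplier: float) -> float:
    """Fraction of spend saved for the same work at a given perf-per-dollar multiplier."""
    if perf_per_dollar_multiplier <= 0:
        raise ValueError("multiplier must be positive")
    return 1.0 - 1.0 / perf_per_dollar_multiplier


def blended_reduction(training_share: float,
                      training_multiplier: float = 2.7,
                      inference_multiplier: float = 1.8) -> float:
    """Overall saving when training_share of baseline spend is training, the rest inference."""
    if not 0.0 <= training_share <= 1.0:
        raise ValueError("training_share must be between 0 and 1")
    inference_share = 1.0 - training_share
    return (training_share * cost_reduction(training_multiplier)
            + inference_share * cost_reduction(inference_multiplier))


for share in (0.0, 0.3, 0.5, 1.0):
    print(f"training share {share:.0%}: bill falls by {blended_reduction(share):.1%}")
```

Under these assumptions the overall bill halves only when training accounts for about 30% or more of baseline spend. An inference-only shop would see closer to a 44% reduction.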

Google’s split isn’t an isolated experiment. Microsoft went inference-only with Maia 200, AWS doubled down on training with Trainium, and even NVIDIA is facing pressure to specialize as cloud providers optimize for specific workloads. The era of general-purpose AI chips is ending. For developers, infrastructure decisions have become more granular: choose chips based on training versus inference needs, or overpay for unused performance.