Mercor, a $10 billion AI staffing platform connecting contractors with OpenAI, Google DeepMind, and Meta, confirmed on March 31, 2026 that attackers stole personal data from over 40,000 contractors, including Social Security numbers, passports, and voice recordings collected during AI-powered interview screenings. The breach came through LiteLLM, a Python library that manages API keys for AI services. The malicious releases were available for only about three hours, but the package is downloaded 3.4 million times a day. Lapsus$ and TeamPCP claimed the 4-terabyte haul, and five federal lawsuits hit Mercor within the first week of April.

Unlike passwords or credit cards that can be replaced, biometric voice and facial data is permanent. The 40,000 contractors now face lifetime identity theft risk through deepfake fraud.

Biometric Data Can’t Be Changed—Permanent Identity Theft Risk

When Target’s 2013 breach exposed 40 million credit cards, all cards were replaced within weeks. When the 2015 OPM breach exposed 5.6 million federal employee fingerprints, those individuals remain vulnerable indefinitely. Biometric data is immutable—you can change your password, but you can’t change your voice.

Mercor’s stolen cache includes everything needed for permanent identity theft. The platform collected ~20-minute AI-powered video interviews containing voice recordings, facial geometry scans, and full transcripts, alongside Social Security numbers, passports, and birthdates. The risk is not hypothetical: in 2020, attackers stole $35 million by using AI to replicate a company director’s voice and deceive a bank manager. With high-quality voice samples from 40,000+ contractors working for frontier AI labs, attackers now hold the raw material for deepfake impersonation that can bypass phone-based authentication, fool employers and banks, and support social engineering campaigns that traditional fraud detection can’t catch.

Supply Chain Attack Exposed “Thousands of Companies” via LiteLLM

The breach started when attackers compromised LiteLLM’s CI/CD pipeline on March 24, 2026 at 10:39 UTC. Within 13 minutes, they published malicious versions 1.82.7 and 1.82.8 to PyPI. Researcher Callum McMahon discovered the compromise when his system crashed at 11:48 UTC, and PyPI quarantined the packages by 13:38 UTC, for roughly 3 hours of total exposure. Despite that relatively fast response, thousands of companies were compromised because LiteLLM sees 3.4 million downloads daily.
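For readers who want to check their own environments, the following is a minimal sketch that compares the installed `litellm` version against the two releases named above. The version list comes only from this article, and the helper names `is_compromised` and `check_installed` are illustrative, not part of any official tooling.

```python
from importlib import metadata

# Versions this article names as malicious. Treat the list as the article's
# claim, not as an authoritative advisory.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    """Return True if the version string is one of the flagged releases."""
    return version.strip() in COMPROMISED_VERSIONS


def check_installed(package: str = "litellm") -> str:
    """Describe the status of the package in the current environment."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package}: not installed"
    status = "FLAGGED" if is_compromised(installed) else "not flagged"
    return f"{package} {installed}: {status}"


if __name__ == "__main__":
    print(check_installed())
```

A "not flagged" result only describes what is installed now. It does not show that a flagged release was never installed earlier, so credentials that were reachable during the exposure window may still warrant rotation.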

LiteLLM provides a unified interface to 100+ AI providers—OpenAI, Anthropic, Vertex AI, Bedrock, Hugging Face, Replicate, and more. Consequently, a typical deployment manages more API keys than almost any other service in an infrastructure. The malware targeted exactly that: SSH keys, cloud credentials for AWS/GCP/Azure, Kubernetes secrets, API keys, and database credentials. Mercor confirmed it was “one of thousands of companies” affected, demonstrating how a single compromised library can breach an entire ecosystem simultaneously.

Five Lawsuits in One Week—BIPA Creates Massive Liability

Five federal lawsuits were filed against Mercor in California and Texas courts between April 1-7, 2026, seeking unspecified monetary damages for violations of data privacy and consumer protection laws. The timing is notable: the first lawsuit dropped April 1, the same day the Seventh Circuit ruled that Illinois’ 2024 BIPA amendment limiting damages applies retroactively. Lawyers were ready.

The Illinois Biometric Information Privacy Act has generated over 1,500 lawsuits since 2019, with at least 100 class actions filed in 2025. BIPA explicitly covers “Artificial Intelligence applications that may be harvesting your biometric data without your knowledge” and allows individuals to sue without proving actual injury (2019 Rosenbach v. Six Flags ruling). Recent AI-related settlements include Incode Technologies paying $4 million for facial geometry scans. Although the 2024 amendment capped damages at one recovery per person rather than per scan, class action liability for 40,000 affected contractors remains substantial.

AI companies collecting biometric data for training, screening, or authentication face a brutal choice: comprehensive data collection enables better matching and performance, but creates catastrophic liability if breached. Mercor’s platform demonstrates the trade-off—AI-powered interviews that analyze voice, face, and responses provide superior candidate evaluation, but collecting immutable biometric identifiers from 40,000 people turned a supply chain attack into an existential legal threat.

Lapsus$ Re-Emerges Targeting AI Infrastructure

Lapsus$, the extortion-focused hacking group behind high-profile breaches of Microsoft, Nvidia, Samsung, and Okta, has re-emerged in 2026 collaborating with TeamPCP on supply chain attacks specifically targeting AI infrastructure. Security firm Wiz confirmed that “TeamPCP was explicitly collaborating with the notorious extortion group Lapsus$ to perpetuate the chaos,” with TeamPCP handling initial compromise and credential theft while Lapsus$ manages monetization and extortion.

Lapsus$ published samples of allegedly stolen data on its leak site, including Slack conversations, internal ticketing information, and two videos showing Mercor’s AI interview system in action. Additionally, the groups claim a 4-terabyte haul including source code, database records, and “the AI training methodologies of multiple frontier labs”—OpenAI, Anthropic, and Meta all use Mercor contractors for post-training tasks like data labeling and evaluation framework design. This isn’t just about stolen personal data; it’s competitive intelligence theft that could reveal how frontier AI labs train their models.

This represents an evolution from generic supply chain attacks to AI-focused attacks targeting libraries that manage AI API keys, training data access, and model credentials. LiteLLM was an ideal target: 95 million downloads per month, used by major AI frameworks like CrewAI and DSPy, and managing dozens of AI provider keys per deployment. Expect more attacks targeting AI infrastructure—not just for financial gain, but for the strategic advantage of stealing training methodologies from companies building the most valuable AI systems in the world.

Even Fast Response Can’t Stop Supply Chain Breaches

The LiteLLM attack window was extraordinarily narrow. Attackers compromised the CI/CD pipeline at 10:39 UTC and published malicious packages within 13 minutes. A researcher discovered the compromise at 11:48 UTC, and PyPI quarantined the packages by 13:38 UTC, roughly 3 hours after the initial compromise. By open-source security standards, this was a fast response. It didn’t matter.
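The timeline above can be turned into a rough scale estimate. The sketch below computes the intervals from the stated timestamps and then multiplies the window by the 3.4 million daily download figure. It assumes downloads are spread evenly over the day and that every download during the window fetched a malicious release. Neither assumption is likely to hold (pinned installs and mirrors pull other versions, and the packages were published up to 13 minutes after the pipeline compromise), so the result is an upper-bound illustration, not a count of victims.

```python
from datetime import datetime, timezone


def utc(hour: int, minute: int) -> datetime:
    """Build a UTC timestamp on March 24, 2026, the date given in the article."""
    return datetime(2026, 3, 24, hour, minute, tzinfo=timezone.utc)


def minutes_between(start: datetime, end: datetime) -> int:
    """Whole minutes elapsed between two timestamps."""
    return int((end - start).total_seconds() // 60)


COMPROMISED = utc(10, 39)   # CI/CD pipeline compromised
DISCOVERED = utc(11, 48)    # researcher notices the crash
QUARANTINED = utc(13, 38)   # PyPI quarantines the packages

DAILY_DOWNLOADS = 3_400_000  # figure quoted in the article


def estimated_downloads(window_minutes: int, daily: int = DAILY_DOWNLOADS) -> int:
    """Upper-bound estimate assuming a uniform download rate over 24 hours."""
    return round(daily * window_minutes / (24 * 60))


if __name__ == "__main__":
    to_discovery = minutes_between(COMPROMISED, DISCOVERED)
    exposure = minutes_between(COMPROMISED, QUARANTINED)
    print(f"Compromise to discovery: {to_discovery} min")
    print(f"Compromise to quarantine: {exposure} min")
    print(f"Downloads in window (upper bound): {estimated_downloads(exposure):,}")
```

Under these assumptions the window is 179 minutes and the estimate is on the order of 400,000 downloads, which shows why a three-hour response still left a very large exposure.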

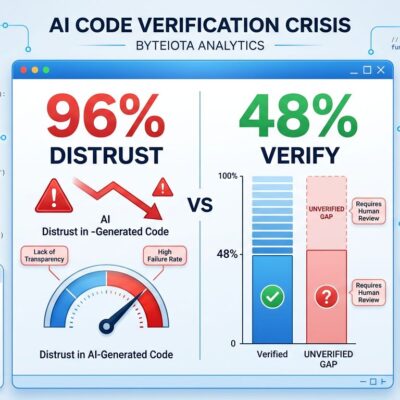

With 3.4 million daily downloads, even a few hours of exposure meant thousands of compromised systems. The malware used .pth file injection to persist across Python environment restarts, and Mercor’s data wasn’t fully exfiltrated until March 31, seven days later, the same day the breach was confirmed publicly. Current supply chain defenses such as package signing, automated rollback, and dependency pinning all have trade-offs. There’s no simple fix for the trust problem in open-source ecosystems, especially when critical dependencies see millions of downloads daily.
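To make the .pth mechanism concrete: Python's `site` module reads `.pth` files in site-packages at interpreter startup and executes any line that begins with `import` followed by a space or tab. The sketch below lists such lines so they can be reviewed. It is a generic audit helper written for illustration. The article does not describe the actual file names or payload used in this attack, and the function names here are hypothetical.

```python
import site
from pathlib import Path


def executable_pth_lines(pth_file: Path) -> list[str]:
    """Return the lines of a .pth file that the site module would execute."""
    try:
        text = pth_file.read_text(encoding="utf-8", errors="replace")
    except OSError:
        return []
    return [
        line.rstrip()
        for line in text.splitlines()
        # site executes lines starting with "import" plus a space or tab.
        if line.startswith(("import ", "import\t"))
    ]


def scan_site_dirs(directories=None) -> dict[str, list[str]]:
    """Map each .pth file containing executable lines to those lines."""
    if directories is None:
        directories = list(site.getsitepackages()) + [site.getusersitepackages()]
    findings: dict[str, list[str]] = {}
    for directory in directories:
        root = Path(directory)
        if not root.is_dir():
            continue
        for pth_file in sorted(root.glob("*.pth")):
            lines = executable_pth_lines(pth_file)
            if lines:
                findings[str(pth_file)] = lines
    return findings


if __name__ == "__main__":
    for path, lines in scan_site_dirs().items():
        print(path)
        for line in lines:
            print("   ", line[:120])
```

Some legitimate packages also ship `.pth` files with `import` lines, so a hit is a prompt for manual review rather than proof of compromise.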

AI companies are handling irreplaceable biometric data while depending on the same fragile supply chain as everyone else. The Mercor breach demonstrates the gap: companies building the most sophisticated technology in the world have not implemented security adequate for the sensitive data they’re collecting. When a $10 billion startup storing 40,000 people’s voice recordings gets compromised through a dependency that was backdoored for three hours, the problem isn’t individual negligence. It’s a systemic failure to match security investment to data sensitivity.

Key Takeaways

- Biometric data theft is permanent—voice and facial data can’t be changed like passwords, creating lifetime identity theft risk through deepfake fraud

- LiteLLM’s compromise exposed thousands of AI companies simultaneously, demonstrating how high-value dependencies with millions of daily downloads become single points of catastrophic failure

- BIPA lawsuits against AI platforms collecting biometric data will escalate—five lawsuits in one week signals class action litigation is just beginning

- Lapsus$ and TeamPCP are specifically targeting AI infrastructure to steal not just credentials but competitive intelligence (training methodologies from frontier labs)

- Even fast incident response (3 hours from compromise to quarantine) can’t prevent supply chain breaches when critical dependencies see millions of downloads daily—the trust model is broken

AI companies rushing to collect biometric data for training and evaluation are creating permanent security risks their infrastructure can’t support. The gap between data sensitivity and security posture can’t be closed with current supply chain practices.